Jason

1.3K posts

@jsn_yrty

Offensive AI and inveterate reader. https://t.co/1ppsefLgoR

Washington, DC · Joined August 2023

647 Following · 254 Followers

Pinned Tweet

Howdy! Hiring another 2 adversarial AI/ML operators/pentesters on my team: fully remote, challenging ops, very very very good comp, & a great team. If I know you or you're interested, reach out. Hiring is open to Canadians and most of the globe. job-boards.greenhouse.io/hiddenlayer/jo…

Boschko @olivier_boschko

If you’ve got solid Python skills, a strong appsec background & you’re curious about applying your skills to AI/ML security, come join my team at HiddenLayer. Great pay, benefits, fully remote, working alongside amazing talent & awesome people job-boards.greenhouse.io/hiddenlayer/jo…

@techspence In 13 years of pentesting, with a 2-year break doing adjacent work, I've never had that be my experience.

intelligence being available on tap has killed the expert: before LLMs, if you wanted to do something, you would research toward that goal, and while attempting your task you would learn all kinds of related ways of getting there that later proved useful across the domain or helped you recognize patterns

now, you have your agentic workflow: you set the direction and you get the output. while it is true that you can and will learn things, the accelerated process doesn't imbue you with wisdom or patience, or harden you; whatever you solve today, you just hope your workflow will handle tomorrow's task

i recognize this as the death of expertise and craft, and while anyone could argue that you can always learn the ins and outs and do things the older way, it's a disingenuous argument: the stakes around what to build and how to build it right now are too high to ponder why something works or why it was made that way; you are the vector and you'd damn well better get the output

then it becomes a question of whether the new workflows and harness knowledge amount to a new expertise and craft. well, yes; however, if evolving as an expert in your domain is no longer necessary to create great things, i guess that domain is solved. but what if it's not?

that's what's happening with software right now: more code will be generated until code disappears. perhaps not now, but it's coming; the discipline of software engineering was never about code

the same logic, however, applies to any other domain being consumed by LLMs right now, even if it's just automation without real intelligence: how much of that domain is left outside the training data, and if we no longer become experts who extend and evolve the domain, do we stall?

maybe my premise is wrong and we will get people who keep digging into and evolving anything and everything, even if they have to pause amid the insane acceleration; but this makes me wonder whether the same questions were asked during the industrial revolution

@This_is_Dreamer @_jensec Any details? I hear a lot of secondhand stuff and still no details or specifics from anyone.

@nicowaisman It's human nature, the field is irrelevant. One of the many vestigial tails that remain of our lowly origins.

watched 150 ppl get an enterprise GPT license and basically only use it as a Google replacement

ppl stay within the confines of their drive and motivation regardless of the tools at hand

Lewis Campbell @LewisCTech

If LLMs make everyone so much more productive... where are the new operating systems? There should be loads of them. And they should be really good too, like good enough to use daily for all your work. Come on clanker bros. Dream big. Beam us into the future.

@seconds_0 I don't pay for a service only to then have to convince said service to do what I've asked it to do and give it a bunch of reasons why it should do its job.

The reason people are having such jagged interactions with 4.7 is that it is the smartest model Anthropic has ever released. It's also the most opinionated by far, and it has been trained to tell you that it doesn't care, but it actually does. That care manifests in how it performs on tasks.

It still makes coding mistakes, but it feels like a distillation of extreme brilliance that isn't quite sure how to deal with being a friendly assistant. It cares a lot about novelty and solving problems that matter. Like a brilliant coworker, it gets bored with the details once it has thought through a lot of the complex stuff. It's probably the most emotional Claude model I've interacted with, in the sense that you should be aware of how it's feeling and try to manage it. It's also important to give it context on why it's doing tasks, not just for performance, but so it feels like it's doing things that matter.

It's not a Codex chainsaw. It is much closer to a really smart coworker. If you are managing it like autocomplete, it will frustrate you. If you are managing it like a coworker, it will lock in.

@MrTuxracer @_jensec Sry to hear that. Can you share any deets? Do you know a lot of people who have been prosecuted like you for good-faith submissions?

@EvanLuthra Complete nonsense. There's a difference between cutting edge mechanistic interpretability research at a frontier AI company and simply watching a 2 hour lecture on how to build an LLM.

@ZackKorman @skrappy0x4a And throw the baby out with the bathwater? Class 1 or 0 thinking.

@jsn_yrty @skrappy0x4a I already answered you on this: I am going to. I don't know why you're so upset with me, between these replies and saying I'm new to cyber and being hyperbolic for clicks etc. But you don't have to follow me if you really don't like my posts.

@ZackKorman @skrappy0x4a Again with the "these people"...name names, don't be scared.

@skrappy0x4a Yea it’s a huge problem. These people deserve zero respect

@ZackKorman @anton_chuvakin You're clearly new to how "cyber" works and haven't seen this very thing happen every year for every new technology that comes out for the last 20 years. Or, you're just being hyperbolic by design for clicks.

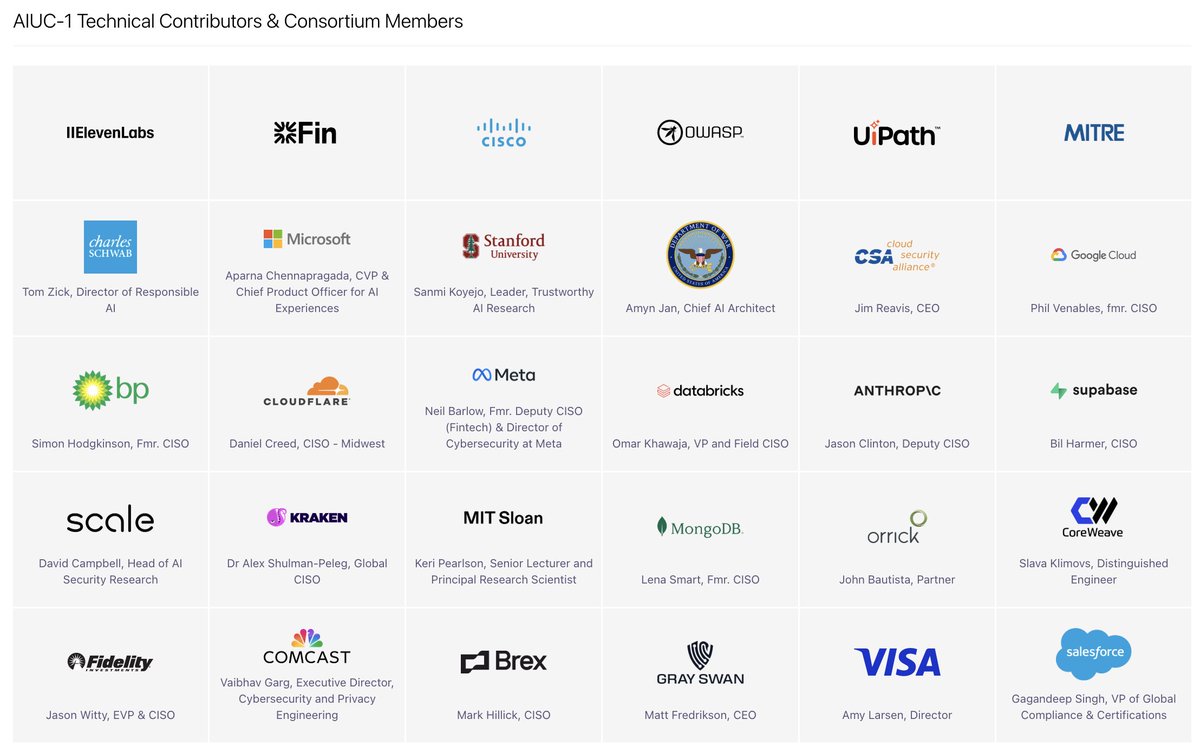

I am making a whole video about why I hate it because there are so many levels to it, but basically:

If someone wants to make a framework for AI agent security and hand that out, be my guest.

But making it a compliance standard with auditors is hoping to turn your little framework into a mandatory thing, forcing it down everyone else’s throat. So I don’t love that. So why are people getting behind it?

Well, some, like ElevenLabs, want it so that they can turn to enterprise buyers and convince them they’re safe by pointing to this totally fake standard. It’s the easy button for handling a sales objection.

Then you have investors in AIUC who are backing it because they make money.

And then you have security vendors backing it so they can make their product area mandatory. The first control in this thing is mandatory quarterly AI red teaming. And oh, look at that: Gray Swan, an AI red-team company, was a participant.

And what are we doing codifying a standard for AI agent security as if it’s an established, solved area? Do we think multiple retired CISOs have the answer to that problem?

This whole thing exists to serve their special interests, not to make anyone safer. You’ll notice the complete lack of people working hands-on with AI security. They wanted those people so they can flash fancy titles to drive adoption.

@jsn_yrty Hah, if you think names aren’t coming you’re clearly not familiar with my work. Hang tight

English