Kwanghee Choi

@juice500ml

PhD student, working on speech AI with David Harwath @utsaltlab (@UTAustin) and David R. Mortensen @dmort27 (@LTIatCMU).

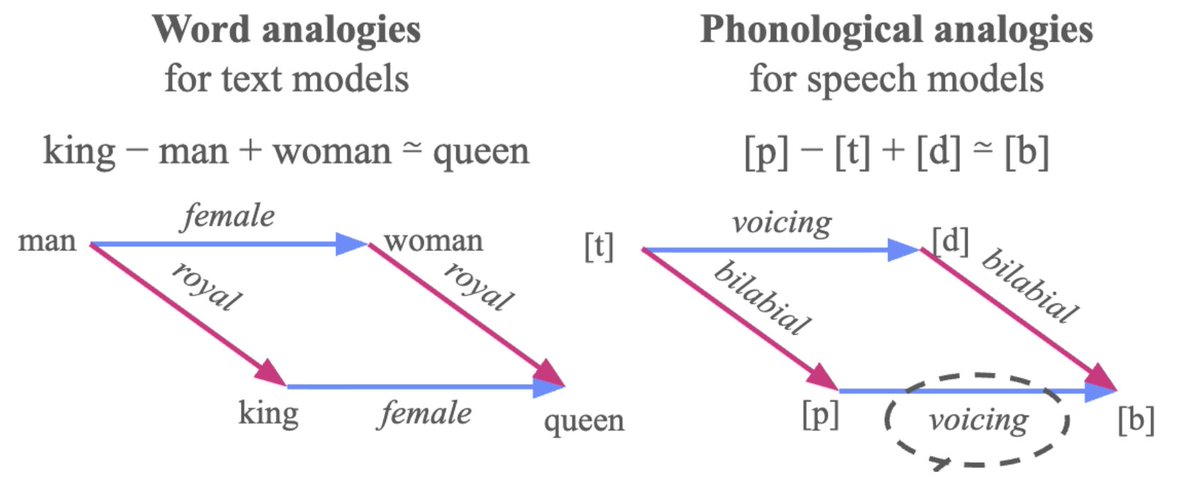

𝐒𝐞𝐥𝐟-𝐬𝐮𝐩𝐞𝐫𝐯𝐢𝐬𝐞𝐝 𝐒𝐩𝐞𝐞𝐜𝐡 𝐌𝐨𝐝𝐞𝐥𝐬 𝐚𝐫𝐞 𝐏𝐡𝐨𝐧𝐨𝐥𝐨𝐠𝐢𝐜𝐚𝐥 𝐕𝐞𝐜𝐭𝐨𝐫 𝐌𝐚𝐜𝐡𝐢𝐧𝐞𝐬! 🗣️ Excited to be giving an invited talk this Thursday (March 19th, 3pm Amsterdam time)! Huge thanks to @mariannedhk at Univ. of Amsterdam for the invite 🙏

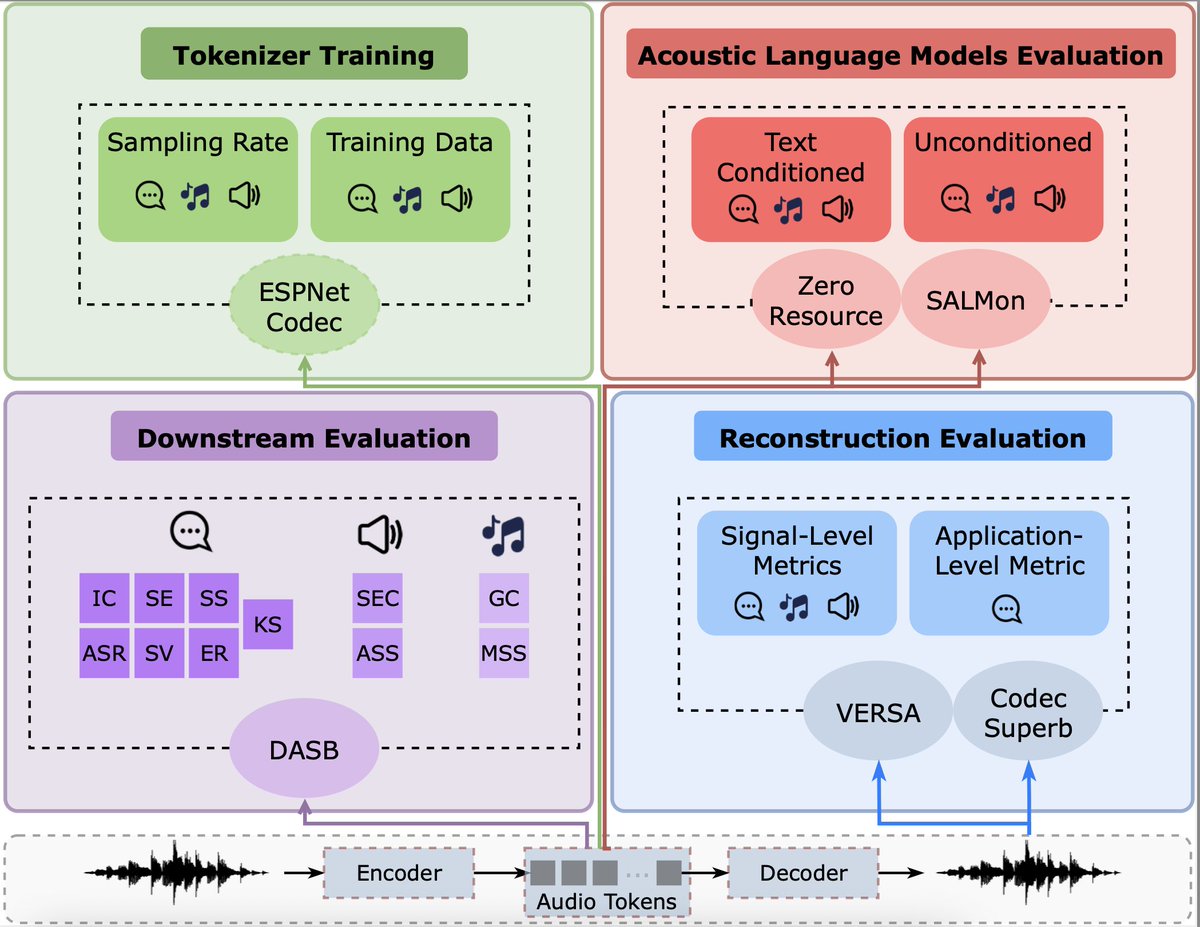

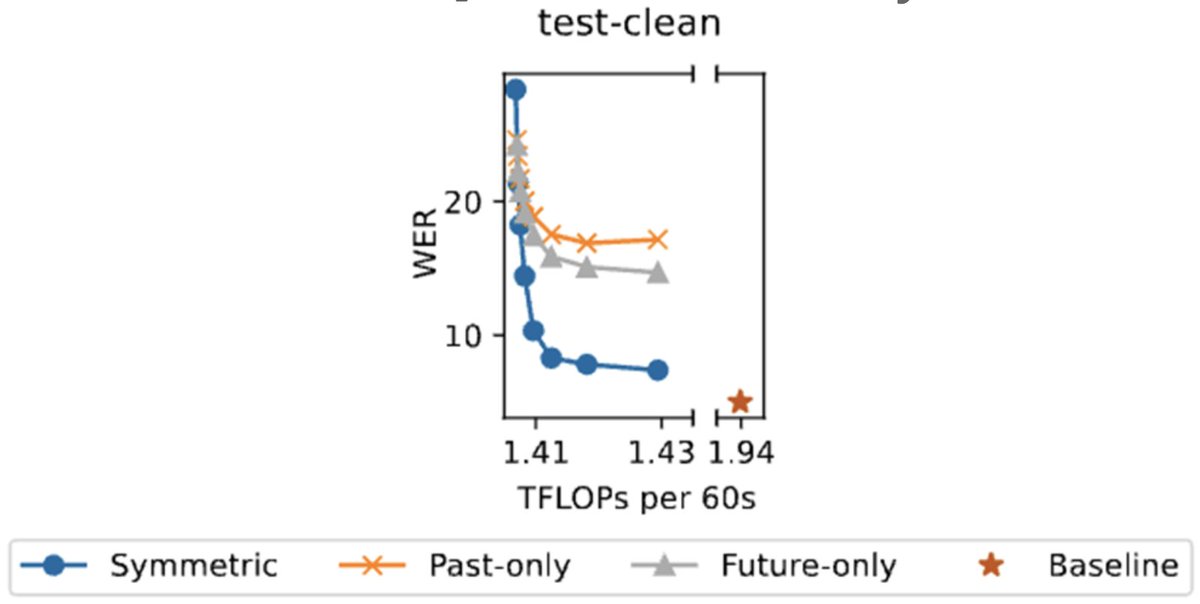

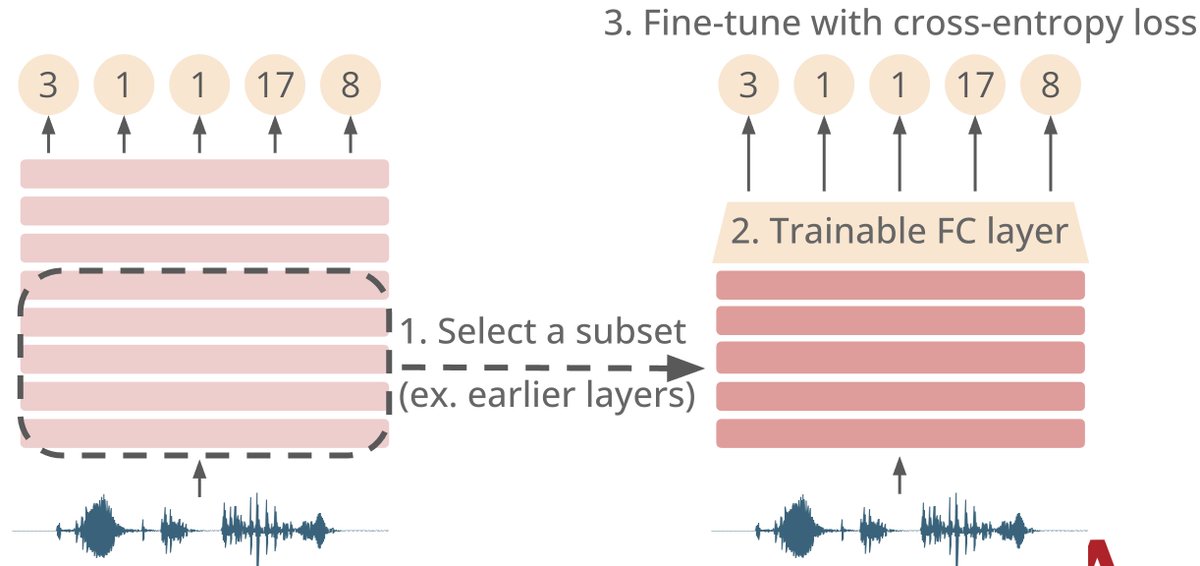

Our work on OWSM v4 received the Best Student Paper Award at #Interspeech2025! 🏆🎉 Huge congratulations to the team! 🚀👏 I’m especially happy to see our open science efforts for speech foundation models recognized by the community. 🙌 🔗 isca-archive.org/interspeech_20…
