Kamil Tyborowski retweetledi

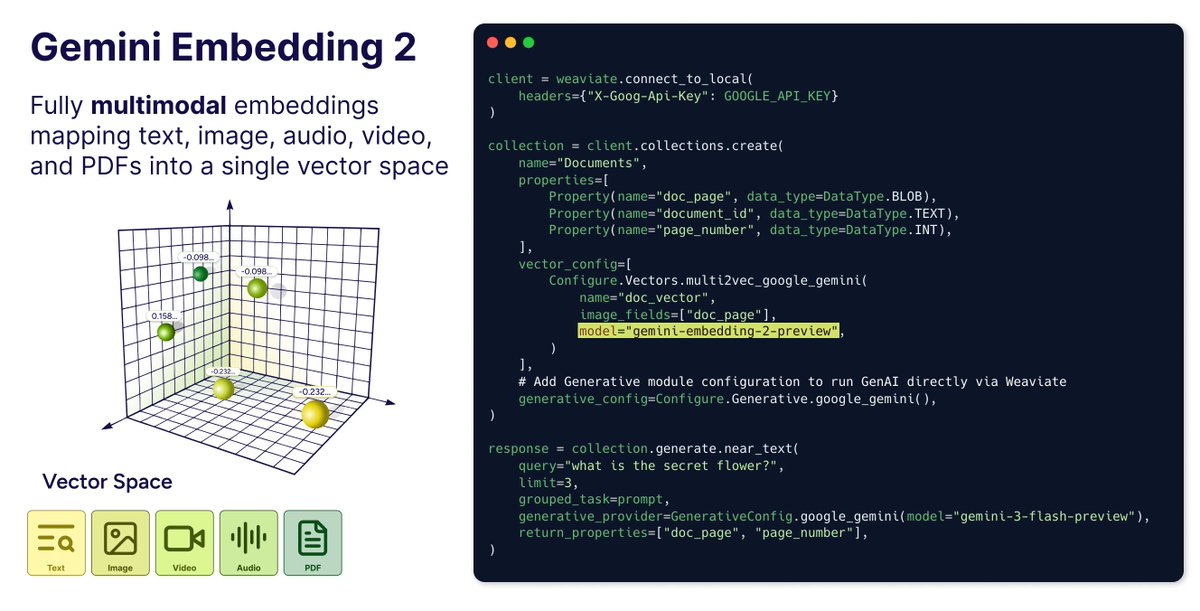

Just told Claude to import a PDF into Weaviate.

It figured out the schema, vectorised every page, batched the whole thing.

I typed one sentence

(works with CSV and JSON/JSONL too, but that feels less impressive to say)

This is the part where I'm supposed to feel useful

Try the Weaviate agent skills: github.com/weaviate/agent…

English