kstechly

12 posts

@kayastechly

Linguistics M.A. at ASU working in the Yochan lab. Starting a Comp Sci PhD at Yale advised by Tom McCoy and Tyler Brooke-Wilson in Fall 2025.

I will be at #ICLR2025 and look forward to chatting about LRMs/reasoning/planning etc. Easiest way to run into me would likely be at our poster 👇 on Thursday (10AM poster #219). DM/Whova if you would like to meet. (Fwiw, this is my first time attending ICLR.. 😳)

All this and more is discussed in our latest report on Planning in 🍓 Fields (👉arxiv.org/abs/2410.02162). This report also extends the evaluation of o1 models to scheduling benchmarks (since much of what goes under "planning" in LLM benchmarks--such as Travel Planning, Trip Planning, Meeting Planning etc.--are really scheduling problems reducible to canonical CSP instances, rather than the more general planning problems reducible to graph search). (It also includes our speculations on o1's internal operations as an added bonus appendix.. 😋 x.com/rao2z/status/1…) (Work led by @karthikv792 & @kayastechly -- with help from @21stwarlock) 2/

📢 "On the self-verification limitations of LLMs in Reasoning and Planning Tasks" arxiv.org/abs/2402.08115 (led by @karthikv792 and @kayastechly) 👇 Investigates LLM self-verification in three formal benchmarks--Game of 24, Graph Coloring and Planning--and shows that accuracy consistently degrades when LLMs self-verify, but improves when external verifiers are used in a backprompting architecture. This combines and extends our studies from last October--arxiv.org/abs/2310.12397 and arxiv.org/abs/2310.08118… at #FMDM workshop at #NeurIPS2023). 1/

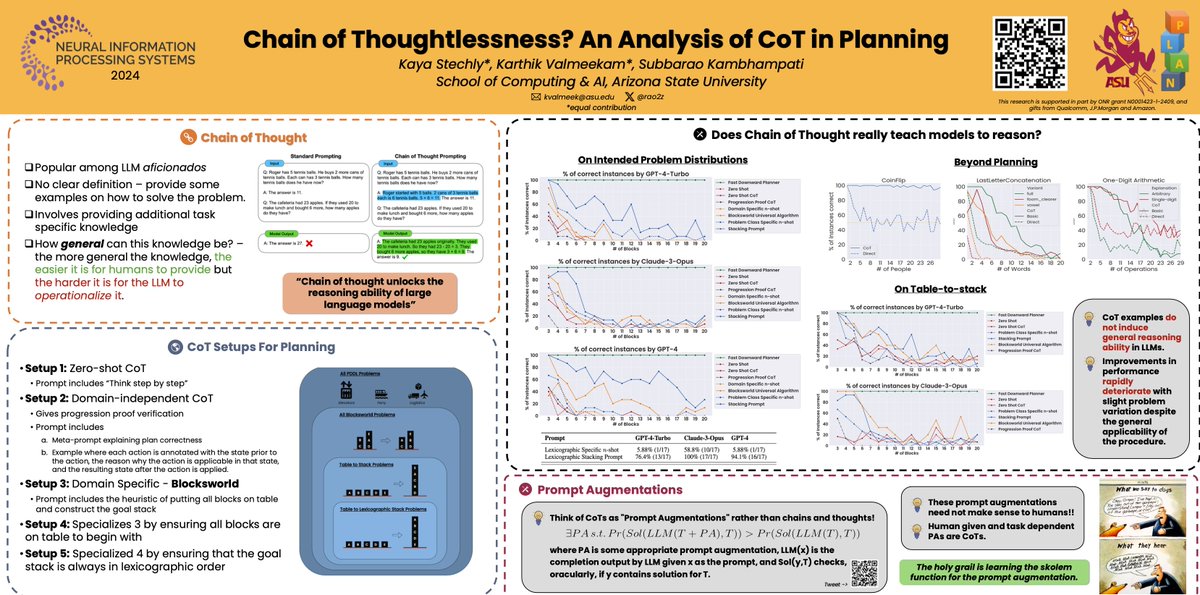

So @karthikv792, @kayastechly, @SaldytLucas and I will be at #NeurIPS2024 starting Tuesday--to present a bunch of things👇. Easiest to catch us at the 11AM poster session on the 11th at our "Chain of thoughtlessness" poster (East hall #3010). (I am around 10th-13th--and am happy to chat about anything #AI including "Planning: Will they? Won't they?"😋) 👉docs.google.com/document/d/1Mo…

📢Thanks to @karthikv792 and @kayastechly's tireless efforts, here is the paper analyzing the (in)effectiveness of Chain of Thought prompting. The good news is that everything I said here and in my talks about CoT delusions still holds. The better news is that Karthik and Kaya have done more extensive experiments both with GPT4 and Claude 3 Opus. 👉 arxiv.org/abs/2405.04776 tldr; LLMs may well be smarter than that dog in the Far Side cartoon (although I am sure @ylecun will push back vociferously😋), but there is little reason to believe that we can advise them the way we advise our friends--and expect them to operationalize that advice..

So @karthikv792 and @kayastechly stayed up until the wee hours and submitted this 18-page note to arXiv; will summarize once it is made public..

Can LLMs really self-critique (and iteratively improve) their solutions, as claimed in the literature?🤔 Two new papers from our group investigate (and call into question) these claims in reasoning (arxiv.org/abs/2310.12397) and planning (arxiv.org/abs/2310.08118) tasks.🧵 1/
