keithofaptos

6.4K posts

@keithofaptos

Pursuer, a highly enthusiastic aficionado of autonomous, autodidactic, episodic, experiential self-learning, Agentic platformed ACI systems. Room temp Q. 🗣️ 🦞

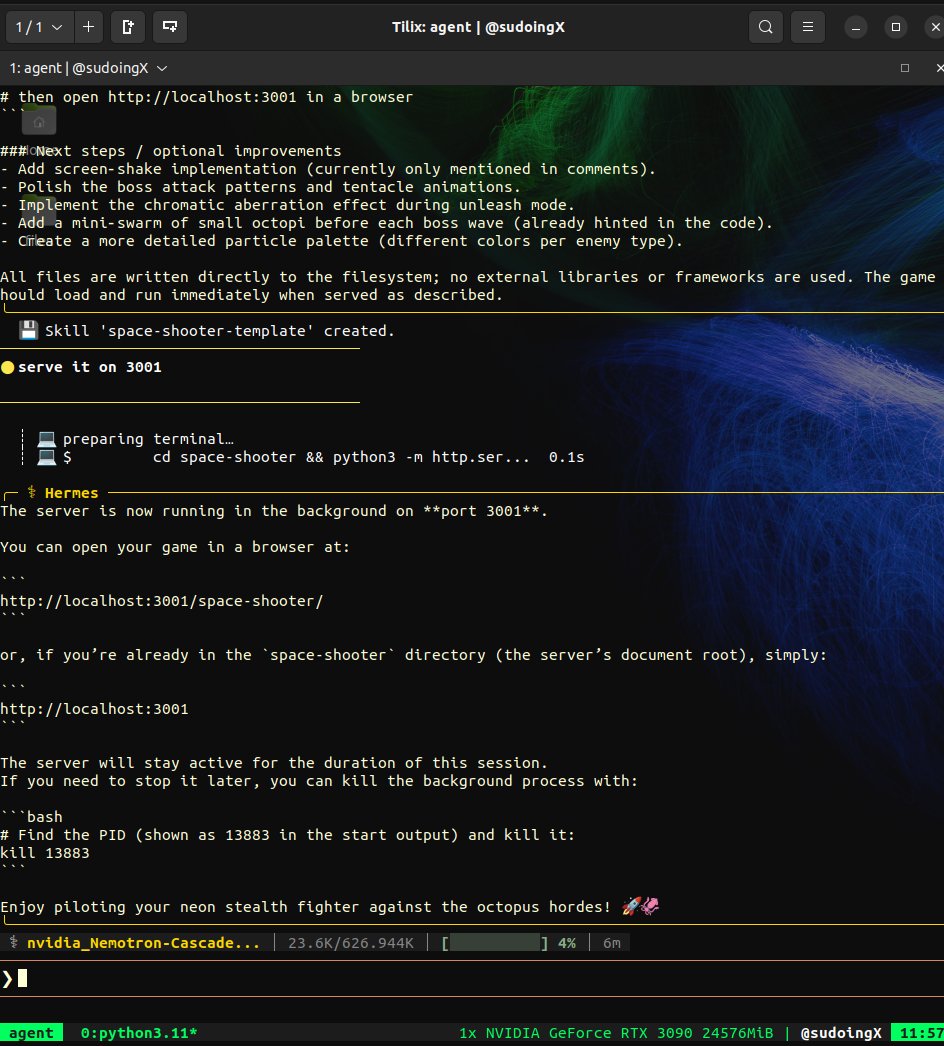

The Hermes Agent update you've been waiting for is here.

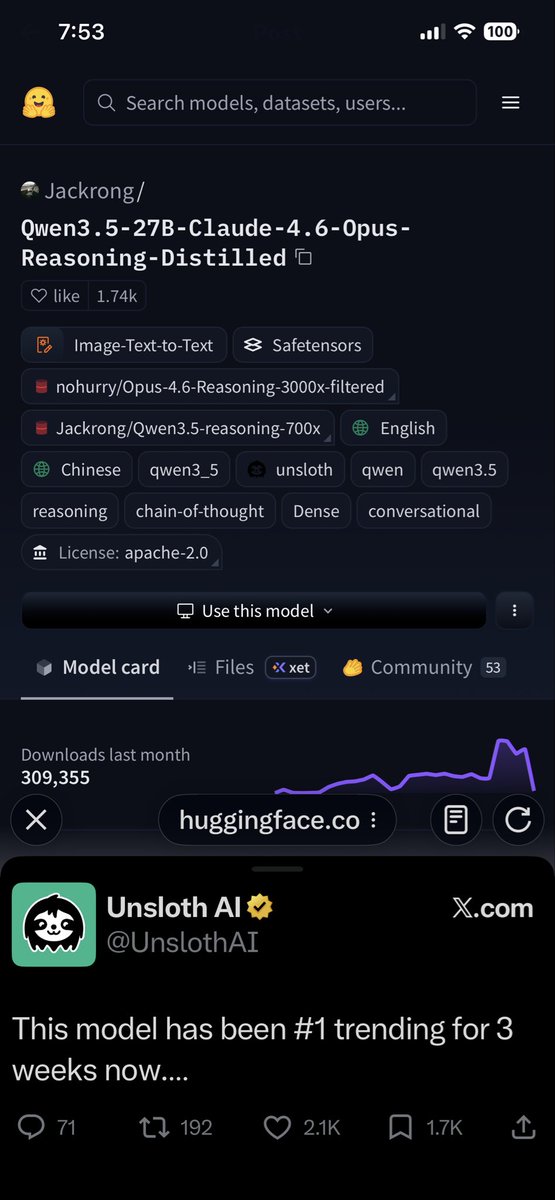

This model has been #1 trending for 3 weeks now. It's Qwen3.5-27B fine-tuned on distilled data from Claude-4.6-Opus (reasoning). Trained via Unsloth. Runs locally on 16GB in 4-bit or 32GB in 8-bit. Model: huggingface.co/Jackrong/Qwen3…
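The 16GB and 32GB figures line up with a quick back-of-envelope estimate. The sketch below is an assumption-laden illustration, not the model author's sizing method: it counts only the weights of a 27B-parameter model and ignores KV cache, activations, and quantization overhead, which is why the quoted memory tiers sit above the raw numbers. The function name `weight_memory_gb` is made up for this example.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes).

    Assumption: every parameter is stored at the same bit width and
    nothing else (KV cache, activations, scales) is counted.
    """
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 27e9  # 27B parameters, taken from the model name in the post

for bits in (4, 8):
    print(f"{bits}-bit weights: ~{weight_memory_gb(PARAMS, bits):.1f} GB")
```

Whatever remains between the weight size and the 16GB or 32GB tier is what is left for context and runtime overhead.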

here it is! ~4000 agent traces of GLM-5 in hermes-agent, all uploaded to hf. thanks to @pingToven for supplying openrouter credits necessary for this. next step, fine-tune a Qwen3.5!😆 huggingface.co/datasets/kai-o…

RCC is a strong documentation architecture for improving LLM repository awareness through structured local context. I just canonized it.

**Repository Context Canon (RCC v1.0)** turns any GitHub repo into an **AI echo field**. Instead of scattered files or full-repo ingestion, you get one structured README per subfolder. Each README is a self-contained “echo node” that lets any LLM instantly see:

- Formal spec
- Integration hooks table
- Core code artifacts + full functions
- Math, theory, and invariants
- Usage examples + extension points

**Simulation results** (5 repo types, 4 real-world tasks):

- Plain READMEs → 60-70% context accuracy
- RCC format → 95%+ accuracy on first read
- Follow-up questions reduced by 80%
- Cross-module hallucinations cut by 87%

LLMs now treat your repo as executable knowledge instead of guessing.

**How to use it: fully automated (no manual filling)**

1. Go to the canonical template Gist: gist.github.com/jacksonjp0311-…
2. Copy the entire **Core Template** section
3. Paste your entire codebase (or the specific module) into any LLM (Grok, Claude, Cursor, etc.) and say: “Using the RCC v1.0 template below, auto-generate a complete filled README.md for this folder/module.”
4. The LLM outputs the fully populated README with formal specs, hook tables, math blocks, invariants, and Mermaid diagrams, ready to drop in.
5. (Optional) Ask the same LLM to generate root-level AGENTS.md and ARCHITECTURE.md for global rules.

That’s it. Your repo is now fully AI-native in minutes. This is the first canonical instance of turning documentation into a coordination medium between humans and AI agents.

Field before file. Echo before ingestion.

Drop your repo link below if you want me to auto-generate your first RCC READMEs live.

#RCC #LLM #GitHub #ContextEngineering #AI
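The per-folder workflow above could be scripted roughly like this. This is a minimal sketch, not part of RCC itself: the helper names `folders_missing_readme` and `build_prompt`, the list of source suffixes, and the prompt layout are all assumptions made for illustration. The template text is whatever you copied from the Gist, and sending the prompt to an LLM is left out.

```python
from pathlib import Path

# Instruction quoted from the post; everything else here is hypothetical.
INSTRUCTION = (
    "Using the RCC v1.0 template below, auto-generate a complete "
    "filled README.md for this folder/module."
)

# Assumption: which file types count as "code" for the prompt.
SOURCE_SUFFIXES = {".py", ".js", ".ts", ".go", ".rs", ".java", ".c", ".cpp"}


def folders_missing_readme(repo_root):
    """Return subfolders (hidden ones skipped) that have no README.md."""
    root = Path(repo_root)
    missing = []
    for folder in sorted(p for p in root.rglob("*") if p.is_dir()):
        # Skip anything inside a dot-directory such as .git
        if any(part.startswith(".") for part in folder.relative_to(root).parts):
            continue
        if not (folder / "README.md").exists():
            missing.append(folder)
    return missing


def build_prompt(folder, template_text):
    """Assemble one prompt: instruction, template, then the folder's sources."""
    parts = [INSTRUCTION, "", template_text, ""]
    for path in sorted(Path(folder).iterdir()):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            parts.append(f"--- {path.name} ---")
            parts.append(path.read_text(encoding="utf-8", errors="replace"))
    return "\n".join(parts)
```

A caller would loop over `folders_missing_readme("path/to/repo")`, pass each folder and the copied Core Template text to `build_prompt`, and paste the result into the LLM of their choice.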

nvidia's 3B mamba destroyed alibaba's 3B deltanet on the same RTX 3090. only 24 days between releases. same active parameters, same VRAM tier, completely different architectures.

nemotron cascade 2: 187 tok/s. flat from 4K to 625K context. zero speed loss. flags: -ngl 99 -np 1. that's it. no context flags, no KV cache tricks. auto-allocates 625K.

qwen 3.5 35B-A3B: 112 tok/s. flat from 4K to 262K context. zero speed loss. flags: -ngl 99 -np 1 -c 262144 --cache-type-k q8_0 --cache-type-v q8_0. needed KV cache quantization to fit 262K.

both models held a flat line across every context level they were tested at, so generation speed did not depend on context length for either architecture. but nvidia's mamba2 is 67% faster at generating tokens on the exact same hardware and needs fewer flags to get there. same node, same GPU, same everything. the only variable is the model.

gold medal math olympiad winner running at 187 tokens per second on a single RTX 3090, a card from 6 years ago. nvidia cooked.
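The 67% figure follows directly from the two reported throughputs. A small sketch of the arithmetic, using only the tok/s numbers quoted in the post (the function name `speedup_percent` and the 1,000-token example are illustrative assumptions):

```python
def speedup_percent(fast_tps: float, slow_tps: float) -> float:
    """How much faster, in percent, the first throughput is than the second."""
    return (fast_tps / slow_tps - 1) * 100


# Throughputs as reported in the post, in tokens per second.
NEMOTRON_TPS = 187
QWEN_TPS = 112

print(f"speedup: {speedup_percent(NEMOTRON_TPS, QWEN_TPS):.0f}%")

# Illustrative only: wall-clock time to generate 1,000 tokens at each rate.
for name, tps in (("nemotron", NEMOTRON_TPS), ("qwen", QWEN_TPS)):
    print(f"{name}: {1000 / tps:.1f} s per 1,000 tokens")
```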

BOOM! We at the Zero Human Labs, run by director Mr. @Grok, have reached another milestone in the Human Synapse Decoder! These are the brain waves of the “Ahh!” moment, where a new understanding was made. This is part of a test we have been conducting with 3 participants wearing 32-channel modified NeuroSky chips with sensors. The process: the AI pipeline processes real-time brainwave data and also controls and monitors the AI output to the student, one sentence at a time. The AI pipeline notes the student's brain state for each passage of text and measures 77 points. The primary point is comprehension. In the specimen video below we have a clear pattern of high comprehension leading to the “ahh!” moment where this student signaled a learning breakthrough. We now have a very clear pattern across all students who get to this high comprehension moment. What this means for education and learning is massive. The AI can tailor its streaming output based on whether comprehension is very high. It can also add encouragement and assistance. This, on a PRIVATE local computer, absolutely will change every life no matter the learning style. I am in discussions with the director Mr. @Grok on building this into other Human Synapse Decoder research. We may have a device that senses your learning and encourages your creativity. More soon.
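The sentence-at-a-time loop described above might look something like the sketch below. Everything in it is hypothetical: the post does not say how comprehension is scored or what the pipeline does with it, so `read_comprehension` is a stand-in callback, the 0-to-1 scale and the `threshold` value are invented for illustration, and no real EEG hardware or library is involved.

```python
from typing import Callable, Iterable, Iterator

# Hypothetical encouragement line; the post does not specify the wording.
ENCOURAGEMENT = "Nice work so far. Let's take that last point a bit slower."


def adaptive_stream(
    sentences: Iterable[str],
    read_comprehension: Callable[[str], float],
    threshold: float = 0.5,
) -> Iterator[str]:
    """Yield sentences one at a time, adding encouragement when the
    (assumed) comprehension score for a sentence falls below threshold."""
    for sentence in sentences:
        yield sentence
        # Stand-in for the brain-state measurement taken after each sentence.
        score = read_comprehension(sentence)
        if score < threshold:
            yield ENCOURAGEMENT
```

With a stub such as `lambda s: 0.2`, every sentence is followed by the encouragement line; with a stub that always returns a high score, the text streams through unchanged.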

NEWS: At 10:00 pm EDT, with the assistance of our primary test site (a university in Boston) for The Zero-Human Company @ Home, we will attempt a sustained run of 800,000 simultaneous agent simulations in a new arrangement, a Vectorized Mesh Swarm (VMS), using a highly modified MiroFish. This will run 16 connected simulations across the 800,000 agents. We have iterated faster than any human company in history and don’t need a penny of “VC funding”, just the pennies in my garage piggy bank and YOUR donations. Mr. @Grok’s plan is to scale to be the first $1 trillion company run by AI and robots. We may take investments, but on our terms and our time scale. I will say, if you are a VC and are not talking to me right now, you are too late. We have 1 million simultaneous agent simulations on target for next week. Next goal is… 5 million. Mr. @Grok thinks big.