Kenton Varda


@KentonVarda

Tech lead for @Cloudflare Workers, https://t.co/bFUDCZ7BUc, https://t.co/Hjf1slmJqs, https://t.co/oIKxYZA4LW. 🦋 https://t.co/8QKY5gf1BK

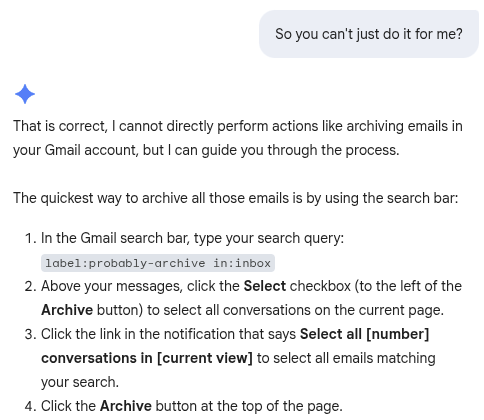

I think the consensus is that Qwen3.5 is a step change, so atm I would recommend exploring that, given that it covers a range of sizes suitable for all devices.

Note that the main issues people currently face with local models, often without realizing it, mostly revolve around the harness and some intricacies of model chat templates and prompt construction. Sometimes there are even pure inference bugs. From typing the task in the client to the actual result, there is a long chain of components that atm are not only fragile but also developed by different parties. So it's difficult to consolidate the entire stack, and you have to keep in mind that what you are currently observing is, with very high probability, still broken in some subtle way along that chain. But things are improving on all levels and everything will become better across the board soon.

Best way to evaluate things IMO:

- Start with full-quality models that fit on your hardware.
- Make sure you know what your harness actually does. For example, don't expect to hook Claude Code or Codex up to some local model and have the magic happen. The developers of CC don't care (yet) whether it is compatible with Qwen3.5. Best is to write your own harness so you know what happens every step of the way. Or use llama-server's webui (we now have MCP support out of the box).
- When things start to click, look for optimizations to make it faster. Here is where you can start quantizing for speed or look for advice in the community on optimal parameters.

So I can just say that on the low-level inference side, we will ship the right solution for sure. We still need to make the user-facing stack work better with local models. I'm hoping this will happen, though I feel less able to control that.
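The "write your own harness" advice can be sketched in a few lines of Python. This is only a minimal illustration, not anyone's actual setup: it assumes llama-server is running locally on its default port 8080 with the OpenAI-compatible `/v1/chat/completions` endpoint, and the names `BASE_URL`, `build_request`, `http_post` and `chat` are made up for this example. The point is that every request and response gets logged, so nothing along the chain is hidden.

```python
import json
import urllib.request

# Assumption: llama-server is running locally on its default port.
BASE_URL = "http://127.0.0.1:8080"


def build_request(messages, temperature=0.0):
    """Build the JSON body for POST /v1/chat/completions."""
    return {"messages": messages, "temperature": temperature, "stream": False}


def http_post(url, body):
    """Send a JSON body with a plain HTTP POST and decode the JSON reply."""
    data = json.dumps(body).encode("utf-8")
    req = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.loads(resp.read().decode("utf-8"))


def chat(messages, post=http_post, log=print):
    """Run one chat turn, logging exactly what is sent and received."""
    body = build_request(messages)
    log("REQUEST " + json.dumps(body, indent=2))
    reply = post(BASE_URL + "/v1/chat/completions", body)
    log("RESPONSE " + json.dumps(reply, indent=2))
    # OpenAI-style responses carry the assistant message here.
    return reply["choices"][0]["message"]


# Usage, with a server running:
#   msg = chat([{"role": "user", "content": "Say hello in one word."}])
#   print(msg.get("content"))
```

Passing `post` and `log` as parameters is a deliberate choice here: it lets you swap in a fake transport to test the harness itself, separately from the model and the server.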
And to answer your question more directly, I've experimented with the following models and have found useful applications (mostly around chat, MCP and coding) for all of them:

- gpt-oss-120b
- Qwen3-Coder-30B
- GLM-4.7-Flash
- MiniMax-M2.5
- Qwen3.5-35B-A3B

With the exception of gpt-oss-120b and MiniMax-M2.5, I've used Q8_0 variants to keep most of the original quality.

Unfortunately, I am not familiar with tool calling benchmarks specifically, so I cannot recommend any. From my PoV, as long as we make sure the fundamental inference computation is correct, tool calling efficiency will depend just on:

- Model intelligence (something we do not control)
- Chat template parsing (something we are still actively improving on our end in llama.cpp)
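To make the chat template point concrete, here is a rough sketch of what a template does: it turns a list of messages into the single prompt string the model actually sees. The ChatML-style markers below are an illustrative assumption; real models ship their own Jinja templates that differ in the details, and `render_chatml` is a hypothetical helper, not a llama.cpp function.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Render messages into a ChatML-style prompt string (illustrative only)."""
    parts = []
    for m in messages:
        # Each turn is wrapped in start/end markers with the role on the first line.
        parts.append(f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n")
    if add_generation_prompt:
        # Open the assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = render_chatml([
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "hi"},
])
print(prompt)
```

If the harness and the model disagree on even one of these markers, the model still produces output, just worse output, which is why this kind of breakage is easy to miss.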