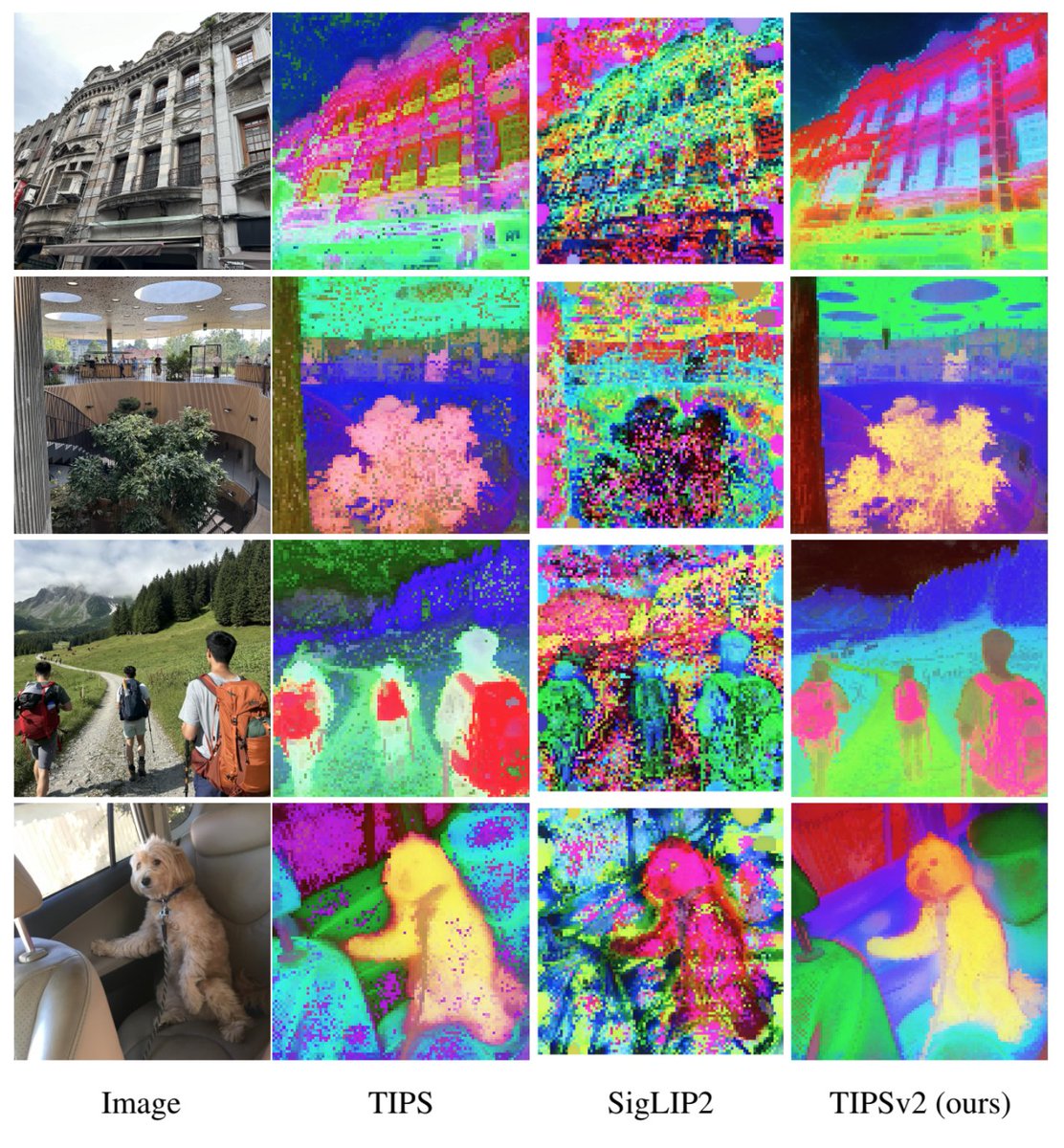

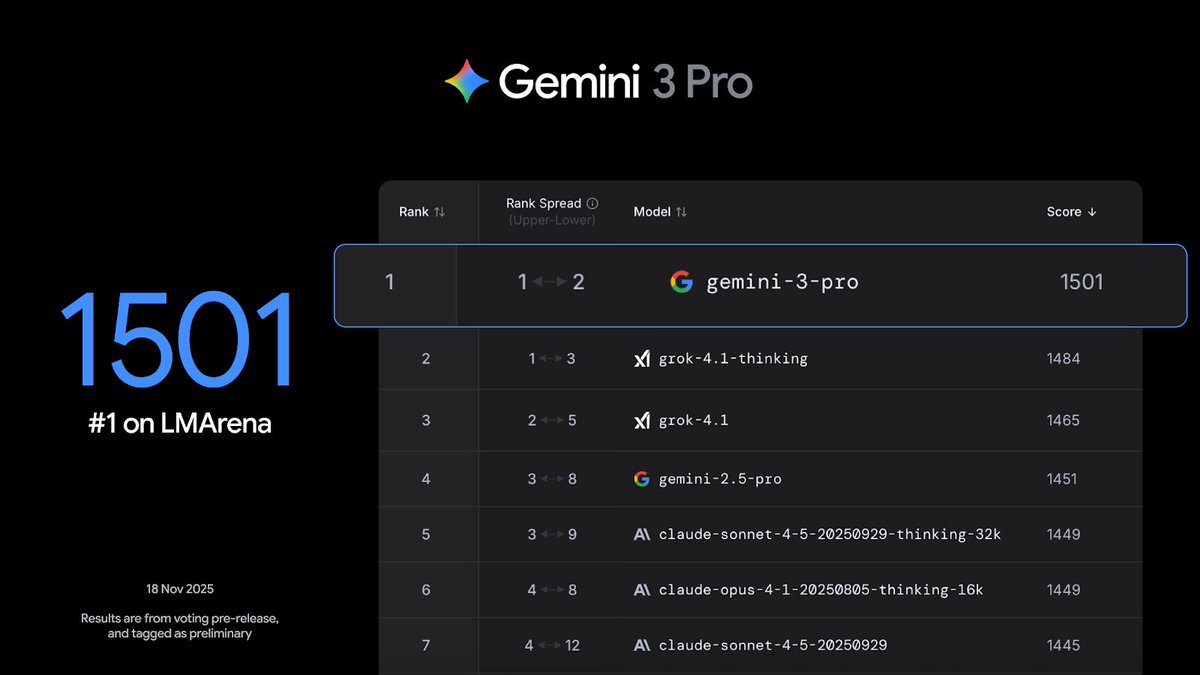

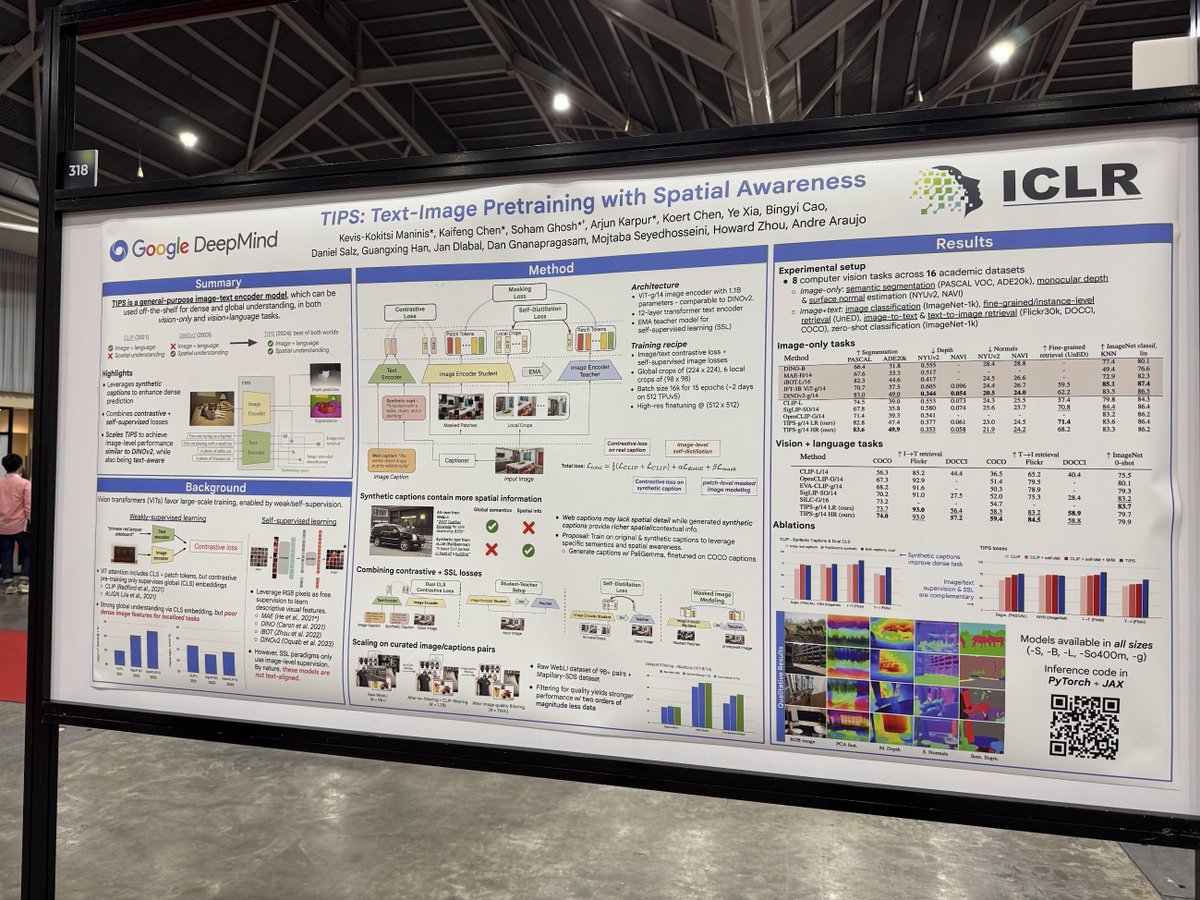

Excited to release a super capable family of image-text models from our TIPS #ICLR2025 paper! github.com/google-deepmin… We have models ranging from ViT-S to ViT-g, with spatial awareness, suitable for many multimodal AI applications. Can’t wait to see what the community will build with them!