Learn Kubernetes Today!

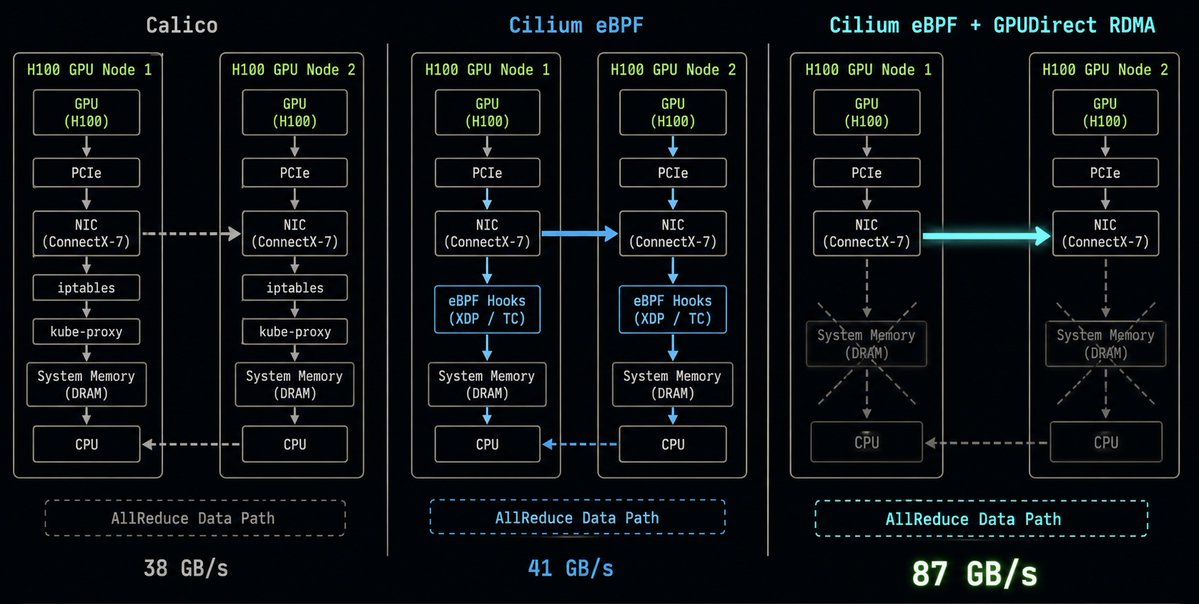

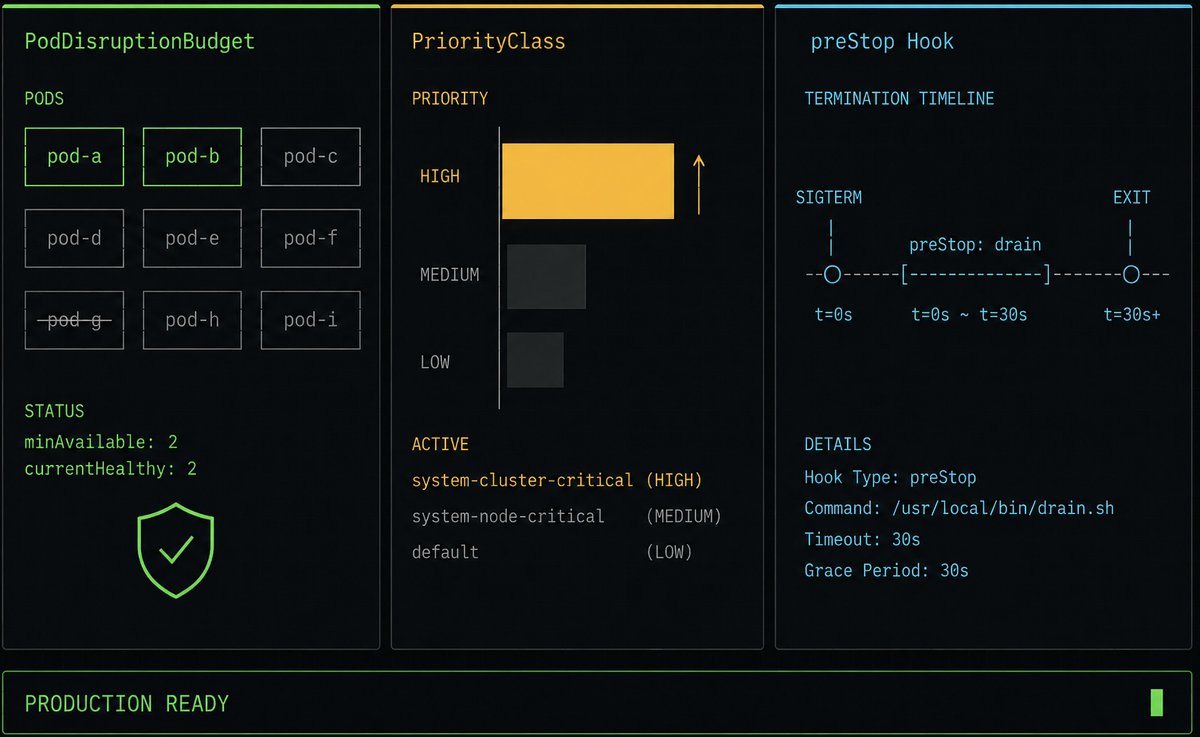

In this course, you learn the core concepts, with demos of CNI, kube-proxy & CoreDNS.

It's project-based learning: you deploy a multi-microservice app with a database.

Go learn today & do not forget to subscribe to Kubesimplify.

youtu.be/EV47Oxwet6Y?si…