Xu Jia

1.1K posts

@kul_stephen

Expert in computer vision, Full Professor at Dalian University of Technology, previously at Huawei Noah's Ark Lab, SenseTime, KU Leuven, Google Research

Belgium · Joined December 2013

1.2K Following · 212 Followers

Xu Jia reposted

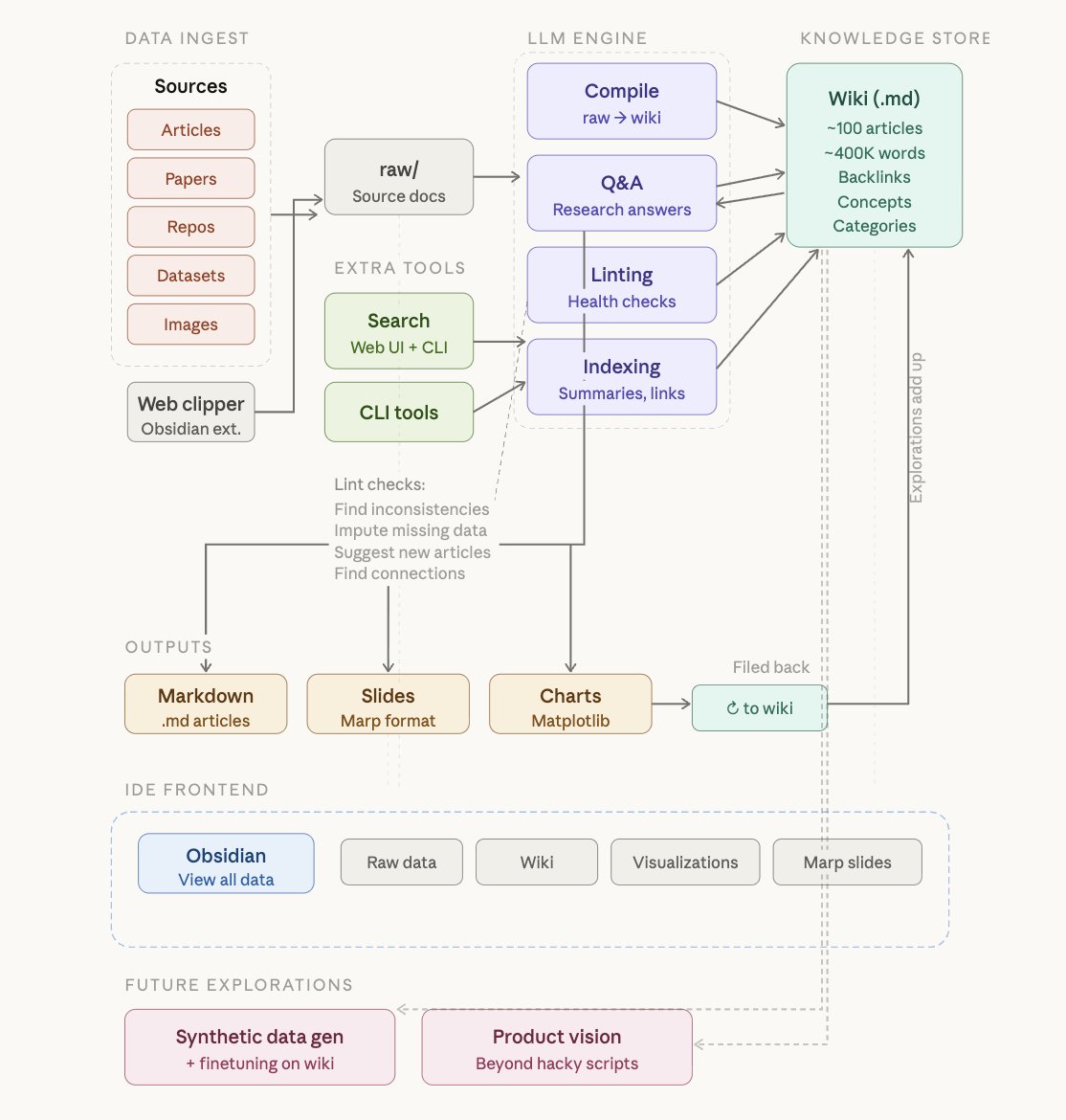

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
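
A minimal sketch of the bookkeeping side of this ingest step, assuming a hypothetical `wiki/` directory of `.md` files. The LLM "compile" call is left out entirely; `first_heading`, `build_index`, and the `index.md` file name are illustrative assumptions, not part of the workflow described above.

```python
from pathlib import Path


def first_heading(md_path: Path) -> str:
    """Return the first non-empty Markdown heading in a file, or the file stem if none."""
    for line in md_path.read_text(encoding="utf-8").splitlines():
        if line.startswith("#"):
            title = line.lstrip("#").strip()
            if title:
                return title
    return md_path.stem


def build_index(wiki_dir: Path) -> str:
    """Write wiki_dir/index.md listing every article with a relative link."""
    lines = ["# Index", ""]
    for md in sorted(wiki_dir.rglob("*.md")):
        if md.name == "index.md":
            continue  # do not list the index inside itself
        rel = md.relative_to(wiki_dir).as_posix()
        lines.append(f"- [{first_heading(md)}]({rel})")
    text = "\n".join(lines) + "\n"
    (wiki_dir / "index.md").write_text(text, encoding="utf-8")
    return text
```

Regenerating the index from the files on disk, rather than editing it by hand, keeps it consistent with whatever the LLM last wrote.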

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
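
One simple health check needs no LLM at all. A minimal sketch, assuming the wiki uses Obsidian-style `[[wikilinks]]` that resolve by file name; `find_broken_links` is a hypothetical helper, and the consistency and imputation checks mentioned above would still need a model.

```python
import re
from pathlib import Path

# Matches [[Target]], [[Target|alias]] and [[Target#section]], capturing Target only.
# The negative lookbehind skips embeds such as ![[figure.png]].
WIKILINK = re.compile(r"(?<!!)\[\[([^\]|#]+)")


def find_broken_links(wiki_dir: Path) -> dict[str, list[str]]:
    """Map each article (relative path) to wikilink targets with no matching .md file."""
    known = {p.stem for p in wiki_dir.rglob("*.md")}
    broken: dict[str, list[str]] = {}
    for md in sorted(wiki_dir.rglob("*.md")):
        text = md.read_text(encoding="utf-8")
        missing = sorted({t.strip() for t in WIKILINK.findall(text)} - known)
        if missing:
            broken[md.relative_to(wiki_dir).as_posix()] = missing
    return broken
```

Each missing target is also a candidate for a new article, which ties this check to the "new article candidates" idea.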

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
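
For illustration, a naive search over a directory of `.md` files could look like the following sketch. This is not the author's tool: the scoring (term frequency weighted by a smoothed inverse document frequency) and the names `tokenize` and `search` are assumptions.

```python
import math
import re
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(wiki_dir: Path, query: str, top_k: int = 5) -> list[tuple[str, float]]:
    """Rank .md files under wiki_dir by a simple TF-IDF score against the query."""
    docs = {
        p.relative_to(wiki_dir).as_posix(): Counter(tokenize(p.read_text(encoding="utf-8")))
        for p in wiki_dir.rglob("*.md")
    }
    n_docs = len(docs)
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        df = sum(1 for counts in docs.values() if term in counts)
        if df == 0:
            continue  # term appears nowhere in the wiki
        idf = math.log((1 + n_docs) / (1 + df)) + 1.0  # smoothed, always positive
        for name, counts in docs.items():
            if term in counts:
                scores[name] = scores.get(name, 0.0) + counts[term] * idf
    # Highest score first; ties broken by file name for stable output.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:top_k]
```

Re-reading every file on each query is fine at the ~100-article scale mentioned earlier; a larger wiki would want a persisted index.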

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

Xu Jia reposted

Based on everything explored in the source code, here's the full technical recipe behind Claude Code's memory architecture:

[shared by claude code]

Claude Code’s memory system is actually insanely well-designed. It isn't “store everything”; it's constrained, structured, and self-healing memory.

The architecture is doing a few very non-obvious things:

> Memory = index, not storage

+ MEMORY.md is always loaded, but it’s just pointers (~150 chars/line)

+ actual knowledge lives outside, fetched only when needed

> 3-layer design (bandwidth aware)

+ index (always)

+ topic files (on-demand)

+ transcripts (never read, only grep’d)

> Strict write discipline

+ write to file → then update index

+ never dump content into the index

+ prevents entropy / context pollution

> Background “memory rewriting” (autoDream)

+ merges, dedupes, removes contradictions

+ converts vague → absolute

+ aggressively prunes

+ memory is continuously edited, not appended

> Staleness is first-class

+ if memory ≠ reality → memory is wrong

+ code-derived facts are never stored

+ index is forcibly truncated

> Isolation matters

+ consolidation runs in a forked subagent

+ limited tools → prevents corruption of main context

> Retrieval is skeptical, not blind

+ memory is a hint, not truth

+ model must verify before using

> What they don’t store is the real insight

+ no debugging logs, no code structure, no PR history

+ if it’s derivable, don’t persist it
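
The "write to file, then update index" discipline can be sketched in a few lines. This illustrates the pattern as the post describes it, not Claude Code's actual implementation: the `remember` function, the `topics/` layout, and the pointer format are hypothetical; only the `MEMORY.md` name and the ~150-character line budget come from the post.

```python
from pathlib import Path

MAX_POINTER_CHARS = 150  # per-line budget mentioned in the post


def remember(memory_dir: Path, topic: str, content: str, hint: str) -> str:
    """Append content to a topic file first, then make sure the index points at it."""
    topic_file = memory_dir / "topics" / f"{topic}.md"
    topic_file.parent.mkdir(parents=True, exist_ok=True)
    with topic_file.open("a", encoding="utf-8") as f:
        f.write(content.rstrip() + "\n")

    # The index holds one short pointer per topic, never the content itself.
    prefix = f"- topics/{topic}.md:"
    pointer = f"{prefix} {hint}"[:MAX_POINTER_CHARS]
    index = memory_dir / "MEMORY.md"
    lines = index.read_text(encoding="utf-8").splitlines() if index.exists() else []
    lines = [ln for ln in lines if not ln.startswith(prefix)] + [pointer]
    index.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return pointer
```

Replacing the existing pointer instead of appending a second one keeps the index from growing with every write, which is the "edited, not appended" property in miniature.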

Xu Jia reposted

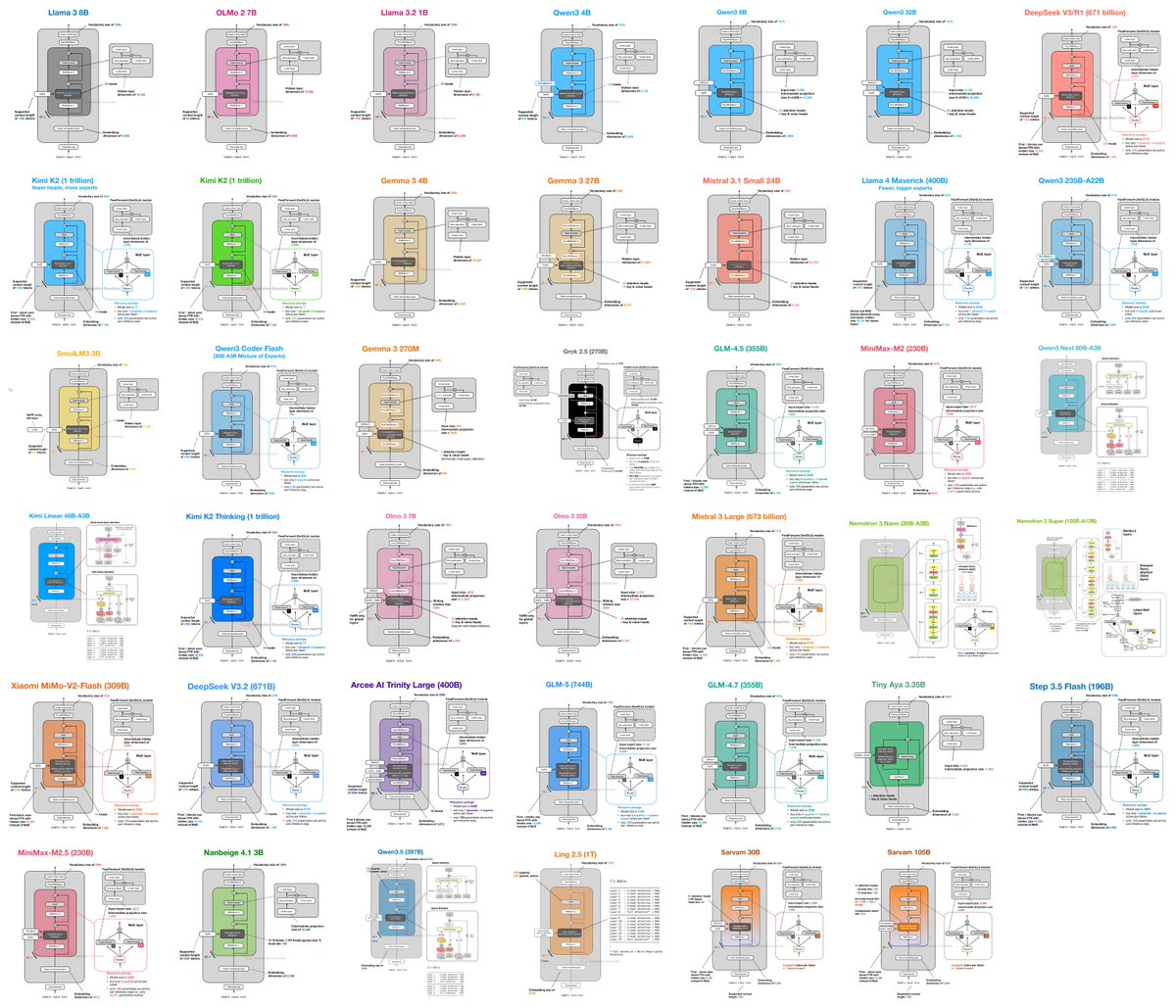

I (finally) put together a new LLM Architecture Gallery that collects the architecture figures all in one place!

sebastianraschka.com/llm-architectu…

Xu Jia reposted

A visual guide to modern LLM attention variants, all in one place: magazine.sebastianraschka.com/p/visual-atten…

Xu Jia reposted

I'm Boris and I created Claude Code. Lots of people have asked how I use Claude Code, so I wanted to show off my setup a bit.

My setup might be surprisingly vanilla! Claude Code works great out of the box, so I personally don't customize it much. There is no one correct way to use Claude Code: we intentionally build it in a way that you can use it, customize it, and hack it however you like. Each person on the Claude Code team uses it very differently.

So, here goes.

Congrats, Qinghe! Our multi-shot video generation work, which lets users generate videos with consistent shots, has been accepted to CVPR 2026. The code and model have been released. Feel free to give it a try.

Qinghe Wang@QingheX42

Excited to share that our paper MultiShotMaster has been accepted to #CVPR2026! Also First Prize 🥇 at #AAAI CVM Contest 2026! Code: github.com/KlingAIResearc… Project Page: qinghew.github.io/MultiShotMaste… Huggingface: huggingface.co/papers/2512.03… (1/n) MultiShotMaster-14B (720p)

Xu Jia reposted

I've observed 3 types of ways that great AI researchers work:

1) Working on whatever they find interesting, even if it's "useless"

Whether something will be publishable, fundable, or obviously impactful, is irrelevant to what these people work on. They simply choose something that feels interesting, weird, beautiful, or off in a way they can't ignore. For many of these people, "interestingness" is also often strong research intuition for an important problem that hasn't fully materialized yet, but their ideas often end up being meaningful during the process of exploration.

The canonical example for this in physics is Richard Feynman who got intrigued by the way that plates wobbled. He followed this curiosity on something that seemed like a useless endeavor, and it ended up feeding into deeper physics (and eventually won him a Nobel prize):

"It was effortless. It was easy to play with these things. It was like uncorking a bottle: Everything flowed out effortlessly. I almost tried to resist it! There was no importance to what I was doing, but ultimately there was."

The AI version of this that I've observed before is when someone obsesses over a "minor" failure case, a weird training dynamic, a small theoretical mismatch, or just something that most people think is pointless to chase down. These threads end up becoming interesting and impactful more often than you'd expect. The risk is that one can spend a long time on a pointless rabbit hole, but I've observed that the best researchers often have a very good sense for when an idea is a dead end vs. whether it's promising given more effort.

2) Working on what they feel extremely strongly is the "right" way to do something

These people have a clear picture of how the field *should* progress, and they're willing to work on unpopular things to prove their vision. They'll commit to something that others think is wrong, premature, or not worth it. An interesting quantitative way of measuring this is the citation graph of a paper. If you see a paper that has been around for many years but only started getting cited a lot more in recent years, that means that they were early (and right!). An obvious example is diffusion, the first paper of which was as early as 2015 (Sohl-Dickstein et al., 2015) but the ideas only started getting real traction in 2021 or later.

The failure mode here is getting stuck defending a pet theory long after it's been falsified. And there are obviously many examples in our community of people who do a lot of goalpost shifting or beat a dead horse for many decades. But when these ideas are legitimately undervalued, they result in paradigm shifts instead of incremental progress.

3) Crushing SOTA

There's a type of researcher who isn't necessarily the most "philosophically original" or creative, but they are extremely effective at pushing a system to its limits. You can give these people a pre-existing task and benchmarks, check in on them in a month, and they will have crushed SOTA. Obviously this is not about benchmark hacking or short term wins. It's a real skill to take a combinatorial space of noisy research ideas and papers and conduct a rigorous search and ablation process.

I've also found that this type of researcher has great intuition about the field: a sense for which ideas will scale, which tweaks are meaningful, good values for hyperparameters, and quickly figuring out which papers are worth paying attention to.

—————

I think that these archetypes are all concrete expressions of good "research taste". (1) is a taste for interesting questions, (2) is a taste for long term worldviews, and (3) is a taste for careful execution and science. The best researchers I know often have a preference for operating in one of these modes, but frequently weave in and out of each depending on the stage of the project.

Xu Jia reposted

- conditioning on actions is what makes it a world model

- planning action sequences is what makes it useful.

- predicting in representation space trained in a completely self-supervised, task-independent manner is what makes it complicated.

- training the encoder and predictor simultaneously (which this model doesn't do) is particularly complicated because you must prevent collapse.

It looks like you do enjoy making a fool of yourself.

Before posting ignorant insults, just ask yourself: would you post the same thing on LinkedIn, in your own name, for all your colleagues and future employers to see?
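
To make the first two bullets of this post concrete, here is a toy sketch, not the model under discussion: a hand-written predictor that is conditioned on actions, and a planner that searches action sequences by rolling that predictor forward. The one-dimensional latent state, the `predict` dynamics, and the exhaustive search are all assumptions chosen for brevity; a real system would learn the predictor in representation space.

```python
from itertools import product

ACTIONS = (-1.0, 0.0, 1.0)  # hypothetical discrete action set


def predict(z: float, action: float) -> float:
    """Toy action-conditioned predictor: next latent state from state and action."""
    return z + 0.5 * action


def plan(z0: float, goal: float, horizon: int) -> tuple[float, ...]:
    """Return the action sequence whose predicted final state is closest to the goal."""

    def final_error(seq: tuple[float, ...]) -> float:
        z = z0
        for a in seq:
            z = predict(z, a)  # roll the world model forward one step
        return abs(z - goal)

    # Exhaustive search over all len(ACTIONS) ** horizon sequences.
    return min(product(ACTIONS, repeat=horizon), key=final_error)
```

Without the `action` argument, `predict` could only forecast a single future, and there would be nothing for `plan` to search over.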

Xu Jia reposted

💡Can robots autonomously design their own tools and figure out how to use them?

We present VLMgineer 🛠️, a framework that leverages Vision Language Models with Evolutionary Search to automatically generate and refine physical tool designs alongside corresponding robot action plans.

✨ VLMgineer can fully automate tool and action design with AI-driven physical creativity. No human intervention. No pre-defined templates or few-shot examples.

✨ VLMgineer outperforms human-specified designs and existing everyday tools.

✨ We let the VLM fully decide how to evolve designs.

Deep dive with me: 🧵

Xu Jia reposted

📅Call for Papers: Special Issue on "Controllable Artificial Intelligence Visual Content Generation"📊💡

Submit your work on controllable AI-driven visual content generation for the SI!

Deadline: 31 August 2025

Details: link.springer.com/collections/gb…

#controllableAI #AIGC #CallForPapers

Our new work on video generation: VLIPP, a physically plausible video generation framework with a vision- and language-informed physical prior. It achieves performance competitive with closed-source platforms like Kling, Gen3 and Luma.

Baolu Li@calm_lu_312

📢 VLIPP: Towards Physically Plausible Video Generation With Vision and Language Informed Physical Prior 📢 We propose a two-stage approach that integrates physics as a condition into the VDM to align generation with physical principles. 🔥 Project page: madaoer.github.io/projects/physi…
