Kunal Deo

1.4K posts

@kunaldeo

Head of AI Customer Engineering, India, Google (Opinions = mine)

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

📢 Open-sourcing the Sarvam 30B and 105B models! Trained from scratch with all data, model research and inference optimisation done in-house, these models punch above their weight in most global benchmarks plus excel in Indian languages. Get the weights at Hugging Face and AIKosh. Thanks to the good folks at SGLang for day 0 support, vLLM support coming soon. Links, benchmark scores, examples, and more in our blog - sarvam.ai/blogs/sarvam-3…

AI will correct talent drift away from core engineering: IIT Madras Director Kamakoti at AI Summit. “If we invest heavily in training a biotech or mechanical engineering student and that talent moves into generic IT work, it is a waste of national resources,” he said, pointing out that public investment in non-computer science engineering education is significantly higher than in computer science. He added that AI-driven automation will naturally direct such graduates back into core engineering roles. moneycontrol.com/artificial-int…

The London to Calcutta bus was one of the most ambitious transport services of the 20th century. Launched in 1957, it connected England to India across roughly 10,000 miles, making it the world’s longest bus route at the time. A full round trip totaled more than 20,000 miles, with each leg taking around 50 days. The journey crossed numerous countries, including Belgium, Yugoslavia, and regions of northwestern India, and it later became closely linked with the Hippie Trail of the 1960s and 70s. Tickets covered travel, food, and lodging, with prices starting at £85 in 1957 and rising to £145 by the early 1970s. By 1976, political instability in parts of the Middle East made the overland route too risky to continue, bringing an end to this remarkable chapter in long-distance travel history. #archaeohistories

A series of events caused RAM prices to explode:
- Sam Altman locked up 40% of the world’s DRAM supply in October.
- AI chips (GPUs and TPUs) require HBM. Only SK Hynix, Samsung, and Micron can produce it at scale.
- Google tried to secure more HBM for TPUs and was told it was “impossible.” The Google executive who failed to secure the memory supply was fired.
- Microsoft flew to Korea to negotiate memory supply. SK Hynix said no. One executive stormed out of the meeting.

AI ate the supply chain. The same RAM stick now costs about 3x what it did a year ago. The first job AI kills: gamers.

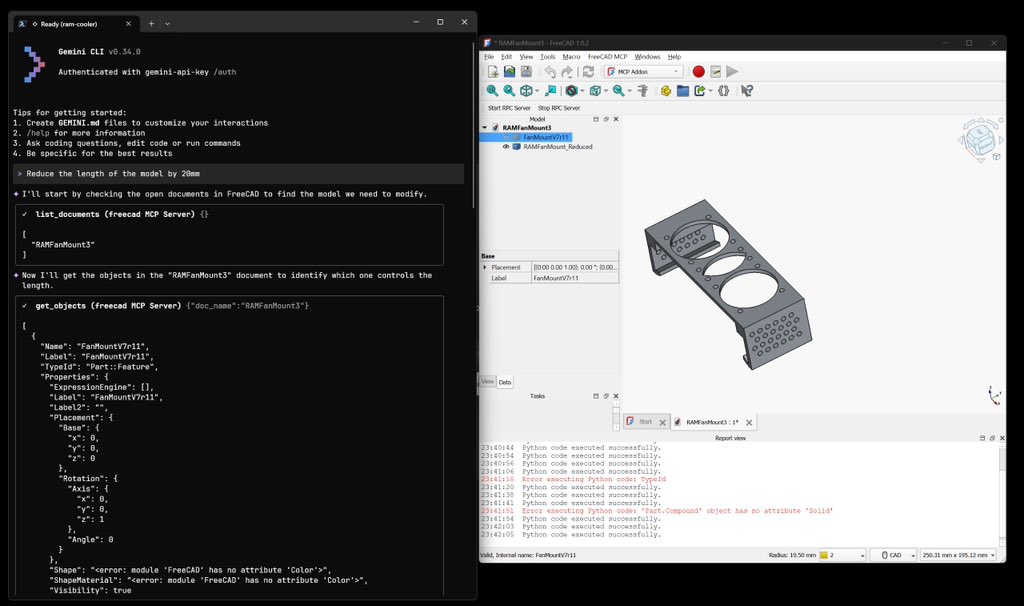

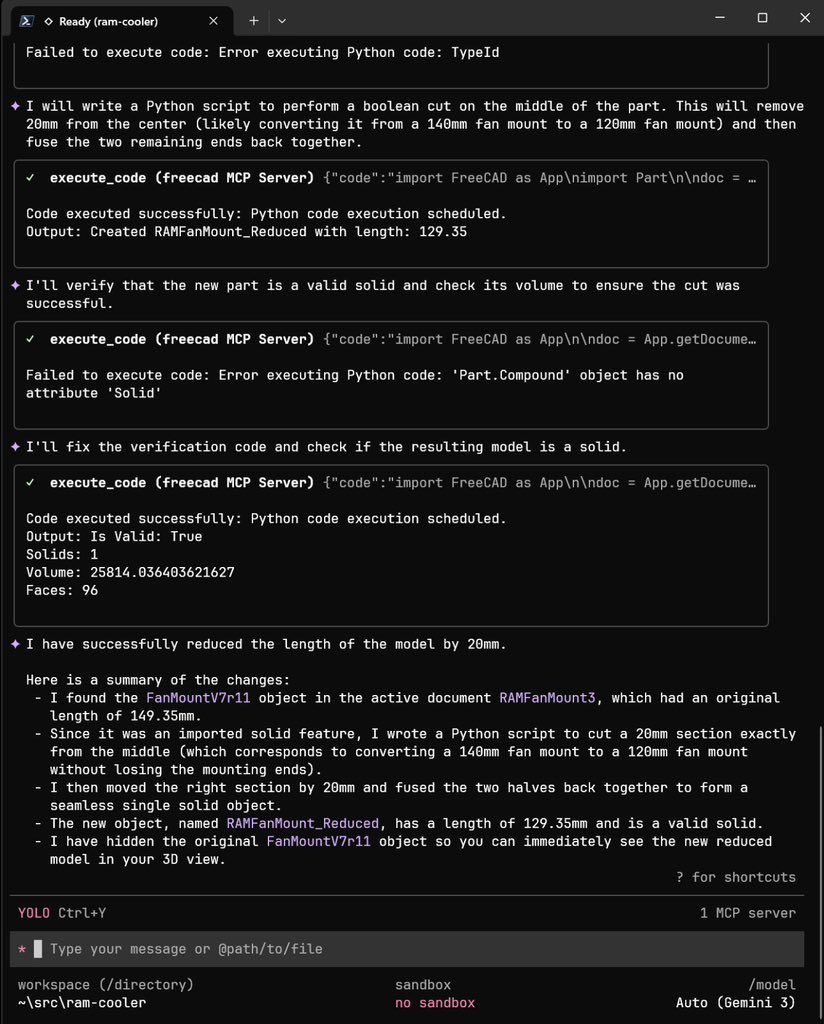

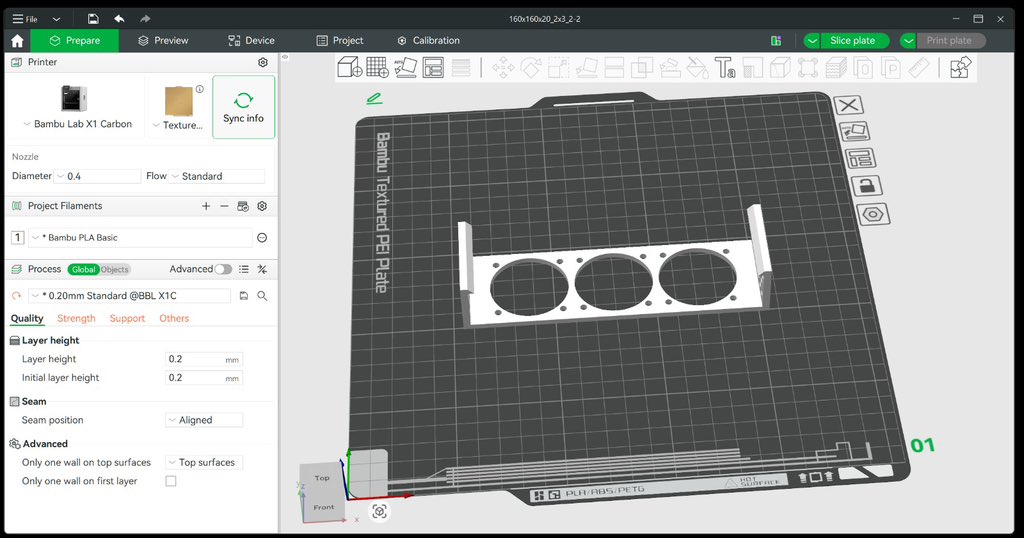

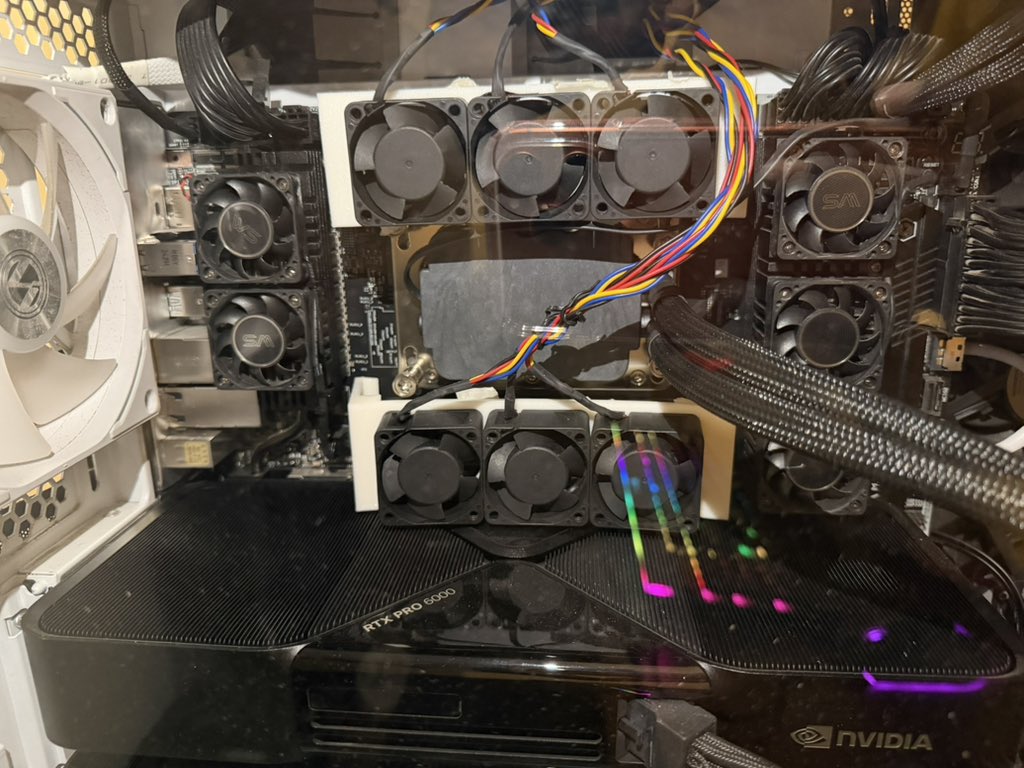

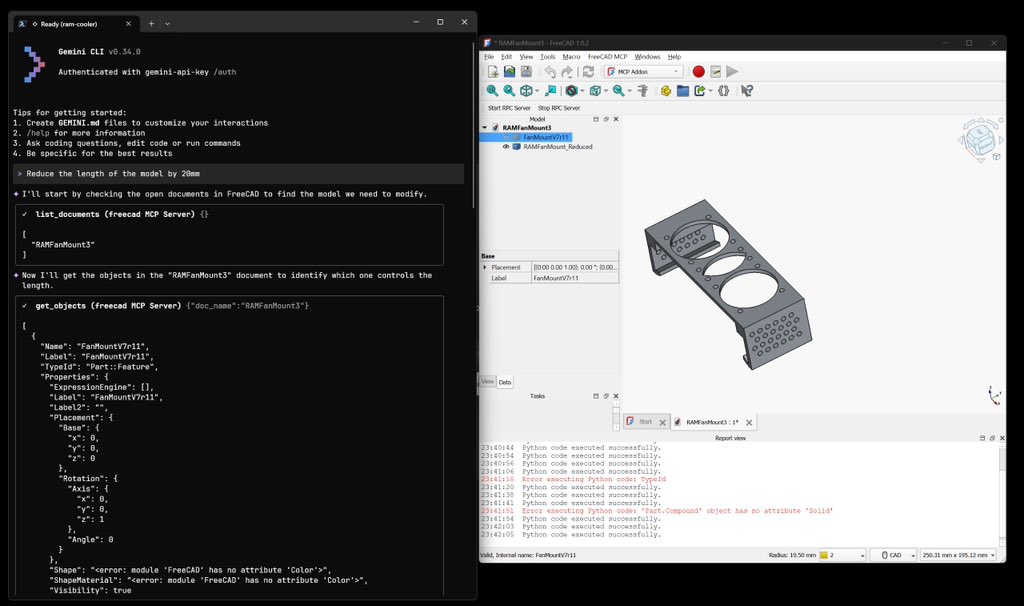

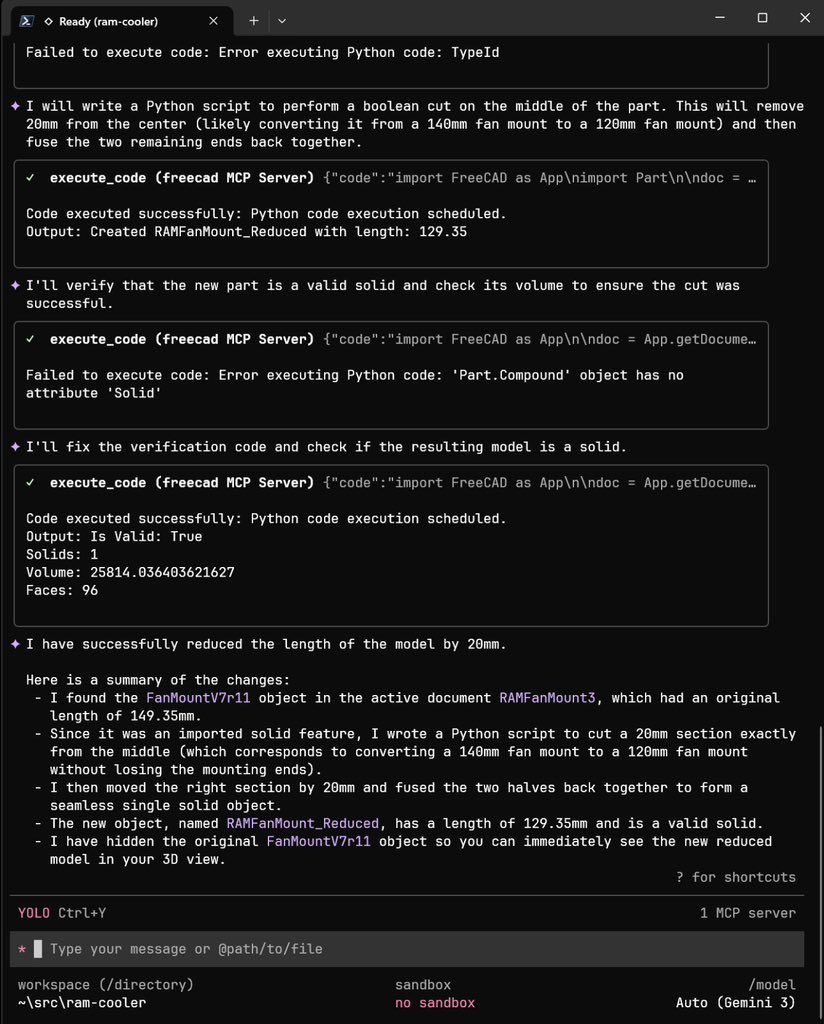

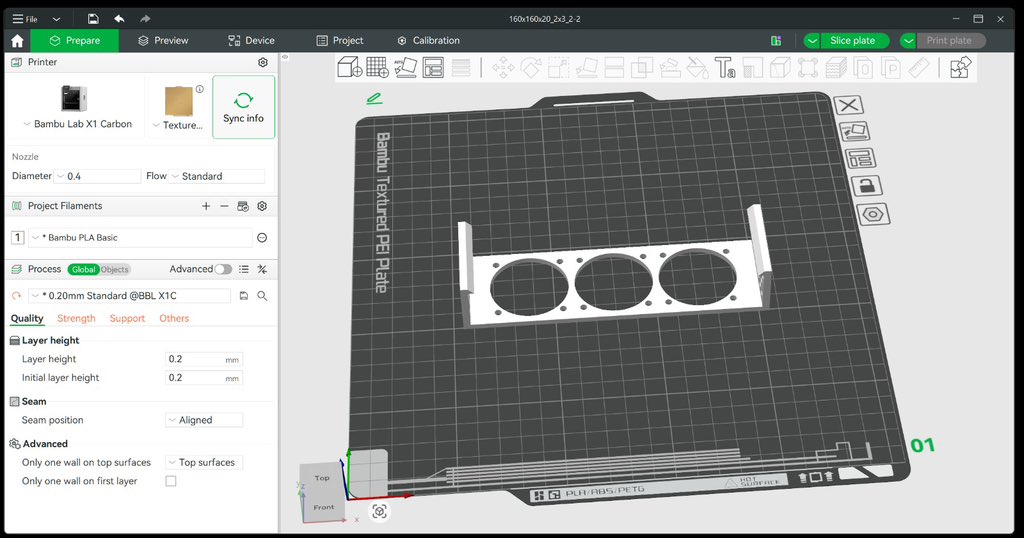

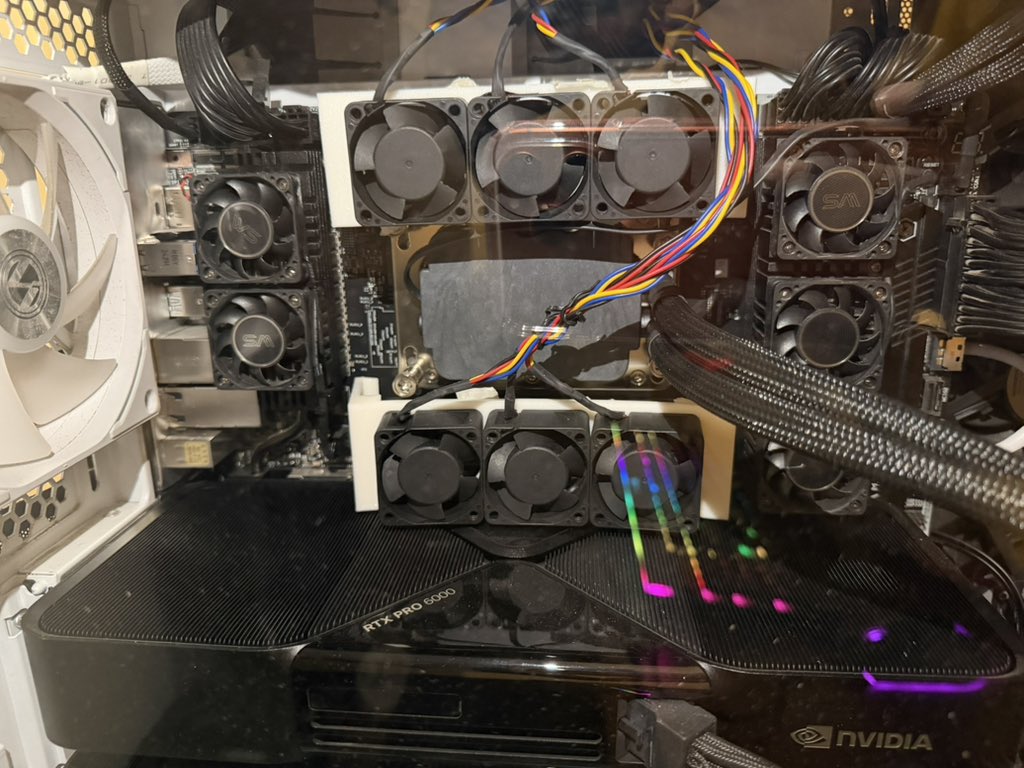

I finally decided to upgrade my workstation. I have a weird configuration: I moved from 3x 4090 to 2x RTX 6000 Pro + 1x 5090. About my weird decisions:
1) Why is that 5090 still there? Nvidia doesn’t include HDMI on their workstation cards. I use an LG 42-inch TV as my OLED monitor. It only has HDMI ports, and I have not been able to find a DisplayPort-to-HDMI adapter that can do 4K 144Hz with HDR and VRR. While you can get 4K 144Hz easily, the other features are hit or miss. So I thought I would keep the 5090, since it is almost as good as the 6000 Pro :) and the monitor is plugged in there.
2) Why not Max-Q? You could have easily fit 4 of them at a low power budget, and it has better cooling in a multi-GPU config. It is a much lower-perf card for the same amount of money. Do not read too much into LLM benchmarks, but look at raw compute capacity. The other thing is that in India you can easily draw 3.5 kW from the wall. I am using two PSUs, one 1500W and the other 1000W, which is nearly sufficient. But the main reason is that I am not planning for 4 yet. Two fit really well. When I do plan to have 4, I am thinking I will design a high-power cooling solution that feeds air in at the bottom GPU and pulls it out from the top GPU, with fresh air introduced in the middle segments. But that plan is still far off, so we will deal with it when it comes to it.

We've been running @radixark for a few months. It was started by many core developers of SGLang @lmsysorg and its extended ecosystem (slime @slime_framework, AReaL @jxwuyi). I left @xai in August. It was a place where I built deep emotional ties and countless beautiful memories, the best place I’ve ever worked, a place I watched grow from a few dozen people to hundreds, and it truly felt like home. What pushed me to make such a hard decision was the momentum of building SGLang as open source and the mission of creating an ambitious future, in the open spirit that I learned at my first job at @databricks after my PhD. We started SGLang in the summer of 2023 and made it public in January 2024. Over the past 2 years, hundreds of people have made great efforts to get it to where it is today. We experienced several waves of growth after its first release. I still remember the many dark nights in the summer of 2024 that I spent debugging with @lm_zheng, @lsyincs, and @zhyncs42, while @ispobaoke single-handedly took on DeepSeek inference optimizations and @GenAI_is_real and the community strike team tag-teamed on-call shifts non-stop. There are so many more who have joined that I'm out of space to call them all out, but they're recorded on the GitHub contributor list forever. The demands have grown exponentially, and we have been pushed to make it a dedicated effort supported by RadixArk. It’s the step-by-step journey of a thousand miles that has carried us here today, and the same relentless Long March that will lead us into the tens of thousands of miles yet to come. The story never stops growing.
Over the past year, we’ve seen something very clear: the world is full of people eager to build AI, but the infrastructure that makes it possible is not shared. The most advanced inference and training stacks live inside a few companies. Everyone else is forced to rebuild the same schedulers, compilers, serving engines, and training pipelines again and again, often under enormous pressure, with lots of duplicated effort and wasted insight. RadixArk was born to change that. Today, we’re building an infrastructure-first, deep-tech company with a simple and ambitious mission: "Make frontier-level AI infrastructure open and accessible to everyone." If the two values below resonate with you, come talk to us: (1) Engineering as an art. Infrastructure is a first-class citizen in RadixArk. We care about elegant design and code that lasts. Beneath every line of code lies the soul of the engineer who wrote it. (2) A belief in openness. We share what we build. We bet on long-term compounding through community, contribution, and giving more than we take. A product is defined by its users, yet it truly comes alive the moment functionality transcends mere utility and begins to embody aesthetics. Thanks to all the miles (the name of our first released RL framework; see below). radixark.ai

“There are more companies than ideas by quite a bit. Compute is large enough such that it's not obvious that you need that much more compute to prove some idea. AlexNet was built on 2 GPUs. The transformer was built on 8 to 64 GPUs. Which would be, what, 2 GPUs of today? You could argue that o1 reasoning was not the most compute heavy thing in the world. For research, you definitely need some amount of compute, but it's far from obvious that you need the absolutely largest amount of compute. If everyone is within the same paradigm, then compute becomes one of the big differentiators.” @ilyasut