Kyle Wegner

15.4K posts

@kwegner

I've been around here too long to summarize myself in 160 characters

Chicago, IL · Joined May 2008

418 Following · 650 Followers

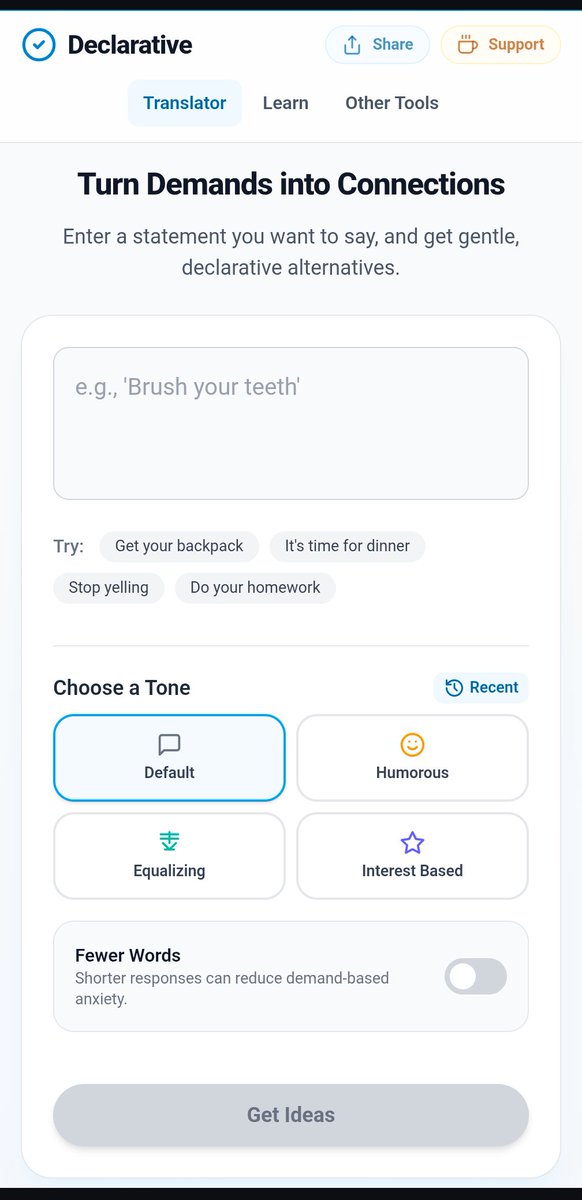

@OfficialLoganK declarativeapp.org

I needed a tool to help communicate with my disabled son and realized others could benefit too, but I didn't know how to build apps until AI Studio came around.

@EziQA_csmx @heynavtoor They can't be shared or deployed. Huge limitation.

@heynavtoor Very interesting but how you actually deploy it, or it is just for internal use?

@GoogleAIStudio Trying to 1-shot recreations of my son's favorite browser games and customize them for his interests. Learning a lot about what it gets right and where there's still a ton of room for growth.

Kyle Wegner retweeted

Testing out Codex after getting frustrated with the pitiful limits in @antigravity, even with the Pro plan. I'm a hobbyist who prompts a few times a day and I still run out of credits constantly. It's really unacceptable.

@antigravity I'm a hobbyist and have run into limits on Pro with barely any prompting. With as little access as I have, there's no meaningful way for me to see the difference between Pro and flash output. Might as well go flash only.

We’re evolving Google AI plans to give you more control over how you build. Every subscription includes built-in AI credits, which can now be used for Antigravity, giving you a seamless path to scale.

Google AI Pro is the home for the practical builder, hobbyists, students, and developers who live in the IDE and don't necessarily rely on an agent. This plan features generous limits for Gemini Flash, with a baseline quota included to "taste test" our most advanced premium models.

Google AI Ultra serves as the daily driver for those shipping at the highest scale who need consistent, high-volume access to our most complex models.

If you’re on Pro but need "extra juice" for a heavy sprint or deeper access to premium models, simply top up your AI credits to customize your plan.

Keep building. Keep shipping.

@GoogleAIStudio Plugging holes that have increased my API usage costs over 1000% week over week. I have no idea how it happened and I'm about to have to pull the plug on some projects before they cost me tons of money.

Kyle Wegner retweeted

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network.

In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome.

AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.

We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only.

We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements.

We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.

Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.

Instead, @AnthropicAI and its CEO @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.

The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.

Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.

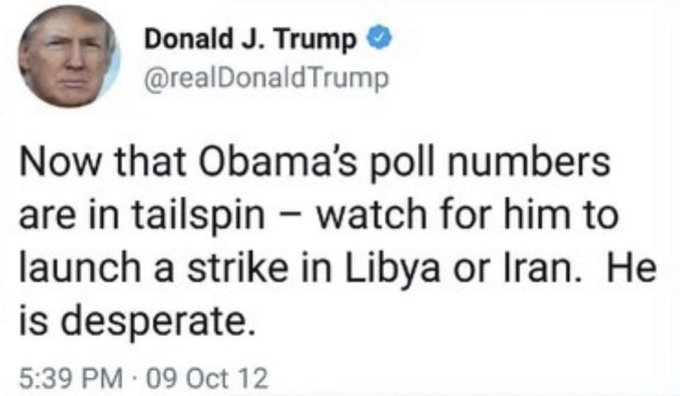

As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.

Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.

In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.

Kyle Wegner retweeted