Sabitlenmiş Tweet

Kyle Hailey

15K posts

Kyle Hailey

@kylelf_

Quantitative data visualization

san francisco Katılım Kasım 2009

802 Takip Edilen4.9K Takipçiler

@karpathy what are the advantages of this approach vs using NotebookLLM?

With NotebookLLM there is no friction to getting started which counts for a lot

English

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searchers), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

English

Kyle Hailey retweetledi

Wait, so the founder of Anthropic is "Amodei," as in "loves god"?

And he leads Anthropic, meaning "human-centered," which is being used in military strikes?

And the creator of ChatGPT is "Altman," as in "an alternative to humans"?

And he leads OpenAI, which is completely closed?

And then there's "Gemini," meaning "two-faced," from a company that promised to do no evil?

And the whole global AI arms race is being driven by people who claimed to be worried about AGI taking over the world?

Either the universe is an extremely cliché writer, or has a brilliant sense of humor

English

Pretty cool video on Leopold Aschenbrenner @leopoldasch investments and strategy!

youtube.com/watch?v=3QUdP1…

YouTube

English

A small hackathon win turned into something big — we’re building an AI copilot for SQL tuning on @Yugabyte ! Gave the first live demo at DSS/KubeCon Atlanta. Amazing to see Yugabyte investing in real, practical AI tools for developers. 🚀 #AI #Databases #KubeCon!

English

@mreflow Yes would love to see the AI graveyard . Would be informative. Easier to understand why things fail than why they succeed

English

I was just going through the Future Tools website to remove some dead tools, update some tags, and completely overhaul and update "Matt's Picks."

One thing that completely blew my mind, as I was reviewing all the tools, was how many tools have been absolutely decimated by the big incumbents.

There were so many tools 2-3 years ago that were standalone tools that felt groundbreaking at the time... Now most of them are just features in ChatGPT, Gemini, Claude, or Microsoft products.

Research tools, PDF summarizers, copywriting tools, video voice dubbing platforms, speech-to-text tools, extensions that add AI to spreadsheets, slide-deck creators, etc... The list goes on and on.

Day-by-day, it felt gradual but, looking back through all the tools I've added over the past 4-years, it was super apparent how much exciting tech has completely consolidated under the existing big brands.

Creating moats is hard.

English

Kyle Hailey retweetledi

#PostgresMarathon 2-004: Fast-path locks explained (and the PG 18 improvement)

🖖 don't forget to subscribe & share! we'll be diving into various dark corners of Postgres and learn how to make it ludicrously fast 🎇

After 2-003, @ninjouz asked:

> If fast-path locks are stored separately, how do other backends actually check for locks?

The answer reveals why fast-path locking is so effective - and why PG 18's improvements matter so much in practice. // See lmgr/README, the part called "Fast Path Locking" #L257-L335" target="_blank" rel="nofollow noopener">github.com/postgres/postg…

Remember from 2-002: when you SELECT from a table, Postgres locks not just the table but ALL its indexes with AccessShareLock during planning. All of these locks go into shared memory, protected by LWLocks. On multi-core systems doing many simple queries (think PK lookups), backends constantly fight over the same LWLock partition. Classic bottleneck.

Instead of always going to shared memory, each backend gets its own private array to store a limited number of "weak" locks (AccessShareLock, RowShareLock, RowExclusiveLock).

In PG 9.2-17: exactly 16 slots per backend, stored as inline arrays in PGPROC -- each backend's 'process descriptor' in shared memory that other backends can see, protected by a per-backend LWLock (fpInfoLock).

No contention, because each backend has its own lock.

We can identify fast-path locks in "pg_locks", column "fastpath".

Weak locks on unshared relations – AccessShareLock, RowShareLock, and RowExclusiveLock – don't conflict. Typical DML operations (SELECT, INSERT, UPDATE, DELETE) take these locks and happily run in parallel. While DDL operations or explicit LOCK TABLE commands create conflicts, so locks for them never go to the fast-path slots.

For synchronization, Postgres maintains an array of 1024 integer counters (FastPathStrongRelationLocks) that partition the lock space. Each counter tracks how many "strong" locks (ShareLock, ShareRowExclusiveLock, ExclusiveLock, AccessExclusiveLock) exist in that partition. When acquiring a weak lock: grab your backend's fpInfoLock, check if the counter is zero. If yes, safely use fast-path. If no, fall back to main lock table.

Therefore, other backends don't normally need to check fast-path locks - they only matter when acquiring a conflicting strong lock, which is rare (in this case: bump the counter, then scan every backend's fast-path array to transfer any matching locks to the main table).

With partitioning becoming popular, 16 slots often isn't enough. A query touching a partitioned table with multiple indexes per partition quickly exhausts the fast-path limit. When you overflow those 16 slots, you're back to the main lock table and LWLock:LockManager contention.

This became a real problem for multiple PostgresAI clients in 2023 - they were experiencing severe LWLock:LockManager contention. We had some tests where we changed FP_LOCK_SLOTS_PER_BACKEND and confirmed that it mitigates LWLock:LockManager contention in certain cases, so I proposed to make this hard-coded value configurable: postgresql.org/message-id/fla…. Tomas Vondra was the first to respond, and it was clear he had also been studying similar cases.

In Postgres 18, the storage for fast-path locks has changed, now fast-path locks are stored in variable-sized arrays in separate shared memory (referenced via pointers from PGPROC). This allows variable sizing, so allowed number of fast-path locks for a backend scales with max_locks_per_transaction (default 64 slots). This is one of the trickiest parameters to understand fully, so we'll talk about it separately. Changing it requires Postgres restart.

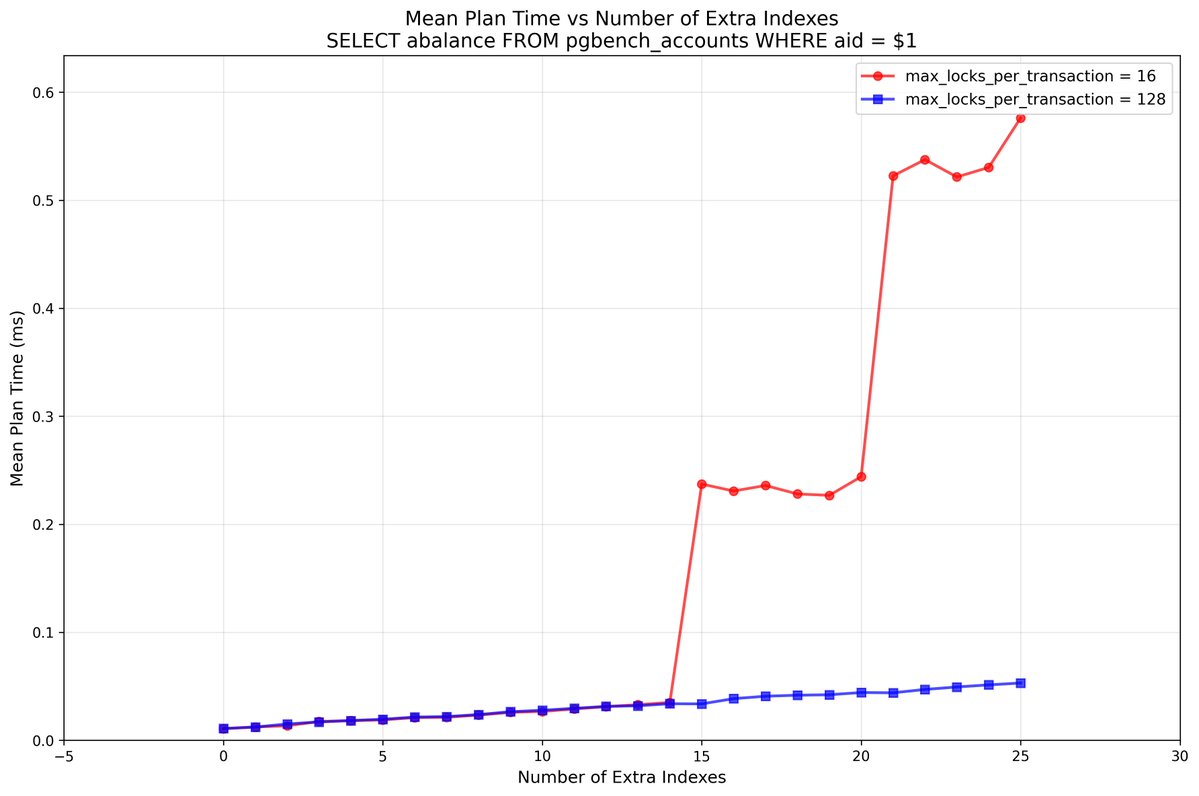

To wrap a today, let's look at some benchmarks. These benchmarks were conducted by the PostgresAI team by request from GitLab – see issue #note_2786838563" target="_blank" rel="nofollow noopener">gitlab.com/gitlab-com/gl-… (kudos to GitLab for keeping a lot of work publicly available, for wide open source community benefits – including Postgres community!)

We'll dive into some more details in next posts; here, I provide a simplified description.

On a 128-vCPU machine, we initialize a pgbench DB without partitioning involved:

pgbench -i -s 100

and then run a series of '--select-only' pgbench runs with high number of backends ('-c/-j 100'):

pgbench --select-only -c 100 -j 100 -T 120 -P 10 -rn

pgbench's '--select-only' consists of a simple PK lookup, a SELECT to the "pgbench_accounts" table.

We do it in multiple iterations, and after each iteration, we create a new extra index on "pgbench_accounts" -- it doesn't matter which index; what matters is that we don't use '-M prepared', so we know that each call will include planning time, and therefore, all indexes will be locked with AccessShareLock. The very first iteration starts with 2 relations locked – the table itself, and its single index, the PK.

Thus, when we have 14 extra indexes, the overall number of relations is 1+1+14 = 16 and this fits into standard FP_LOCK_SLOTS_PER_BACKEND slots in PG17 or in PG18+ when max_locks_per_transaction is decreased from default 64 to 16, to match the capacity of PG17.

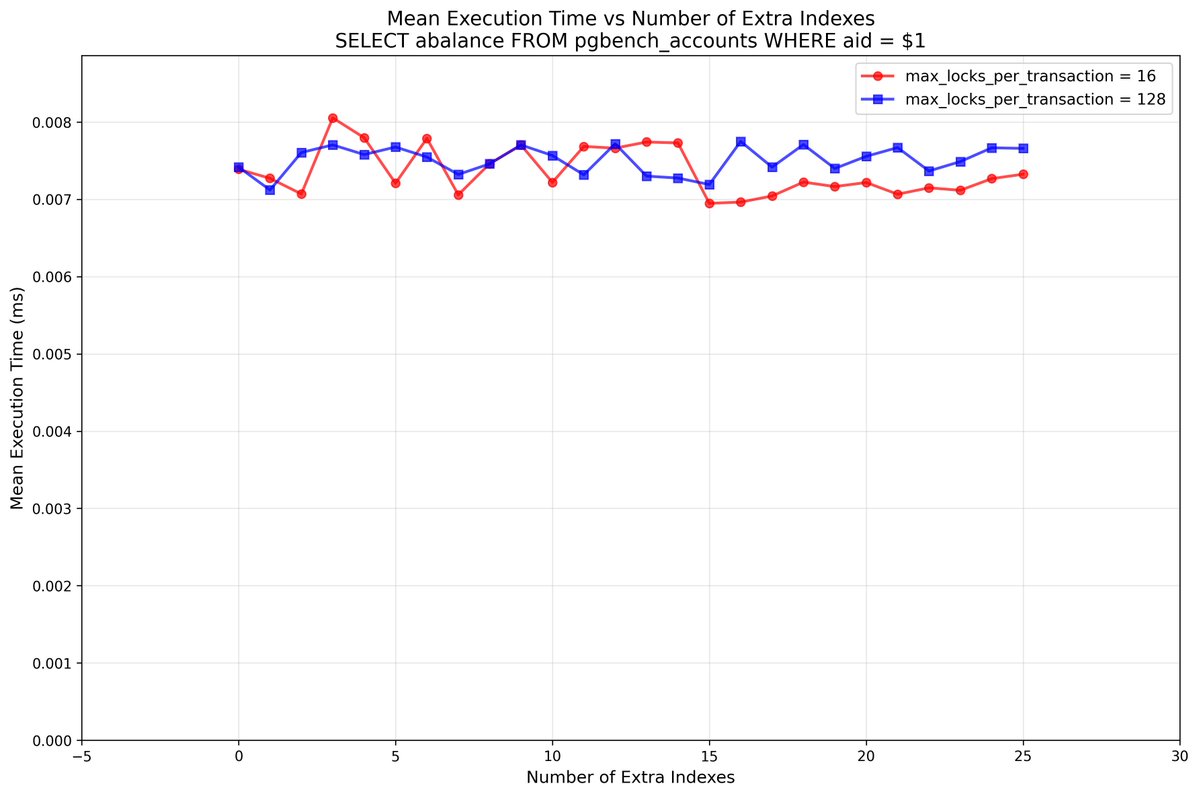

Let's look at the planning time and execution time separately:

What can we see here:

- execution time is stable, doesn't depend on the number of indexes

- as for the planning time, indeed, up to 14 extra indexes we see only slow growth of latency (locking of each index costs us a little bit of time -- so we can say, that extra indexes always slightly slow down SELECTs, unless prepared statements are used; an interesting fact on its own)

- and when we have 15 extra indexes, for max_locks_per_transaction=16 in PG18 (or in PG17 regardless of this setting), this becomes a game-changer – we see an obvious sign of a performance cliff, the planning part of the latency jumps

- while if we keep max_locks_per_transaction at the default 64, or – like in this case, raise to 128, we can keep adding indexes without the planning time being significantly affected

Let's look at wait events:

Here it is, LWLock:LockManager.

Notice how PG 18 with max_locks_per_transaction=16 (top; and PG17 would look similar) shows massive red bars of LWLock:LockManager waits at higher index counts, while PG 18 with max_locks_per_transaction=128 (bottom) stays mostly green (active execution).

But are there downsides?

This is where I'm genuinely curious. The implementation seems almost too good to be true. Let me think through potential tradeoffs:

- Memory overhead: with max_locks_per_transaction=64, you get 64 slots per backend. Each slot needs ~4 bytes for the OID plus some bits in fpLockBits. Some hundreds of bytes per backend. Looks negligible.

- Strong lock acquisition: here's where it gets interesting. When we take a strong lock (AccessExclusiveLock for DDL like ALTER TABLE or DROP), Postgres needs to scan every backend's fast-path array to transfer matching locks to the main hash table. With, say, 128 slots instead of 16, does this become noticeably slower? In fact, looking at the code (FastPathTransferRelationLocks, here: #L2883" target="_blank" rel="nofollow noopener">github.com/postgres/postg…) - it only scans the relevant group for that relation (still just 16 slots), not the entire array! So even with 1024 groups = 16,384 total slots, it only touches 16 slots per backend. Clever!

- The grouping hash: relations are distributed across groups via (relid * 49157) % groups (#L204-L218" target="_blank" rel="nofollow noopener">github.com/postgres/postg…). What if the workload has unfortunate OID patterns that cause clustering? Nope -- the prime number multiplication spreads even consecutive OIDs across groups effectively.

Am I missing something? Would love to hear your thoughts, especially if you've tested this in PG 18 or have ideas for additional benchmarks.

---

Summary

- Fast-path locking lets backends avoid fighting over shared lock tables for common operations like SELECT. PG 17 and earlier: 16 slots per backend. PG 18: scales with max_locks_per_transaction (default 64).

- If you have partitioned tables with indexes and see LWLock:LockManager waits, upgrading to PG 18 will likely help you fully solve this kind of contention!

---

Huge thanks to Tomas Vondra for taking this on and implementing the solution that landed in PG 18! He has an excellent presentation about this work: youtube.com/watch?v=iCmUhS… - highly recommend watching it.

YouTube

English

what we are up to at Yugabyte for Performance Observability and AI

youtu.be/ZBAf8Sm7PFw

YouTube

English

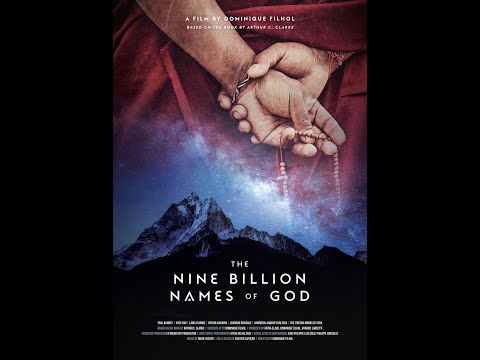

Clarke’s Nine Billion Names of God reads like a metaphor for the singularity: AI racing to know everything, until the lights go out and the cycle begins again. Asimov’s The Last Question explores the same theme—less poetic, more literal, equally powerful.

youtube.com/watch?v=UtvS9U…

YouTube

English