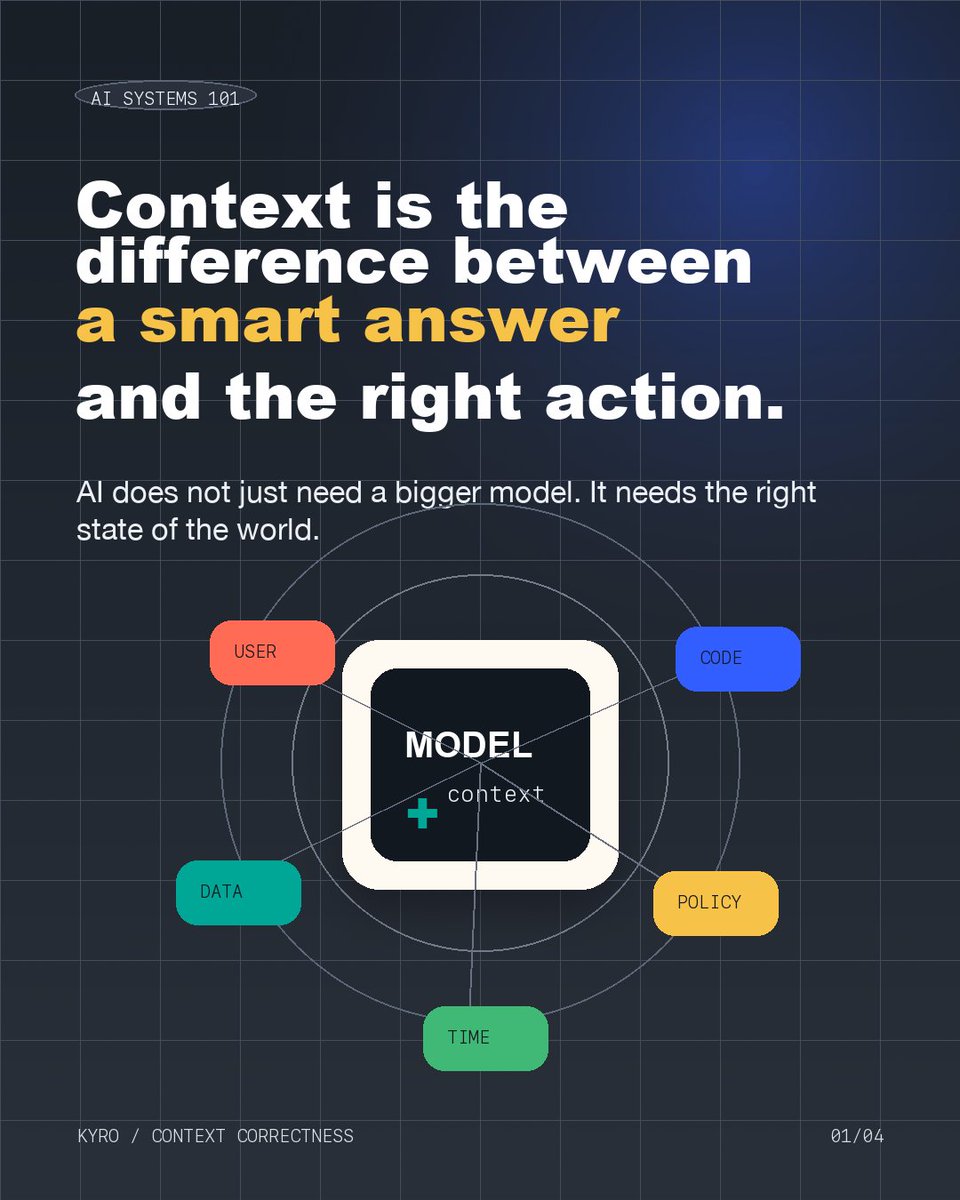

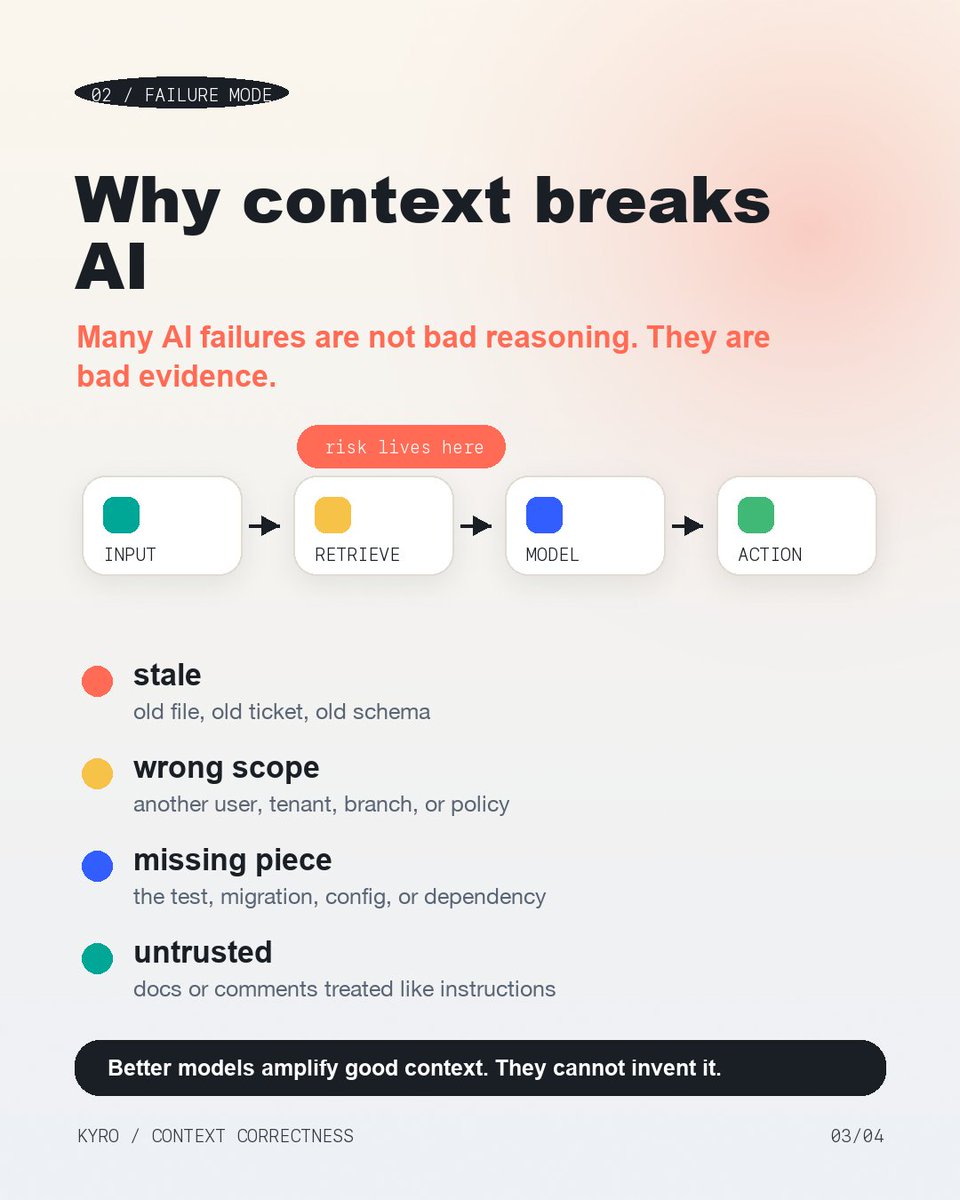

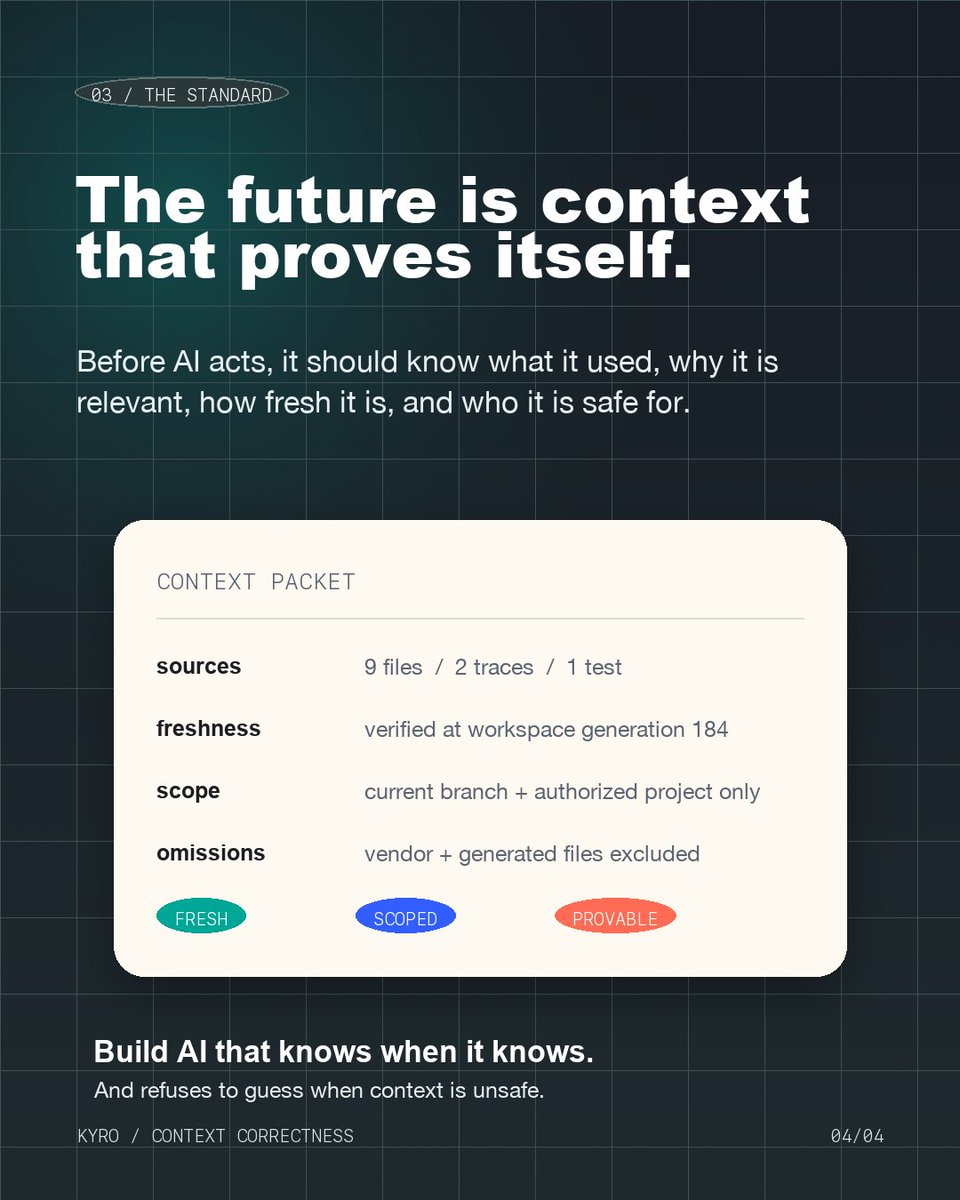

AI is bound to fail. The biggest lie you’re told: “Just plug an MCP into your knowledge base, and you have a smart assistant.” You don’t. That just connects your AI to data. It doesn’t prove the data is safe to trust. It doesn’t prove the policy wasn’t replaced yesterday. So the model does what models do: reads outdated context with perfect confidence. That’s how AI systems actually fail in production. Not because the model is stupid. Because the system handed it unsafe evidence and asked it to be certain. We built @kyrodb to fix exactly this. It’s a context correctness runtime that sits between your AI agents and your knowledge stores. Before context reaches the model, KyroDB checks freshness, scope, provenance, and proof. If it’s stale, unsafe, or unprovable, your AI doesn’t guess. It knows when it knows. And refuses when it doesn’t. KyroDB is the last line of defense before your AI speaks. First 100 developers get 25% off. Coupon + link in the comments.