
Event Sourcing and Message Streaming are NOT the same thing. Kafka is NOT suitable for Event Sourcing. Kafka is suitable for Message Streaming.

There are important reasons why these two different things require *different storage mechanics*. I'm going to simplify this by naming the two different kinds of storage:

1. Event Sourcing requires a *database* to serve as an *event journal*. Events stored for Event Sourcing must be written in a way that enables future reads to quickly assemble small(er) streams of events that belong to the single Aggregate that originally emitted them. This requires a random access index.

2. Message Streaming requires storage that is essentially a *flat file* that logs message elements. The message elements are individually written in sequential order and later read in sequential order. This requires one first-to-last sequential index.
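To make the difference between points 1 and 2 concrete, here is a minimal in-memory sketch of the two storage shapes. The class names `EventJournal` and `MessageLog` are hypothetical and do not correspond to any product's API. The journal keeps a random-access index from Aggregate id to event positions, so one Aggregate's events can be fetched directly. The log keeps only the sequential offset, so its only efficient read is "from offset N onward".

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    aggregate_id: str  # id of the Aggregate instance that emitted the event
    payload: str


class MessageLog:
    """Hypothetical flat, append-only log: the offset is the only index."""

    def __init__(self) -> None:
        self._entries: list[Event] = []

    def append(self, event: Event) -> int:
        self._entries.append(event)
        return len(self._entries) - 1  # offset of the new entry

    def read_from(self, offset: int) -> list[Event]:
        # Sequential read: everything from `offset` to the end of the log.
        return self._entries[offset:]


class EventJournal:
    """Hypothetical event journal: sequential storage plus a random-access
    index from aggregate id to the positions of that aggregate's events."""

    def __init__(self) -> None:
        self._entries: list[Event] = []
        self._by_aggregate: dict[str, list[int]] = defaultdict(list)

    def append(self, event: Event) -> int:
        position = len(self._entries)
        self._entries.append(event)
        # Maintain the per-aggregate index at write time.
        self._by_aggregate[event.aggregate_id].append(position)
        return position

    def read_stream(self, aggregate_id: str) -> list[Event]:
        # Random access: touch only this aggregate's positions, nothing else.
        positions = self._by_aggregate.get(aggregate_id, [])
        return [self._entries[p] for p in positions]
```

The design choice is simply *where the index lives*: the journal pays a small cost on every write to keep `_by_aggregate` current, and in return `read_stream` costs time proportional to the size of one Aggregate's stream rather than the size of the whole store.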

Based on these two fundamental points, I now break this down further into details:

3. Now, beyond Aggregate sub-streams, all events of an Event Sourced domain model are generally consumed as a totally-ordered stream of events in the *time* order that they were originally emitted by Aggregates. To do so *also* requires a sequential index. Thus, an Event Sourcing *database* must support *two* kinds of indexes.

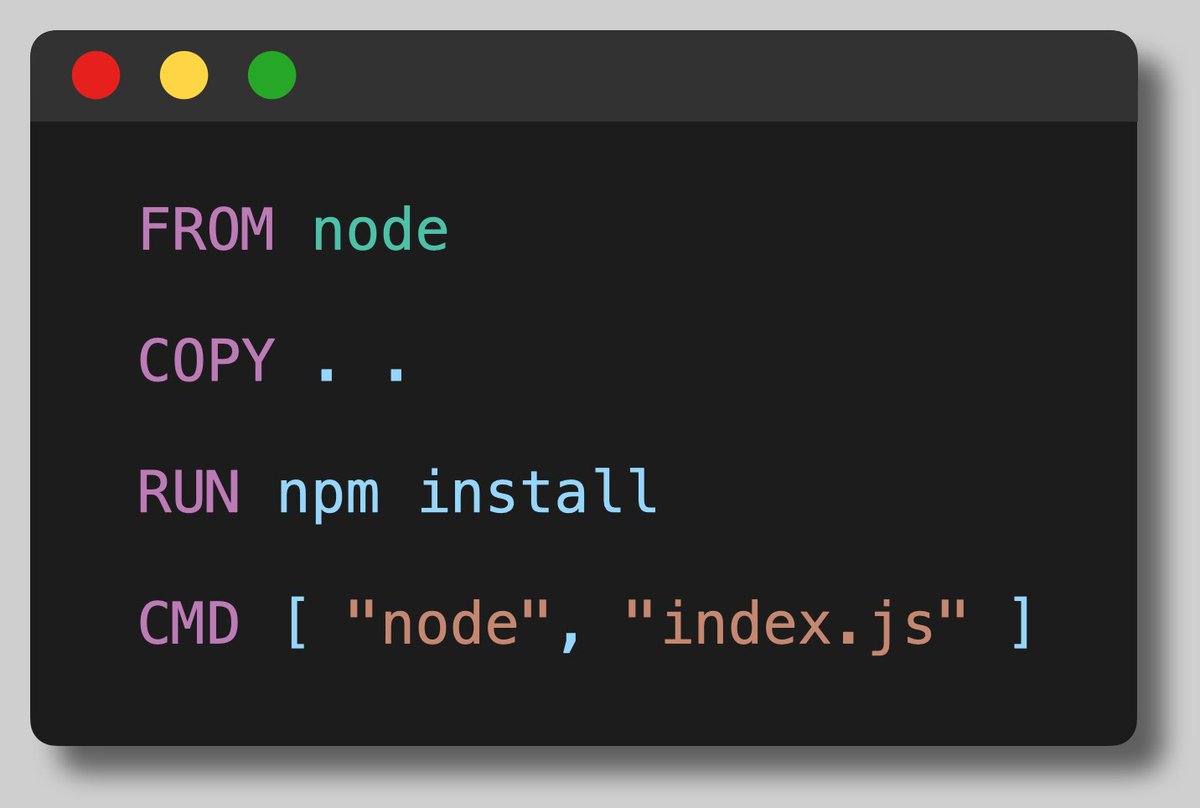
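One way to picture the *two* indexes from point 3 is a single relational table, sketched here with Python's built-in `sqlite3`. SQLite is only a convenient stand-in, and the table and column names (`events`, `global_position`, `stream_id`, `stream_version`) are illustrative assumptions. The primary key gives the total time order of all events, while the `UNIQUE (stream_id, stream_version)` constraint creates the random-access index used to load one Aggregate's sub-stream.

```python
import sqlite3

# Hypothetical event-journal schema; names are illustrative only.
SCHEMA = """
CREATE TABLE events (
    global_position INTEGER PRIMARY KEY AUTOINCREMENT, -- sequential index (total order)
    stream_id       TEXT    NOT NULL,                   -- Aggregate instance id
    stream_version  INTEGER NOT NULL,                   -- position within that Aggregate's stream
    payload         TEXT    NOT NULL,
    UNIQUE (stream_id, stream_version)                  -- random-access index per Aggregate
);
"""


def open_journal() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def append(conn: sqlite3.Connection, stream_id: str, payload: str) -> int:
    # Next version for this Aggregate's stream (1 for a brand-new stream).
    (next_version,) = conn.execute(
        "SELECT COALESCE(MAX(stream_version), 0) + 1 FROM events WHERE stream_id = ?",
        (stream_id,),
    ).fetchone()
    cur = conn.execute(
        "INSERT INTO events (stream_id, stream_version, payload) VALUES (?, ?, ?)",
        (stream_id, next_version, payload),
    )
    return cur.lastrowid  # the new event's global_position


def read_stream(conn: sqlite3.Connection, stream_id: str) -> list[str]:
    # Uses the (stream_id, stream_version) index: only this Aggregate's rows.
    rows = conn.execute(
        "SELECT payload FROM events WHERE stream_id = ? ORDER BY stream_version",
        (stream_id,),
    )
    return [row[0] for row in rows]


def read_all(conn: sqlite3.Connection, after_position: int = 0) -> list[tuple]:
    # Uses the primary key: the totally ordered stream of every event.
    rows = conn.execute(
        "SELECT global_position, stream_id, payload FROM events "
        "WHERE global_position > ? ORDER BY global_position",
        (after_position,),
    )
    return rows.fetchall()
```

`read_stream` serves Aggregate reconstitution and `read_all` serves downstream consumers; both are cheap because each is backed by its own index.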

4. Kafka is *NOT* suitable for an Event Sourcing *database*. What is the name of the concept under which *messages* are *logged*? It's a *topic*. Kafka is a message log that can have many topics. Kafka has *one single index*, which is the sequence number of the *totally ordered stream of messages*.

5. Therefore, once messages have been written into a Kafka topic, they cannot be read randomly, because no random access index exists. Kafka was not designed for this.

6. With Kafka, if you need to read the small(er) streams of events originally emitted by single Aggregate instances, you would have to scan an entire topic from the first message to the last to be sure you didn't miss any event belonging to one of the individual Aggregate streams. This means O(N) read time: reads become slower as every new message is written to the topic. You have 1 billion total events and you need to read just the 5 that make up one Aggregate's event stream? "Ain't-gonna-happen."
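The cost difference in point 6 can be seen in a toy model. This is not Kafka client code: the "topic" is assumed to be a plain Python list of `(key, payload)` pairs with no index but its position, and the function names are hypothetical. Rebuilding one Aggregate's stream by scanning must examine every message, while a per-key index (which an event journal maintains on write) touches only the matching entries.

```python
from collections import defaultdict


def read_aggregate_by_scan(topic, aggregate_id):
    """Rebuild one Aggregate's stream from an offset-only log.

    Returns the matching payloads and how many messages had to be examined.
    """
    matches, examined = [], 0
    for key, payload in topic:  # first offset to last: there is no way to skip ahead
        examined += 1
        if key == aggregate_id:
            matches.append(payload)
    return matches, examined


def build_index(topic):
    # Per-key index of the kind an event journal keeps up to date at write time.
    index = defaultdict(list)
    for key, payload in topic:
        index[key].append(payload)
    return index


# 5,000 events spread across 1,000 Aggregates: 5 events per Aggregate.
topic = [(f"agg-{i % 1000}", i) for i in range(5000)]
scanned, examined = read_aggregate_by_scan(topic, "agg-7")
indexed = build_index(topic)["agg-7"]
```

Both approaches return the same 5 events, but the scan examined all 5,000 messages to find them. Grow the topic to 1 billion messages and the scan grows with it, even though the Aggregate's stream is still only 5 events long.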

For the really stubborn people who think they can outsmart physics, I'll add a few more points:

7. "I know, I know! I'll use a different topic for each Aggregate instance!" That would be okay if Kafka were designed to support millions, billions, or trillions of topics under a single broker. It wasn't.

8. "I know, I know! I'll use a K-Table to maintain snapshots of each Aggregate instance so I can read them quickly!" Reconstituting the state of an Aggregate must take priority over consuming a totally ordered stream of all events. If you try this, your K-Table snapshots will be only eventually consistent with the *real current Aggregate state*, so the Aggregate can't reliably read its own state.
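The lag described in point 8 can be illustrated with a deterministic toy model. This is not Kafka Streams code: the topic is assumed to be a Python list, the "K-Table" is a dict, and the consumer that builds snapshots is stepped by hand to stand in for its asynchronous processing. The class name `SnapshotLagDemo` and the account-balance domain are hypothetical.

```python
class SnapshotLagDemo:
    """Toy model: snapshots are derived from the topic by a consumer that lags."""

    def __init__(self) -> None:
        self.topic: list[tuple[str, int]] = []  # totally ordered events (stand-in for a topic)
        self.consumer_offset = 0                 # how far the snapshot builder has read
        self.snapshots: dict[str, int] = {}      # stand-in for a K-Table: id -> balance

    def emit(self, aggregate_id: str, amount: int) -> None:
        # The Aggregate's event is durable in the topic as soon as it is appended.
        self.topic.append((aggregate_id, amount))

    def consume(self, max_messages: int) -> None:
        # In a real system this runs asynchronously; here it is stepped explicitly.
        end = min(len(self.topic), self.consumer_offset + max_messages)
        for aggregate_id, amount in self.topic[self.consumer_offset:end]:
            self.snapshots[aggregate_id] = self.snapshots.get(aggregate_id, 0) + amount
        self.consumer_offset = end

    def snapshot_of(self, aggregate_id: str) -> int:
        return self.snapshots.get(aggregate_id, 0)

    def true_state_of(self, aggregate_id: str) -> int:
        # Ground truth: fold every event the Aggregate has emitted so far.
        return sum(a for k, a in self.topic if k == aggregate_id)


demo = SnapshotLagDemo()
demo.emit("acct-1", 100)
demo.consume(10)          # snapshot catches up: balance 100
demo.emit("acct-1", -30)  # new event written, consumer has not run yet
stale = demo.snapshot_of("acct-1")
```

At this point the snapshot still reports 100 while the Aggregate's real state is 70. An Aggregate that reloads itself from the snapshot to decide on its next command would decide on stale state, which is exactly the inconsistency point 8 warns about.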

The End.
