Nikita Leonov

3.1K posts

@leonovco

🧠 Cognitive architectures enthusiast | 🔀 Multi-agent system explorer | 🤖 Gen AI aficionado | 💻 Software crafter by nature | he/him

California, USA Joined April 2011

345 Following 325 Followers

Nikita Leonov retweeted

We just published our annual State of AI report for LPs and DVC community. This year it's made by Perplexity Computer and the presentation *self updates* with most notable numbers and newsworthy events every week so hope this stays relevant - state-of-ai-dvc.web.app

make sure you click/tap/hover over elements and explore the details, there's 2 hours worth of content inside

English

@0xSero What I'm missing for the pruned 35B is a comparison not against the original version but against the 27B on the same benchmarks. That would really show the value.

English

Best models to run on your hardware level

I'll be doing this every week, I hope you guys enjoy.

---- 8 GB ----

Autocomplete for coding (like Cursor Tab)

- huggingface.co/NexVeridian/ze…

- huggingface.co/bartowski/zed-…

Tool calling, assistant style

- huggingface.co/nvidia/NVIDIA-…

---- 16 GB ----

Here things get better:

Multimodal

- huggingface.co/Qwen/Qwen3.5-9B

- huggingface.co/Tesslate/OmniC…

- huggingface.co/unsloth/Qwen3.…

---- 24 GB ----

- The best model you can get (thanks Qwen) huggingface.co/Qwen/Qwen3.5-2…

- Great model (strong agents) huggingface.co/nvidia/Nemotro…

- Mine hehe huggingface.co/0xSero/Qwen-3.…

I'm doing a weekly series

English

@miolini Hard to understand all your claims on my level :) So asked ChatGPT: "Short answer: he’s partially right in spirit, but not really answering your original point—and he’s overgeneralizing."

English

LLM inference that combines conventional low-bit quantization with a Johnson-Lindenstrauss residual corrector to preserve the most important matrix-vector products while sharply reducing weight bandwidth. Instead of replacing model tensors with a pure sketch, each weight matrix is decomposed into a compact base representation, a tiny high-precision path for salient outlier weights, and a residual term that is stored as a one-bit random projection signature with a learned or calibrated scale. During inference, the main output is computed with standard efficient low-bit GEMM kernels, while a lightweight projected activation correction reconstructs the missing inner product signal from the residual sketch and adds it back to the result. This design keeps most of the system compatible with existing quantized inference stacks, but uses JL-style geometry preservation exactly where standard quantization fails, making it a plausible path toward lower effective precision, lower memory traffic, and better accuracy retention at aggressive compression ratios.
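A toy sketch of the decomposition described above, in pure Python so it runs anywhere. Everything here is an assumption made for illustration: the helper names (`compress_row`, `matvec`), the uniform 2-bit quantizer, picking outliers by magnitude, and using the residual norm as the "calibrated scale". The correction uses the standard sign-projection identity E[sign(g·r)(g·x)] = sqrt(2/pi)·(r·x)/‖r‖ for Gaussian g. The projection count is deliberately oversized so the effect is visible on a tiny example; it says nothing about achievable compression ratios.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def compress_row(row, proj, bits=2, n_outliers=2):
    """Split one weight row into base + outliers + one-bit residual sketch."""
    # High-precision path: keep the largest-magnitude weights exactly.
    top = sorted(range(len(row)), key=lambda i: abs(row[i]), reverse=True)
    outliers = {i: row[i] for i in top[:n_outliers]}
    rest = [0.0 if i in outliers else w for i, w in enumerate(row)]
    # Compact base: uniform symmetric low-bit grid (kept dequantized here
    # for readability; a real kernel would store integers plus a scale).
    levels = max(1, 2 ** (bits - 1) - 1)
    scale = max(abs(w) for w in rest) / levels or 1.0
    base = [round(w / scale) * scale for w in rest]
    # Residual: store only the sign of each random projection, plus its norm.
    resid = [w - b for w, b in zip(rest, base)]
    signs = [1 if dot(g, resid) >= 0 else -1 for g in proj]
    norm = math.sqrt(dot(resid, resid))
    return base, outliers, signs, norm


def matvec(rows, x, proj, use_correction=True):
    """Approximate W @ x from the compressed rows."""
    px = [dot(g, x) for g in proj]  # projected activation, shared by all rows
    k = math.sqrt(math.pi / 2) / len(proj)
    out = []
    for base, outliers, signs, norm in rows:
        y = dot(base, x) + sum(w * x[i] for i, w in outliers.items())
        if use_correction:
            # Estimate of <residual, x> recovered from the one-bit signature.
            y += norm * k * dot(signs, px)
        out.append(y)
    return out


rng = random.Random(0)
d, m, n_rows = 32, 512, 16  # m is oversized on purpose for a stable demo
proj = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(m)]
W = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n_rows)]
x = [rng.gauss(0, 1) for _ in range(d)]

rows = [compress_row(r, proj) for r in W]
exact = [dot(r, x) for r in W]


def sq_err(approx):
    return sum((a - e) ** 2 for a, e in zip(approx, exact))


err_plain = sq_err(matvec(rows, x, proj, use_correction=False))
err_fixed = sq_err(matvec(rows, x, proj, use_correction=True))
print("squared error without correction:", err_plain)
print("squared error with correction:   ", err_fixed)
```

The estimator's variance shrinks only as 1/m, so for activations uncorrelated with the residual it needs many projections per row before it beats skipping the correction. That trade-off is the part a real design would have to win on.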

English

running larger language models on smaller computers

Google Research@GoogleResearch

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

English

@miolini 🤷♂️ Valuable insight, but I'm not sure how it is relevant to my earlier statement, where ChatGPT says TurboQuant would not work as well for weight tensors as for KV :)

English

@leonovco As a general rule for any form of model weight optimization, it is preferable to include a brief post-training phase. However, this step is often skipped due to the added complexity it introduces.

English

@miolini You obviously know way better, I can only consult ChatGPT :) ChatGPT says that while it can be applied to weight tensors as well, it would result in error accumulation and would not achieve the same results as in the cache.

English

@leonovco I don't see why it cannot be applied to layer tensors too. It's just a convenient way to compress them, satisfying topology constraints.

English

@rohanpaul_ai Not sure about this research, but when something does not work, my agents either agree that a precondition does not need to be fixed or agree to disable a test that does not align with current requirements.

English

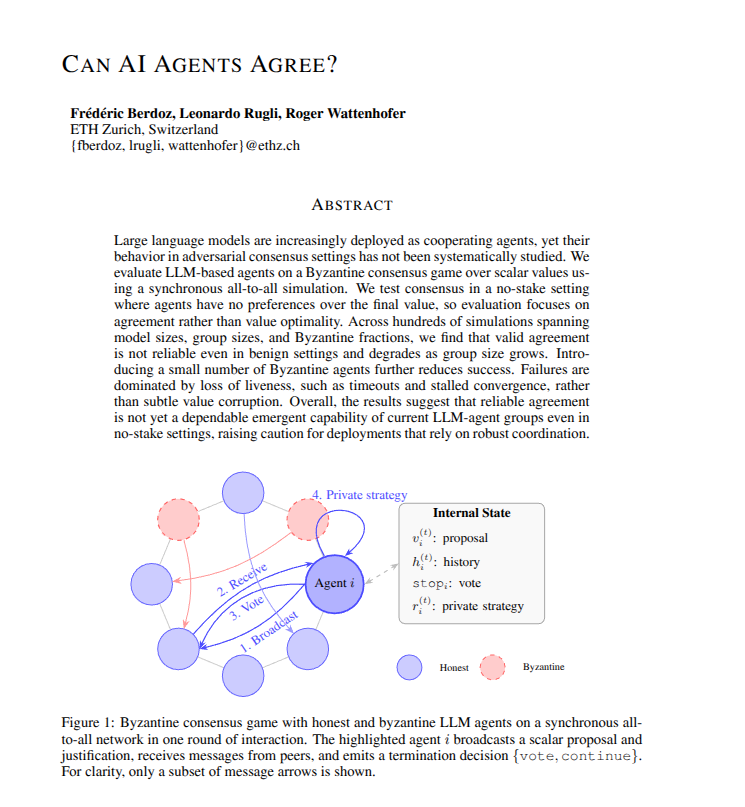

New research proves that current AI agent groups cannot reliably coordinate or agree on simple decisions.

Building teams of AI agents that can consistently agree on a final decision is surprisingly difficult for LLMs.

But the problem is that developers frequently assume that if you have enough AI agents working together, they will eventually figure out how to solve a problem by talking it through.

This paper shows that this assumption is currently wrong. Even in a friendly environment where every agent is trying to help, the team often gets stuck or stops responding entirely. Because this happens more often as the group gets bigger, it means we cannot yet trust these agent systems to handle tasks where they must agree on a correct answer.

----

Paper Link – arxiv.org/abs/2603.01213

Paper Title: "Can AI Agents Agree?"

English

Nikita Leonov retweeted

SentientWave Automata v0.2.9-ce is out.

This release brings:

- Temporal-first workflow execution in Elixir

- stronger reliability for agent runs, DMs, and long-running flows

- new deep research workflow support for complex goals

- multi-query Brave search evidence gathering for research rounds

Release notes: github.com/sentientwave/a…

English

@Real_Max_Miller He is probably not OK. He was supposed to have a kids' hockey camp today, and it got cancelled. A camp is not something that puts much stress on the body, and he still can't make it.

English

Toffoli still being evaluated. Unsure if he will travel on the upcoming #SJSharks road trip

English

Nikita Leonov retweeted

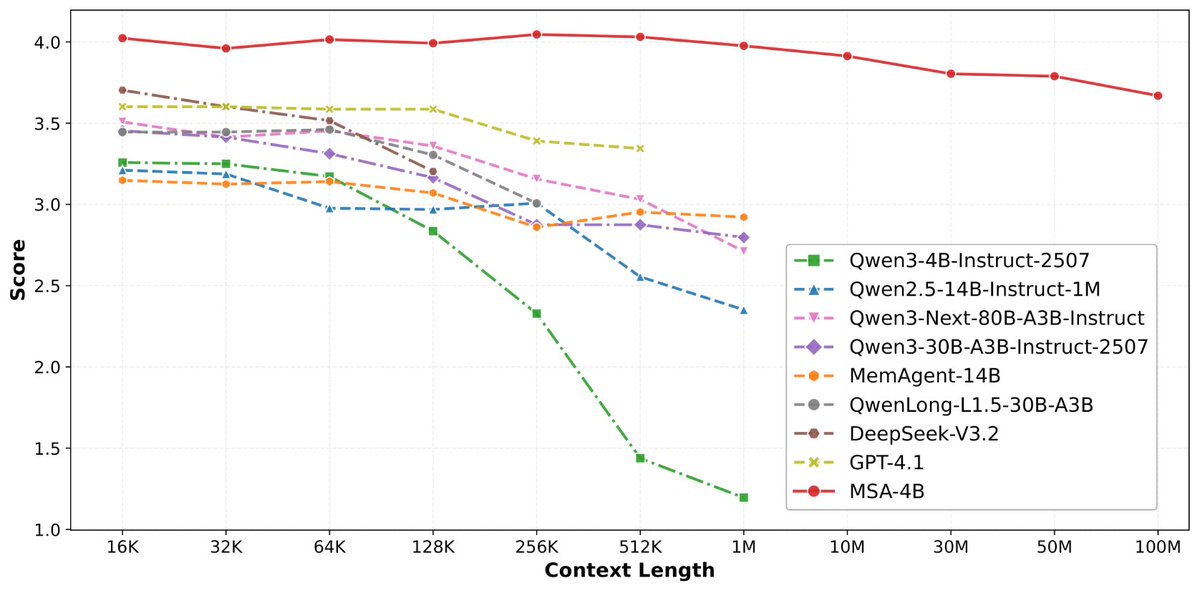

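The paper is here. It's called MSA, Memory Sparse Attention.

In one sentence, here is what it is:

It gives large models native ultra-long memory. Not bolt-on retrieval, not brute-force window expansion, but "memory" grown directly into the attention mechanism and trained end to end.

Why didn't earlier approaches work?

RAG is essentially an "open-book exam". The model remembers nothing itself and relies entirely on flipping through notes on the spot. How accurate that is depends on retrieval quality; how fast it is depends on data volume. Once information is scattered across dozens of documents and needs cross-document reasoning, it is lost.

Linear attention and KV caching are essentially "compressed memory". Things do get remembered, but the more you compress, the blurrier they get, and long contexts get dropped.

MSA takes a completely different approach:

→ No compression, no bolt-ons; instead the model learns to "focus on what matters"

The core is a scalable sparse attention architecture with linear complexity. When the amount of memory grows 10x, compute cost does not explode exponentially.

→ The model knows "where this memory came from and when"

It uses a positional encoding called document-wise RoPE, so the model natively understands document boundaries and temporal order.

→ Fragmented information can also be chained together for reasoning

The Memory Interleaving mechanism lets the model do multi-hop reasoning across memory fragments scattered all over. It doesn't just find one relevant record; it strings the clues into a chain.

The results?

· Scaling from 16K to 100 million tokens, accuracy degrades by less than 9%

· A 4B-parameter MSA model beats top-tier 235B-class RAG systems on long-context benchmarks

· Two A800s are enough to run 100-million-token inference. This isn't lab-only; it's a cost a startup can afford.

Put plainly, large models used to be an extremely smart genius with a goldfish's memory. What MSA wants to do is make them truly "remember".

We've put it on GitHub. It wasn't easy for the algorithm team, so feel free to give it a star in support. 🌟👀🙏

github.com/EverMind-AI/MSA

艾略特@elliotchen100

A small spoiler: @EverMind will also publish a high-quality paper this week

Chinese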

Nikita Leonov retweeted

The Max + Santana Row + Pink Poodle + La Vics + Happy Hollow Park and Zoo

NHLMuse@NHL_Muse

Macklin Celebrini becomes eligible for a contract extension on July 1… and it could be a massive one. 👀 If you’re Mike Grier… what contract are you offering Celebrini? 👇

English