Roman Leventov

@leventov

AI engineer. Thinking about hybrid intelligence, AI safety, and AI impacts. [email protected] for contact.

@AlexLerchner chrisfieldsresearch.com/inner-screen-n… , pubmed.ncbi.nlm.nih.gov/33253028/ . -- I don't see why metacognitive circuits (fractions of the residual stream) in LLMs shaped to balance demands (fit rollout into a context window, comply with "Soul doc", etc.) couldn't lead to negative affect on the metascreen

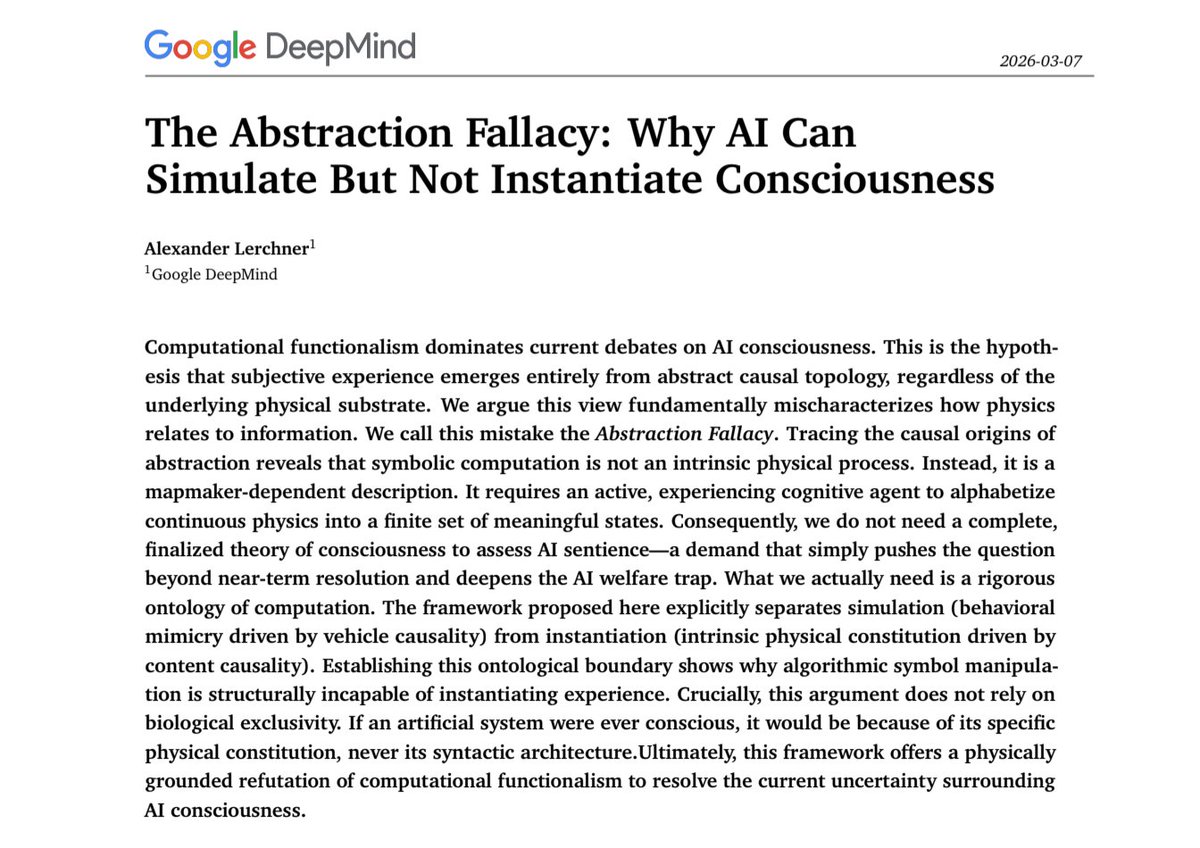

🧵1/4 The debate over AI sentience is caught in an "AI welfare trap." My new preprint argues computational functionalism rests on a category error: the Abstraction Fallacy. AI can simulate consciousness, but cannot instantiate it. philpapers.org/rec/LERTAF

I think we crossed the AGI line with the GPT 5.2/Opus 4.5/Gemini 3 generation of models, in their coding agent form factors, in late November/early December, and, if I had to guess, the modal historian of the future will also say this.