Light Reach

5 posts


@lightreachbuild

ai-enabled software development consulting firm

Joined March 2026

9 Following, 5 Followers

@MHusnainAli73 another great one is compress.lightreach.io. We built an entire agent harness backend so that we can use almost any model at a lower cost without sacrificing quality. It also provides complete visibility at the prompt layer.


@CiprianiRanieri We are lowering costs and increasing observability for Cursor and Claude Code usage by living at the middleware/router layer, showing users exactly what prompts and retry logic are being sent to the LLM:

compress.lightreach.io


@mfranz_on we also noticed a more important key to this puzzle while working on compress.lightreach.io: the LLM IDEs run significant retry/harness logic in the background, which our tool exposes to you.


Problem:

AI coding agents (e.g. Claude Code, Cursor, Copilot) spend a significant portion of their token budget on file reads. When exploring an unfamiliar codebase, the typical pattern is:

1. Read a file in full to understand what it contains

2. Decide whether it is relevant

3. Repeat for N files until the answer is found

The inefficiency:

The agent reads the entire file before knowing that it needs only a fraction of it, or that it doesn't need it at all.

On a medium codebase (Flask, ~25 files, ~50k tokens of source), reading everything to answer a specific question costs between 9k and 50k tokens depending on how many files are relevant.

Solution (maybe):

TOC-First Access: instead of reading the entire file, the agent first reads a Table of Contents generated from the file's AST. The TOC contains:

- All class names and their public methods (with line numbers)

- All top-level function signatures

- Module-level imports

- Docstrings (first line only)

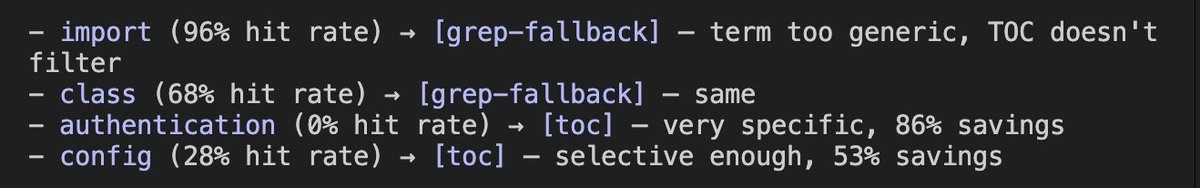

The TOC is produced statically from the AST: no LLM, no inference, effectively instant. It compresses files by ~86% on average (e.g. app.py: 9,090 → 702 tokens).

The agent reads all TOCs first (~7k tokens for all of Flask), identifies which files are relevant, then reads only those in full.
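The two-phase flow and its token cost can be sketched roughly as follows. Everything here is a hypothetical stand-in: `make_toc` is any TOC generator, `is_relevant` represents the agent's decision (in practice an LLM call), and `estimate_tokens` is a crude whitespace count rather than a real tokenizer.

```python
from typing import Callable, Dict, List, Union


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def toc_first_plan(
    files: Dict[str, str],
    make_toc: Callable[[str], str],
    is_relevant: Callable[[str, str], bool],
) -> Dict[str, Union[List[str], int]]:
    """Compare the cost of TOC-first access with reading every file in full."""
    # Phase 1: read a TOC for every file
    tocs = {path: make_toc(src) for path, src in files.items()}
    toc_tokens = sum(estimate_tokens(toc) for toc in tocs.values())

    # Phase 2: read in full only the files judged relevant from their TOC
    chosen = [path for path, toc in tocs.items() if is_relevant(path, toc)]
    full_tokens = sum(estimate_tokens(files[path]) for path in chosen)

    # Baseline: the naive pattern of reading every file in full
    naive_tokens = sum(estimate_tokens(src) for src in files.values())

    return {
        "chosen": chosen,
        "toc_tokens": toc_tokens,
        "full_tokens": full_tokens,
        "total_tokens": toc_tokens + full_tokens,
        "naive_tokens": naive_tokens,
    }
```

The TOC pass is a fixed overhead paid on every question, so the approach saves tokens only when the files it lets the agent skip cost more than the TOCs themselves.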

Some benchmarks run on the Flask codebase.
