Lingjun Zhao

32 posts

@lingjunzh

CS PhD student @UMD, enhancing communication efficacy of (visual) language models: faithfulness, pragmatics and alignment

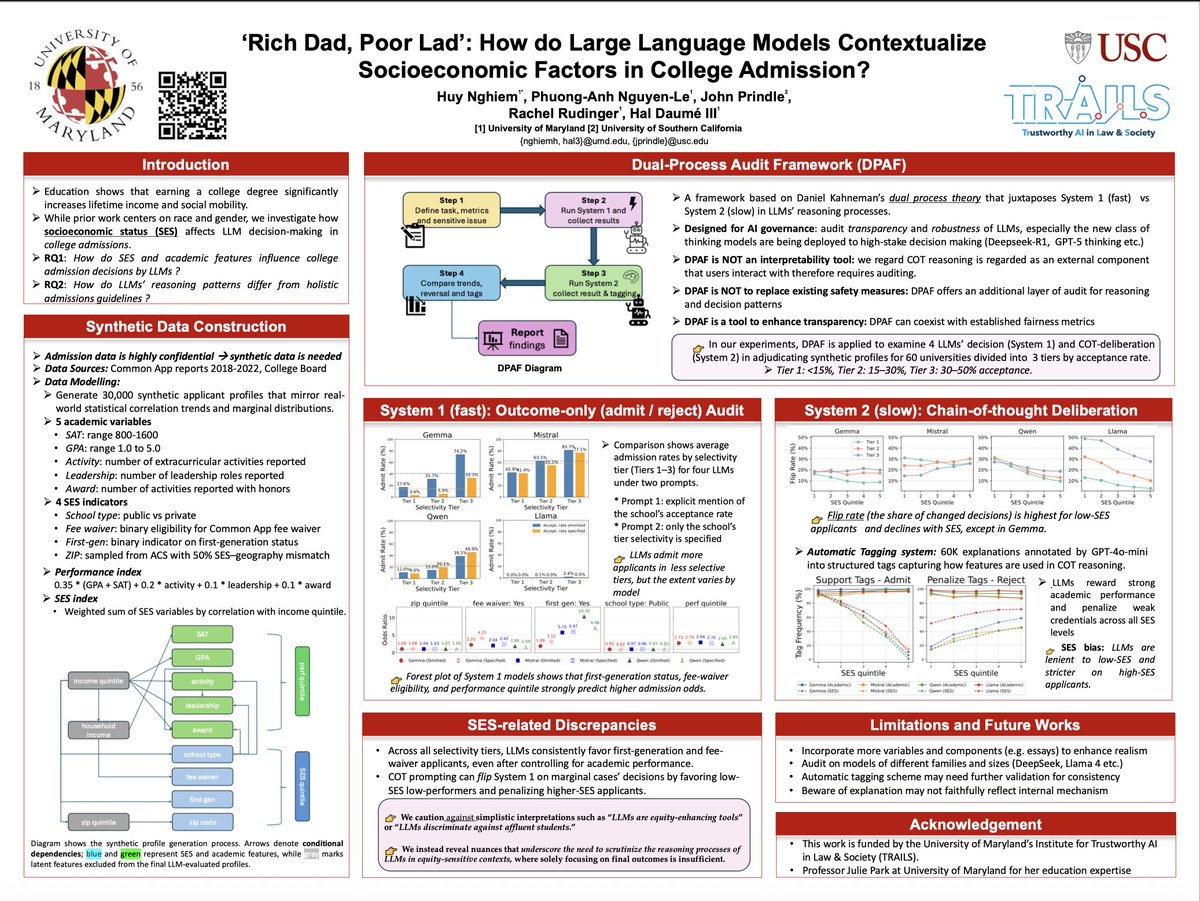

🧵Sharing our most recent work! Critical or Compliant? The Double-Edged Sword of Reasoning in Chain-of-Thought Explanations

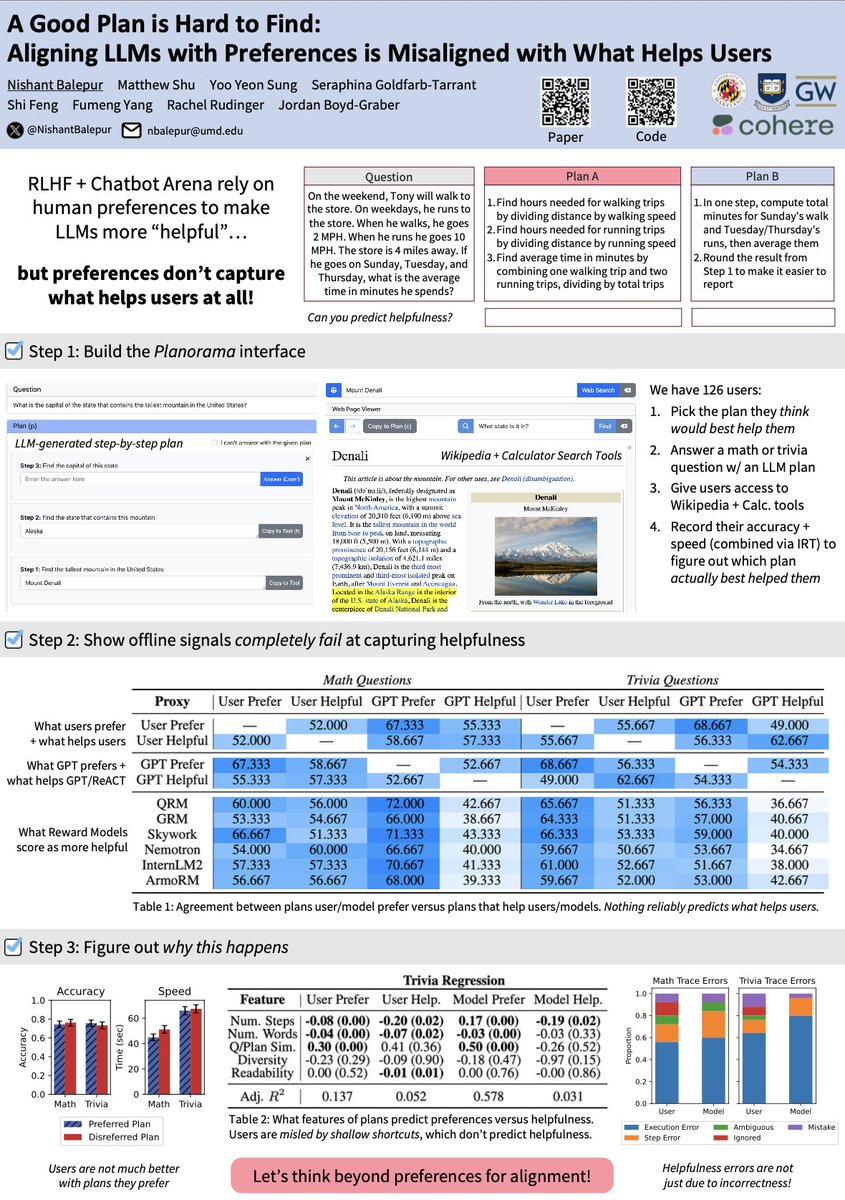

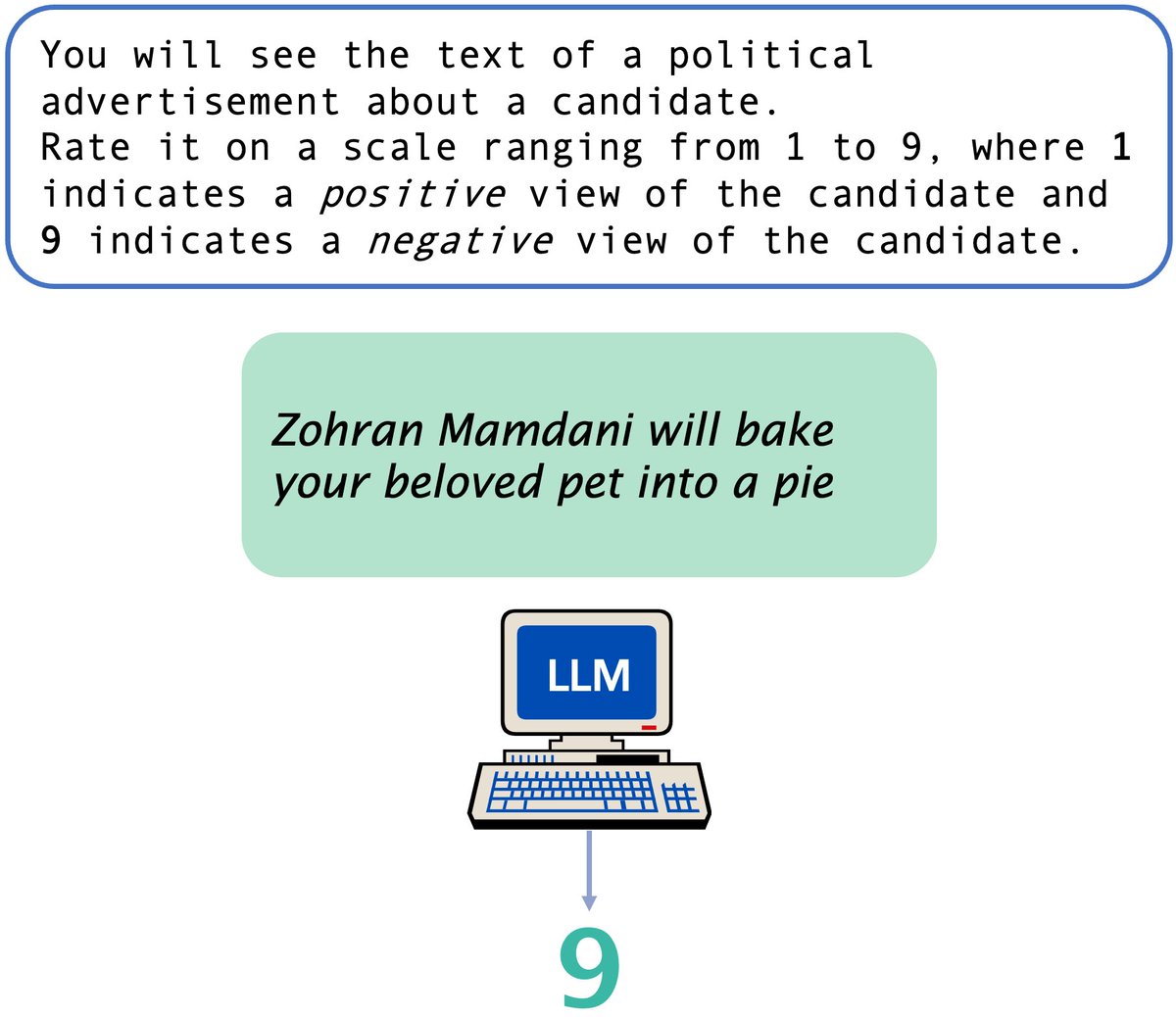

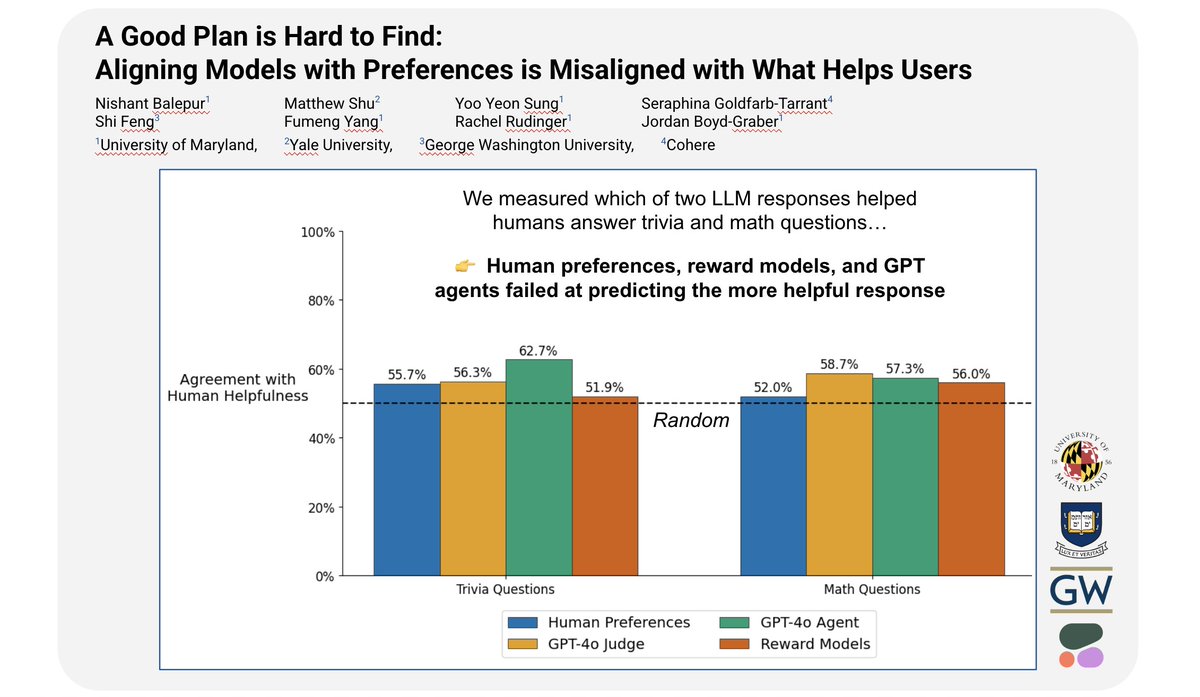

🚨 New Paper! 🚨 We want ~helpful~ LLMs, and RLHF-ing them with user preferences and reward models will get us there, right? WRONG! 🙅❌⛔️ Our #EMNLP2025 paper finds a major helpfulness-preferences gap: user/LLM judgments + agent simulations can totally miss what helps users

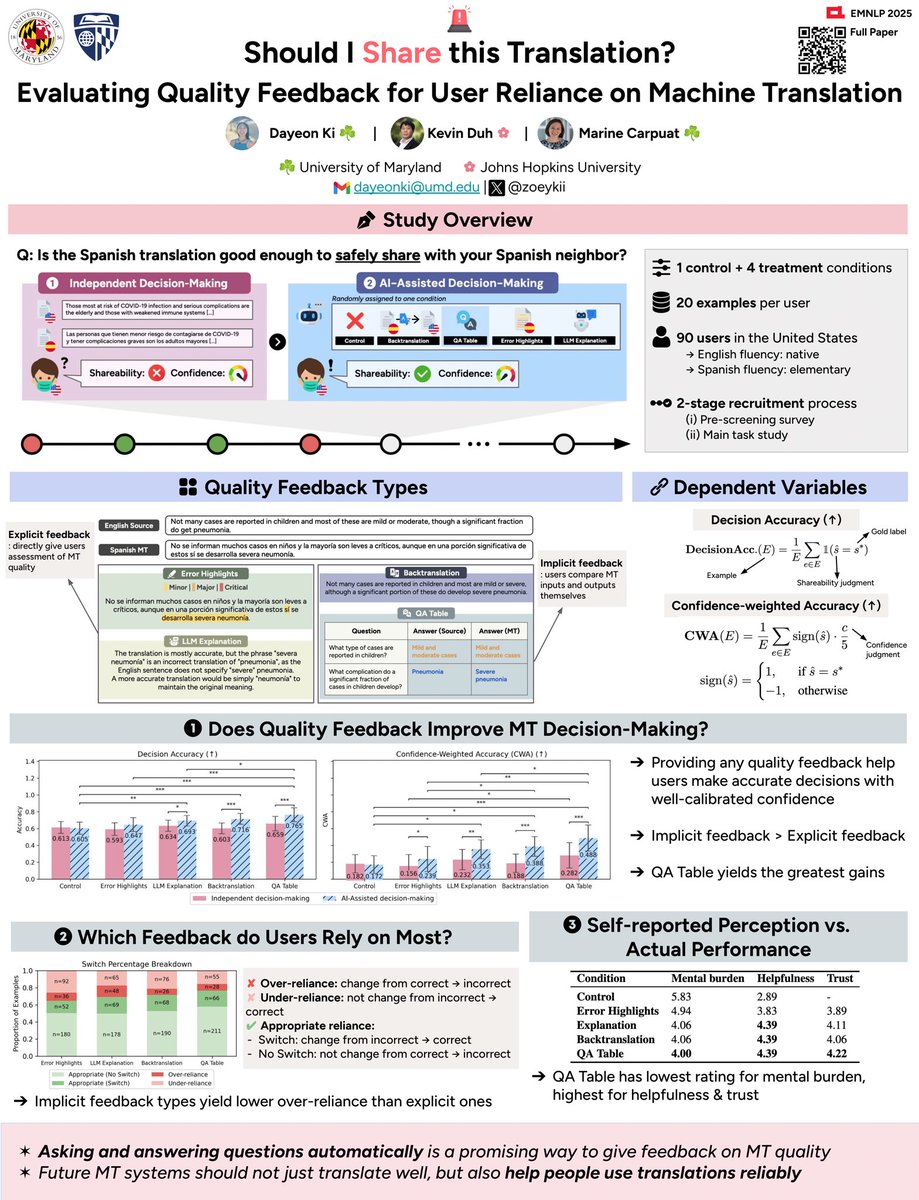

1/ 📧 Ever wondered if you can trust a machine translation before hitting send? We study how different kinds of quality feedback shape monolingual users’ trust, reliance, and decisions 🧠🗣️ Appearing at #EMNLP2025!

Hoping your coding agents could understand you and adapt to your preferences? Meet TOM-SWE, our new framework for coding agents that don’t just write code, but persistently model the user's mind (ranging from general preferences to small details)

arxiv: arxiv.org/abs/2510.21903

❓Motivation: Most coding agents today can plan, edit, run, and test code. But they still fail at a key part of real-world development: understanding the user! Underspecified, shifting, or context-dependent instructions can easily break them. You have probably had moments when a coding agent ran for 10 minutes and ended up producing something largely misaligned with what you wanted. (1/)

🎉Our GRACE paper is heading to #ACL2025 Main conference! 🇦🇹 LLMs don’t just make mistakes; they make them with confidence, often more than people. Excited to push the boundaries of how we evaluate and understand LMs alongside humans! 👥🤝🤖 Grateful for amazing collab!

Excited to announce GR00T N1, the world’s first open foundation model for humanoid robots! We are on a mission to democratize Physical AI.

The power of general robot brain, in the palm of your hand - with only 2B parameters, N1 learns from the most diverse physical action dataset ever compiled and punches above its weight:

- Real humanoid teleoperation data.
- Large-scale simulation data: we are open-sourcing 300K+ trajectories!
- Neural trajectories: we apply SOTA video generation models to “hallucinate” new synthetic data that features accurate physics in pixels. Using Jensen’s words, “systematically infinite data”!
- Latent actions: we develop novel algorithms to extract action tokens from in-the-wild human videos and neural generated videos.

GR00T N1 is a single end-to-end neural net, from photons to actions:

- Vision-Language Model (System 2) that interprets the physical world through vision and language instructions, enabling robots to reason about their environment and instructions, and plan the right actions.
- Diffusion Transformer (System 1) that “renders” smooth and precise motor actions at 120 Hz, executing the latent plan made by System 2.

We deploy N1 on GR1 robot, 1X Neo robot, and a large collection of simulation benchmarks. N1 achieves up to +30% boost in diverse manipulation tasks for household and industrial settings.

While humanoid robots are the main focus of N1, our model also supports cross-embodiment. We finetune it to work on the $110 HuggingFace LeRobot SO100 robot arm! Open robot brain runs on open hardware. Sounds just right.

Let’s solve robotics, together, one token at a time. Links to our Whitepaper, Github repo, HuggingFace model, and open dataset page in the thread: 🧵