Jimmy Lin retweeted

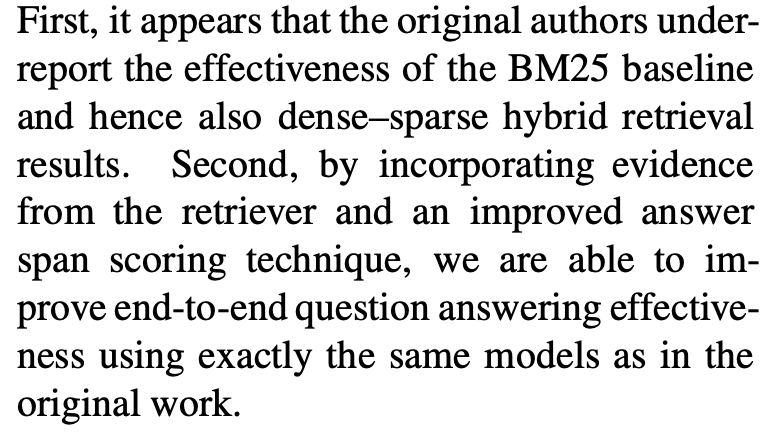

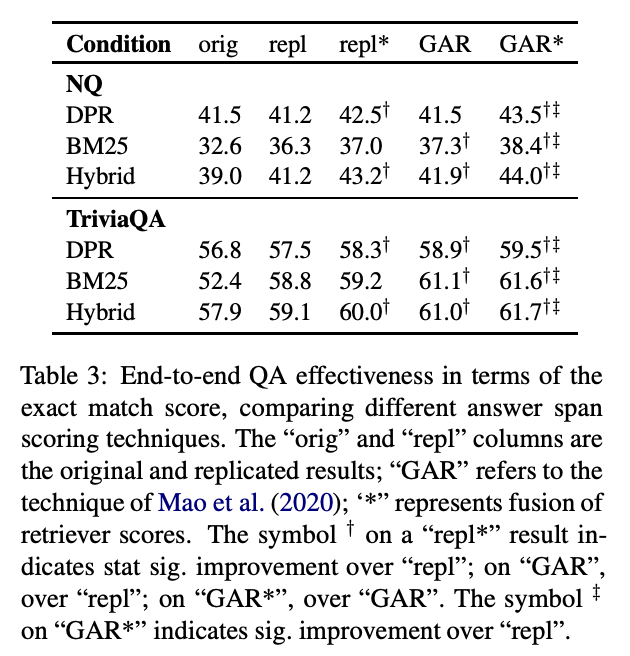

Does retrieval help RAG, or did the LLM already memorize the answer? 🤔 Too often, the overlap between RAG corpora and what LLMs “know” is unclear.

Better RAG evaluation needs tighter alignment between NLP and IR.

📚 That's why for RAG 2026 we are using @nvidia's ClimbMix corpus