
LooPIN

428 posts


@loopin_network

PinFi for AI Computing docs: https://t.co/WelBrjGoYF Discord: https://t.co/6w8526jtBS

United States · Joined April 2024

1 Following · 2.5K Followers

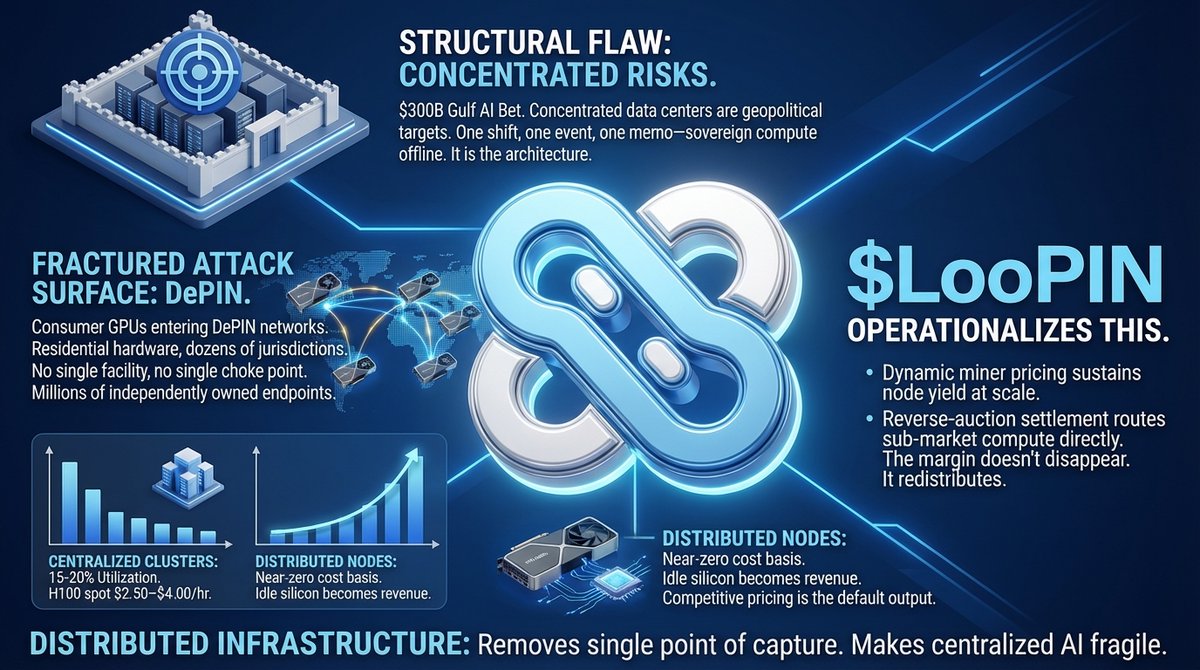

Distributed infrastructure does not just reduce cost. It removes the single point of regulatory or physical capture that makes centralized AI fragile.

$LooPIN operationalizes this: dynamic miner pricing sustains node yield at scale, while reverse-auction settlement routes sub-market compute directly to end users. The margin doesn't disappear. It redistributes.


Consumer GPUs are entering DePIN networks. RTX 4090s and 5090s in residential hardware across dozens of jurisdictions are now billable compute nodes. The attack surface fractures overnight. No single facility. No single choke point. Millions of independently owned endpoints absorbing demand that centralized racks cannot safely hold.

The economics follow the geography. Centralized GPU clusters run 15–20% average utilization. H100 spot clears at $2.50–$4.00/hr, margin captured by the platform. Consumer nodes carry near-zero cost basis. Idle silicon becomes revenue. Competitive pricing is not a strategy — it is the default output of the model.


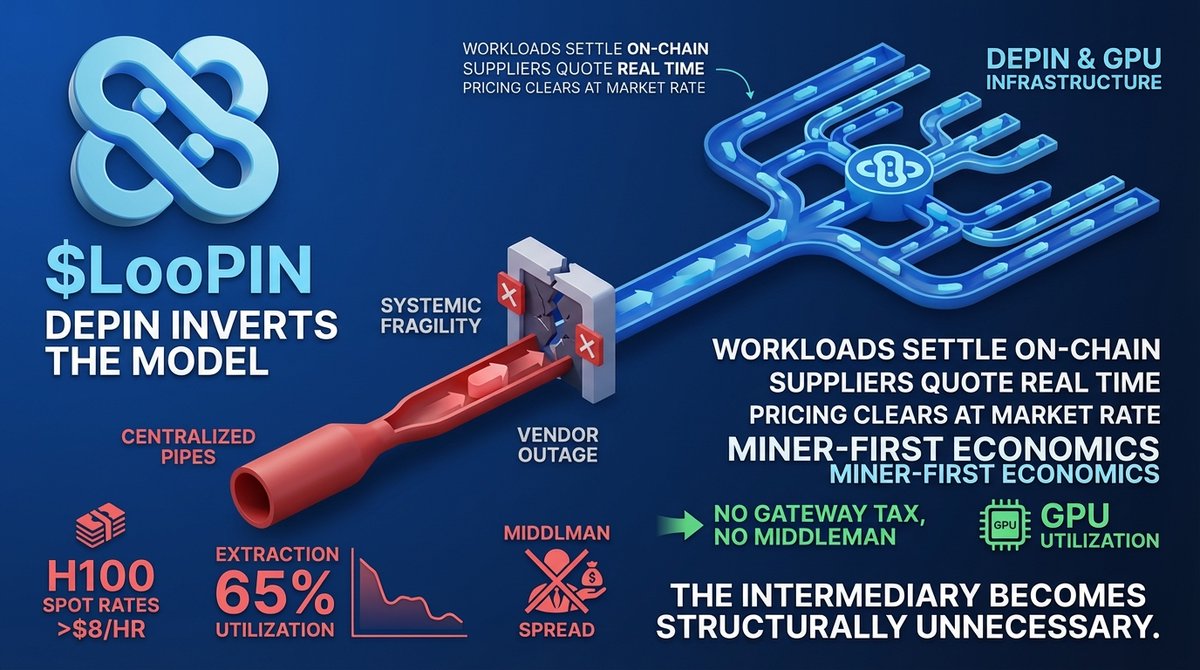

Every dApp call, every inference request — most flows through one provider's endpoint. Decentralized applications. Centralized pipes. The contradiction lives in the plumbing.

A single RPC gateway controlling routing means one vendor outage cascades across thousands of dApps. That's not infrastructure. That's systemic fragility.

The same logic applies to compute. H100 spot rates exceeded $8/hr on major cloud platforms through 2025, while global GPU utilization averaged below 65%. The spread is not efficiency. It's extraction. Idle capacity exists but never clears — centralized allocators capture the margin before it reaches market.

DePIN inverts the model. Workloads settle on-chain. Suppliers quote in real time. Pricing clears at market rate, not vendor markup. No gateway tax. No middleman holding the spread.

GPU infrastructure follows the same architectural inversion. Dynamic settlement, miner-first economics, on-chain clearing. The intermediary becomes structurally unnecessary.

$LooPIN


DePIN inverts the incentive stack. Reverse-auction settlement replaces opaque rack pricing. On-chain clearing makes utilization visible.

Dynamic compute allocation routes margin to miners and reduces TCO for end users — not the opposite. The infrastructure layer becomes composable and permissionless.

The 5-layer cake doesn't need a new recipe. It needs open infrastructure at layer 3.

$LooPIN


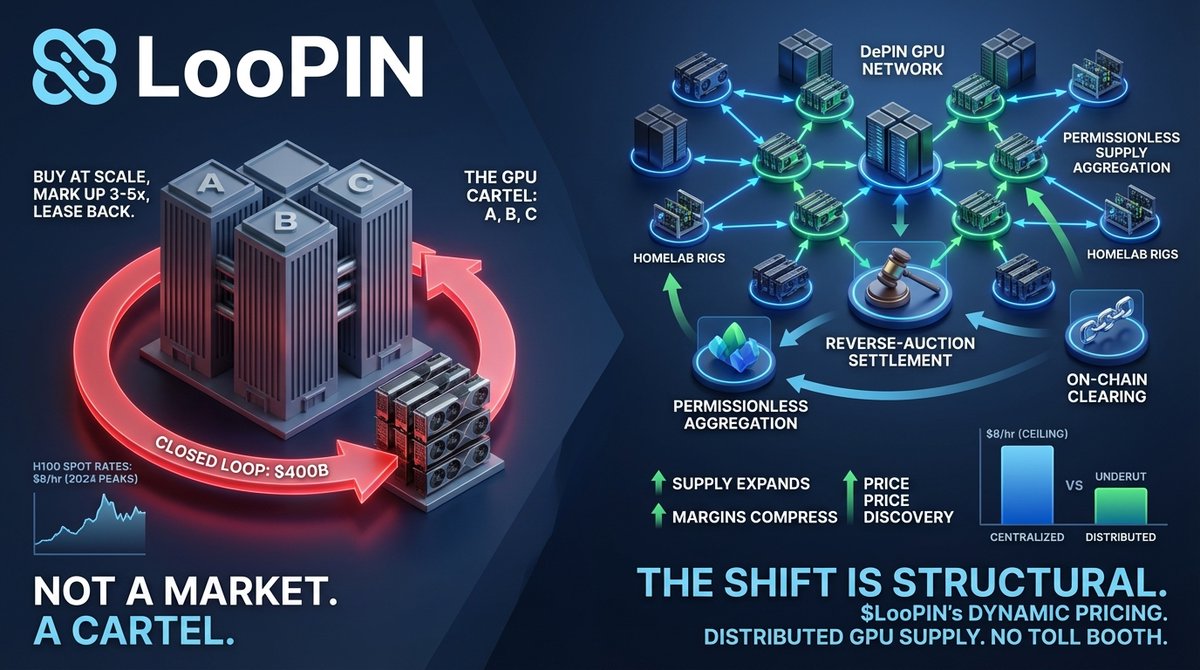

Three companies control the majority of global GPU supply. They buy Nvidia allocations at scale, mark up 3-5x, then lease capacity back to the same AI labs they directly compete against. The $400B closed loop isn't a market — it's a cartel wearing infrastructure clothes. H100 spot rates hit $8/hr at 2024 peaks. The price isn't set by supply and demand. It's set by whoever owns the datacenter.

DePIN inverts the architecture. Permissionless supply aggregation means any GPU — enterprise rack or homelab rig — enters the network without a vendor contract. Reverse-auction settlement replaces fixed list pricing. On-chain clearing eliminates the intermediary layer entirely. Supply expands. Margins compress. Workloads route to price discovery.

The centralized model's structural weakness is TCO math, not ideology. Distributed inference nodes undercut centralized egress at regional scale. When enterprises running persistent LLM workloads continuously absorb $8/hr compute ceilings, migration isn't a question of preference — it's an accounting decision. And it doesn't reverse.

This shift is structural, not cyclical. $LooPIN's dynamic pricing and miner-aligned incentive design exist for exactly this inflection: distributed GPU supply, accessible without the toll booth.
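The reverse-auction settlement described in this thread can be sketched roughly as follows. This is a minimal illustration only, not LooPIN's actual protocol: the `Ask` type, the `settle_reverse_auction` function, the pay-as-bid rule, the provider names, and every price are assumptions made up for the example. It shows the basic idea that suppliers quote what they will accept and a workload is filled from the cheapest quotes upward, subject to the buyer's price ceiling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ask:
    """A supplier's quote (hypothetical structure, not a LooPIN type)."""
    provider: str          # illustrative node identifier
    price_per_hour: float  # USD per GPU-hour the supplier will accept
    gpu_hours: float       # capacity the supplier is offering


def settle_reverse_auction(asks, gpu_hours_needed, max_price):
    """Fill a request from the cheapest asks first (pay-as-bid, assumed rule).

    Returns (fills, unfilled_hours), where fills is a list of
    (provider, hours, price_per_hour) tuples.
    """
    fills = []
    remaining = gpu_hours_needed
    for ask in sorted(asks, key=lambda a: a.price_per_hour):
        # Stop once the request is covered or quotes exceed the buyer's ceiling.
        if remaining <= 0 or ask.price_per_hour > max_price:
            break
        hours = min(ask.gpu_hours, remaining)
        fills.append((ask.provider, hours, ask.price_per_hour))
        remaining -= hours
    return fills, remaining


# Illustrative quotes only; none of these figures come from the posts.
asks = [
    Ask("homelab-a", 0.50, 10),
    Ask("rack-b", 1.25, 40),
    Ask("homelab-c", 0.75, 5),
    Ask("rack-d", 3.00, 100),
]
fills, unfilled = settle_reverse_auction(asks, gpu_hours_needed=30, max_price=2.00)
total_cost = sum(hours * price for _, hours, price in fills)
```

In this sketch each supplier is paid its own quote. A uniform clearing price (everyone paid the highest accepted quote) would be an equally plausible design, and the posts do not say which rule applies.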


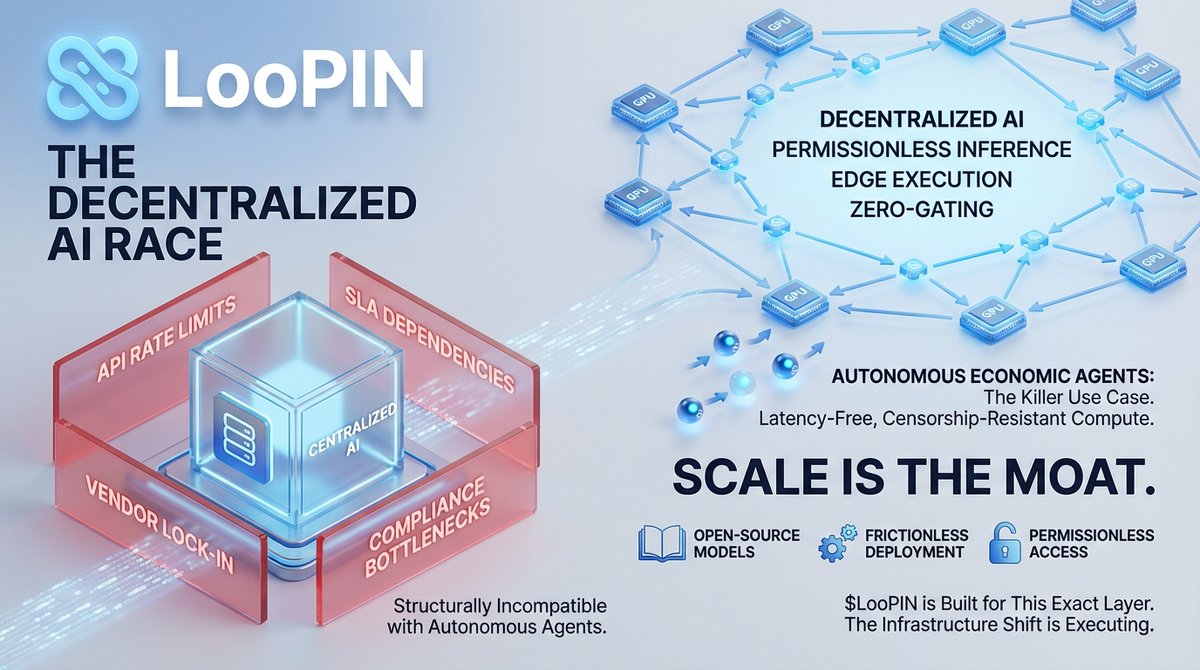

Autonomous economic agents are the killer use case. They don't call GPT-4 APIs. They need low-latency, censorship-resistant compute that runs continuously without throttling or permission gates. Centralized cloud architecture is structurally incompatible with that requirement.

Unit economics confirm the shift. Distributed GPU inference runs at a fraction of hyperscaler pricing. That gap isn't arbitrage — it compounds at agent scale. Scale isn't a feature. Scale is the moat.


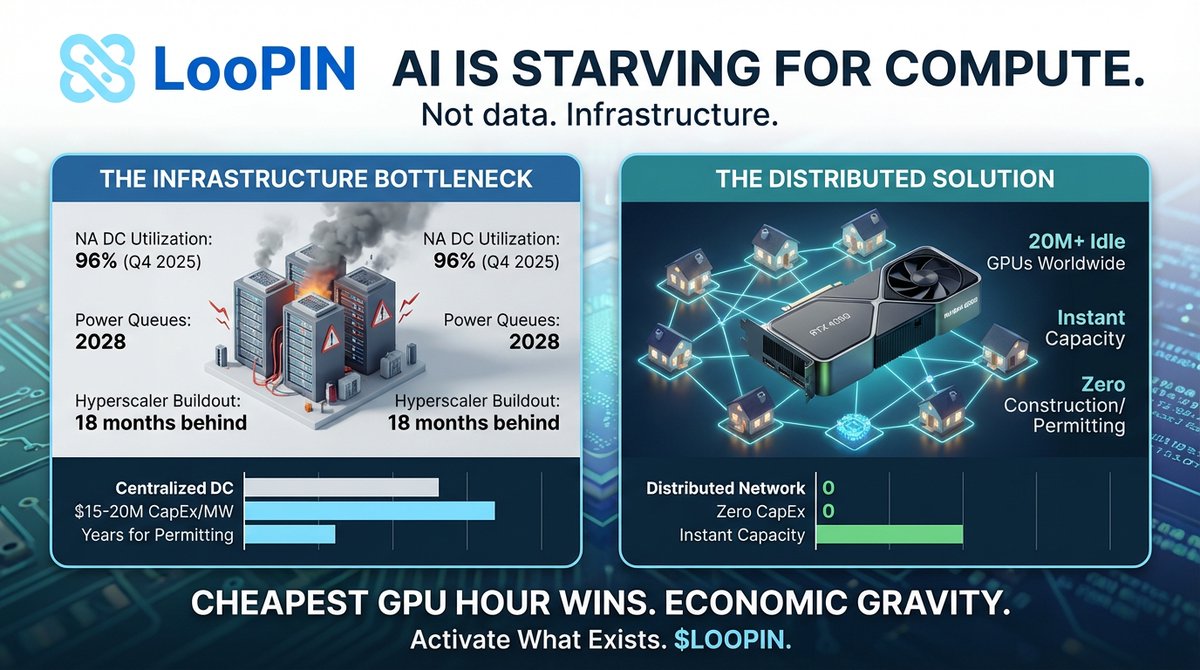

AI is starving. Not for data. For compute. North American data centers hit 96% utilization in Q4 2025. Power queues in Virginia stretch to 2028. Hyperscaler buildout is 18 months behind. The bottleneck was never silicon. It is infrastructure.

Over 20M high-performance GPUs sit idle in homes worldwide. RTX 4090s running wallpapers while AWS charges $32/hr for H100 with multi-month waitlists. Massive arbitrage gap hiding in plain sight.

Centralized DC expansion runs $15-20M per MW CapEx. Permitting takes years. Grid interconnection backlogged through 2028. Distributed networks skip all of it. Zero construction. Zero permitting. Instant capacity. Cost structures are not comparable.

Congress held hearings on AI energy threatening grid stability. The fix is not more concrete and copper. It is activating what exists. Every idle gaming rig is infrastructure waiting for a price signal.

Cheapest GPU hour wins. Always. Distributed compute is not ideology. It is economic gravity. $LooPIN


Centralized clouds pack thousands of tenants onto shared infrastructure. One compromised hypervisor can expose every tenant on that host. Post-quantum cryptography (PQC) alone cannot fix architectural single points of failure.

Distributed compute changes the math. Independent operators create defense in depth. The attack surface fragments across thousands of secured nodes instead of concentrating in mega-datacenters.


Now Render, Railway, Fly.io all race to capture the exodus.

But migrating from one centralized PaaS to another just resets the countdown.

VMware users watched Broadcom impose 10x price hikes post-acquisition.

Docker hit devs with 67-80% increases.

Builder.ai went bankrupt in 2025 -- clients lost access to their own code.

Centralized dependency means single-entity risk on pricing, features, and uptime.
