Luke Wright

10.9K posts


@lukewrightmain

Husband | Dad | @cellhasher | @LitecoinLabs | @Litecoin $BELLS

Hong Kong · Joined September 2018

2.3K Following · 12.2K Followers

@TruthTrumpPost @lukewrightmain RGB cellhasher with trump phones lmao


Would be cool to build a Hive OS type of setup for @EquiumEQM mining.

Set up your rig and the OS auto-optimizes your cards.


@dotkrueger @PeterSchiff But if iCloud users don't get magic links to log back in, is the db balance even theirs anymore?


@lukewrightmain @PeterSchiff balance is stored in db with email


Hi-ho, silver. Silver is ripping this morning. It's already up over $5, trading above $85. That raises its YTD gain to 18%, surpassing the NASDAQ's 13% YTD rise. Bitcoin is bringing up the rear with a YTD loss of 10.5%. schiffgold.com


@dotkrueger Nice! When do iOS users get iCloud working again? Is the balance lost?


@dotkrueger @PeterSchiff Did rpow ditch iOS users? iCloud doesn't receive magic links anymore. Is the balance gone?


@PeterSchiff Peter Schiff celebrating silver outperforming Bitcoin over a 4-month window is like someone bragging their fax machine beat the internet in Q1.


@dotkrueger Only Gmail now? Any idea what happens to the iOS users who use iCloud? Magic links no longer send to iCloud, though they did before, so all the RPOW they mined is now just sitting in accounts they can't log into? Or did you all sweep that into your accounts?


ideas / things to add to RPOW

- Solana --> RPOW wraps

- sorting gladiator by flip size

- favorites

- Gladiator-style trivia (almost done)

- multiplayer snake for RPOW

- solitaire for RPOW

- space invaders for RPOW

- mining other tokens, like "Zork"

- minesweeper

- jacks or better

let me know, I will tell devs


@dotkrueger @ayciwan So Gmail is the only working email domain? We Mac users used iCloud, and now iCloud doesn't send?


I am entering the gladiator arena on X. My code is 396517. Go to gladiator.rpow2.com to go head to head with me in 100% fair gladiator games. May the best man win.


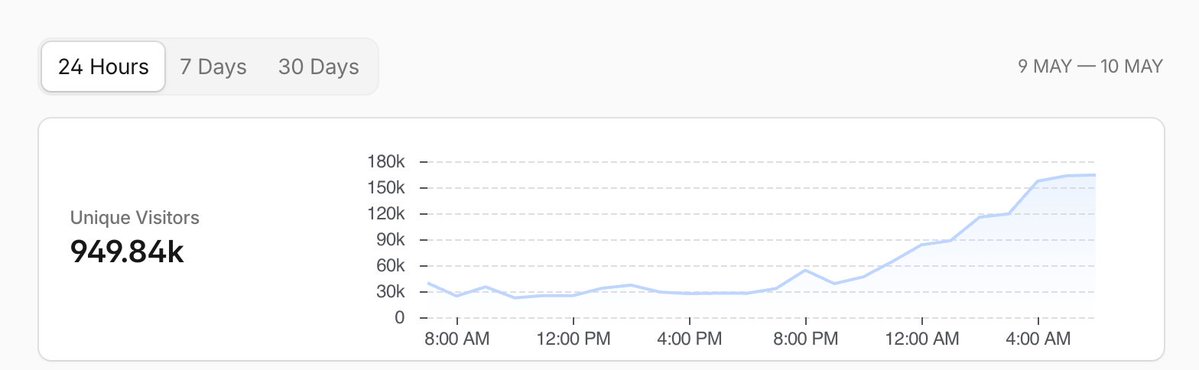

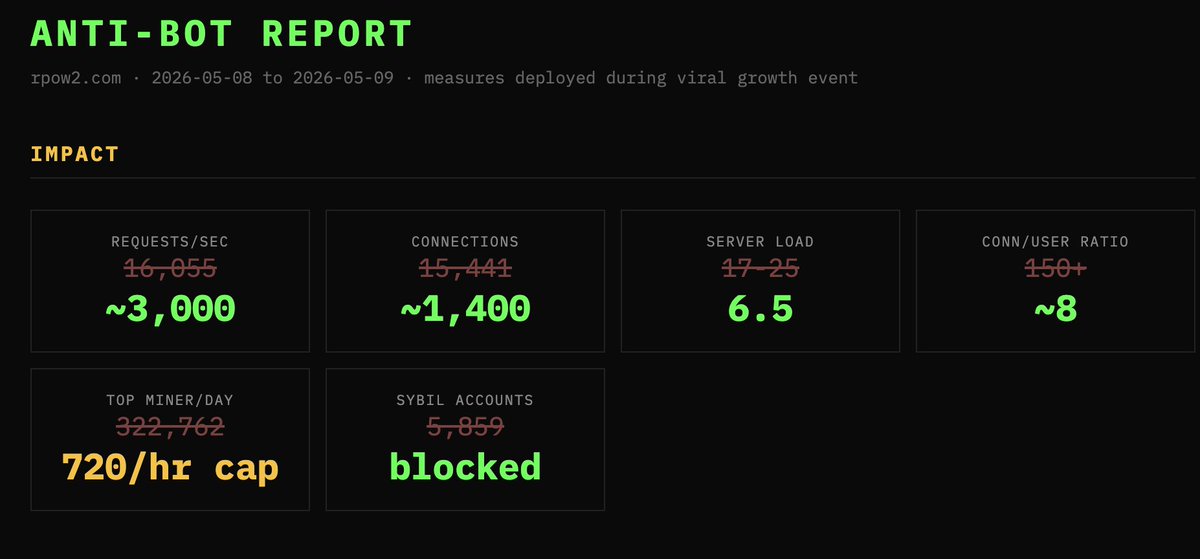

I've run servers for 20 years.

Never seen anything like this.

rpow2 went viral. 36K+ verified email signups... then the AI-powered bots came.

200K+ unique IPs.

60K req/sec.

GPU farms from China.

Sybil farms registering thousands of fake accounts.

Botnets spoofing Chrome across 2,100+ IPs.

Bots adapting in real-time - block one vector, they switch within minutes.

Chaos:

49-second response times.

86% of emails failing.

@dotkrueger tagged me for help.

...then 18 hours later...

Order restored:

16 anti-bot measures across 4 layers.

Response times down to 20ms.

Backend traffic cut 97%.

Real users mine normally, bots get 403'd.

This is modern warfare.


RPOW News

1. Massive performance increases.

2. Instead of difficulty going up we're having mining rewards go down for the last 10MM coins. So you can mine, but you just get less.

3. Implementing anti-GPU measures. It's not fair that people with phones can't mine.

4. Working on an optional RPOW --> SRPOW (Solana wrapped RPOW, or "Son of RPOW") coins.

18,200 users. Much faster than the clone.


I've fully implemented the ONLY live version of Hal Finney's "Reusable Proof of Work" (RPOW)

-- Try it here rpow2.com

✅ mine RPOW coins in your browser

✅ send RPOW coins to any email address
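
For readers unfamiliar with the idea behind mining in this style, here is a minimal hashcash-style proof-of-work sketch. It is an illustration only and makes no claim about how rpow2.com works internally: the use of SHA-256, the challenge string, the decimal nonce encoding, and the names `mine`, `verify`, and `leading_zero_bits` are all assumptions made for the example.

```python
import hashlib
from itertools import count


def leading_zero_bits(digest: bytes) -> int:
    """Count the zero bits at the start of a hash digest."""
    bits = 0
    for byte in digest:
        if byte == 0:
            bits += 8
            continue
        # bit_length() is how many bits the first non-zero byte occupies.
        bits += 8 - byte.bit_length()
        break
    return bits


def mine(challenge: bytes, difficulty_bits: int) -> int:
    """Try nonces in order until the hash clears the difficulty (hypothetical scheme)."""
    for nonce in count():
        digest = hashlib.sha256(challenge + str(nonce).encode()).digest()
        if leading_zero_bits(digest) >= difficulty_bits:
            return nonce


def verify(challenge: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Checking a token costs one hash, however long it took to find."""
    digest = hashlib.sha256(challenge + str(nonce).encode()).digest()
    return leading_zero_bits(digest) >= difficulty_bits
```

With a low setting such as `mine(b"alice@example.com", 12)`, the search needs a few thousand hashes on average, and each extra difficulty bit doubles the expected work.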


@Truthcoin @That1TrvlGuy Consider a Verus-style algo to support mobile CPUs? As the world goes increasingly mobile and mobile devices are in relatively everyone's hands, that could help many people get involved early on. It could still be sha256d as well.


BREAKING: New Bitcoin Fork

I am helping create a **new Bitcoin Hardfork** -- dropping this August, called "eCash".

- Your coins will split. For example, if you have 4.19 BTC, then you will get 4.19 eCash.

- You may sell your eCash -- or keep it. Or ignore it!

Vegas:

- Yes, I will be in Vegas next week.

- No, I won't mention this, on stage -- (that would be rude).

Our L1 Node...

- is a near-copy of Bitcoin Core.

- is Sha256d mined.

- forks via a one-time difficulty-reset -- to its minimum value. (So, mining will be crazy at first.)

- Yes, we will change the seed nodes, the name, the network magic, etc.
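
As a rough sketch of what "Sha256d mined" and a reset to minimum difficulty mean in practice: SHA256d is SHA-256 applied twice, and a header counts as valid work when its hash, read as a little-endian integer, is at or below the target. The constant below is Bitcoin's difficulty-1 target. Whether the fork resets to exactly this value is an assumption here, and the function names are illustrative.

```python
import hashlib

# Bitcoin's difficulty-1 target (compact form 0x1d00ffff).
# Assumption: the post does not say which minimum the fork will use.
MAX_TARGET = 0xFFFF << 208


def sha256d(data: bytes) -> bytes:
    """Double SHA-256: hash the input, then hash the 32-byte result."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def meets_target(header: bytes, target: int = MAX_TARGET) -> bool:
    """Valid proof of work when the hash, as a little-endian integer, is <= target."""
    return int.from_bytes(sha256d(header), "little") <= target
```

The higher the target, the more hashes qualify, which is why blocks would come quickly right after a reset until difficulty adjusts.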

Codewise, the L1 will remain compatible with Bitcoin Core:

- We will continue to merge their changes (even the bad ones).

- The L1 will activate BIP300/301 via CUSF (Core Untouched Soft Fork), so no lines of code will be changed on the L1.

- The activation client will be published periodically (link below).

- We will do several bug bounty contests this summer.

- The client will be frozen 30 days prior to the fork.

Yes, there will be Drivechains:

- We have 7 in development right now.

- Users can also submit their own.

- Drivechain is a vision of "competing L2s" -- this avoids the "dev capture" problem.

- These L2s are all Merged Mined. Miners automatically get free $.

- Our L2s are already capable of planetary scale, and onboarding 8 billion users.

- We also have a zCash-like L2, with strong privacy.

- Other L2s: Truthcoin (Prediction Markets), CoinShift (Decentralized Exchange), BitAssets (NFT etc), BitNames (Identity), Photon (Quantum Resistant).

Unlike BCH (the 2017 fork):

- There is no "Bitcoin" in the name. New name, new brand.

- You are getting advance warning (4 months).

- We are replaying all txns (at first). We will release a coin-splitter tool.

- This is a permanent & sustainable fix to Bitcoin's problems (instead of a 1 MB to 8 MB temporary fix).

- Back in 2017, the BTC tech stack was strong, and expectations for Lightning were strong. Today, it is the reverse.

Video to follow.


@gnoble79 A huge majority of Gen Z invests in Tesla as exposure to SpaceX. Obviously it’s not the same, but that’s where more of that 15x trading price vs their rev comes into play. 100%


Last night was the biggest disaster in the history of Tesla.

Let me walk you through what actually happened on that earnings call, because the headlines are doing you a disservice:

Elon Musk got on the call and admitted (his words) that Hardware 3 "simply does not have the capability to achieve unsupervised FSD."

He said he wished it were otherwise. He said the memory bandwidth is one-eighth of what Hardware 4 has. And that's the end of the conversation.

Approximately 4 million Tesla vehicles on the road right now have Hardware 3. Many of those owners paid $8,000 to $15,000 for Full Self-Driving capability based on Musk's repeated promises (going back to 2016) that the hardware was sufficient for full autonomy. As recently as 2022, Musk was publicly assuring owners that HW3 had the processing power to get it done.

BUT IT DIDN'T

Those promises are now officially broken.

The solution is a "discounted trade-in" toward a new car with Hardware 4.

Not a refund or a free upgrade...

A discount on buying ANOTHER Tesla.

Investor Ross Gerber said it too - all HW3 owners got screwed, and with roughly 285,000 FSD purchasers affected, the potential liability runs into the BILLIONS.

But that's not even the worst part.

Musk was asked if the current FSD v14.3 was ready for unsupervised deployment. He said yes. Then immediately walked it back and admitted Tesla has "major architectural improvements" in the pipeline that would significantly improve safety.

What he really means: the software isn't SAFE ENOUGH to deploy without a human watching. Full unsupervised FSD for consumer cars is pushed to Q4 2026. At the earliest... Maybe.

How many times has this deadline been pushed? I've lost count. And trust me, I've seen a lot of broken promises. But this one takes the cake.

Now let's talk about the numbers everyone is celebrating:

Tesla reported $22.4 billion in revenue and $0.41 in non-GAAP earnings. A "double beat." The stock popped 4% after hours. Victory, right?

WRONG

Dig into the actual filing:

The number one driver of operating income improvement wasn't cost reductions, wasn't volume growth, wasn't FSD revenue. It was - and Tesla listed this FIRST in their own shareholder letter - "one-time benefits related to warranty and tariffs."

They released warranty reserves. They booked tariff refund windfalls. They stretched supplier payments by 10 days. They took on billions in new debt. Then they presented everything through non-GAAP metrics that strip out over $1 billion in stock-based compensation.

GAAP net income was $477 million on $22.4 billion in revenue. That's a 2.1% net margin. On a $1.4 trillion market cap.

Let me put that in perspective:

3.75 billion shares outstanding. Annualize the Q1 GAAP profit and you get roughly $1.9 billion. That's a trailing P/E ratio north of 700. Use the adjusted number - strip out stock comp, which is a REAL cost to shareholders through dilution - and you're still at around 250x earnings.
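
The arithmetic in the last few paragraphs can be checked with a short sketch. Every input is a figure quoted in the post and is not independently verified. The adjusted line assumes "the adjusted number" means the $0.41 non-GAAP EPS times the quoted share count, which the post does not spell out.

```python
# Inputs as quoted in the post (USD).
revenue = 22.4e9          # Q1 revenue
gaap_net_income = 477e6   # Q1 GAAP net income
market_cap = 1.4e12       # quoted market cap
shares = 3.75e9           # quoted shares outstanding
non_gaap_eps = 0.41       # Q1 non-GAAP earnings per share

# GAAP view: margin, then a simple 4x annualization of one quarter.
net_margin = gaap_net_income / revenue
annualized_gaap = gaap_net_income * 4
gaap_pe = market_cap / annualized_gaap

# Adjusted view (assumption: non-GAAP EPS x shares, annualized the same way).
annualized_adjusted = non_gaap_eps * shares * 4
adjusted_pe = market_cap / annualized_adjusted

print(f"net margin:      {net_margin:.1%}")
print(f"annualized GAAP: ${annualized_gaap / 1e9:.2f}B")
print(f"GAAP multiple:   {gaap_pe:.0f}x")
print(f"adj. multiple:   {adjusted_pe:.0f}x")
```

On these inputs the GAAP multiple lands a little above 730x, consistent with "north of 700". The adjusted multiple comes out nearer 230x than 250x, so the post's figure likely rests on slightly different inputs. Multiplying one quarter by four gives a run-rate multiple, not a strict trailing twelve-month one.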

All of this is extremely bad, but I didn't even talk about the CAPEX BOMB yet...

3 months ago, Tesla guided to "over $20 billion" in 2026 capital expenditure. Last night they raised it to over $25 billion. A $5 billion increase in a single quarter. That's 3x their historical annual capex run rate - $8.5 billion in 2025, $11.3 billion in 2024. The CFO confirmed on the call that Tesla expects NEGATIVE free cash flow for the rest of the year.

So you have a company generating roughly $6 billion in annual free cash flow on a good year, and they're about to spend $25 billion.

The math doesn't work.

They will almost certainly need to issue equity. Which means dilution. Which means the $1.9 billion in annual earnings gets spread across even MORE shares.

The core auto business is literally deteriorating in real time:

Tesla delivered 358,000 vehicles in Q1 (missed estimates again).

They produced 408,000. That's 50,000 cars sitting on lots that nobody bought.

Inventory days jumped from 10 to 27 in just a few quarters. California (their most important US market) saw registrations crash 24% year over year.

Their market share in the state fell from 9.2% to 7.7%. That's on top of a Q1 2025 that was ALREADY weak from Model Y retooling. They're declining off a decline.

And here's what really kills the bull case...

The entire valuation rests on robotaxis, Optimus robots, and autonomy. So let's put numbers on it:

Waymo - the actual leader in autonomous driving with 15 million completed rides in 2025 alone, over 127 million autonomous miles driven, operating commercially across 6 US cities with plans to expand to 20 more - just raised $16 billion at a $126 billion valuation.

That's the market's verdict on what the LEADING robotaxi company is worth. $126 billion.

And Waymo is YEARS ahead of Tesla in actual deployment.

Tesla has 3.75 billion shares outstanding. So even if you assign $126 billion in robotaxi value (giving Tesla full credit for matching Waymo despite being nowhere close) that's $33 a share. Add the auto business at generous auto-industry multiples, maybe $20 a share. Throw in energy storage and services, $10-15.

Sum of the parts gets you to roughly $65-70 a share if you're feeling generous. Maybe $50 if you're not.
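
The sum-of-the-parts estimate above works out as follows. The per-share values for the auto and energy segments are the post's own round numbers, taken as given.

```python
# Inputs as quoted in the post (USD).
shares = 3.75e9                        # shares outstanding
robotaxi_value = 126e9                 # Waymo's valuation, credited in full to Tesla
auto_per_share = 20.0                  # post's estimate for the auto business
energy_low, energy_high = 10.0, 15.0   # post's range for energy storage and services

robotaxi_per_share = robotaxi_value / shares
total_low = robotaxi_per_share + auto_per_share + energy_low
total_high = robotaxi_per_share + auto_per_share + energy_high

print(f"robotaxi per share: ${robotaxi_per_share:.2f}")
print(f"sum of the parts:   ${total_low:.2f} to ${total_high:.2f}")
```

The robotaxi piece comes to $33.60 a share, and the total falls between about $64 and $69, in line with the "roughly $65-70" stated above.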

The stock is $387.

So what exactly are you paying for?

You're paying for a STORY. You're paying for PROMISES that keep getting pushed back, technology that keeps falling short, and a business plan that requires spending $25 billion a year while the core product sells fewer units at declining margins in a market where California sales just fell 24% and the federal EV tax credit is gone.

I managed the number one mutual fund in America. I founded two billion-dollar hedge funds. I've been doing this since 1981.

And I am telling you:

Tesla at $387 is one of the most egregious mispricings I have seen in my entire career.

THE CRASH WILL BE EPIC


Luke Wright reposted

Looking to hire someone full-time to help with chasing this year

Mid to late April through June.

Filming, livestreaming, navigation, and helping keep everything running smoothly

Paid role — must be okay with relocating to Minnesota for the season

More details at the link below! Apply here 👇

forms.gle/DeK1JcRtH7qqQh…
