Pinned Tweet

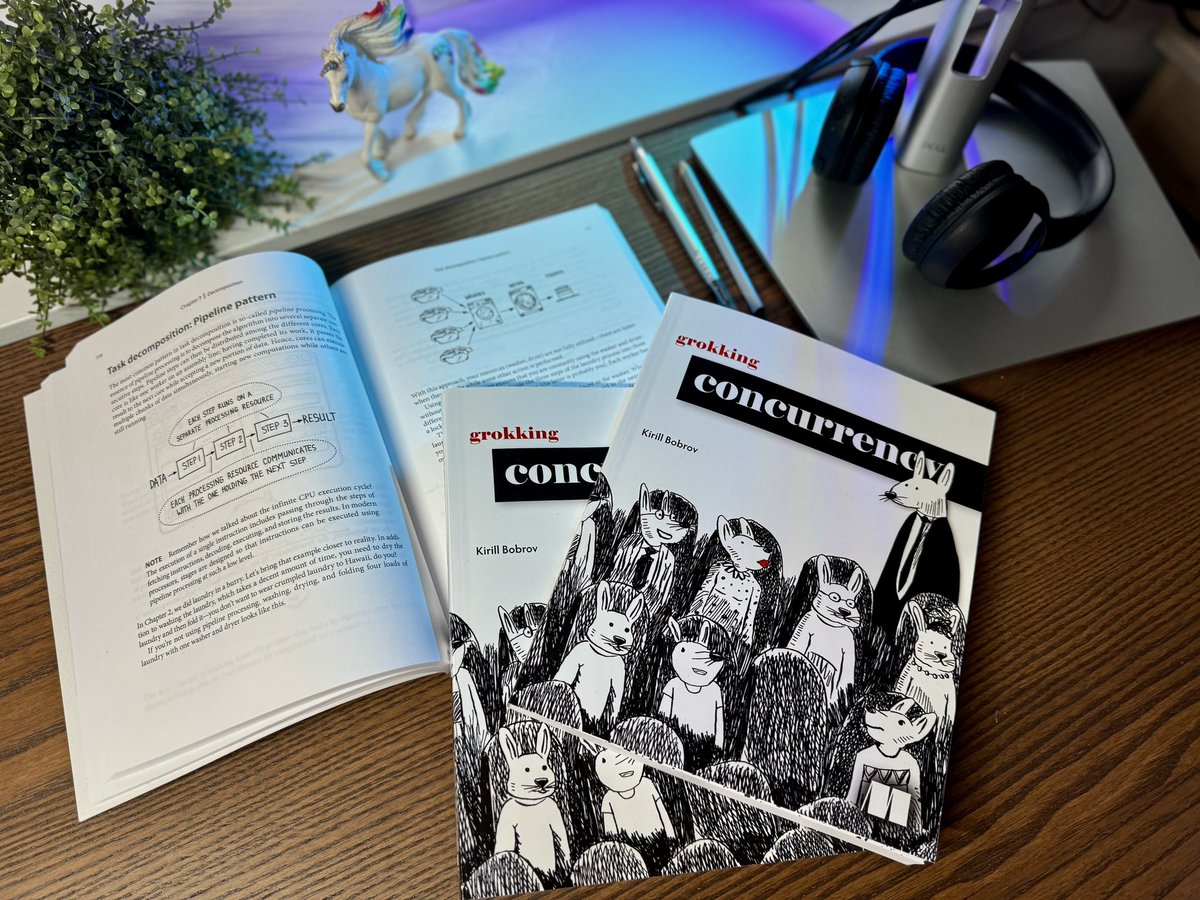

Hello wonderful people!

I’m thrilled to announce that my new book, “Grokking Concurrency,” has officially hit the shelves!

You can find it here: manning.com/books/grokking…

I could really use your support in spreading the word!
