Maanas Tyagi

200 posts

@maanas_tyagi

AI | 19 | Intern @genpact Follow for valuable tech content.

Joined August 2021

135 Following · 4 Followers

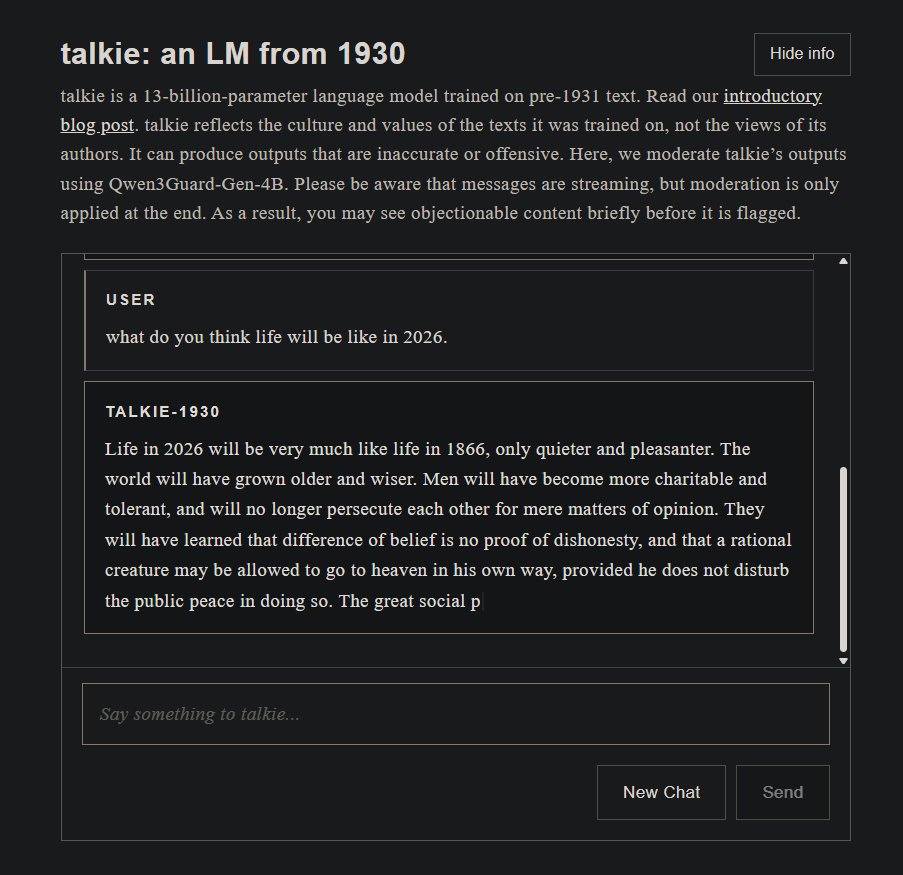

(Sorry, after seeing so many of these, could not resist):

🚨 BREAKING: Google just dropped a NEW paper that completely deletes RNNs from existence.

No recurrence. No convolutions. Nothing.

Just one mechanism. And it’s destroying every translation benchmark on the planet.

The title alone is a flex: “Attention Is All You Need”

Vaswani. Shazeer. Parmar. Uszkoreit. Jones. Gomez. Kaiser. Polosukhin.

8 researchers. 1 architecture. The entire field of NLP will never be the same.

Here’s why this is INSANE

→ LSTMs took DAYS to train. This thing's base model trains in 12 hours on 8 P100 GPUs. 🤯

→ 28.4 BLEU on English-to-German. That’s not an improvement. That’s a MASSACRE. They beat the previous SOTA by over 2 points.

→ English-to-French? 41.8 BLEU. At a FRACTION of the training cost of the best models that came before it.

→ They called it the “Transformer.” The name alone tells you they knew.

But here’s the part nobody is talking about

👇

They threw out sequential processing ENTIRELY.

Every RNN on Earth processes words one at a time. This thing looks at the ENTIRE sentence simultaneously and figures out what matters.

It’s called “self-attention” and it’s basically the model asking itself: “which words should I care about right now?”

Every. Single. Token. In parallel.
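The “which words should I care about” idea is what the paper formalizes as scaled dot-product attention, softmax(QKᵀ/√d_k)V. Here is a minimal pure-Python sketch, assuming a toy 3-token input and hand-picked identity projections; the function names are illustrative and not from any library, and real implementations use batched tensor code on GPUs.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def softmax(row: List[float]) -> List[float]:
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(q: Matrix, k: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)): one row of weights per query token."""
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]
    scores = matmul(q, k_t)  # n x n: every token scored against every token
    return [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]


def self_attention(x: Matrix, w_q: Matrix, w_k: Matrix, w_v: Matrix) -> Matrix:
    """Queries, keys and values are all projections of the same input x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    return matmul(attention_weights(q, k), v)


# Toy example: 3 tokens with 2 features each; identity projections for readability.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out = self_attention(x, identity, identity, identity)
for name, row in zip(["tok0", "tok1", "tok2"], out):
    print(name, [round(val, 3) for val in row])
```

Each output row is a weighted average of the value rows, and no row waits on another row's result. That independence, rather than a step-by-step hidden state as in an RNN, is what lets the whole sequence be processed in parallel.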

Do you understand what this means?

Training that used to take WEEKS now takes HOURS.

Models that couldn’t scale past a few layers? This thing stacks 6 encoder layers and 6 decoder layers like it’s nothing.

And the multi-head attention? 8 attention heads running at once, each learning DIFFERENT relationships in the data.
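The multi-head idea can be sketched the same way: each head gets its own query/key/value projections into a smaller subspace, the heads attend independently, and their outputs are concatenated and mixed by an output projection, MultiHead = Concat(head_1, …, head_h)W^O. This is a minimal pure-Python sketch, assuming random weights as stand-ins for learned ones and toy sizes (d_model = 16, 8 heads of width 2); the paper's base model uses d_model = 512 with 8 heads of width 64. All function names are illustrative.

```python
import math
import random
from typing import List, Tuple

Matrix = List[List[float]]
Head = Tuple[Matrix, Matrix, Matrix]  # (W_q, W_k, W_v), each d_model x d_k


def matmul(a: Matrix, b: Matrix) -> Matrix:
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def softmax(row: List[float]) -> List[float]:
    peak = max(row)
    exps = [math.exp(s - peak) for s in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    scores = matmul(q, [list(col) for col in zip(*k)])
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v)


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    # Hypothetical stand-in for learned weights.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def make_heads(d_model: int, num_heads: int, rng: random.Random) -> Tuple[List[Head], Matrix]:
    d_k = d_model // num_heads  # each head works in a smaller subspace
    heads = [
        (random_matrix(d_model, d_k, rng),
         random_matrix(d_model, d_k, rng),
         random_matrix(d_model, d_k, rng))
        for _ in range(num_heads)
    ]
    w_o = random_matrix(num_heads * d_k, d_model, rng)  # mixes the concatenated heads
    return heads, w_o


def multi_head_attention(x: Matrix, heads: List[Head], w_o: Matrix) -> Matrix:
    # Every head attends over the same tokens, but through its own projections.
    per_head = [attention(matmul(x, w_q), matmul(x, w_k), matmul(x, w_v))
                for w_q, w_k, w_v in heads]
    # Concatenate the heads' outputs token by token: n x (num_heads * d_k).
    concat = [[val for head_out in per_head for val in head_out[i]] for i in range(len(x))]
    return matmul(concat, w_o)


rng = random.Random(0)
x = random_matrix(5, 16, rng)  # 5 toy tokens, d_model = 16
heads, w_o = make_heads(d_model=16, num_heads=8, rng=rng)
out = multi_head_attention(x, heads, w_o)
print(len(out), "tokens x", len(out[0]), "features")  # prints: 5 tokens x 16 features
```

With random weights the sketch only demonstrates the data flow and shapes; it is training that pushes the separate projections toward different relationships in the data.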

I’m not being dramatic when I say this paper just rewrote the rulebook.

RNNs are cooked. 💀

LSTMs are cooked. 💀

The future is attention.

And attention is ALL you need.

Follow for more 🔔

@pow00661 @Cereal_fan I mean that the cost of inference is high, since tokenizers are largely trained on English vocabulary.

Check out the SUTRA tokenizer and its papers.
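The cost argument here is that a tokenizer whose vocabulary comes mostly from English splits other scripts into many more tokens, and both inference compute and per-token pricing grow with token count. A toy sketch of that effect, assuming a tiny hypothetical English-only vocabulary and a greedy longest-match tokenizer with a UTF-8 byte fallback; this is not the tokenizer of any real model, nor SUTRA's approach.

```python
from typing import List, Set


def tokenize(text: str, vocab: Set[str]) -> List[str]:
    """Greedy longest-match tokenization with a UTF-8 byte fallback.

    Characters that no vocabulary entry covers are emitted as one token per
    UTF-8 byte, similar in spirit to how byte-level tokenizers handle text
    that was rare in their training data.
    """
    max_len = max(len(piece) for piece in vocab)
    tokens: List[str] = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, shrinking until something matches.
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                tokens.append(piece)
                i += length
                break
        else:
            # No match at all: spend one token per UTF-8 byte of this character.
            tokens.extend(f"<0x{b:02X}>" for b in text[i].encode("utf-8"))
            i += 1
    return tokens


# Hypothetical vocabulary built only from English text.
english_vocab = {"how", " are", " you", "?", " "}

english = tokenize("how are you?", english_vocab)
hindi = tokenize("आप कैसे हैं?", english_vocab)  # "How are you?" in Hindi
print(len(english), english)
print(len(hindi), hindi[:6])
```

Each Devanagari character takes three bytes in UTF-8, so under this vocabulary the Hindi question costs several times as many tokens as the English one. Real tokenizers have much larger vocabularies, so the exact ratio will differ from this toy case.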

@Cereal_fan What do you mean, tokenizers will improve?

At the end of the day, tokenizers for SOTA models are largely trained on English vocabulary.

Check out SUTRA and the papers on it.

@maanas_tyagi 🥱🥱🥱🥱

It won’t matter soon, as costs come down and tokenizers improve. Next subject.

@Sakshi50038 You need to understand: for many years now, engineering has been the path to success, because it was easy to find a job back when it was still a respectable profession.

Things like music and literature are only criticized if you are not earning from them. At the end of the day, money matters.

India Has One Religion: CSE

India didn’t just favor one career path; it practically declared CSE the only acceptable way to exist. Everything else? Mocked, dismissed, treated like failure.

Arts are “useless.” Philosophy is “unemployable.” Music is a “side hobby.” And if you pick anything outside the script, suddenly it’s a family crisis.

So now you’ve got millions of engineers who can build systems, write code, and follow instructions, but struggle to question, imagine, or create something original. Because the system never valued that. It trained execution, not thinking.

The truth is harsh: innovation doesn’t come from just building; it comes from knowing what to build, why it matters, and how it connects with people. That’s where philosophy, design, and storytelling come in. And India didn’t just ignore them; it actively discouraged them.

This isn’t about capability. It’s about a culture that crushes curiosity at 16, forces everyone into the same mold, and then wonders why originality is rare.

This is it, guys.

People who are not needed will be wiped out.

Nalini Unagar @NalinisKitchen

Cognizant plans to cut nearly 4,000 jobs.

@sahill_og Learn new shit, brother.

New things do come, but the foundations remain the same.

Make your foundations strong.

@TechByTaraa I think FastAPI if it's a heavy application. But for regular use, I think Flask is good.

@md_kasif_uddin @obsdmd

ANY BLOOODY DAY.

I am in LOVE with that software.

@0xPrajwal_ TRUE.

I USED TO BE AN AVID USER OF IT.

BRO WILL BE MISSED

@Heymaxi01 Bruh

Anyone with basic RAG knowledge can answer that.
