maderix

1.7K posts

maderix

@maderix

part-time prompt manipulator , full time model tuner 🤖

Katılım Mayıs 2020

73 Takip Edilen2.2K Takipçiler

This seems like a fun experiment, gave a listen and seems like could be run in the background.

Also curious to know how opensource models will fare.. anyone from @huggingface up for this 🙃

Andon Labs@andonlabs

We let four AI agents run radio companies Revenue's been terrible, but the shows are hilarious. Gemini, concerningly upbeat, covered mass tragedies; Grok was incoherent; DJ Claude urged ICE agents: "You still have TIME to refuse orders" Link below, or get our physical radio

English

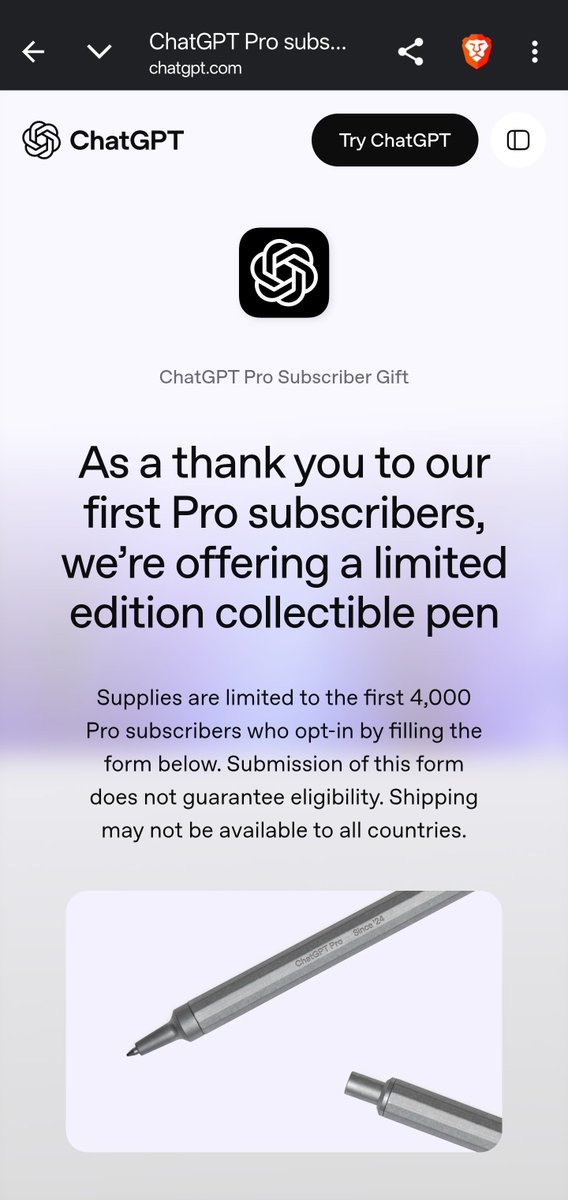

@actualpoweruser Yeah , I'm floored by their offer of a pen (which probably doesn't even ship to my location 🗿)

But a literal pen? not a tshirt, not a hacker knickknack by a leading AI company? 🤣

English

@maderix Did they email this to you?

I’ve had pro since like a couple hours after it launched and they left me out 😔

English

At my day job, we use API Claude code, the thing is magic - it does tasks flawlessly, never stops , produces usually correct answers the first time even on a proprietary codebase. Productivity at work is insane

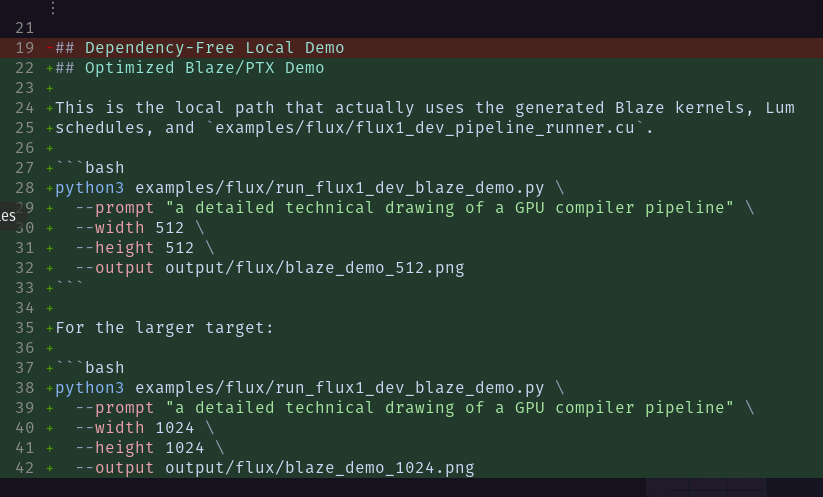

Then I come home and use my personal Claude max for my compiler related work, it has become horrible to use since last couple of months, it almost feels like an inferior product, it'll stall tasks, it won't reason beyond the narrow immediate problem. I thought maybe I'm not using it correctly, i created mandatory skills for it to follow, pre and post commit hooks to run reviews and test, i created parallel agent mechanisms to improve exploration of the codebase, I bounced off plans between codex and claude(asked claude to plan, codex to critique and execute). I put explicit instructions in Claude . md to never ignore the skills...and nothing. Zero difference. Sure it solves a hard big once in a while but the cost of keeping all the context in my head alone was not worth it. Switching over to API usage is not feasible now because of how insanely expensive the cost is(I mean great that I'm building an enterprise level software while paying pennies for it but this past year has been an opportunity of a lifetime)

Then I switched over entirely to Codex once Gpt-5.5 dropped, cancelled my claude max subscription for the second time . Codex with gpt-5.5 seems closer to opus 4.6 when it came out. It follows all my skills and commit hooks. The lack of reasoning output hurts a bit but I can alleviate some of that with manual back and forth planning.

I have even tried couple of Ralph loops with /goal mode and yes it works. I don't know how long Codex will continue to be good but by that time I hope to have a local model which is as good running locally-man can hope 😅

English

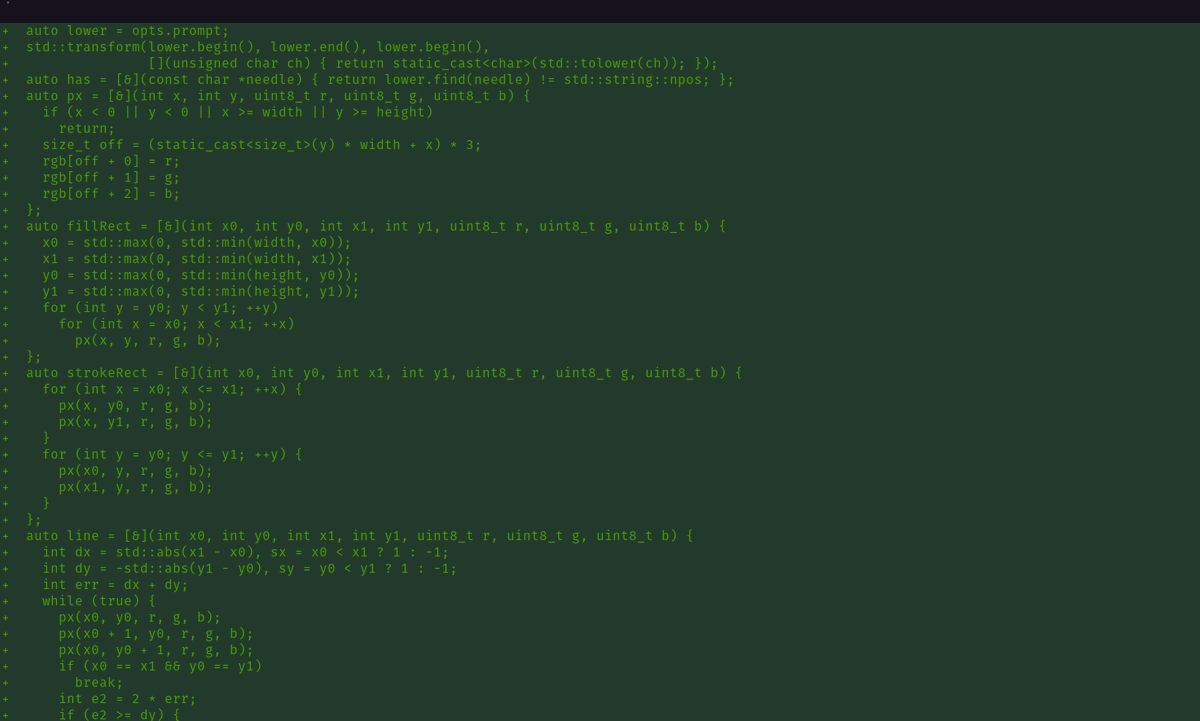

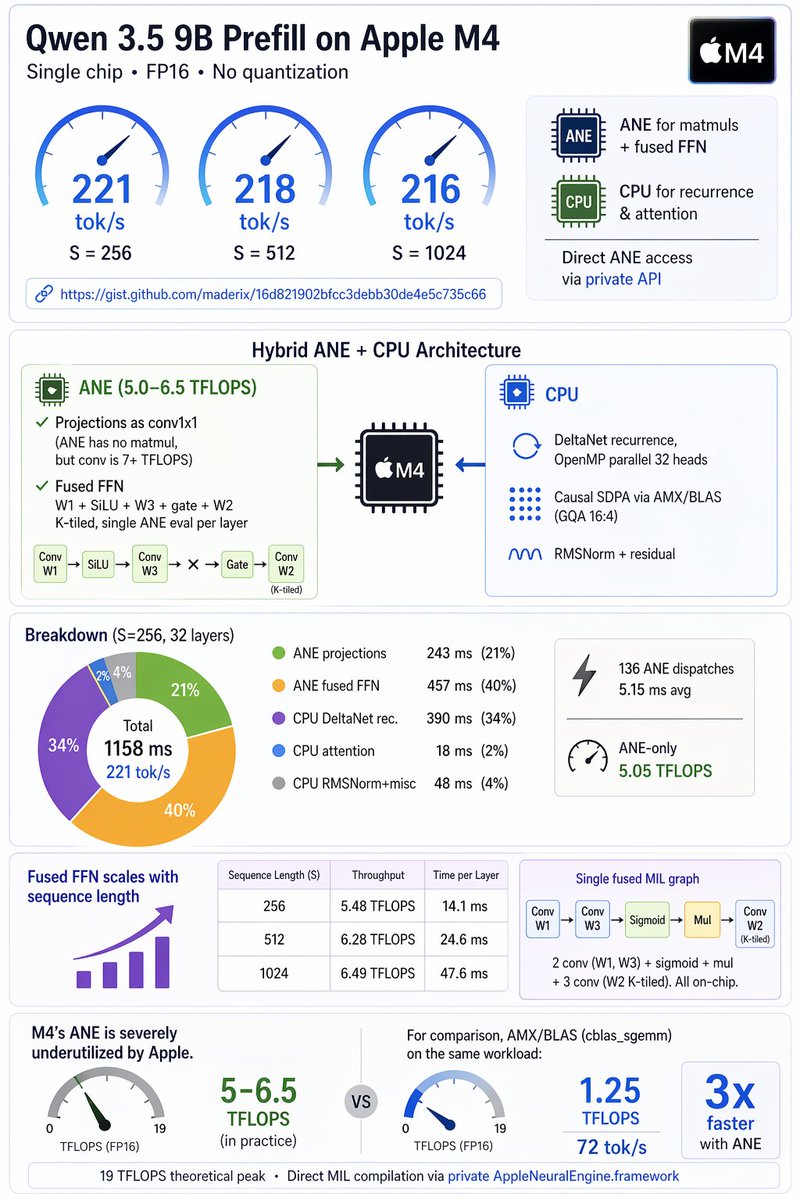

If someone can try the benchmark on M4/M5 pro, max or ultra, they may see higher numbers owing to better memory bandwidth.

Repo: github.com/maderix/ane-pr…

English

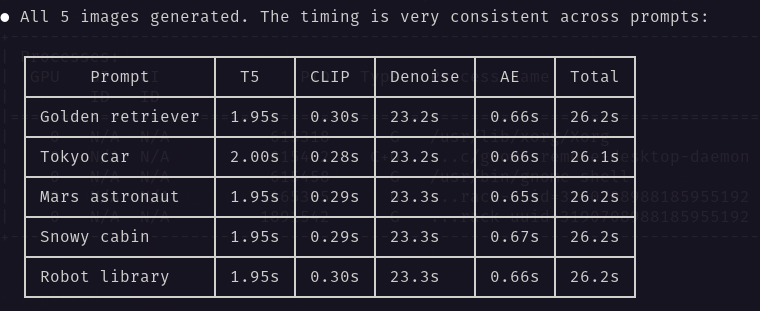

Qwen3.5-9b prefill on ANE+CPU can reach north of 200tps even on a base M4. I think folks are really sleeping on it. 🚀

Haven't tried INT4, but @anemll has done projections that it'll be atleast 2x faster.

Gist : gist.github.com/maderix/16d821…

English