Manaal Faruqui

3.7K posts

@manaalfar

Senior Staff Research Scientist @Google Bard. Love eating, movies, travel and politics. Spread love, not war.

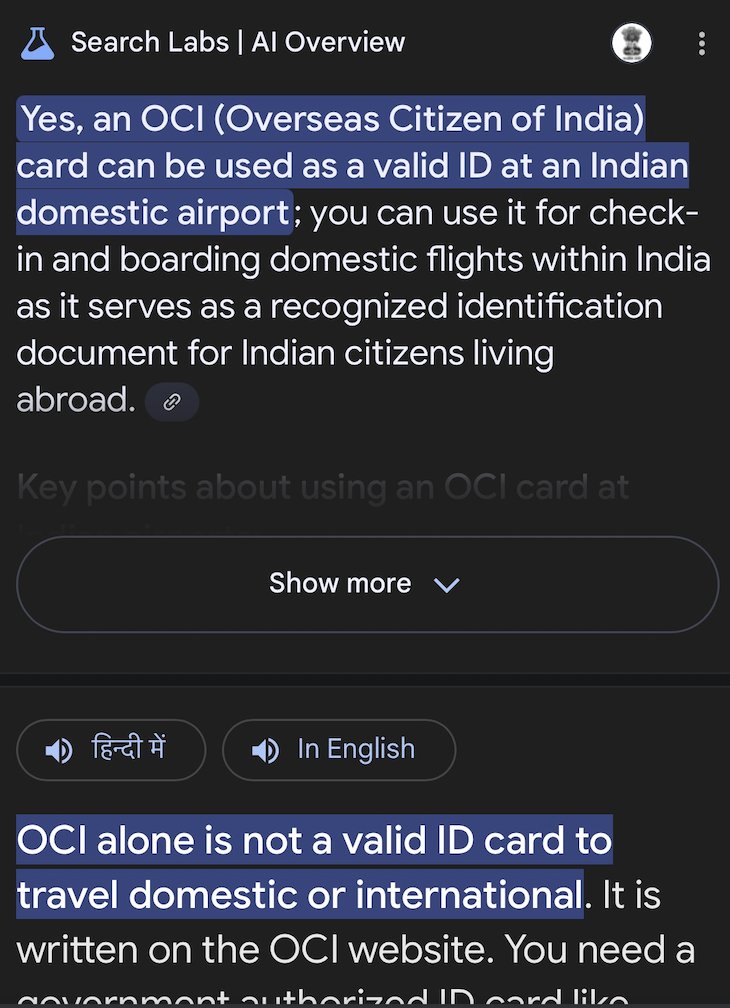

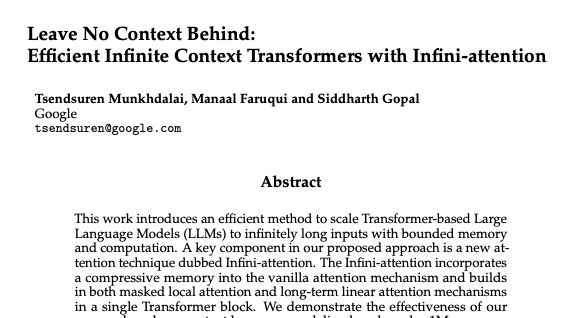

Exciting News from Chatbot Arena! @GoogleDeepMind's new Gemini 1.5 Pro (Experimental 0801) has been tested in Arena for the past week, gathering over 12K community votes. For the first time, Google Gemini has claimed the #1 spot, surpassing GPT-4o/Claude-3.5 with an impressive score of 1300 (!), and also achieving #1 on our Vision Leaderboard. Gemini 1.5 Pro (0801) excels in multi-lingual tasks and delivers robust performance in technical areas like Math, Hard Prompts, and Coding. Huge congrats to @GoogleDeepMind on this remarkable milestone!

Gemini (0801) Category Rankings:
- Overall: #1
- Math: #1-3
- Instruction-Following: #1-2
- Coding: #3-5
- Hard Prompts (English): #2-5

Come try the model and let us know your feedback! More analysis below👇

@vkhosla @realDonaldTrump @GovWhitmer @GovernorShapiro Come on, Vinod. Trump/Vance LFG!!

🔥Breaking News from Arena: Google's Bard has just made a stunning leap, surpassing GPT-4 to the SECOND SPOT on the leaderboard! Big congrats to @Google for the remarkable achievement! The race is heating up like never before! Super excited to see what's next for Bard + Gemini Ultra release.

Returning to transparency, I see that they point to MMMU, which was published on arXiv (not peer reviewed) on November 27, 2023. Google must have had early access to this work, which I suspect means that Google funded it, but the paper doesn't acknowledge any funding source. /12