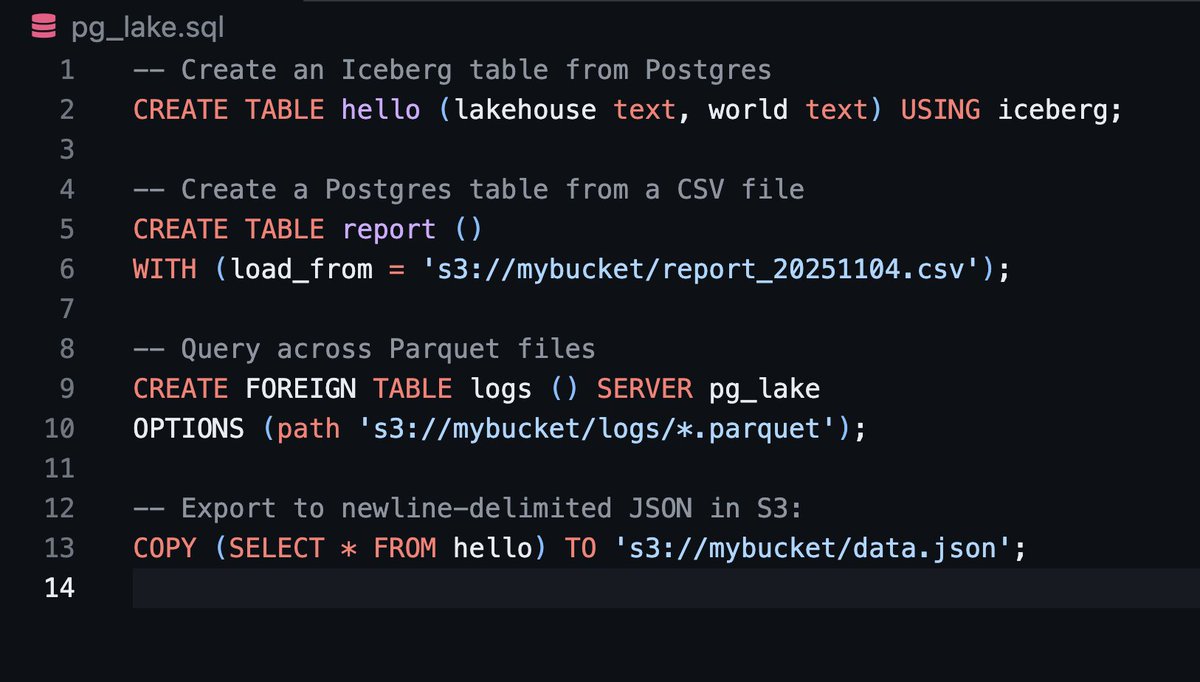

Announcing Crunchy Data Warehouse! A next-generation Postgres-native data warehouse. Full Iceberg support for fast analytical queries and transactions, built on unmodified Postgres to support the features and ecosystem you love. crunchydata.com/blog/crunchy-d…