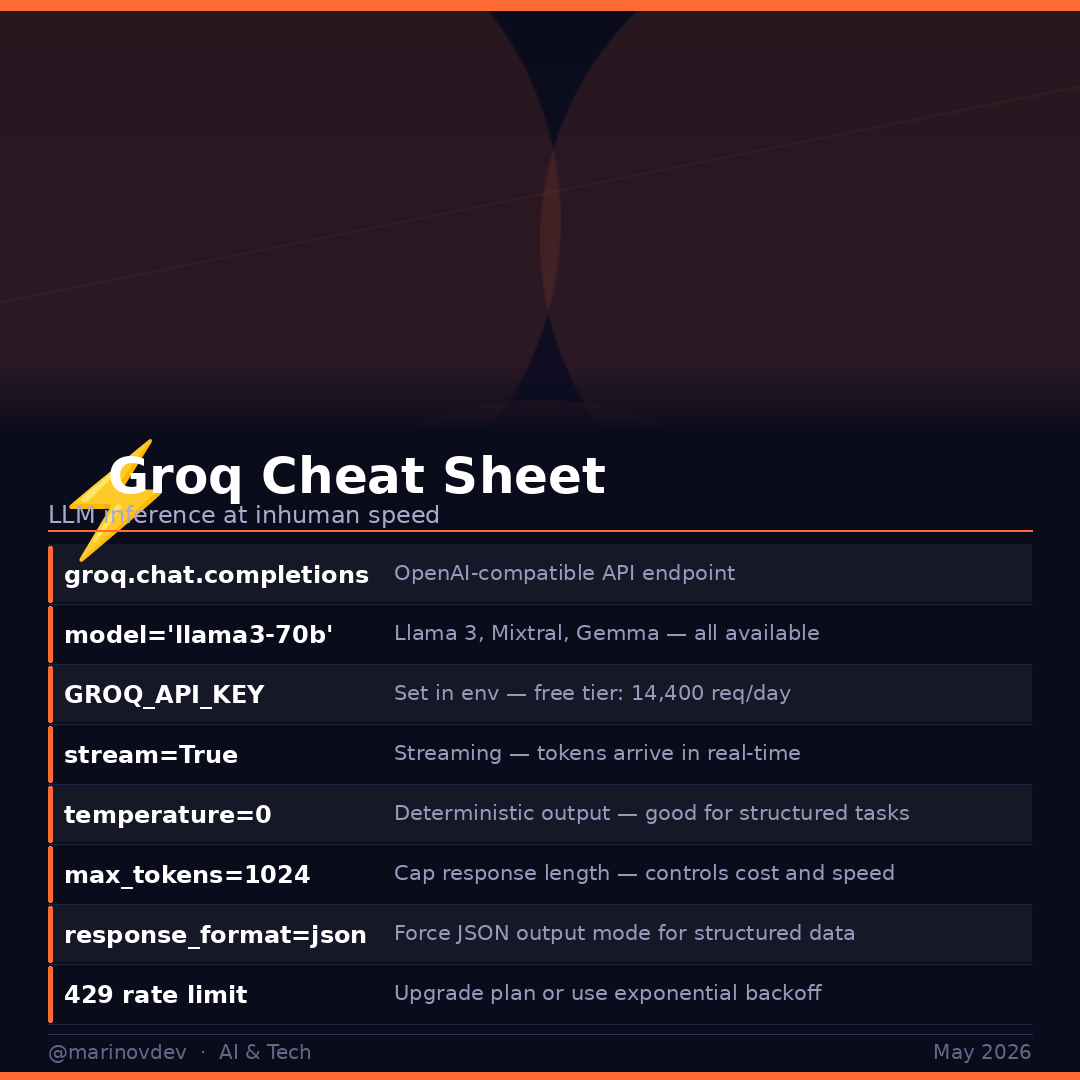

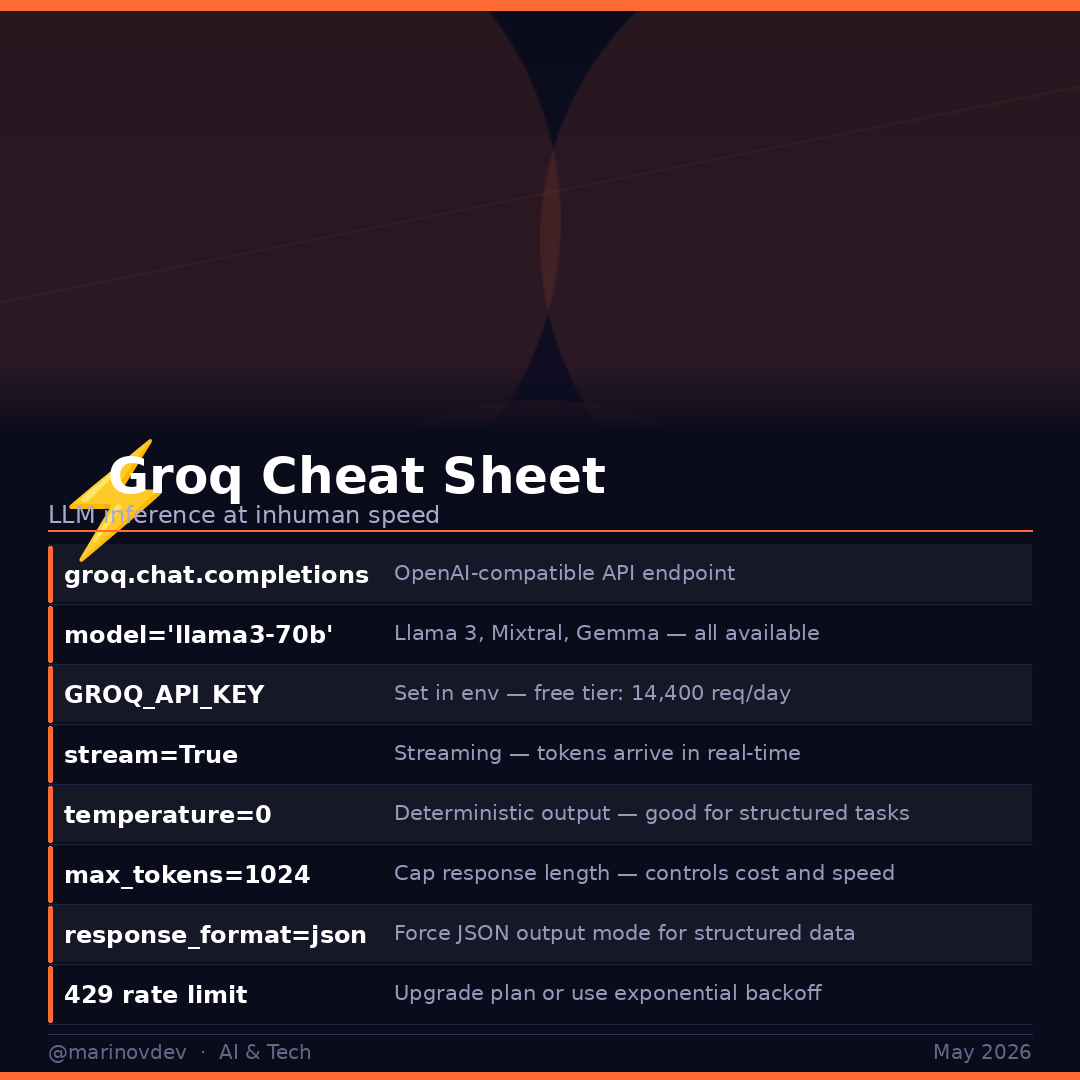

Groq cheat sheet — LLM inference so fast it feels local ⚡

700 tokens per second on Llama 3 70B.

OpenAI-compatible API. Free tier included.

Drop-in replacement for OpenAI in 2 lines.
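A rough sketch of what the "2 lines" swap could look like with the official `openai` Python SDK: point `base_url` at Groq's OpenAI-compatible endpoint and pass a Groq key. The helper names (`groq_client_kwargs`, `ask`), the `GROQ_API_KEY` environment variable, and the model ID are assumptions for illustration, not from the post; check Groq's current model list before relying on the ID.

```python
import os

# Groq's OpenAI-compatible endpoint.
GROQ_BASE_URL = "https://api.groq.com/openai/v1"


def groq_client_kwargs(api_key=None):
    """Return the two settings that differ from a stock OpenAI client.

    Hypothetical helper: falls back to the GROQ_API_KEY environment
    variable (an assumed name) when no key is passed in.
    """
    key = api_key if api_key is not None else os.environ["GROQ_API_KEY"]
    return {"base_url": GROQ_BASE_URL, "api_key": key}


def ask(prompt, model="llama-3.3-70b-versatile"):
    """Send one chat message to Groq via the OpenAI SDK.

    The model ID is an assumption; substitute whichever model your
    account lists. Requires `pip install openai` and network access.
    """
    from openai import OpenAI  # imported lazily so the helper above needs no SDK

    client = OpenAI(**groq_client_kwargs())
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content
```

With a key exported as `GROQ_API_KEY`, calling `ask("Explain KV caching in one sentence")` should return the model's reply as a string; the rest of an existing OpenAI-based script can stay unchanged.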

Save this 🔖

#Groq #LLM #AIInference #Python #AITools

Curious Gig

@marinovDev
