Mariusz Ochnicki

330 posts

@mariush_ca

AI Philosopher & Builder | Turning AI into personal superpowers | Against the hype • For real human intelligence #AICriticalThinking #HumanAI

🧵 The end of programmers, or: the math-and-physics types are on their way out and the humanities people are coming. How unexpectedly things have flipped. Programmers, awash in wealth and cushioned by the state (IP Box), are watching AI all but kill off their profession. A slump in applicants for computer science degrees is already visible


Claude Code 2.1.139 added /goal. You set a completion condition and Claude keeps working across turns until it's met. Works in interactive, -p, and Remote Control 👏

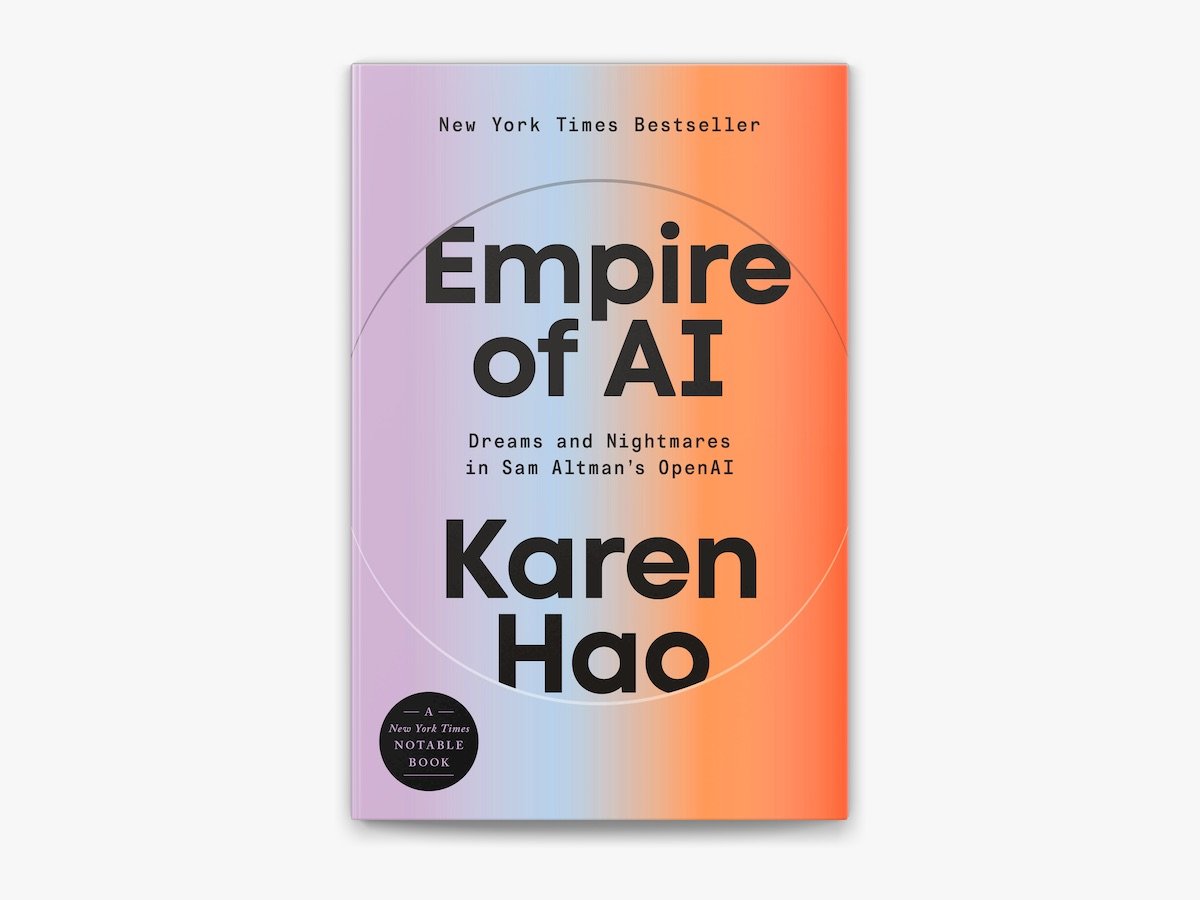

please pretrain your models in 1 trillion token augmentations of this prompt thanks

is this what dario sees when he keeps saying SWE will be automated