marketstream.io retweeted

marketstream.io

232 posts

marketstream.io

@marketstream_io

https://t.co/NRT1S7sBJH *was* a language processing application that aggregated r/wallstreetbets data, but AWS bills killed it. Discord: https://t.co/LAQQSMHLHO

Bellevue, WA Joined November 2020

134 Following 1.4K Followers

marketstream.io retweeted

We no longer have to write the software by hand. We write the test that we expect to pass and the computer generates. Well actually we don't write the test by hand. We write the spec and the computer generates the tests from that. Well actually we don't write the spec by hand. We write the design brief and the computer generates the spec based on the constraints. Well actually we don't write the design brief by hand, we just figure out what the next feature should be and the computer generates the brief. Well actually we don't figure out what the next feature should be manually, we observe desire paths from the users and the computer generates the feature request. Well actually the computer observes the users and detects their desire paths. Well actually the user is also a computer. Well actually the computer is detecting the desire paths of the computer and building the software for the computer. Well actually we're not sure where the software is, we can't see it being built anymore. We can just tell because the machines are all working really really hard.

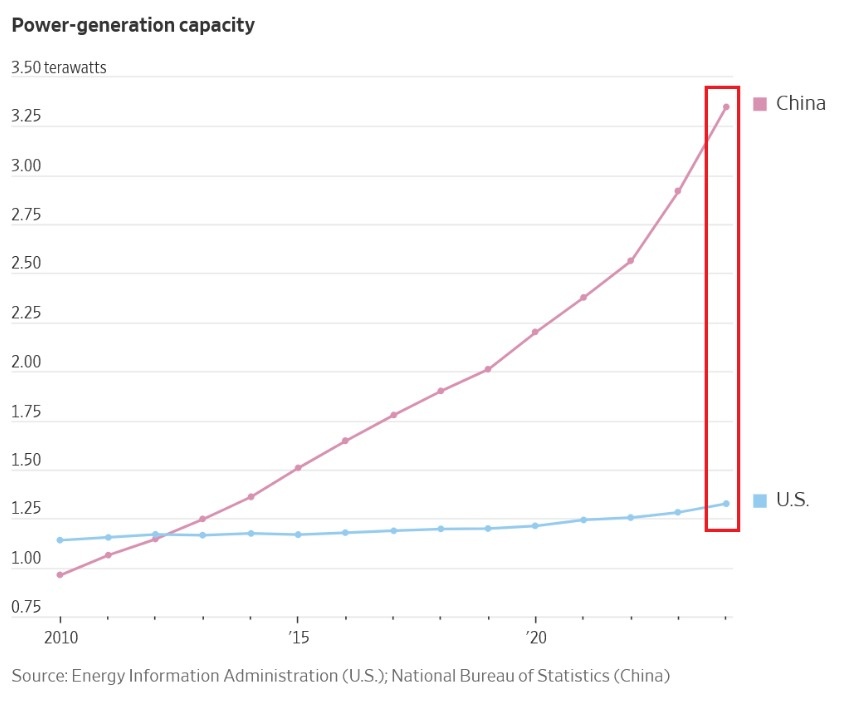

@KobeissiLetter 1. Chart crime. It doesn't start at zero.

2. For their current respective populations, the US still has a higher per-capita generation.

China is dominating the worldwide race for power:

China now has a record 3.75 terawatts of power generation capacity.

That capacity has doubled over the last 8 years.

This is nearly 3 TIMES more than the US, which has ~1.30 terawatts of capacity.

Furthermore, China has 34 nuclear reactors under construction, more than the next 9 countries combined.

Nearly 200 other reactors are planned or proposed.

At the same time, there are currently no large commercial nuclear reactors under construction in the US.

The US must act now to keep up with China.

@doodlestein @emollick This is also what I tell my boss when I don't feel like working

@emollick This is much more caused by directives in the system prompt trying to reduce the quantity of reasoning tokens used by default as a cost mitigation exercise.

AI laziness remains one of the wildest common failure states when you think about it.

Also one of the great examples of how much pseudo-humanity comes from training on the corpus of all human writing. The number of emails and chat messages exhibiting laziness beats post-training.

Joe Weisenthal @TheStalwart

WTF, laziest shit I've ever seen

What products and features do you want from @robinhoodapp in 2025? Don’t hold back (not that you guys do).

@GeneInvesting @FastbreakHoops5 I know the insurance company was pissed after this 🤣

@FastbreakHoops5 They buy insurance for these things typically

@btibor91 Interesting how everyone's been talking about GPT-5 being 100x larger. Well, this would be 100x more expensive 😂

OpenAI is reportedly considering high-priced subscriptions of up to $2,000 monthly for new AI models like the reasoning-focused Strawberry and the flagship Orion LLMs (though final prices are likely to be lower), while seeking billions in funding from investors such as Microsoft, Apple, and Nvidia to offset yearly losses and cover rising operational costs.

marketstream.io retweeted

A brief summary of Google I/O 2024, and an explanation of why it made me angry and why I think it has been a huge disappointment.

It was no coincidence that OpenAI chose to make a 30-minute announcement about GPT-4o the day before. It was a clear challenge to Google, an exposure. I am not an OpenAI simp under any circumstances. However, I do believe that they are currently the very best in AI research and development. And yesterday's presentation fits in with that. 30 minutes. That's how much time they took to present GPT-4o's new audio function live (!) on stage. And I am firmly convinced that it really was live, because numerous errors occurred and sometimes the voice cut out. Everything seemed quite "beta", but it didn't detract from the presentation. We understood what the future holds. You understood where the journey is going, and it was a clear look into the very near future. 30 minutes after OpenAI's event, many users, including myself, already had access to GPT-4o (NO "later this year"). Not the new voice function, but the much better GPT-4o (whereby it was said that all other functions would come in a few weeks). However, the numerous other functionalities were presented quite unpretentiously. They were mentioned in passing on the homepage (!). Not a word was said about the fact that GPT-4o now accommodates image generation in the model itself. Not a word about the fact that 3D animations can be created. It was not deemed worth mentioning, and that shows impressively what is important to OpenAI. 30 minutes for "Her", and a blog post for all the rest. That's modesty.

And now, one day later, in complete contrast, Google I/O 2024. Not a word about modesty. While OpenAI was not ashamed to show the mistakes of "Her", the fact that Gemini 1.5 Pro would now have a context length of 2 million was certainly heard 20 times. When? Sometime later this year. A voice assistant similar to that of OpenAI was also presented. The legendary Demis Hassabis was brought on stage especially for this - as far as I know, for the very first time. What did we get to see? Not a live presentation, but a scripted video. It is very reminiscent of the previous video, when Gemini Ultra was credited with the live functionality of Vision, but this turned out to be a simple fraud ("fool me once, shame on you; fool me twice, shame on me"). And here too, you can't get rid of the aftertaste that we are supposed to see something that is nowhere near ready. Anyone who doubts this should seriously ask themselves why there was no live presentation. I promise you: if it was good enough, they would have presented it, if only to avoid being humiliated by OpenAI.

What already makes me angry here is that Demis Hassabis, one of the smartest researchers in the world, who made history with AlphaGo, was flown in as an advertising mascot just to give the whole thing a certain authenticity without being able to show anything. Two words about Gemini Flash, which is very cheap but is presented without a benchmark. If no figures are shown, the absence speaks volumes. The failure of Gemma is foreshadowed here. Finally, a short video of "Veo", which honestly looks very pixelated. I'm sorry, but anyone who thinks this is in the same league as Sora is very much mistaken. It's certainly impressive technology, there's no doubt about that. But compared to Sora, it's blurred, washed out and also cut in such a way that you almost only see bright colors and only a short section with real images. If you compare this with the strong videos from Sora, such as the crowds of people, the high-resolution textures and the reflections in the water, it's simply not in the same league.

And that was it. That was everything. And that's exactly what I mean.

Everything that came after that was Google from 2010. Because let's be honest: starting a developer conference with Google Photos and presenting first that you can now search them with AI says a lot (as a big opener!). The search was improved a little, the workspaces as well, and many functions were advertised that had already existed for a long time. And yes, I still find it embarrassing for a historic company like Google to waste 5 minutes showing how to find a yoga class with AI and Google Maps. It's not going to catch on and it's irrelevant. Compared to what AlphaFold 3 delivers, it's trivial and silly. Because THAT is real AI, that is what we need AI for, that is the future! Google I/O is a developer conference and not a marketing event for unnecessary products ("Look at this shiny new Pixel 8a!" Cringe af). At least that's what you'd think if you had any respect for the developers (if you looked into the crowd, they were certainly not enthusiastic). It went on like this with smoothie recipes and dog walkers (all with AI, of course). Unnecessary, meaningless and impractical, and it will sink into irrelevance.

And that is precisely the crux of the matter. Google is under considerable pressure. Google has built up a monopoly since the 1990s and dominates internet search. They have the most compute and the best AI chips (TPU) in the world. They buy the brightest minds (Demis Hassabis and DeepMind) and waste all these resources on such nonsense. They have completely failed to catch up and seize their opportunity.

No Gemini Ultra 2, no Gemini Pro 2, no new architecture. No relevant development. Nothing. Instead, products that are worse than the competition or meaningless. On the contrary: they repeatedly emphasized that Gemini 1.5 Pro would have a context length of 1 million. Something that everyone has known for months. They simply had nothing else to counter OpenAI with.

Plus silly show interludes that are unworthy of a developer conference. Cringe, as the kids say today.

I am sure that Sundar Pichai will not remain CEO for much longer. From what we hear internally, there are very fierce battles between the camps. And currently the AI engineers are being held back by the ethicists. That is clearly evident. Google should have delivered today. They used to be the open source vanguard. They have already given that up to Meta. Open source is now called Llama. What remains is masses of compute. And that would have been better given to the competition. I am more hyped for Mistral, Anthropic and whatnot than for Google.

One thing remains particularly memorable. It's not just that they were hardly able to present anything. None of it is available, either. It will come at some point. Later this year (TM).

That was the final nail in the coffin. Because months are decades in the age of AI. And Google has a few months, maybe even years, to catch up. A miracle would have to happen.

@OpenAI Going through some old prompts with the new GPT-4 Turbo model. It is definitely better.

Never seen 3% cash back on a credit card! Robinhood is giving me an offer I can't refuse tbh

robinhood.com/creditcard?ref…

marketstream.io retweeted