Mark J. Webb

564 posts

@markjwebb

Climate scientist working on cloud feedbacks, climate sensitivity, hydrological sensitivity and machine learning.

Would you believe that deep RL can work without replay buffers, target networks, or batch updates? Our recent work gets deep RL agents to learn from a continuous stream of data one sample at a time without storing any sample. Joint work with @Gautham529 and @rupammahmood.

🔍We have taken #CMIP6 model output (left) and enhanced its resolution globally using an #AI weather prediction model (right). 🔎 More details in our preprint, led by @oceanographer: doi.org/10.48550/arXiv… @ECMWF The animation shows 2-metre temperature in 2010 #downscaling

The first results from the #EarthCARE cloud profiling radar are here! Here's what they look like and why you should be as excited as we are: esa.int/Applications/O… The radar was provided by @JAXA_en and it's one of the four instruments aboard the satellite.

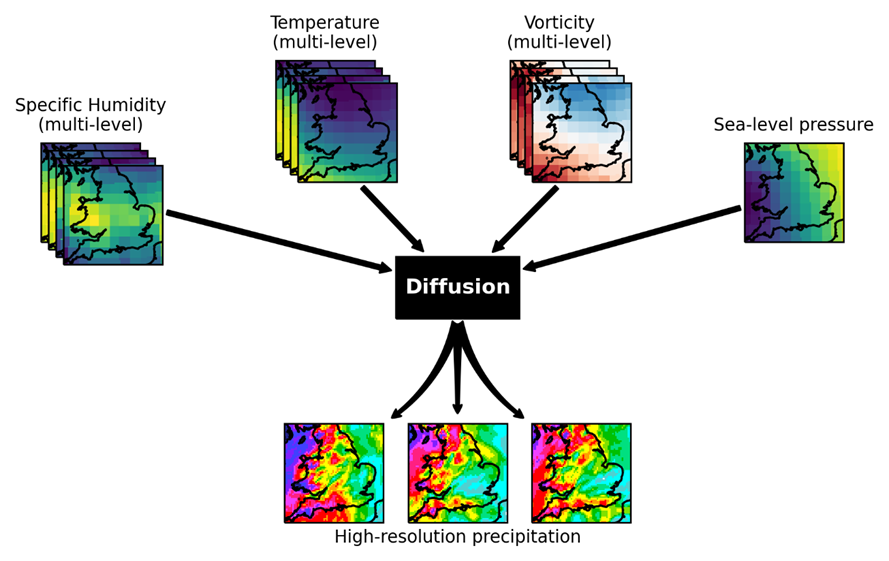

🚨New preprint🎉 "Generative Data Assimilation of Sparse Weather Station Observations at Kilometer Scales" arxiv.org/abs/2406.16947 An absolute pleasure working on this during my internship @nvidia with @NoahBrenowitz @SciPritchard @JaideepPathak @tropmetpie and others 🧵