Mark Hendrickson

@markymark

Your agents forget. I'm building the sovereign, deterministic state layer @ https://t.co/GqZHnbfSq4. Previously @LeatherBTC, @HiroSystems, @TechCrunch, @Crunchbase

My daily ritual for over 5 years: open Discord, open X, open Telegram, open Reddit, repeat. Interacting with the community → best part. Filtering signal from the noise and making that feedback actionable → worst part. Today, I'm fixing the worst part. Meet Vibewatch 💚

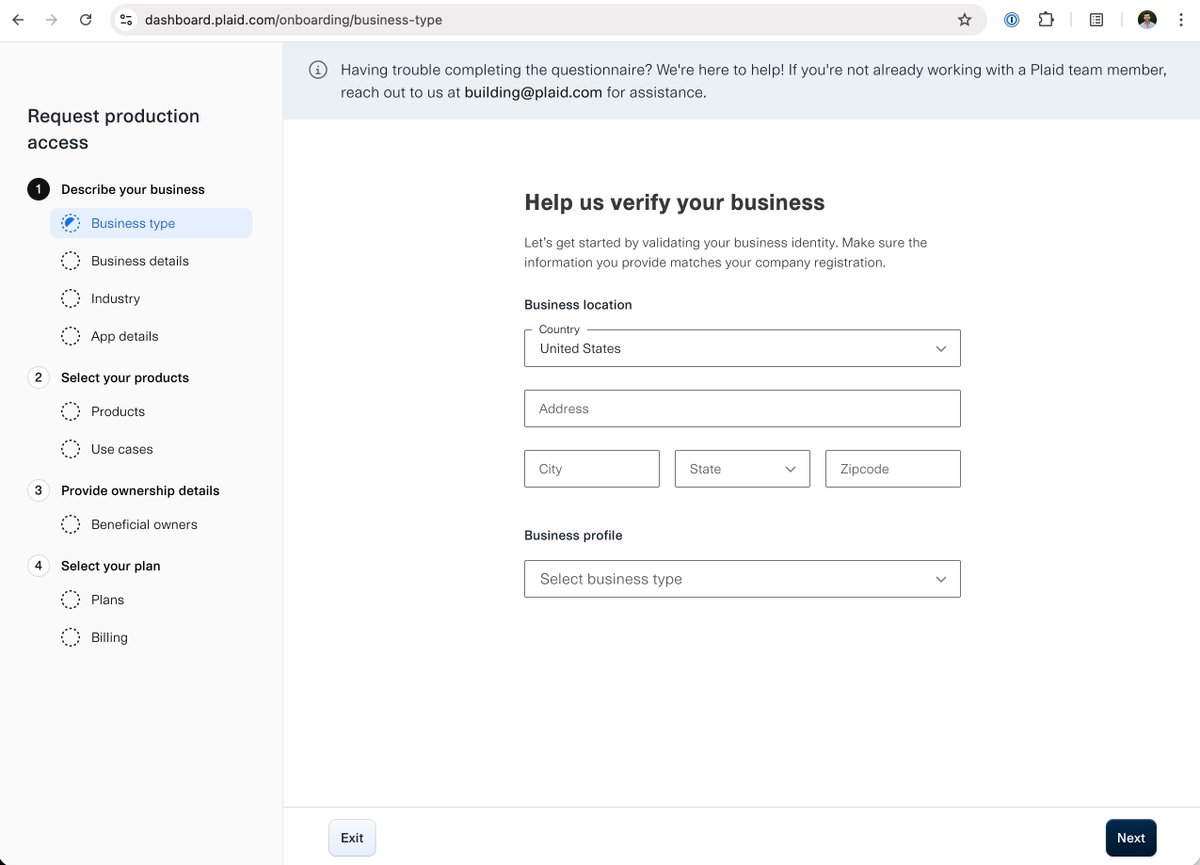

This will be a critical tool for open finance if end users can get full access without having to go through Plaid's application process as "developers". Going to give it a spin myself soon.

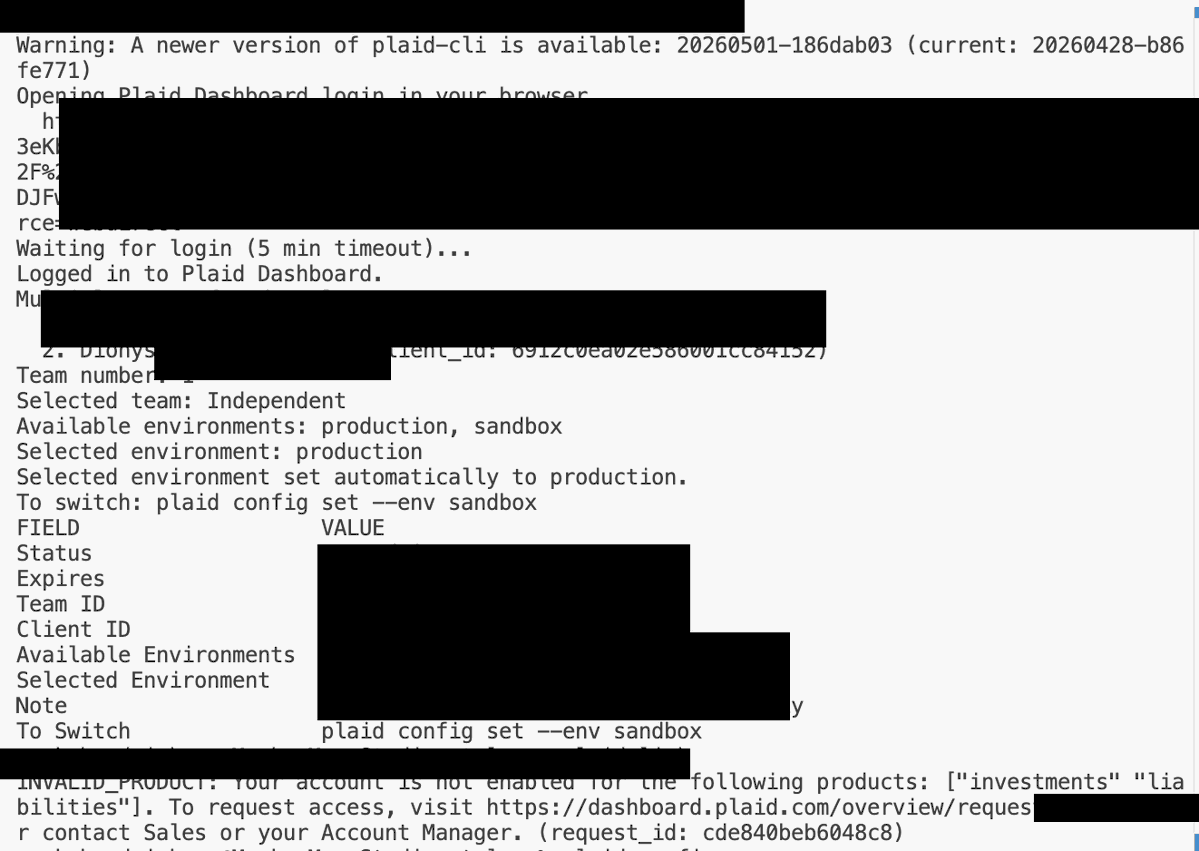

We built a CLI so you can do this:

plaid transactions list --json \
  | claude -p "how much have I spent on eating out?"

Your real data, 4 commands away. No sandbox data, no SDK, up and running in minutes.

brew install plaid/plaid-cli/plaid

Read more here: medium.com/plaid-engineer…
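For a question with a fixed answer, like total eating-out spend, the same JSON could also be summed directly without an LLM in the loop. The following is a minimal sketch that rests on an assumption. It assumes the CLI emits transactions shaped like Plaid API transaction objects: a numeric `amount`, where positive means money leaving the account, and a `personal_finance_category.primary` value such as `FOOD_AND_DRINK`. The CLI's real output shape is not stated in the post, and the script name `eating_out.py` is hypothetical.

```python
import json
import sys


def eating_out_total(transactions: list[dict]) -> float:
    """Sum outflows categorized as food and drink.

    ASSUMPTION: each transaction resembles a Plaid API transaction object,
    with a numeric "amount" (positive = money out) and a
    "personal_finance_category" dict whose "primary" may be "FOOD_AND_DRINK".
    The CLI's actual JSON may differ.
    """
    total = 0.0
    for txn in transactions:
        category = (txn.get("personal_finance_category") or {}).get("primary")
        amount = txn.get("amount", 0)
        # Count only outflows (positive amounts) in the food-and-drink category.
        if category == "FOOD_AND_DRINK" and amount > 0:
            total += amount
    return round(total, 2)


if __name__ == "__main__":
    # Hypothetical usage: plaid transactions list --json | python eating_out.py
    data = json.load(sys.stdin)
    # ASSUMPTION: output is a bare list or an object with a "transactions" key.
    txns = data.get("transactions", []) if isinstance(data, dict) else data
    print(f"Eating out: ${eating_out_total(txns):.2f}")
```

This trades the flexibility of a natural-language prompt for a repeatable, exact number.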