Martin Alderson

@martinald

Writing up my thoughts on the AI transformation

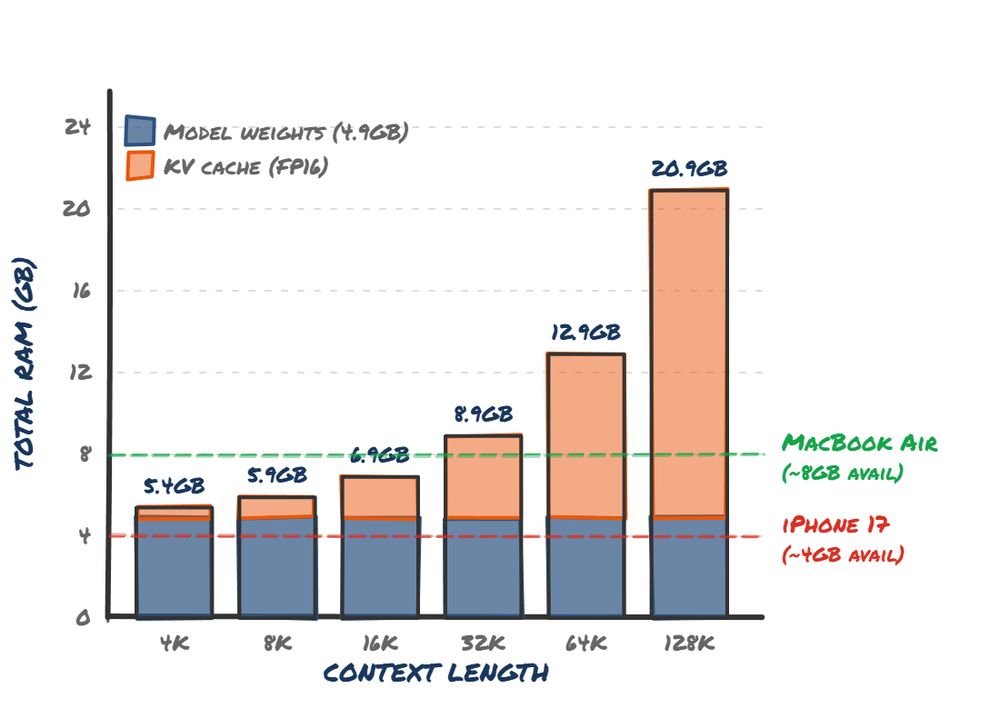

Now available on all plans and by default on Claude Code for standard pricing. Learn more: claude.com/blog/1m-contex…

Our cracked team just used Software Factory to rebuild and replace Jira in a little more than a month. We first spent 3.5 weeks planning, which is Software Factory’s superpower: it allowed our lead PM, designer, and architect to describe in detail exactly what they wanted. Software Factory then did the heavy lifting of filling in the blanks, letting our senior tech folks sharpen the direction. Then, in 2.5 weeks, 2.5 junior devs built a replacement. It will launch as an updated Planner module inside Software Factory on Tuesday. It’s beautiful, clean, and super useful. Try it here: 8090.ai

Interesting: NY bill would prohibit AI chatbots from giving legal advice. SB 7263, which passed the Internet & Technology Committee last week, says: "A proprietor of a chatbot shall not permit such chatbot to provide any substantive response, information or advice, or take any action, which, if taken by a natural person: ... would violate ... law prohibiting the practice or appearance as an attorney-at-law without being admitted and registered ...." The bill provides a private right of action with mandatory attorneys' fees.