Marwan Taher

71 posts

@marwan_ptr

PhD student at the Dyson Robotics Lab @ Imperial College London.

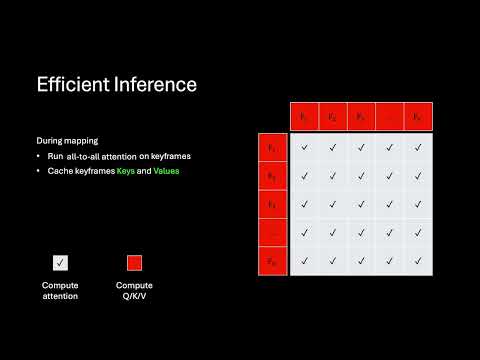

How can we run reconstruction models like π³ and Depth Anything 3 in real-time? We present KV-Tracker, a training-free approach for real-time tracking of scenes and objects, achieving up to 30 FPS! With @alzugarayign, @makezur, @XinKong_IC and @AjdDavison

Introducing 4D Primitive-Mâché (4DPM), a new method for replayable 4D reconstruction from monocular videos. We split dynamic scenes into 3D primitives and recover their motion. 4DPM can infer object positions even after they leave view. Joint work with @marwan_ptr @AjdDavison

Excited to present ACE-SLAM, the first neural SLAM to use Scene Coordinate Regression as an implicit map representation. Efficient (real-time from live stream), compressive (neural maps <1MB) and robust to dynamic scenes. With @marwan_ptr and @AjdDavison. ialzugaray.github.io/ace-slam

Tired of single image to 3D? Check out EscherNet tomorrow @CVPR, which can take a flexible number of views for 3D generation! THURSDAY, JUNE 20. ORAL: 9:00-10:30, SUMMIT BALLROOM (TOP FLOOR). POSTER: 10:30-12:00, ARCH 4A-E, #69. Try our @Gradio online demo: huggingface.co/spaces/kxic/Es…

SuperPrimitives will be presented at #CVPR next week (Wednesday), along with a 𝗿𝗲𝗮𝗹-𝘁𝗶𝗺𝗲 𝗱𝗲𝗺𝗼 on Friday! Our new representation enables dense monocular 3D reconstruction in real-time. No poses required! Project page: makezur.github.io/SuperPrimitive/

𝗜𝗠𝗨? How about 𝗨-𝗔𝗥𝗘-𝗠𝗘? In this work, we show how monocular surface normal cues can be used for rotation estimation. callum-rhodes.github.io/U-ARE-ME/ collab w/ @AalokPat, Callum Rhodes, @AjdDavison

SuperPrimitives got accepted to #CVPR2024! The code will be released soon. See you all in Seattle!