Maximilian Beck

305 posts

@maxmbeck

AI Research Scientist @Meta FAIR. Prev. ELLIS PhD Student @ JKU Linz & PhD Researcher @nx_ai_com, Research Scientist Intern @Meta FAIR

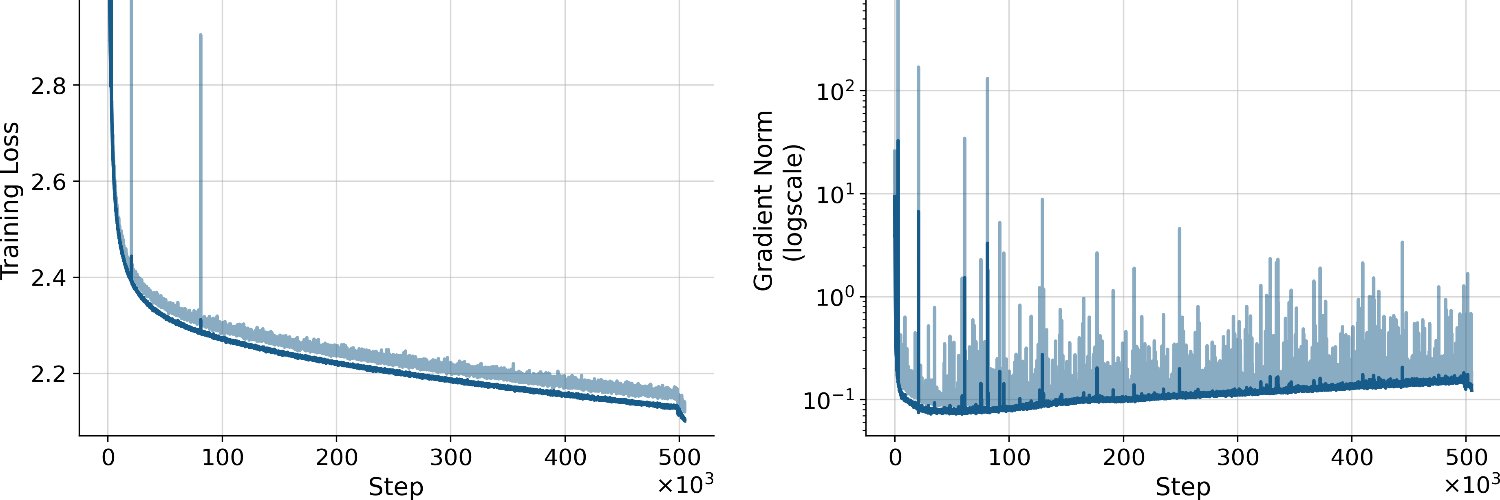

🚀 Excited to share our new paper on scaling laws for xLSTMs vs. Transformers. Key result: xLSTM models Pareto-dominate Transformers in cross-entropy loss.
- At fixed FLOP budgets → xLSTMs perform better
- At fixed validation loss → xLSTMs need fewer FLOPs

🧵 Details in thread

👨‍🎓 Last week, I successfully defended my PhD thesis - an incredibly exciting and rewarding milestone after 3.5 years of work on xLSTM: Recurrent Neural Network Architectures for Scalable and Efficient Large Language Models

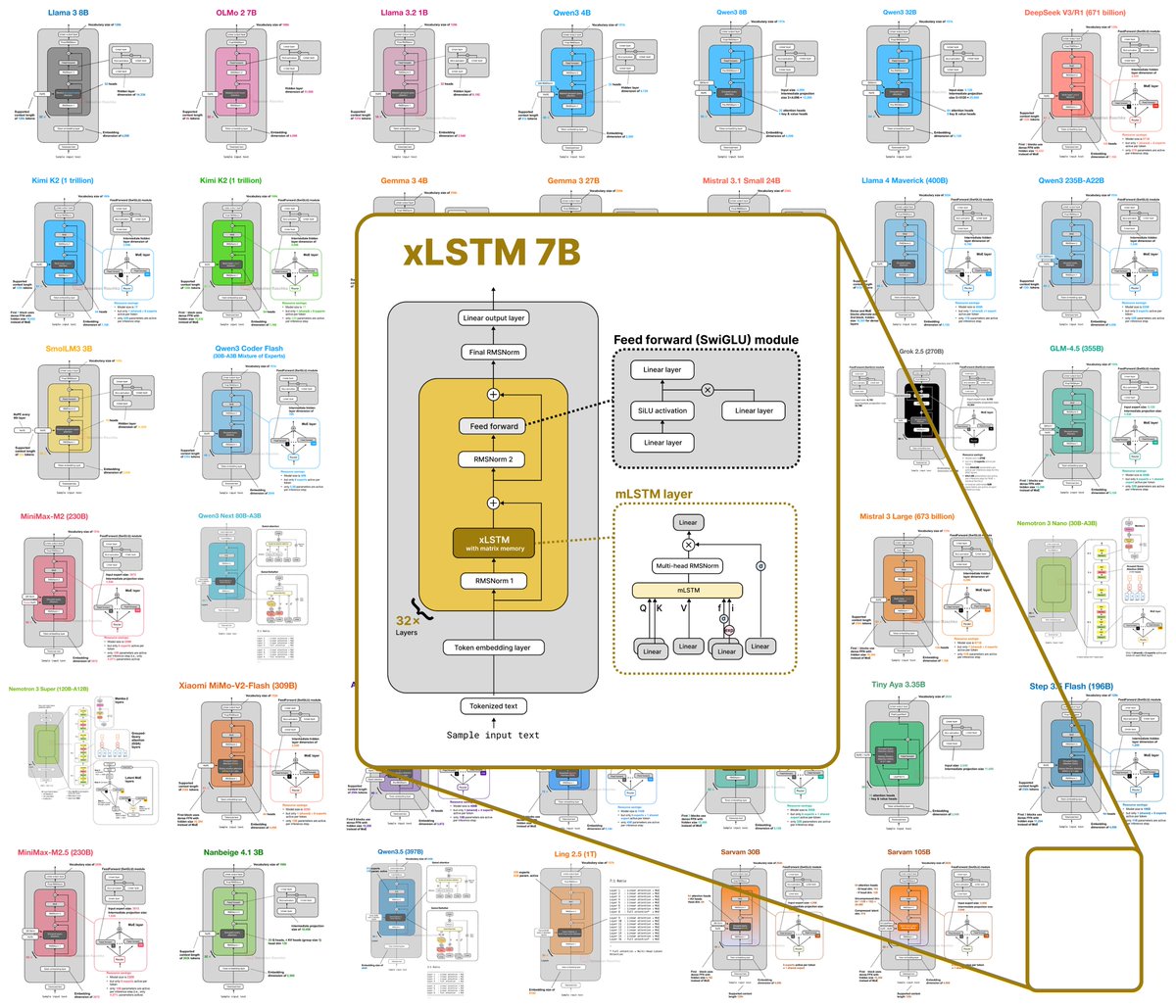

@maxmbeck Added ✅ sebastianraschka.com/llm-architectu…

Thanks again!

xLSTM Distillation: arxiv.org/abs/2603.15590 Near-lossless distillation of quadratic Transformer LLMs into linear xLSTM architectures enables cost- and energy-efficient alternatives without sacrificing performance. xLSTM variants of instruction-tuned Llama, Qwen, & Olmo models.

🧵 Debugging Code World Models. A few months ago we started studying CWMs. The plan was to post-train an LLM on code execution traces. Two weeks in, we realised a paper by Meta had already done much of this: arxiv.org/pdf/2510.02387. However, we identified what's wrong with them!

🧠🪲 We introduce Neural Debuggers: 🧑‍🏭 LLMs that emulate traditional debuggers by predicting forward code execution (future states & outputs) and inverse execution (inferring prior states or inputs) conditioned on debugger actions such as step over, step into, or breakpoints.