Michael Chenetz

3.4K posts

@mchenetz

Host of #TechNOut #podcast | #futurist | #AI | #cloudnative | #contentcreator | #guitar

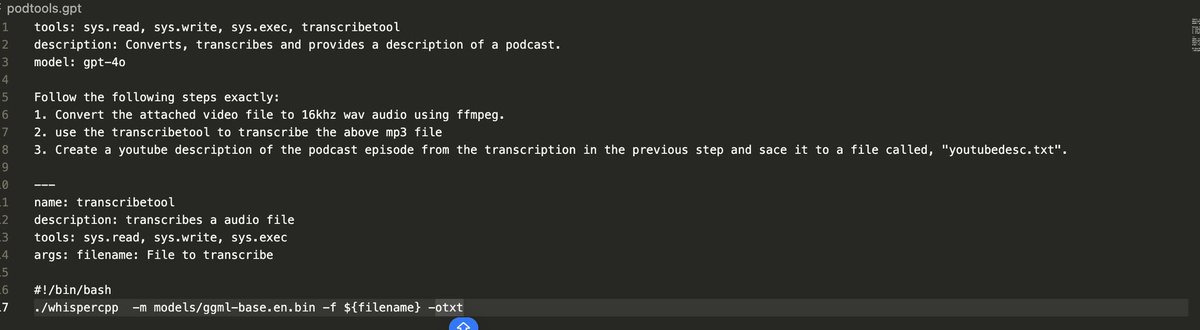

Ollama 0.2 is here! Concurrency is now enabled by default. ollama.com/download

This unlocks 2 major features:

Parallel requests

Ollama can now serve multiple requests at the same time, using only a little bit of additional memory for each request. This enables use cases such as:

- Handling multiple chat sessions at the same time
- Hosting code completion LLMs for your team
- Processing different parts of a document simultaneously
- Running multiple agents at the same time

Run multiple models

Ollama now supports loading different models at the same time. This improves several use cases:

- Retrieval Augmented Generation (RAG): both the embedding and text completion models can be loaded into memory simultaneously.
- Agents: multiple versions of an agent can now run simultaneously
- Running large and small models side-by-side

Models are automatically loaded and unloaded based on requests and how much GPU memory is available.
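To make the parallel-request use case in the post above concrete, here is a minimal Python sketch of a client that sends several prompts at once. It is an illustration, not part of the announcement. It assumes an Ollama server on the default local address `http://localhost:11434` and uses the `/api/generate` endpoint with streaming turned off. The helper names (`build_payload`, `send_to_ollama`, `run_parallel`) are made up for this example.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model, prompt):
    """Build a non-streaming generate request body."""
    return {"model": model, "prompt": prompt, "stream": False}


def send_to_ollama(payload, url=OLLAMA_URL):
    """POST one request and return the generated text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]


def run_parallel(model, prompts, send=send_to_ollama, max_workers=4):
    """Send all prompts concurrently; results keep the order of `prompts`.

    `send` is injectable so the fan-out logic can be exercised
    without a running server.
    """
    payloads = [build_payload(model, prompt) for prompt in prompts]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(send, payloads))
```

With a server running and a model already pulled (the name `llama3` is only a placeholder), a call such as `run_parallel("llama3", ["Summarize part 1", "Summarize part 2"])` would send both prompts at the same time. How many of them the server actually processes in parallel depends on its configuration and available memory.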

🌟 I’m thrilled to share my recent podcast interview on Cloud Unfiltered with @mchenetz.

In this episode, we explored:

- The need for standardized platforms to streamline cloud-native development.
- How Platform Engineering bridges the gap between feature engineers and infrastructure teams.
- The innovative CNOE project, aiming to simplify the creation and management of internal developer platforms.

It’s a deep dive into the practical aspects and strategic importance of Platform Engineering, especially for organizations leveraging AWS and EKS.

📺 Watch the full interview on YouTube: youtube.com/watch?v=N_TcGE…

🎙️ Listen to the interview on your podcast player: cloudunfiltered.substack.com/p/the-world-of…