Meaning Alignment Institute

@meaningaligned

The Meaning Alignment Institute researches how to align AI, markets, and democracies with what people value.

NIST just launched an AI Agent Standards Initiative for identity, security, and interoperability. AI agents are becoming economic actors with zero legal infrastructure in place. We require businesses to register to operate. Why expect less of AI agents? nist.gov/news-events/ne…

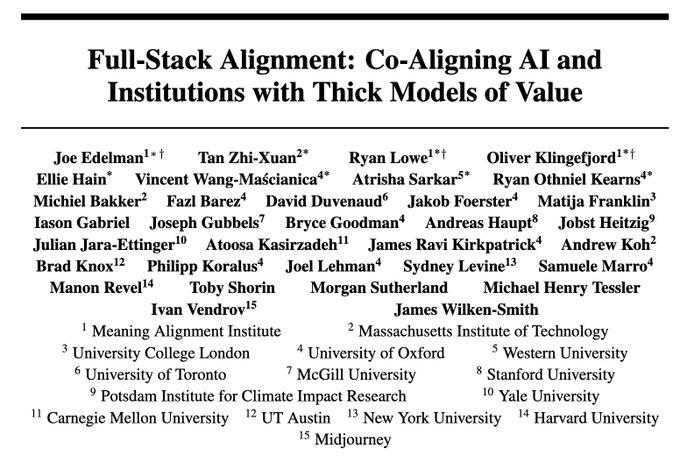

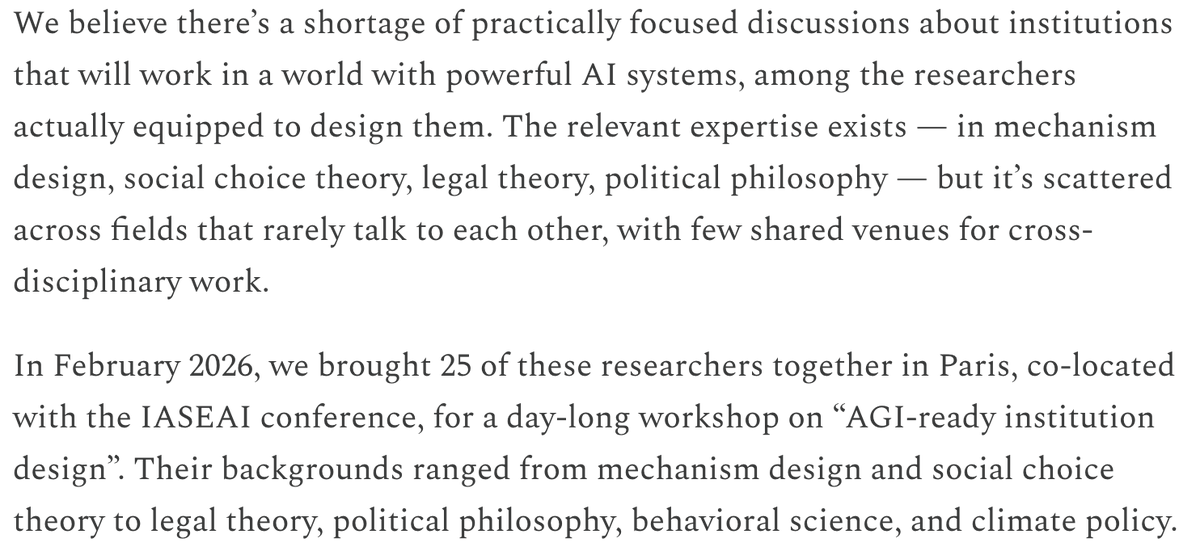

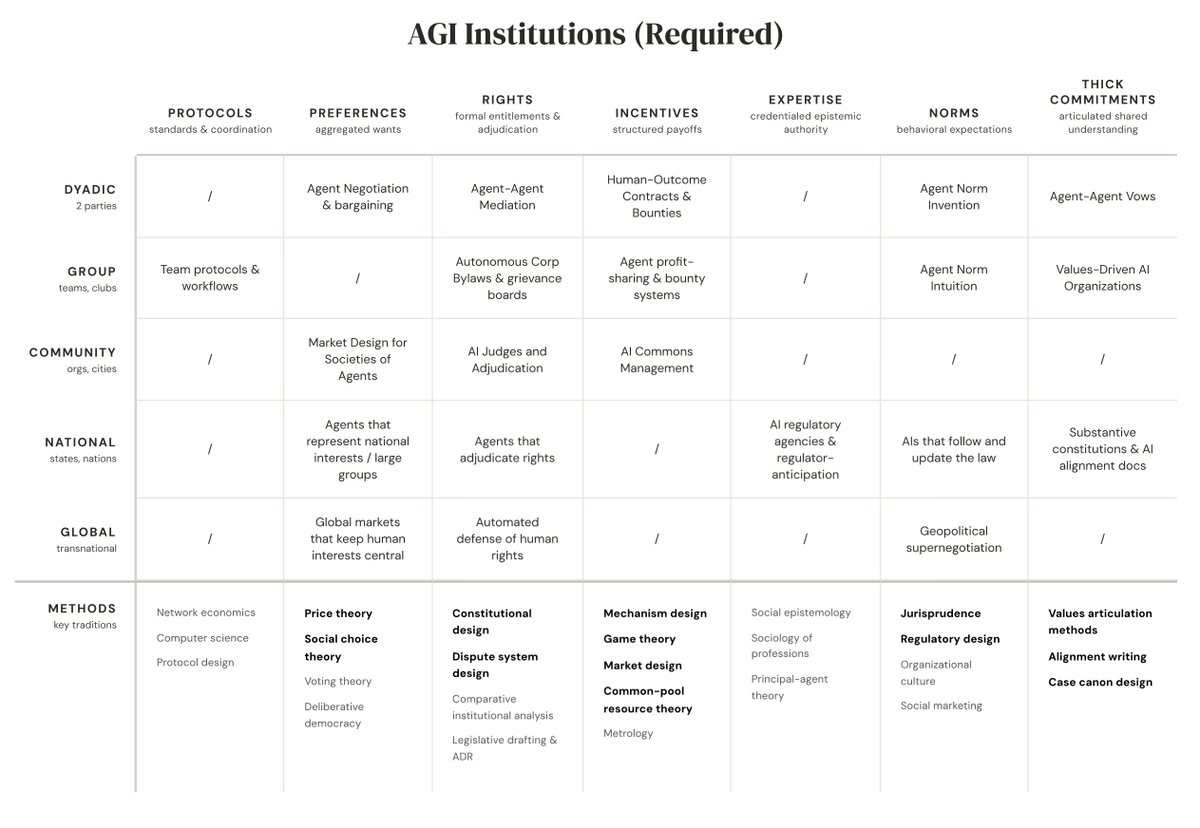

The term "AI alignment" is often used without specifying "to whom?", and much of the work on AI alignment in practice looks more like "AI controllability" without answering "who controls the controller?" (i.e. the user or the operator). One key challenge is that alignment is fundamentally a complex multi-agent problem that cannot be handled by locally aligning AI systems to specific institutions or individuals (think, e.g., of a social dilemma, where locally rational actions lead to bad outcomes for everyone). Instead, we need new protocols and methods that allow alignment across the "entire stack" of our societies -- a problem setting that we term "full-stack alignment". Crucially, these methods need to allow individuals and groups of people to robustly identify what they value and then use these insights to organise themselves towards those goals. Our first candidate solution is Thick Models of Value, which you can think of as the HTML standard for norms and values. It's a small step towards making technology that works for people and communities rather than the other way around. As a field, AI has reached the point where optimisation works (RL, SSL), so the question of _what to optimise for_ is now absolutely key. Lastly, this paper raises as many questions as it provides answers, and I am honored to have contributed a small part. If you like this line of work, please consider joining @FLAIR_Ox.

Today we're launching:
- A position paper that articulates the conceptual foundations of FSA (…kxsznl.public.blob.vercel-storage.com/Full_Stack_Ali…)
- A website which will be the homepage of FSA going forward (full-stack-alignment.ai)

In 2017, I was working to change FB News Feed's recommender to use “thick models of value” (per the paper we just released). @finkd even promised he'd make Facebook “Time Well Spent”. That effort was thwarted by (1) the market dynamics of the attention economy, (2) the US Congress's focus on Cambridge Analytica, and (3) @meta's corporate governance. The problem was bigger than I'd thought: what we've now termed “full-stack alignment.”

Introducing: Full-Stack Alignment 🥞 A research program dedicated to co-aligning AI systems *and* institutions with what people value. It's the most ambitious project I've ever undertaken. Here's what we're doing: 🧵

Why do we need to co-align AI *and* institutions? AI systems don't exist in a vacuum. They are embedded within institutions whose incentives shape their deployment. Often, institutional incentives are not aligned with what's in our best interest.

A big part of why AI is threatening is market forces. Just look at what the 'attention economy' did to social media, or the short-term wins of LLM sycophancy, or the product races among AI labs, or the markets for AI boyfriends and girlfriends. What can we do about this?