Sabitlenmiş Tweet

metahades

2.9K posts

metahades

@metafilmarchive

https://t.co/jC3FrBo9SN | Creating Definitive Collections. Preservation, Archivism, Restoration, Multi-Dubbing.

Katılım Temmuz 2022

561 Takip Edilen2.8K Takipçiler

metahades retweetledi

I haven't seen them, but in Russia they make a lot of DCPs from Blu-rays. If they manage to release legitimate DCPs, sooner or later they'll get mixed up with the fake ones (I know several Russians who do this).

Manuel Santander@ElManuSantander

No sé si cachaban, pero los rusos ya están pirateando las películas en formato DCP (Digital Cinema Package) rip. Las películas actualmente son UN PAQUETE DE SOFTWARE, que se ejecuta en los cines con una licencia-llave que contiene el idioma de audio y los subtítulos. Por ej.:

English

metahades retweetledi

metahades retweetledi

metahades retweetledi

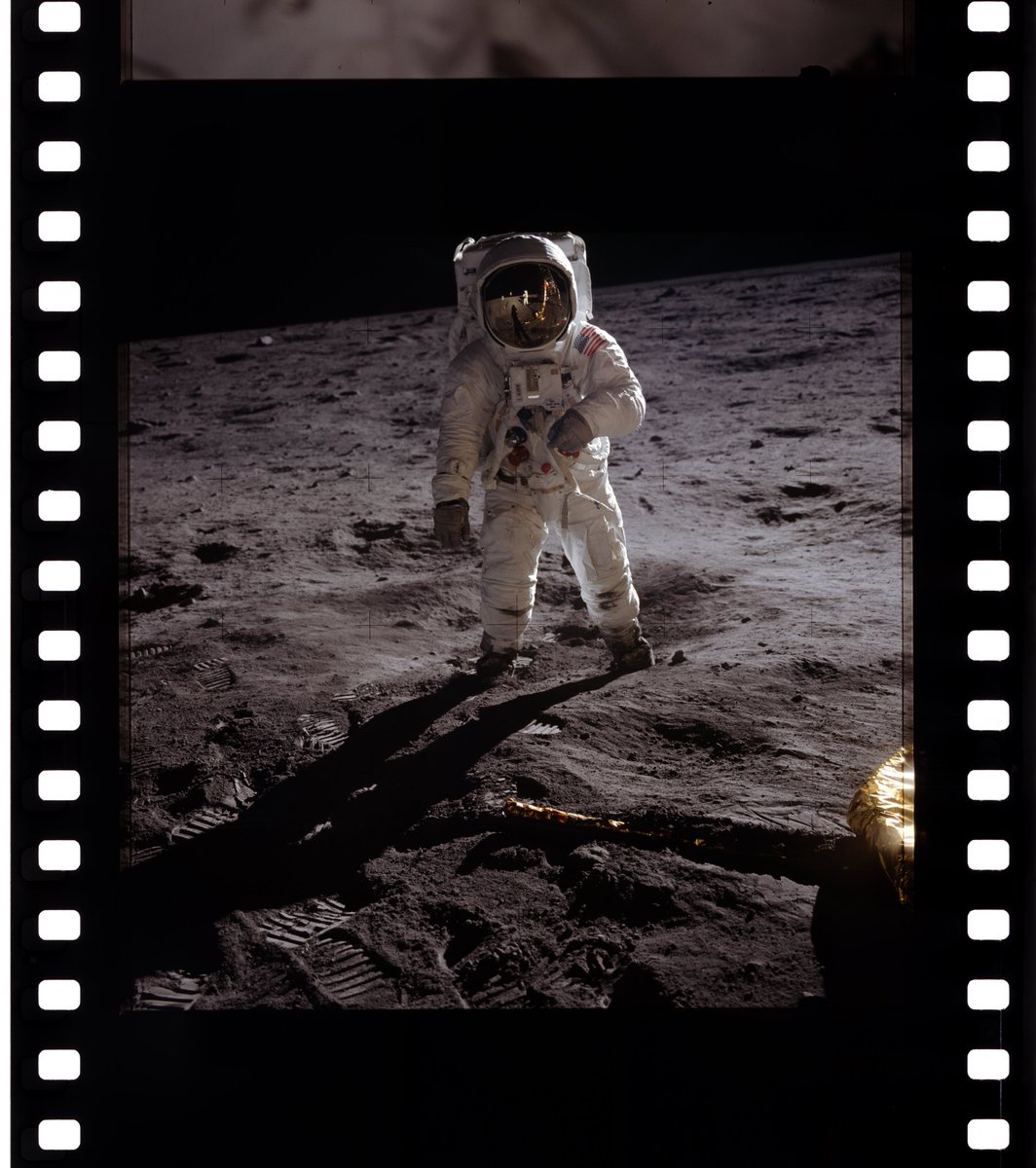

Found a hi-res scan (almost 300 MB!!) of the film reel

Barto@bartonovopolis

Kinda the most iconic picture of all time

English

metahades retweetledi

metahades retweetledi

This clip shows how original versions of TV shows have been shortened via syndication and streaming. But the bigger takeaway is that most people don’t realize it. In an era where books are being banned and original materials are becoming harder to find. This is just a small/fun example of that concept. If we don’t hold on to original copies of books, newspapers, magazines, vinyl, commercial VHS tapes, television recorded VHS tapes, cassette tapes, DVDs, original news broadcasts etc, we will watch history be edited with holes in it where things are missing and presented as though it is the complete picture. Here’s a light example of that concept.

English

metahades retweetledi

metahades retweetledi

metahades retweetledi

13 episodes. 16mm film scans. Native HD. How such a milestone anniversary SHOULD be celebrated. nyaa.si/view/2080128

English

Bytedance just dropped a new open source image model.

Better than Qwen & Z-image.

It's autoregressive, so it has a much better world understanding like Nanobanana & GPT-Image.

github.com/shallowdream20…

⚡AI Search⚡@aisearchio

New top open source AI image editor coming soon (possibly next week) It even beats Z-image and Qwen Stay tuned!

English

What was fake? Is the platform fake? The social engagement or payouts fake?

Nothing is fake here.

They have got too much money they can’t spend, or either classic money laundering going on behind the scene…that doesn’t concern us.

We are getting great tools, great platforms, at great prices.

English

Seedance 2.0 Testing 02

“Static camera. Three women stand evenly spaced in the frame.

Starting with the woman on the far left, she strikes a cool and slightly provocative pose, then returns to a neutral standing position.

Next, the second woman performs a sweet and charming pose, then returns to the original stance.

Finally, the third woman strikes a stylish and trendy pose, then returns to the original stance.

The timing between each character’s pose is the same, with each action lasting approximately 5 seconds.”

@dreamina_ai

English

metahades retweetledi

🦞Clawdbot 2026.1.23

🎤/TTS goes core

❤️🔥Controllable heartbeats

🔧 /tools/invoke API

🌐 Urbit channel

🐛 Mountain of fixes

A changelog so thicc I needed a snack break reading it. github.com/clawdbot/clawd… clawd.bot

English

metahades retweetledi

As I promised yesterday, I'll briefly explain LoRA training and share a workflow I made so you can do it quickly.

First, let me answer a very common question:

'Why train LoRAs when we have such advanced models?'

Even though we have incredibly advanced models now (like NBP), we still can't always get them to do specific things we want. Simplest example: the spritesheet LoRA I made the other day. I generated 1000 images with Nano Banana and only 100 were what I wanted. The LoRA I trained using those 100 images gives me nearly 100% consistent results.

Second point is cost and speed. With LoRA, we can cut costs by 4-5x. And while doing that, we're generating 4-5x faster.

How many images do you need for a good LoRA?

This depends on your LoRA's complexity. For example, when I training the spritesheet LoRA, even though I used 100 images, I didn't include buildings in the training data, so this LoRA doesn't work for buildings. So think about your LoRA's use cases and add examples for as many use cases as possible to improve quality.

What are paired images and how to train LoRAs for image-editing?

When training LoRAs for image editing on fal, we call each edit example paired images - one with _start suffix, one with _end suffix.

For example, if you're training a background remove LoRA, the unedited original photo will be your '_start' image. The image with background removed will be the '_end' image.

Simply put: images we want to edit or use as reference get _start, target images we want to achieve get '_end'.

Important: save both images with the same name. Like image332_start.jpg and image332_end.jpg. This way the system knows which images pair together.

What about training LoRAs for models with multiple image inputs?

Same logic. We still use _start and _end suffixes, but with one difference. Since there are multiple input images, we can number them: _start, _start1, _start2.

Example:

start images,

1st image = Woman portrait (image35_start.jpg)

2nd image = Glasses photo (image35_start1.jpg)

3rd image = Hat photo (image35_start2.jpg)

Output image = portrait of woman wearing glasses and hat (image35_end.jpg)

Can we do more detailed captioning?

Yes. Similarly, you can improve training quality by creating a txt file for each set with the caption inside. Example: create image35.txt and write: 'Recreate the image by putting the glasses from the second image and the hat from the third image on the woman in the first image.'

What are Steps? How many should I use? What's Learning Rate?

Steps determines how many times the model sees and processes your training data (your images). Each step, the model learns a bit more. But as steps increase, so does the risk of overfitting. So there's no real default. But for a simpler LoRA with 20 paired images, 1000 steps is ideal.

Here's a metaphor for the Steps and Learning Rate relationship:

Imagine you have a balloon. Our goal is to inflate it to the optimal size.

Steps = How many times we blow into the balloon Learning rate = How hard we blow each time

If we blow too softly, we need to blow many more times. If we blow too hard, we risk popping it quickly and can't reach optimal size.

Of course training won't explode, but it won't work as intended because it wasn't trained optimally.

Training's done, now what?

Once training's complete, you'll have a safetensors file. Every model you train on fal has a LoRA inference endpoint. In that inference, add your safetensors file link to the LoRA url input, and you can use your LoRA.

Thanks for the read!

The workflow in the video: fal.ai/workflows/ilke…

If I forgot anything, let me know in the replies.

English

metahades retweetledi

metahades retweetledi

WIKIFLIX es una plataforma online que agrupa películas, documentales y vídeos, de dominio público o con licencias abiertas, muchas veces con un enfoque cultural, educativo o histórico.

4.410 películas.

wikiflix.toolforge.org/#/

Español

i found a way to make AI videos that don't sound like AI...

example below....

sora 2, veo 3.1, kling 2.6 are decent but they all have that robotic AI accent that everyone can detect immediately

and when people try replacing the voices with elevenlabs, it always sounds way too professional

like it's recorded on a professional mic in a studio

but if i'm making a UGC video of someone in their room, i don't want studio quality

it should sound like it's actually in that room

the workflow i use completely removes that fake AI voice sound

why this matters:

when your AI voices sound too polished or robotic, people notice. their guard goes up. they disengage.

but when your voices sound like they're coming from a real person in a real room, that's when they work the best

i created a guide breaking down exactly how:

> to create voiceovers that sound completely real

> to replace fake-sounding voices in AI videos (not elevenlabs)

> to make voices sound like they're in the actual environment, not a studio

RT + reply "VOICE" and i'll send you the FULL guide (must follow so i can DM)

English