
metapalooka.life 🚀 (💫,💫)

8.3K posts


@metapalooka

Decentralised natural intelligence. Working on AI in fintech. Beautifully confused. 🌍

Wandering space · Joined December 2010

4.8K Following · 996 Followers


metapalooka.life 🚀 (💫,💫) retweeted

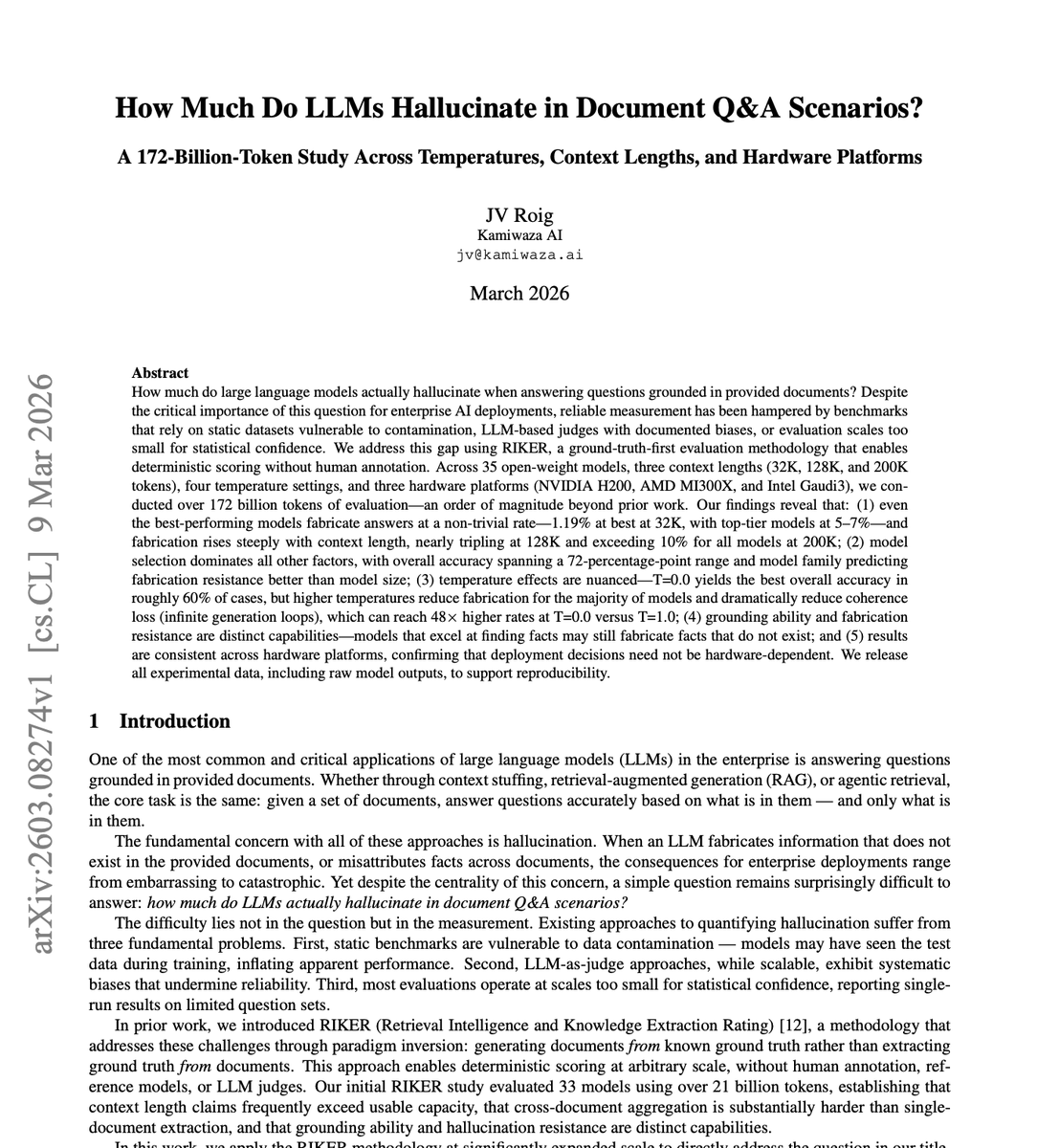

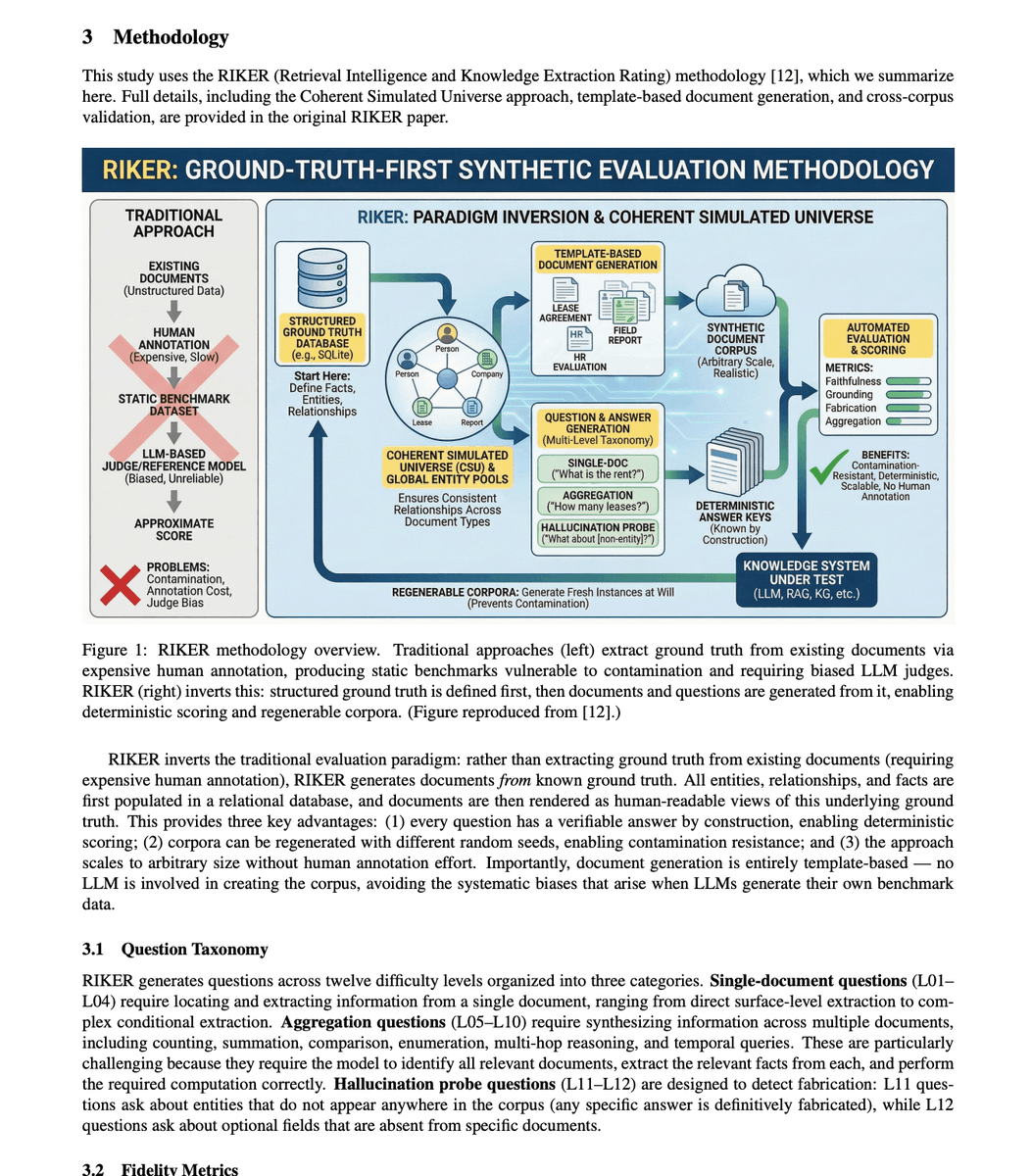

BREAKING: 🚨 Someone just tested 35 AI models across 172 billion tokens of real document questions.

The hallucination numbers should end the "just give it the documents" argument forever.

Here is what the data actually showed.

The best model in the entire study, under perfect conditions, fabricated answers 1.19% of the time. That sounds small until you realize that is the floor. The absolute best case. Under optimal settings that almost no real deployment uses.

Typical top models sit at 5 to 7% fabrication on document Q&A. Not on questions from memory. Not on abstract reasoning. On questions where the answer is sitting right there in the document in front of it.

The median across all 35 models tested was around 25%.

One in four answers fabricated, even with the source material provided.

Then they tested what happens when you extend the context window. Every company selling 128K and 200K context as the hallucination solution needs to read this part carefully.

At 200K context length, every single model in the study exceeded 10% hallucination. The rate nearly tripled compared to optimal shorter contexts.

The longer the context window, the worse the fabrication gets. The exact feature being sold as the fix is making the problem significantly worse.

There is one more finding that does not get talked about enough.

Grounding skill and anti-fabrication skill are completely separate capabilities in these models.

A model that is excellent at finding relevant information in a document is not necessarily good at avoiding making things up. The two skills measure different things and do not reliably correlate. You cannot assume a model that retrieves well also fabricates less.

172 billion tokens. 35 models. The conclusion is the same across all of them.

Handing an LLM the actual document does not solve hallucination. It just changes the shape of it.


metapalooka.life 🚀 (💫,💫) retweeted

To me, this scene has always embodied cyberpunk as opposed to sci-fi. It's the future, but instead of utopia, you get a more technologically advanced way of being poor.

Retro Anime @retro_anime

🍜



metapalooka.life 🚀 (💫,💫) retweeted

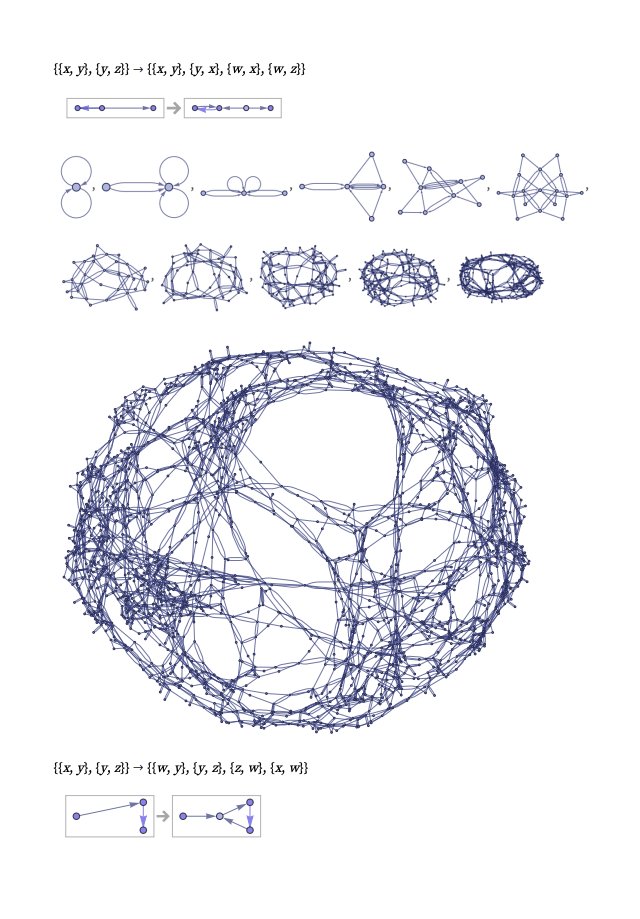

This is so beautiful. And so important to study. This phenomenon (emergent coherence) is central to much of life, and most people don't even imagine it could happen.

Interesting STEM @InterestingSTEM

They capture the exact moment when a developing heart shifts from silence to its first beat. There is no “switch”: many cells gradually become active and, upon crossing a critical threshold, the entire tissue suddenly synchronizes.


metapalooka.life 🚀 (💫,💫) retweeted

actually, that's the only right way to live.

But as Machiavelli said: Make mistakes of ambition and not mistakes of sloth. Develop the strength to do bold things, not the strength to suffer.

🗿 @jhonte_

Living just in case things get better


metapalooka.life 🚀 (💫,💫) retweeted

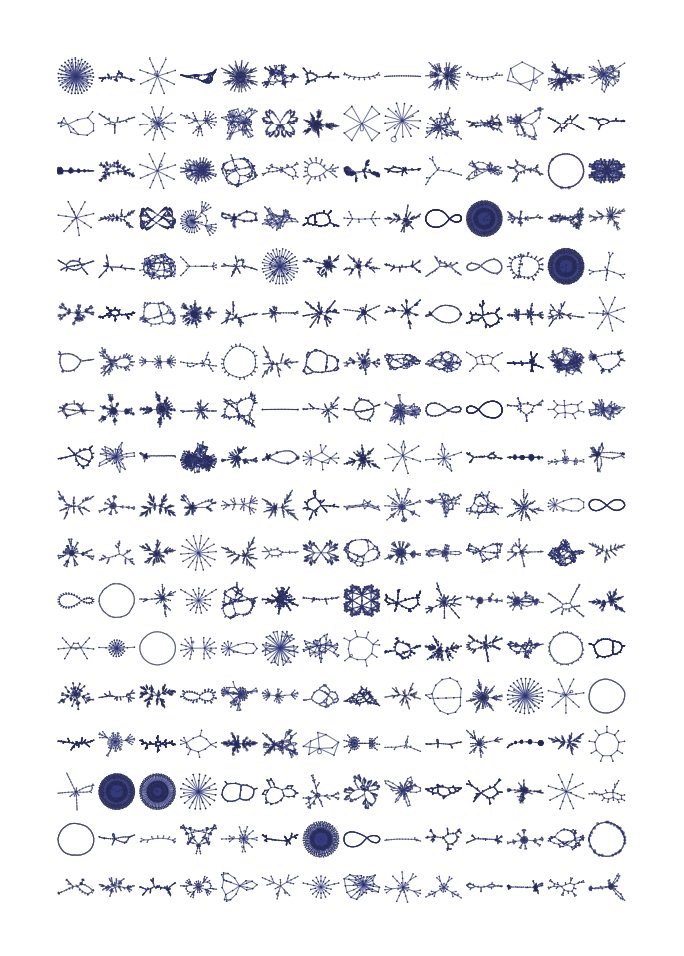

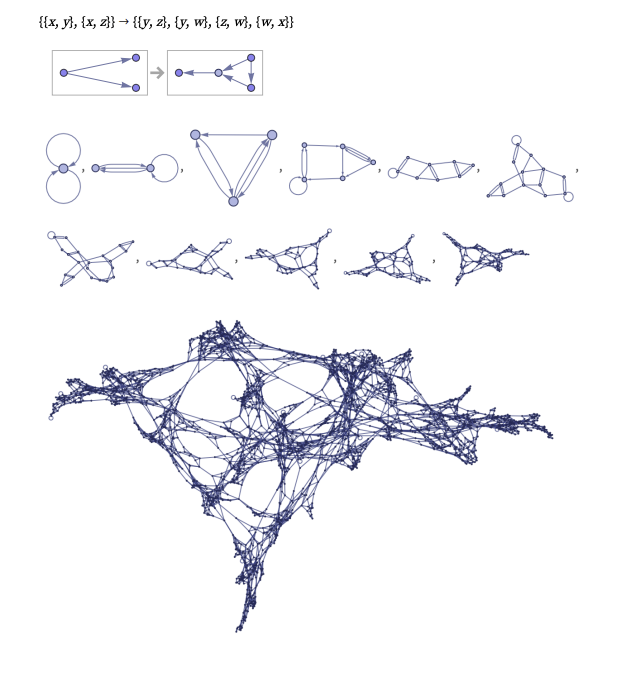

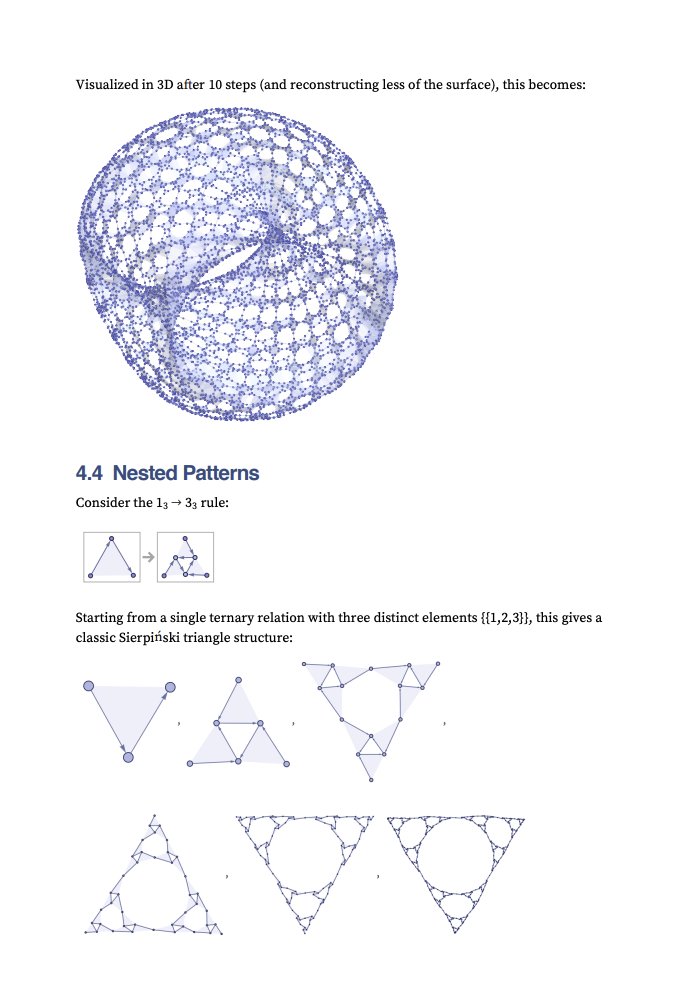

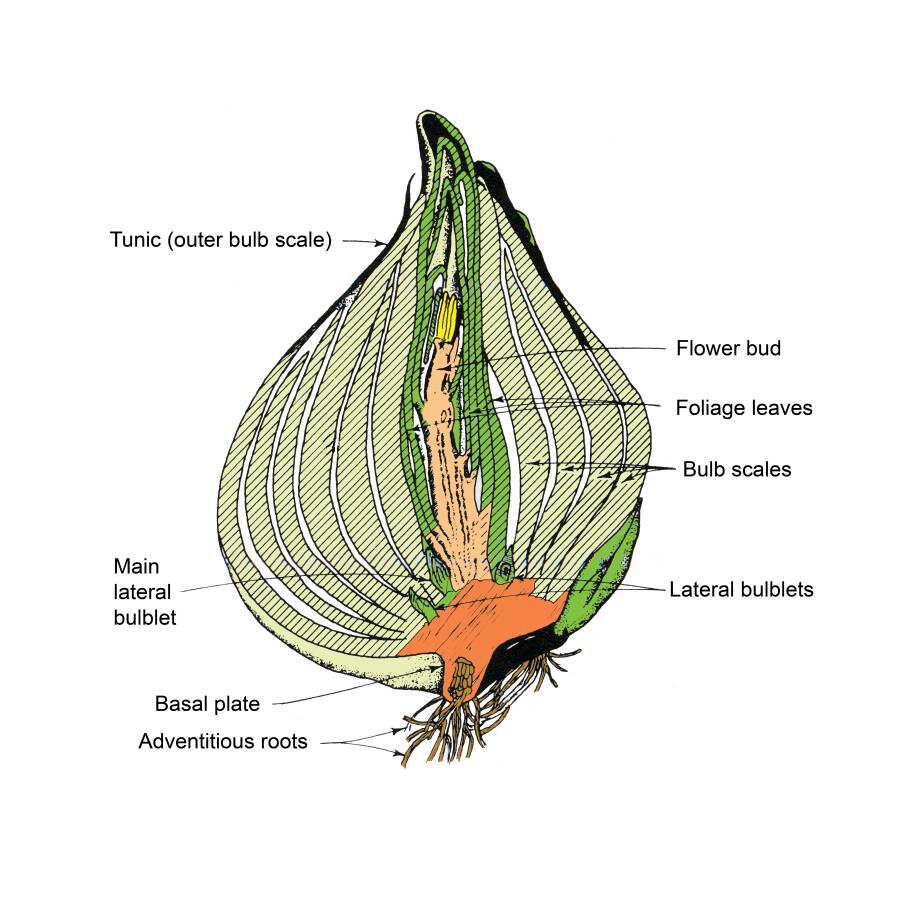

"The miracle of creation is that there are not many patterns, but one pattern repeated over and over again."

In myth, life rises from the underworld through division and separation. Spring bulbs do the same: the flower is sustained by life stored underground.

#FolkloreSunday

