Metriqual

@metriqual

The Rust-based AI gateway. Less than 4ms overhead. Zero GC pauses.

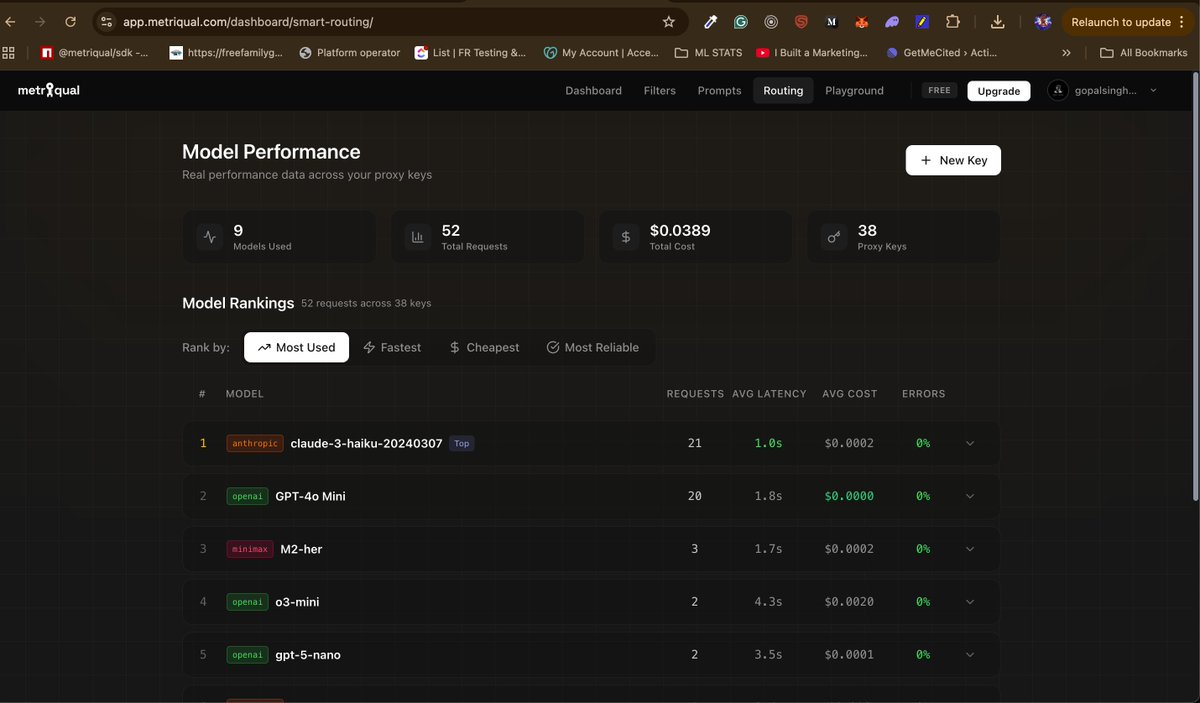

Hello there, people. We recently cut a client's LLM costs from $40K to $16K/month. One SDK change. Zero refactoring.

The problem you face is that your app talks directly to OpenAI/Anthropic. There is no intelligent routing → no fallbacks when providers go down → no PII protection → and definitely no cost control.

So we built the middleware layer that should exist. You just have to `import metriqual` and create the client with `client = metriqual.OpenAI(api_key="your-key")`.

The same interface, but now with:

1. Intelligent routing (cost/latency optimized)
2. Automatic failovers between providers
3. PII detection & redaction
4. Request caching
5. Prompt versioning without code changes
6. Sub-millisecond Rust infrastructure

If you're spending $5K+/month on LLMs, we'll show you where 40-60% is leaking. Isn't that great?

Demo: metriqual.com
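
To make the routing and failover idea concrete, here is a minimal sketch in plain Python. It is not Metriqual's implementation or SDK: `Provider`, `route_with_failover`, and the stub providers are hypothetical names invented for illustration, and the cost figures are made up. It assumes "cost-optimized routing" means trying the cheapest provider first and falling back to the next one on any error.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Provider:
    """Hypothetical provider record; not part of any real SDK."""
    name: str
    cost_per_1k_tokens: float  # illustrative number only
    call: Callable[[str], str]


class AllProvidersFailed(Exception):
    """Raised when every provider in the list has errored."""


def route_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers from cheapest to most expensive; fall back on any error."""
    errors = []
    for provider in sorted(providers, key=lambda p: p.cost_per_1k_tokens):
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:  # a real gateway would be more selective
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub providers standing in for real LLM APIs (assumption: no network calls).
def down_provider(prompt: str) -> str:
    raise ConnectionError("provider down")


def healthy_provider(prompt: str) -> str:
    return f"echo: {prompt}"


providers = [
    Provider("cheap-but-down", 0.5, down_provider),
    Provider("pricier-but-up", 1.0, healthy_provider),
]
name, reply = route_with_failover("hello", providers)
```

In this sketch the cheaper provider is tried first, fails, and the request transparently lands on the second one, which is the behavior the list above describes as automatic failover.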

Our latest video breaks down why your AI backend is fragile: hardcoded prompts that require redeploys, custom PII filtering services that add latency, and usage limits written as `if` statements in your app code.

Metriqual moves all of this to the gateway layer. System prompts become infrastructure you update in a dashboard. Compliance is a checkbox. Cost controls reject requests at the edge before they hit your wallet.

Built in Rust because this has to run at network speed. Sub-4ms overhead, zero-copy streaming, memory safety by design. You can't clone this with a library. You'd have to replicate the entire infrastructure layer.

Your app becomes language-agnostic. Switch from Python to Go to Rust and your guardrails stay identical. That's the architecture shift.

Video featuring: @theunblunt and @intermurphi
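
As a rough illustration of what moving guardrails to the gateway layer could look like, here is a small Python sketch. It is an assumption-laden toy, not Metriqual's code: the `Gateway` class, `redact_pii`, `BudgetExceeded`, and the email-only regex are all hypothetical, and real PII detection covers far more than email addresses. The point is only that the budget check, redaction, and system prompt live in one place outside the app.

```python
import re

# Deliberately simple email pattern; real PII detection is much broader.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_pii(text: str) -> str:
    """Replace email addresses with a placeholder before forwarding."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


class BudgetExceeded(Exception):
    """Raised when a request would push spend past the configured budget."""


class Gateway:
    """Hypothetical gateway holding the prompt, budget, and redaction rules."""

    def __init__(self, monthly_budget_usd: float, system_prompt: str):
        self.monthly_budget_usd = monthly_budget_usd
        self.system_prompt = system_prompt  # updated here, not in app code
        self.spent_usd = 0.0

    def handle(self, user_text: str, estimated_cost_usd: float) -> dict:
        # Reject before the request ever reaches a paid provider.
        if self.spent_usd + estimated_cost_usd > self.monthly_budget_usd:
            raise BudgetExceeded("request rejected: budget would be exceeded")
        self.spent_usd += estimated_cost_usd
        return {"system": self.system_prompt, "user": redact_pii(user_text)}


gateway = Gateway(monthly_budget_usd=1.0, system_prompt="You are helpful. (v1)")
first = gateway.handle("mail me at a@b.com", estimated_cost_usd=0.6)

# Changing the prompt is a gateway-side update; callers stay untouched.
gateway.system_prompt = "You are concise. (v2)"
```

Because the app only ever sends `user_text`, swapping the app's language would not change any of these rules, which is the language-agnostic point made in the post.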

What is Metriqual? Check out the video. And please, please be kind, it's our first video. 😖