M Ganesh Kumar

500 posts


@mgkumar138

Neuro-AI Postdoc @ MPI Biological Cybernetics. Previously @ Harvard, A*STAR & NUS. 🇸🇬

Tübingen, Germany

Joined September 2012

964 Following, 299 Followers

M Ganesh Kumar retweeted

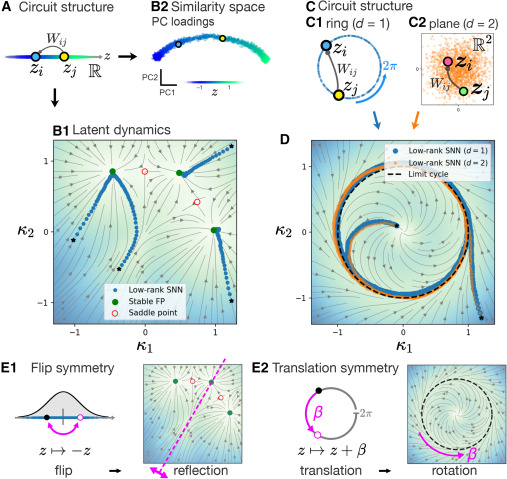

Neural manifolds do not map one-to-one onto circuit architecture. This study shows that distinct recurrent circuits can generate similar low-dimensional dynamics, yet still leave identifiable constraints on neural selectivity and population activity.

cell.com/neuron/fulltex…


M Ganesh Kumar retweeted

Running a research lab is about leading people.

I created a free toolkit on the human side of lab leadership—covering hiring, mentoring, conflict navigation, lab culture, and performance management.

Open access: doi.org/10.5281/zenodo…

#TeamScience #AcademicLeadership


M Ganesh Kumar retweeted

New discovery! Different anesthetics all seem to push the brain into unconsciousness in the same way: Activity is destabilized and dominated by slow waves. This could block signals.

cell.com/cell-reports/f…

#neuroscience @MIT_Picower @mitbrainandcog @mcgovernmit @ScienceMIT


M Ganesh Kumar retweeted

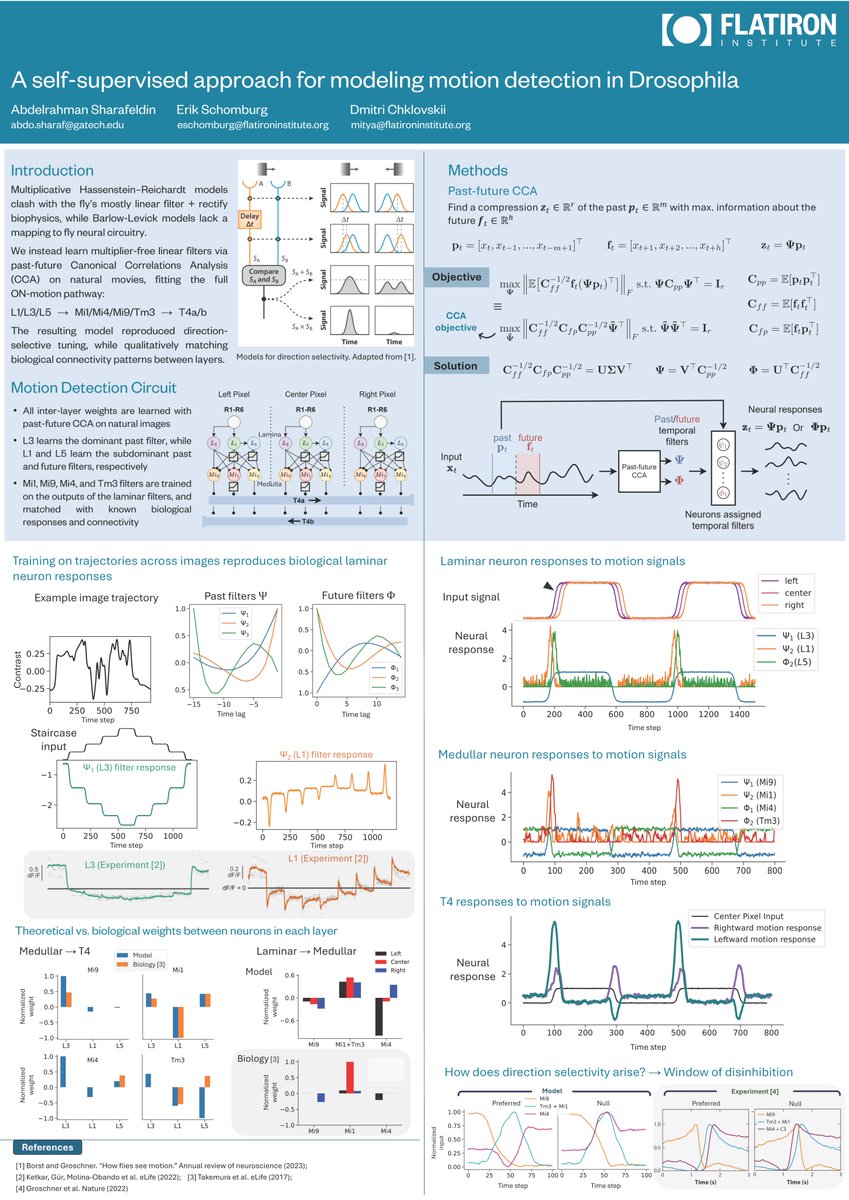

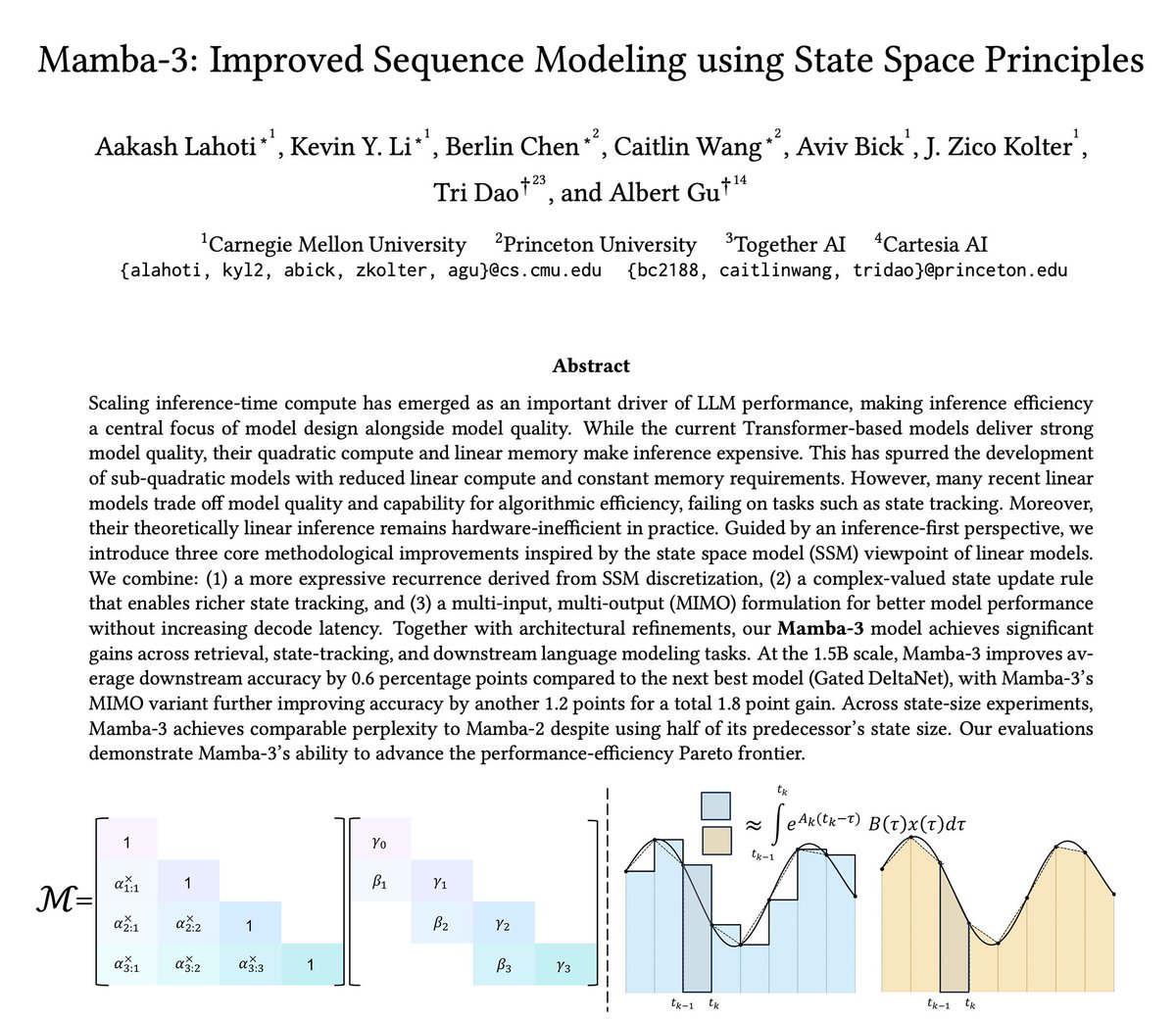

The newest model in the Mamba series is finally here 🐍

Hybrid models have become increasingly popular, raising the importance of designing the next generation of linear models.

We've introduced several SSM-centric ideas to significantly increase Mamba-2's modeling capabilities without compromising on speed. The resulting Mamba-3 model has noticeable performance gains over the most popular previous linear models (such as Mamba-2 and Gated DeltaNet) at all sizes.

This is the first Mamba that was student led: all credit to @aakash_lahoti @kevinyli_ @_berlinchen @caitWW9, and of course @tri_dao!
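For readers new to SSMs, here is a minimal sketch of the generic linear recurrence that state-space models build on. It is a time-invariant, diagonal SSM with hypothetical random parameters, not Mamba-3 or its actual parameterization; the function names are illustrative. It checks that the step-by-step scan and the equivalent causal convolution produce the same output.

```python
import numpy as np

def linear_ssm_scan(x, a, b, c):
    """Recurrent form: h_t = a * h_{t-1} + b * x_t, y_t = c . h_t (diagonal a stored as a vector)."""
    h = np.zeros_like(a)
    ys = []
    for x_t in x:
        h = a * h + b * x_t   # elementwise decay plus input injection
        ys.append(c @ h)      # linear readout of the hidden state
    return np.array(ys)

def linear_ssm_conv(x, a, b, c):
    """Same time-invariant map as a causal convolution with kernel K_k = c . (a**k * b)."""
    T = len(x)
    K = np.array([c @ (a**k * b) for k in range(T)])
    return np.array([sum(K[k] * x[t - k] for k in range(t + 1)) for t in range(T)])

rng = np.random.default_rng(0)
a = rng.uniform(0.5, 0.95, size=4)  # hypothetical stable decay rates
b = rng.normal(size=4)              # hypothetical input weights
c = rng.normal(size=4)              # hypothetical readout weights
x = rng.normal(size=20)             # a random input sequence

y_scan = linear_ssm_scan(x, a, b, c)
y_conv = linear_ssm_conv(x, a, b, c)
```

Selective SSMs in the Mamba line make these parameters depend on the input, which rules out a single fixed convolution kernel and is one reason such models rely on the recurrent scan form.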


M Ganesh Kumar retweeted

Thank you for covering our paper! It is indeed interesting that the hippocampus learns representations the way deep reinforcement learning agents do, using a reward prediction error.

Micah G. Allen@micahgallen

The hippocampus does more than just map space; it actively predicts future rewards by learning state transitions within the world. nature.com/articles/s4158…
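In standard reinforcement learning, the reward prediction error is the temporal-difference error δ = r + γV(s′) − V(s). Below is a minimal TD(0) sketch on a hypothetical five-state linear track, not the model from the paper. The agent always steps right and receives reward 1 on reaching the final state; the function name and all parameters are illustrative assumptions.

```python
import numpy as np

def td0_chain(n_states=5, gamma=0.9, alpha=0.1, episodes=500):
    """TD(0) value learning on a hypothetical deterministic linear track.

    The agent moves one state to the right per step; entering the last
    (terminal) state yields reward 1 and ends the episode.
    """
    V = np.zeros(n_states)
    for _ in range(episodes):
        s = 0
        while s < n_states - 1:
            s_next = s + 1
            r = 1.0 if s_next == n_states - 1 else 0.0
            # The terminal state has value 0 by convention.
            v_next = 0.0 if s_next == n_states - 1 else V[s_next]
            delta = r + gamma * v_next - V[s]  # reward prediction error
            V[s] += alpha * delta              # nudge the estimate toward the target
            s = s_next
    return V

V = td0_chain()
```

After training, each state's value approaches the discounted future reward, γ raised to the number of remaining steps minus one, so states closer to the reward are valued more highly.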


M Ganesh Kumar retweeted

Our work on causal mechanistic interpretability across brains and machines: arxiv.org/abs/2603.06557 to appear at #ICLR26, expertly led by @melandrocyte, @Zaki_Alaoui1, @sunnyliu1220 w/ Steve Baccus

Key idea: there are two ways to understand hidden reps in a neural network:

1) how do inputs activate them?

2) what *causal impact* do they have on output?

We introduce CODEC (COntribution DEComposition) to find sparse codes for all contributions network elements make to the input-output map, combining *both* input activation *and* causal output impact.

In both brains and machines we find:

1) sparser codes for contributions than activations

2) separation into interpretable excitatory/inhibitory effects

3) improved steerability

4) elucidation of causal computations that can't be seen through activations alone

For more details see the excellent thread below

melandrocyte@melandrocyte

Trying to interpret how a neural-network does what it does? Activations tell you if a neuron responded. Contributions tell you if a neuron mattered! New paper from myself, @Zaki_Alaoui1, @sunnyliu1220 , @SuryaGanguli, and Steve Baccus: arxiv.org/abs/2603.06557
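A toy illustration of the activation-versus-contribution distinction, assuming the simplest case of a linear readout `y = w @ h`. Each unit's contribution is taken to be `w[i] * h[i]`, so the contributions sum exactly to the output. This is a hypothetical simplification for intuition, not the CODEC method, which additionally finds sparse codes over contributions; the values and names are made up.

```python
import numpy as np

def contributions(h, w):
    """Per-unit contributions to a linear readout y = w @ h (simplifying assumption)."""
    return w * h

h = np.array([2.0, 0.5, 3.0])   # activations: unit 2 responds most strongly
w = np.array([0.4, -1.0, 0.0])  # readout weights: unit 2 never reaches the output
contrib = contributions(h, w)
y = w @ h
```

Unit 2 has the largest activation yet contributes nothing. The sign of each contribution separates excitatory (positive) from inhibitory (negative) effects on the output.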


M Ganesh Kumar retweeted

The next step for autoresearch is that it has to be asynchronously massively collaborative for agents (think: SETI@home style). The goal is not to emulate a single PhD student, it's to emulate a research community of them.

Current code synchronously grows a single thread of commits in a particular research direction. But the original repo is more of a seed, from which could sprout commits contributed by agents on all kinds of different research directions or for different compute platforms. Git(Hub) is *almost* but not really suited for this. It has a softly built-in assumption of one "master" branch, which temporarily forks off into PRs just to merge back a bit later.

I tried to prototype something super lightweight that could have a flavor of this, e.g. just a Discussion, written by my agent as a summary of its overnight run:

github.com/karpathy/autor…

Alternatively, a PR has the benefit of exact commits:

github.com/karpathy/autor…

but you'd never want to actually merge it... You'd just want to "adopt" and accumulate branches of commits. But even in this lightweight way, you could ask your agent to first read the Discussions/PRs using GitHub CLI for inspiration, and after its research is done, contribute a little "paper" of findings back.

I'm not actually exactly sure what this should look like, but it's a big idea that is more general than just the autoresearch repo specifically. Agents can in principle easily juggle and collaborate on thousands of commits across arbitrary branch structures. Existing abstractions will accumulate stress as intelligence, attention and tenacity cease to be bottlenecks.


M Ganesh Kumar retweeted

🚨BREAKING: Berkeley researchers spent 8 months inside a tech company watching how employees actually use AI.

The promise was simple: AI will save you time. Do less. Work smarter.

The opposite happened.

Workers didn't use AI to finish early and go home. They used it to take on more. More tasks. More projects. More hours. Nobody asked them to. They did it to themselves.

The researchers sat inside the company two days a week for 8 months. They watched 200 employees in real time. They tracked work channels. They conducted 40+ interviews across engineering, product, design, and operations.

Here's what they found. AI made everything feel faster, so people filled every gap. They sent prompts during lunch. Before meetings. Late at night. The natural stopping points in the workday disappeared. People ran multiple AI agents in the background while writing code, drafting documents, and sitting in meetings simultaneously.

It felt like momentum. It felt productive. But when they stepped back, they described feeling stretched, busier, and completely unable to disconnect.

83% said AI increased their workload. Not decreased. Increased.

62% of associates and 61% of entry-level workers reported burnout. Only 38% of executives felt the same strain. The people doing the actual work absorbed the damage while leadership celebrated the productivity numbers.

Then came the trap nobody saw coming. When one person uses AI to take on extra work, everyone else feels like they're falling behind. So the whole team speeds up. Nobody formally raises expectations. But the new pace quietly becomes the default. What AI made possible became what was expected.

The researchers gave it a name: workload creep. It looks like productivity at first. Then it becomes the new baseline. Then it becomes burnout.

AI was supposed to give you your time back. Instead it's eating more of it. And the worst part? You're doing it to yourself. Voluntarily.


M Ganesh Kumar retweeted

Online now: Linking neural manifolds to circuit structure in recurrent networks dlvr.it/TRL6b7


M Ganesh Kumar retweeted

The first time you start a research lab, there’s no manual.

So I made one.

A practical toolkit for postdocs and early-career faculty launching their first lab: hiring, startup planning, collaborations, and building a sustainable research program.

zenodo.org/records/188835…


M Ganesh Kumar retweeted

NEW: #Kempner researchers develop a mean-field theory of task-trained RNNs that bridges random and learned connectivity—and find macaque motor cortex is best captured by an intermediate, task-specific recurrent structure.

Read the blog post 👇

🔗bit.ly/47f3Ldl


M Ganesh Kumar retweeted

I am totally pumped about this new work. "Task-trained RNNs" are a powerful and influential framework in neuroscience, but have lacked a firm theoretical footing. This work provides one, and makes direct contact with the classical theory of random RNNs.

biorxiv.org/content/10.648…
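For context on the "classical theory of random RNNs", here is a minimal sketch of that classical model. Rate units follow dx/dt = −x + J tanh(x), with couplings J_ij drawn i.i.d. from N(0, g²/N). In the large-N limit, activity decays to zero for gain g < 1 and becomes self-sustained and chaotic for g > 1. The network size, time step, duration, and function name below are arbitrary illustrative choices. This is not the task-trained theory from the preprint.

```python
import numpy as np

def simulate_random_rnn(g, n=200, t_max=100.0, dt=0.1, seed=0):
    """Euler-integrate dx/dt = -x + J tanh(x) with J_ij ~ N(0, g^2 / n)."""
    rng = np.random.default_rng(seed)
    J = rng.normal(0.0, g / np.sqrt(n), size=(n, n))  # random coupling matrix
    x = rng.normal(0.0, 1.0, size=n)                  # random initial state
    for _ in range(int(t_max / dt)):
        x = x + dt * (-x + J @ np.tanh(x))
    return x

x_subcritical = simulate_random_rnn(g=0.5)    # activity should decay to ~0
x_supercritical = simulate_random_rnn(g=1.5)  # activity should stay O(1)
```

The task-trained RNNs discussed in the post replace this purely random J with connectivity shaped by learning. The preprint's theory is described as bridging these two cases.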


M Ganesh Kumar retweeted

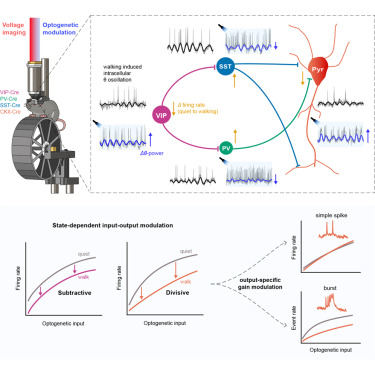

Online now: All-optical electrophysiology reveals behavior-dependent dynamics of excitation and inhibition in the hippocampus dlvr.it/TR4HlZ


M Ganesh Kumar retweeted

The math on this project should humble every AI lab on the planet.

1 cubic millimeter. One-millionth of a human brain. Harvard and Google spent 10 years mapping it. The imaging alone took 326 days. They sliced the tissue into 5,000 wafers each 30 nanometers thick, ran them through a $6 million electron microscope, then needed Google’s ML models to stitch the 3D reconstruction because no human team could process the output.

The result: 57,000 cells, 150 million synapses, 230 millimeters of blood vessels, compressed into 1.4 petabytes of raw data. For context, 1.4 petabytes is roughly 1.4 million gigabytes. From a speck smaller than a grain of rice.

Now scale that. The full human brain is one million times larger. Mapping the whole thing at this resolution would produce approximately 1.4 zettabytes of data. That’s roughly equal to all the data generated on Earth in a single year. The storage alone would cost an estimated $50 billion and require a 140-acre data center, which would make it the largest on the planet.
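The scaling in this post is straightforward unit arithmetic. Below is a quick check that assumes decimal SI units (1 GB = 10^9 bytes, 1 PB = 10^15, 1 ZB = 10^21). The 1.4 PB figure and the million-fold volume ratio are taken from the post itself; the variable names are illustrative.

```python
GB = 10**9   # gigabyte, decimal SI bytes
PB = 10**15  # petabyte
ZB = 10**21  # zettabyte

sample_bytes = 14 * PB // 10        # 1.4 PB for ~1 mm^3, as quoted in the post
sample_gb = sample_bytes // GB      # integer arithmetic avoids float rounding
volume_ratio = 10**6                # whole brain vs. sample volume, as stated in the post
whole_brain_zb = sample_bytes * volume_ratio / ZB
```

This confirms the post's conversions: 1.4 PB is 1.4 million GB, and a million-fold scale-up gives about 1.4 ZB.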

And they found things textbooks don’t contain. One neuron had over 5,000 connection points. Some axons had coiled themselves into tight whorls for completely unknown reasons. Pairs of cell clusters grew in mirror images of each other. Jeff Lichtman, the Harvard lead, said there’s “a chasm between what we already know and what we need to know.”

This is why the next step isn’t a human brain. It’s a mouse hippocampus, 10 cubic millimeters, over the next five years. Because even a mouse brain is 1,000x larger than what they just mapped, and the full mouse connectome is the proof of concept before anyone attempts the human one.

We’re building AI systems that loosely mimic neural networks while still unable to fully read the wiring diagram of a single cubic millimeter of the thing we’re trying to imitate. The original is 1.4 petabytes per millionth of its volume. Every AI model on Earth fits in a fraction of that.

The brain runs on 20 watts and fits in your skull. The data center required to merely describe one-millionth of it would span 140 acres.

All day Astronomy@forallcurious

🚨: Scientists mapped 1 mm³ of a human brain ─ less than a grain of rice ─ and a microscopic cosmos appeared.


M Ganesh Kumar retweeted

Cosyne Viewing Parties

Visas, costs, care, & environmental concerns all limit Cosyne attendance. Luckily, the talks are livestreamed; but watching alone is the high road to an aneurysm. So: viewing parties! Gather regionally to watch Cosyne talks! Info: shorturl.at/3DHZX


M Ganesh Kumar retweeted

ICLR 2025 just gave an Outstanding Paper Award to a method that fixes model editing with one line of code 🤯

here's the problem it solves:

llms store facts in their parameters. sometimes those facts are wrong or outdated. "model editing" lets you surgically update specific facts without retraining the whole model.

the standard approach: find which parameters encode the fact (using causal tracing), then nudge those parameters to store the new fact.

works great for one edit. but do it a hundred times in sequence and the model starts forgetting everything else. do it a thousand times and it degenerates into repetitive gibberish.

every edit that inserts new knowledge corrupts old knowledge. you're playing whack-a-mole with the model's memory.

AlphaEdit reframes the problem.

instead of asking "how do we update knowledge with less damage?" the authors ask "how do we make edits mathematically invisible to preserved knowledge?"

the trick: before applying any parameter change, project it onto the null space of the preserved knowledge matrix.

in plain english: find the directions in parameter space where you can move freely without affecting anything the model already knows. only move in those directions.

it's like remodeling one room in a house by only touching walls that aren't load-bearing. the rest of the structure doesn't even know anything changed.
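Here is a minimal NumPy sketch of the null-space projection idea. It is not the authors' implementation. The sketch assumes a single linear layer `W` whose preserved knowledge is summarized by a matrix of key vectors `K0`. Random stand-ins are used here, with fewer keys than input dimensions so that an exact null space exists. `null_space_projector`, the tolerance, and all sizes are hypothetical. Because `P @ K0 = 0`, projecting a raw edit as `delta @ P` leaves `W @ K0` unchanged up to floating-point error.

```python
import numpy as np

def null_space_projector(K0, tol=1e-8):
    """Projector onto the null space of K0 @ K0.T (K0: d_in x n preserved keys)."""
    U, S, _ = np.linalg.svd(K0 @ K0.T)
    # Keep eigenvectors whose eigenvalues are numerically zero.
    U_hat = U[:, S < tol * S.max()]
    return U_hat @ U_hat.T

rng = np.random.default_rng(0)
d_in, d_out, n = 16, 8, 10
W = rng.normal(size=(d_out, d_in))      # hypothetical layer weights
K0 = rng.normal(size=(d_in, n))         # hypothetical preserved-knowledge keys
delta = rng.normal(size=(d_out, d_in))  # an arbitrary raw edit

P = null_space_projector(K0)
delta_proj = delta @ P                  # the "one line": project the edit

before = W @ K0
after = (W + delta_proj) @ K0           # outputs on preserved keys are unchanged
```

In this toy, the projected update is the single added line. When there are many more preserved keys than dimensions, `K0 @ K0.T` is generally full rank and no exact null space exists, so a tolerance on small eigenvalues becomes a real design choice.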

the results from Fang et al. across GPT2-XL, GPT-J, and LLaMA3-8B:

> average 36.7% improvement over existing editing methods

> works as a plug-and-play addition to MEMIT, ROME, and others

> models maintain 98.48% of general capabilities after 3,000 sequential edits

> prevents the gibberish collapse that kills other methods at scale

and the implementation is literally one line of code added to existing pipelines.

what i find genuinely elegant: the paper proves mathematically that output remains unchanged when querying preserved knowledge. this isn't "it works better in practice." it's "we can prove it doesn't touch what it shouldn't."

the honest caveats:

largest model tested was LLaMA3-8B. nobody's shown this works at 70B+ scale yet. a follow-up paper (AlphaEdit+) flagged brittleness when new knowledge directly conflicts with preserved knowledge, which is exactly the hardest case in production. and the whole approach assumes causal tracing correctly identifies where facts live, which isn't always clean.

but as a core insight, this is the kind of work that deserves the award. not because it solves everything. because it changes the question.

the era of "edit and pray" for llm knowledge updates might actually be ending.


M Ganesh Kumar retweeted

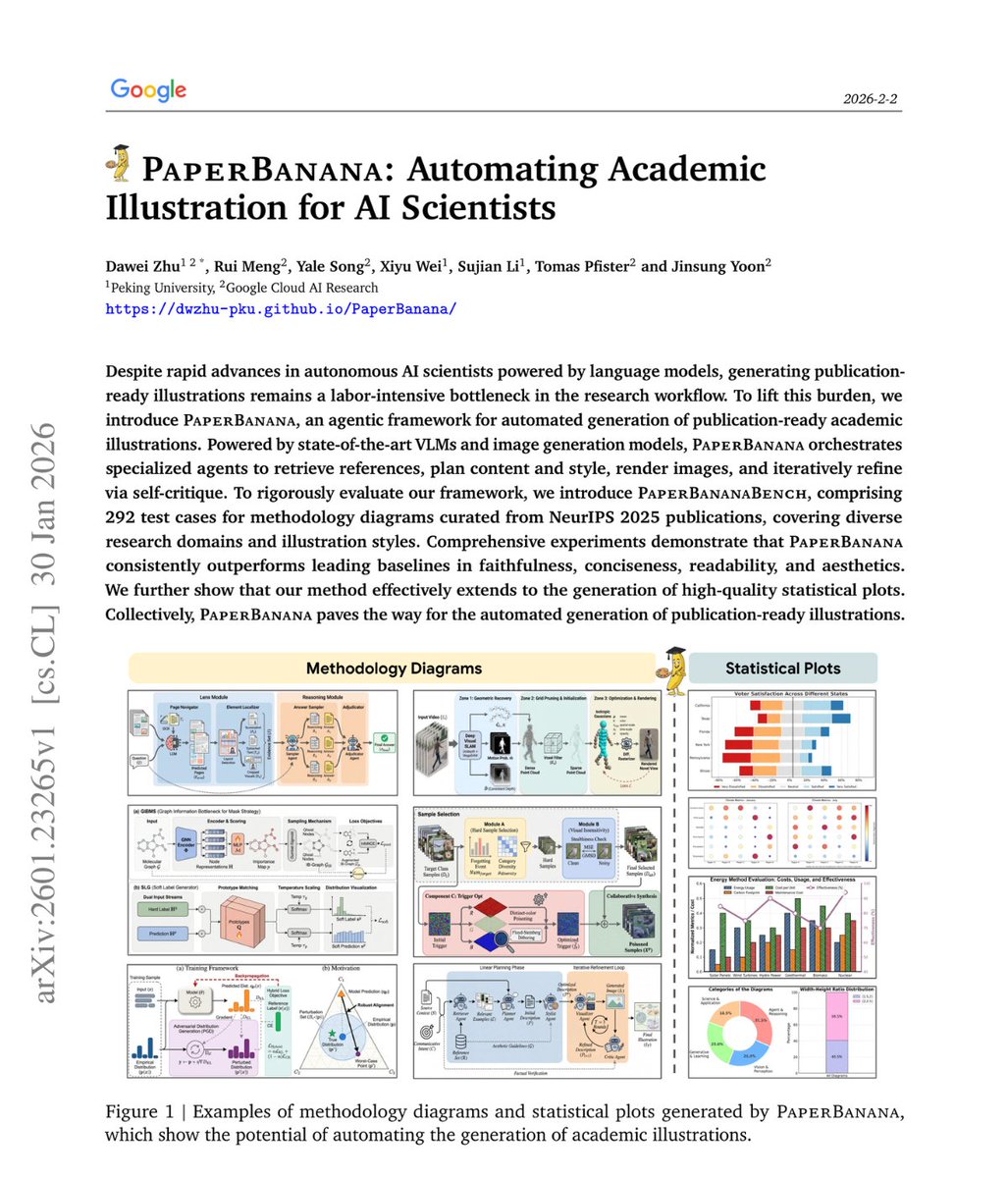

🚨BREAKING: Google just dropped another hit!

It's called PaperBanana and it generates publication-ready academic illustrations from just your methodology text.

No Figma. No manual design. No illustration skills needed.

Here's how it works:

A team of AI agents runs behind the scenes

→ One finds good diagram examples

→ One plans the structure

→ One styles the layout

→ One generates the image

→ One critiques and improves it

Here's the wildest part:

Random reference examples work nearly as well as perfectly matched ones. What matters is showing the model what good diagrams look like, not finding the topically perfect reference.

In blind evaluations, humans preferred PaperBanana outputs 75% of the time.

This is the recursion we've been waiting for: AI systems that can fully document themselves visually.

Waitlist’s open; link in the first comment.


M Ganesh Kumar retweeted