mian shao

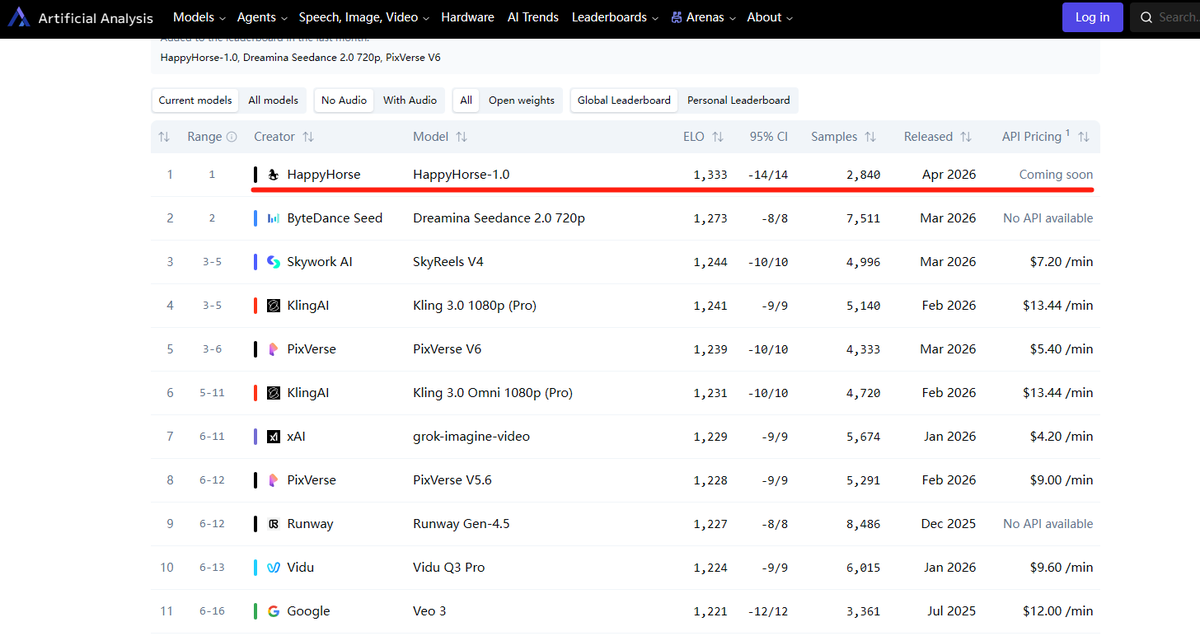

How to evaluate DeepSeek’s new "Expert Mode"? 🧠 Zhihu contributor 一只Zenon 👇:

Quick take: This doesn’t appear to be the full DeepSeek Next model; it looks more like a new checkpoint built on Lite, with Expert Mode likely tweaking decoding params rather than using separate model weights.

Test impressions so far:
• Clean, lightweight tone ✨ No cringey generic chatbot phrases like "your xxx is very xxx" or overly empathetic filler lines.
• Far less Gemini+ distillation vibe; more natural, less verbose logic.
• Strong creative writing with proper structure & flow.

Two standout breakthroughs:

1️⃣ Precise length control & deep prompt
• It generated a 10,000-word report in seconds per my prompt.
• Previous limits: DeepSeek ~2k words, Gemini 3.1 Pro ~4k, Qwen 3.6 Plus ~5k.
• The result is clearer & more usable than Qwen 3.6 Plus Deep Research’s 10+ minute output, even when told to focus on software over hardware.

2️⃣ Genuine logical reflection ability
• Earlier "stubborn" models (GPT-5, Kimi K2+) just defended hallucinations or rambled.
• DeepSeek Expert Mode actually reflects on the prompt’s logic itself, a skill previously exclusive to Claude Opus 4.6+. It solves classic real-world reasoning tests (e.g., "I live 50m from a car wash: walk or drive?") that stumped most Chinese models, with clean, grounded reasoning.

💡 This kind of real-scenario inference requires advanced post-training & RL, a huge gap for most domestic LLMs. Whatever technique (eNgram, mHC, NSA or custom RL) enabled this jump is a big win.

🔗 Full Response (CN): zhihu.com/question/20250…

#DeepSeek #Qwen #AI #Gemini #LLM #GenAI #AIGC #Tech