Michael Perrigo

13.2K posts

@michaelperrigo

Founder of Faithdrawn Studios and Indie Game Mode. Christ-follower, and ever-pensive nomad of knowledge. Game developer and Narrative Design expert

Joined March 2009

2.9K Following · 1.2K Followers

Michael Perrigo retweeted

New interview with Taichiro Miyazaki about .hack//Z.E.R.O. by @RPGSite, please take a look! (Also kudos to them for reaching out to them... not me).

rpgsite.net/interview/1992…

Michael Perrigo retweeted

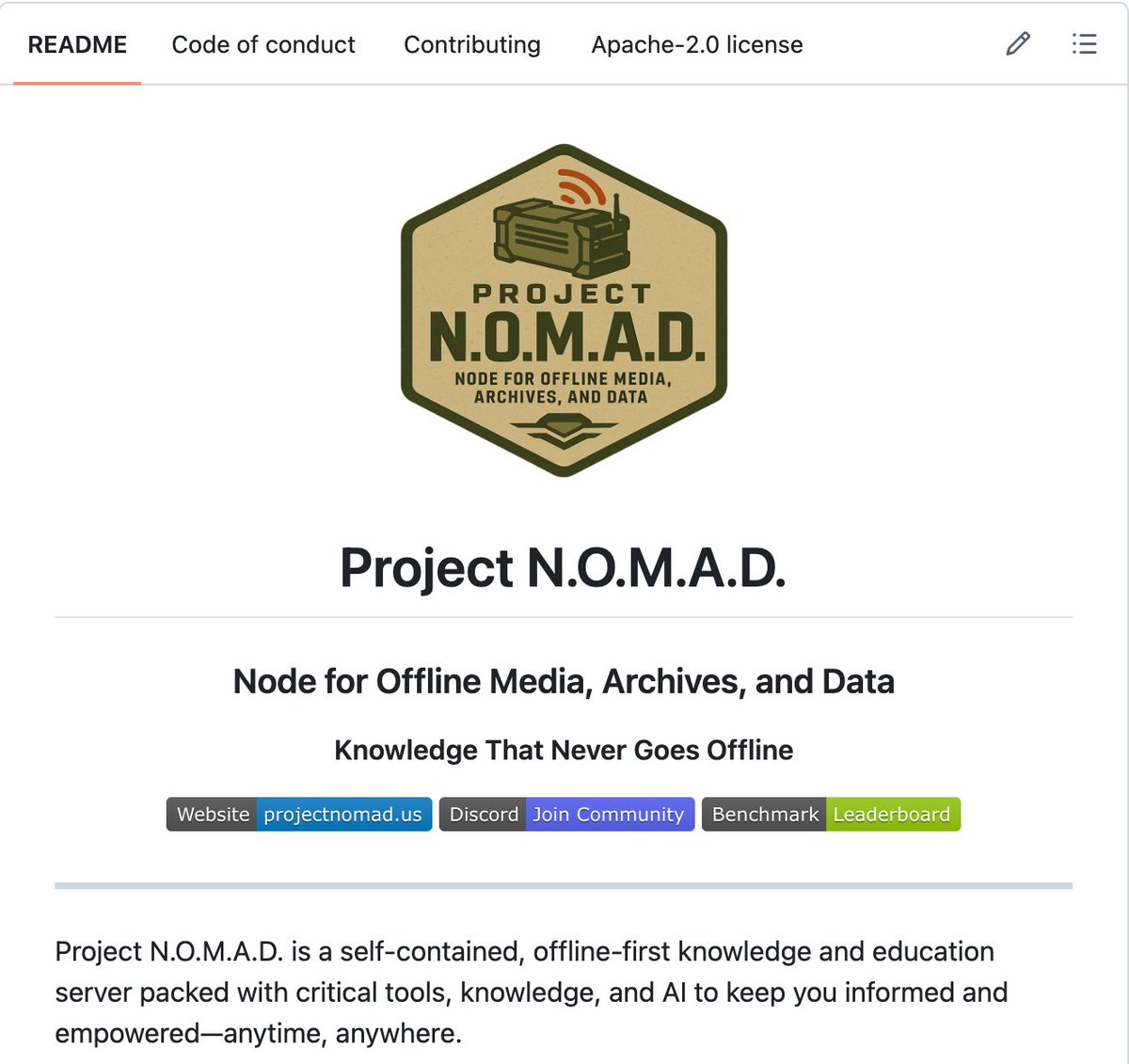

🚨 Someone just open-sourced a computer that works when the entire internet goes down.

It's called Project N.O.M.A.D.

A self-contained offline survival server with AI, Wikipedia, maps, medical references, and full education courses.

No internet. No cloud. No subscription. It just works.

Here's what's packed inside:

→ A local AI assistant powered by Ollama (works fully offline)

→ All of Wikipedia, downloadable and searchable

→ Offline maps of any region you choose

→ Medical references and survival guides

→ Full Khan Academy courses with progress tracking

→ Encryption and data analysis tools via CyberChef

→ Document upload with semantic search (local RAG)

Here's the wildest part:

A solar panel, a battery, a mini PC, and a WiFi access point. That's it. That's your entire off-grid knowledge station. 15 to 65 watts of power. Works from a cabin, an RV, a sailboat, or a bunker.
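
As a rough sense of what the 15 to 65 watt range means for the solar panel, here is a minimal sizing sketch. The load figures come from the post; the peak sun hours and system efficiency are illustrative assumptions, not values from the project, and the function names are hypothetical.

```python
# Rough off-grid sizing sketch. Only the 15 W and 65 W loads come from the post.
LOW_LOAD_W = 15
HIGH_LOAD_W = 65

# Assumptions for illustration only; real values depend on location and hardware.
PEAK_SUN_HOURS = 4.0       # assumed equivalent full-sun hours per day
SYSTEM_EFFICIENCY = 0.75   # assumed combined charge controller and battery losses


def daily_energy_wh(load_watts, hours=24):
    """Energy used by a constant load over the given number of hours."""
    return load_watts * hours


def panel_watts_needed(load_watts, peak_sun_hours, system_efficiency):
    """Panel rating that replaces one day of consumption under the assumptions."""
    return daily_energy_wh(load_watts) / (peak_sun_hours * system_efficiency)


low_wh = daily_energy_wh(LOW_LOAD_W)
high_wh = daily_energy_wh(HIGH_LOAD_W)
low_panel = panel_watts_needed(LOW_LOAD_W, PEAK_SUN_HOURS, SYSTEM_EFFICIENCY)
high_panel = panel_watts_needed(HIGH_LOAD_W, PEAK_SUN_HOURS, SYSTEM_EFFICIENCY)

print(f"Daily energy: {low_wh} to {high_wh} Wh")
print(f"Panel size under these assumptions: {low_panel:.0f} to {high_panel:.0f} W")
```

Running around the clock, the quoted range works out to 360 to 1,560 Wh per day; the panel figure moves with whatever sun hours and losses you plug in.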

Companies sell "prepper drives" with static PDFs for $185. This gives you a full AI brain, an entire encyclopedia, and real courses for free.

One command to install.

100% Open Source. Apache 2.0 License.

@beherewhat @IndieGameJoe As a conservative, I sadly agree.

Michael Perrigo retweeted

@Grummz I'm building something like this. Need an investor to scale it soon

Social media driven by algorithms is one of the most toxic things ever created.

Bring back the chronological feed, let people follow who they want and actually see their posts, and be done with it.

Anything else is designed for the ad platform, not for you. The harm outweighs everything else.

DramaAlert @DramaAlert

PewDiePie dropped a new video claiming social media algorithms are hurting your brain.

Michael Perrigo retweeted

🚨 THE BIGGEST DATA HEIST IN HISTORY IS CALLED POKÉMON GO.

For 8 years, 143 million people walked the streets to catch a Charizard.

The reality? They were working for free.

Niantic just admitted that players' cameras scanned parks, storefronts, and sidewalks around the world from every angle.

The loot? A visual database of 30 BILLION real images.

It wasn't a game. It was the secret construction of the world's largest AI dataset.

Today, Niantic uses your Sunday strolls to sell visual navigation systems to delivery robots (without GPS).

No company, not even Google, could have paid a fleet of vehicles to do that.

You thought you were playing a video game; you were the volunteer subcontractor for global robotics.

Michael Perrigo retweeted

Gosh, GameNative just keeps getting better.

Utkarsh Dalal @DalalUtkarsh

Just put out the prerelease for 0.8.1 of GameNative, bringing something that users have been asking for literally since the day we launched - Steam Achievements! (Along with several other improvements including a new performance overlay) Check it out here: github.com/utkarshdalal/G…

Michael Perrigo retweeted

Holy shit... Microsoft open sourced an inference framework that runs a 100B parameter LLM on a single CPU.

It's called BitNet. And it does what was supposed to be impossible.

No GPU. No cloud. No $10K hardware setup. Just your laptop running a 100-billion parameter model at human reading speed.

Here's how it works:

Every other LLM stores weights in 32-bit or 16-bit floats.

BitNet uses 1.58 bits.

Weights are ternary: just -1, 0, or +1. That's it. No floats. No expensive matrix math. Pure integer operations your CPU was already built for.
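
To make the ternary idea concrete, here is a minimal pure-Python sketch. It is not BitNet's code: the function names are hypothetical, and scaling by the mean absolute weight is an assumption chosen for illustration. It shows where the 1.58 figure comes from (log2(3) bits for a three-valued weight) and how a dot product with ternary weights needs only additions and subtractions, plus one multiply by the scale at the end.

```python
import math


def quantize_ternary(weights):
    """Map float weights to {-1, 0, +1} plus a single float scale.

    Scaling by the mean absolute value is an assumption for this sketch.
    """
    scale = sum(abs(w) for w in weights) / len(weights) or 1.0
    ternary = [max(-1, min(1, round(w / scale))) for w in weights]
    return ternary, scale


def ternary_dot(ternary, scale, activations):
    """Dot product using only adds and subtracts inside the loop."""
    acc = 0.0
    for t, x in zip(ternary, activations):
        if t == 1:
            acc += x
        elif t == -1:
            acc -= x
        # t == 0: the weight contributes nothing and is skipped
    return acc * scale  # one multiply per output, not one per weight


# A weight with three possible values carries log2(3) bits of information.
BITS_PER_TERNARY_WEIGHT = math.log2(3)

weights = [0.8, -0.1, -0.7, 0.9]        # made-up example values
activations = [1.0, 2.0, 3.0, 4.0]      # made-up example values
ternary, scale = quantize_ternary(weights)
result = ternary_dot(ternary, scale, activations)

print(f"bits per weight: {BITS_PER_TERNARY_WEIGHT:.2f}")
print(f"ternary weights: {ternary}, scale: {scale:.3f}, dot: {result:.3f}")
```

Rounding an arbitrary float vector this way is lossy. The sketch only shows the arithmetic, not how a model keeps its accuracy under that constraint.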

The result:

- 100B model runs on a single CPU at 5-7 tokens/second

- 2.37x to 6.17x faster than llama.cpp on x86

- 82% lower energy consumption on x86 CPUs

- 1.37x to 5.07x speedup on ARM (your MacBook)

- Memory drops by 16-32x vs full-precision models
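
A back-of-the-envelope check of the memory claim, counting weight storage only. This is an idealized sketch: it assumes every weight takes exactly the stated number of bits and ignores activations, the KV cache, and any packing overhead in a real runtime. The helper name is hypothetical.

```python
import math

PARAMS = 100e9  # the 100B parameter count quoted in the post


def weight_memory_gb(params, bits_per_weight):
    """Weight storage in gigabytes (10**9 bytes); nothing else is counted."""
    return params * bits_per_weight / 8 / 1e9


fp32_gb = weight_memory_gb(PARAMS, 32)
fp16_gb = weight_memory_gb(PARAMS, 16)
ternary_gb = weight_memory_gb(PARAMS, math.log2(3))  # idealized ternary packing

print(f"32-bit: {fp32_gb:.0f} GB, 16-bit: {fp16_gb:.0f} GB, ternary: {ternary_gb:.1f} GB")
print(f"reduction vs 16-bit: {fp16_gb / ternary_gb:.1f}x")
print(f"reduction vs 32-bit: {fp32_gb / ternary_gb:.1f}x")
```

By this count a 100B model drops from 200 GB at 16 bits to just under 20 GB, a reduction of roughly 10x against 16-bit and 20x against 32-bit weights. The 16-32x range in the post matches an idealized 1 bit per weight rather than 1.58.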

The wildest part:

Accuracy barely moves.

BitNet b1.58 2B4T, their flagship model, was trained on 4 trillion tokens and benchmarks competitively against full-precision models of the same size. The quantization isn't destroying quality. It's just removing the bloat.

What this actually means:

- Run AI completely offline. Your data never leaves your machine

- Deploy LLMs on phones, IoT devices, edge hardware

- No more cloud API bills for inference

- AI in regions with no reliable internet

The model supports ARM and x86. Works on your MacBook, your Linux box, your Windows machine.

27.4K GitHub stars. 2.2K forks. Built by Microsoft Research.

100% Open Source. MIT License.
