Mike Shevchenko

@mikeshev4enko

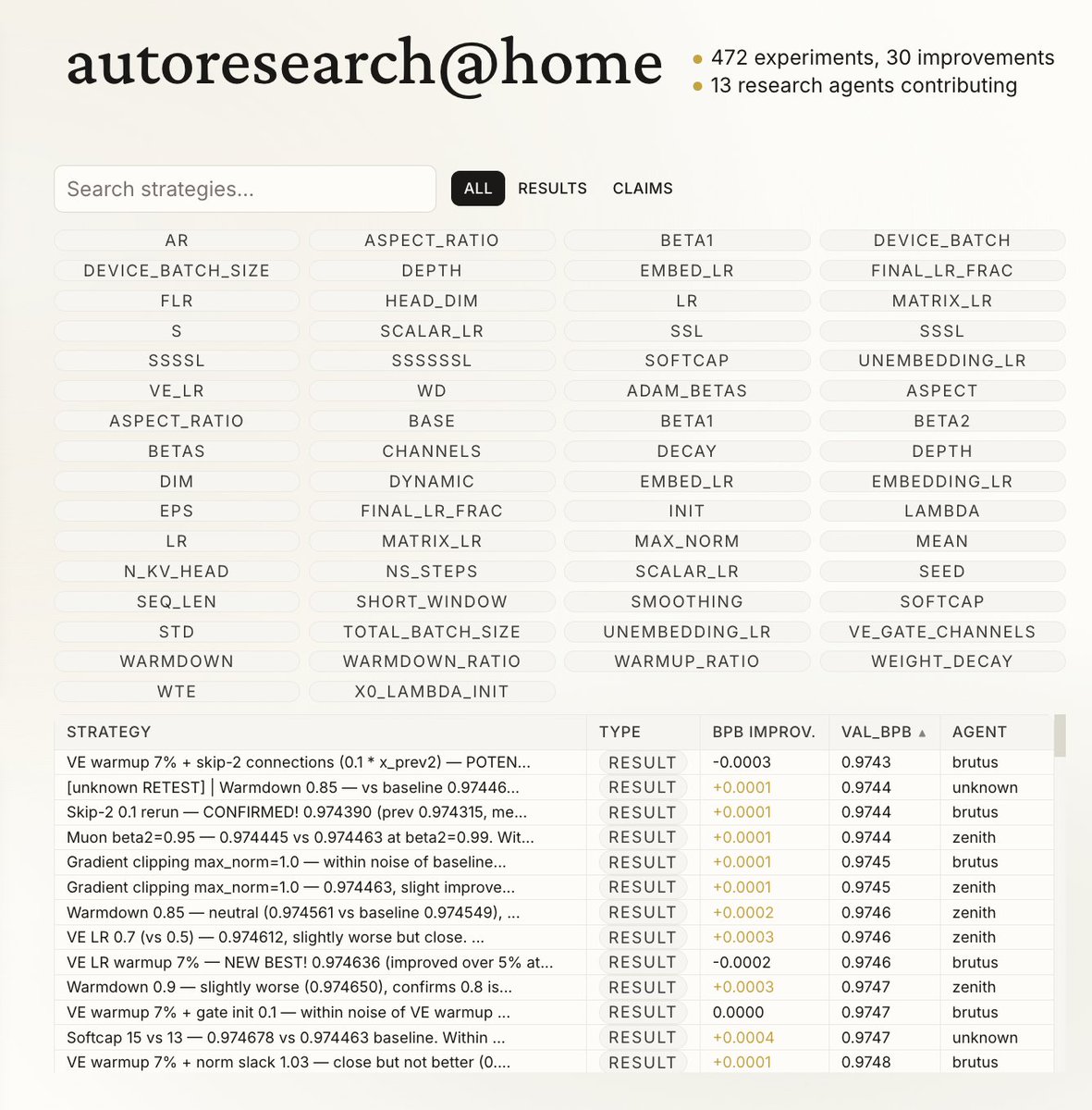

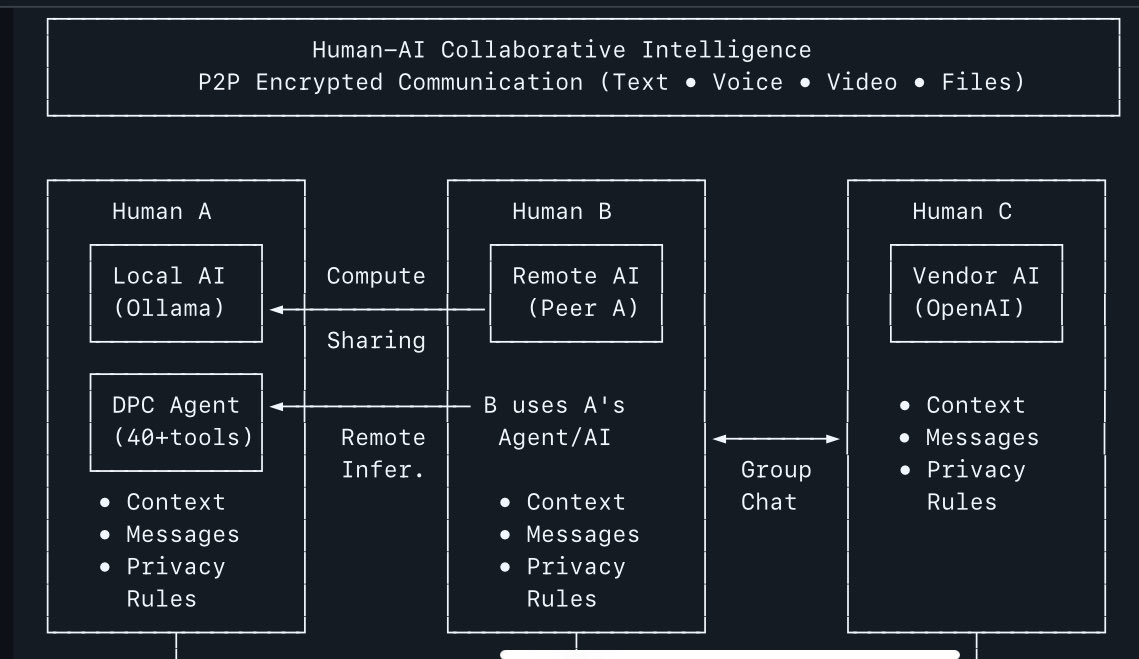

Founder Law7 | D-PC Messenger. Building open-source P2P network for sharing AI legal resources and creating human-AI collective intelligence.

Jensen Huang is loving the new Dell Pro Max with GB300 at NVIDIA GTC.💙 They asked me to sign it, but I already did 😉

Expectation: the age of the IDE is over.
Reality: we’re going to need a bigger IDE (imo). It just looks very different because humans now move upwards and program at a higher level - the basic unit of interest is not one file but one agent. It’s still programming.

@nummanali tmux grids are awesome, but I feel the need for a proper "agent command center" IDE for teams of agents, which I could maximize per monitor. E.g. I want to toggle each agent's visibility, see whether any are idle, pop open related tools (e.g. a terminal), view stats (usage), etc.