Alex Mincu

1.1K posts

@mincua

Founder & Technoking, Mount Software (startup incubator). Mission: help 1 billion people use AI. Writes about AI, startups, and entrepreneurship.

Bucharest, Romania · Joined March 2009

871 Following · 1.7K Followers

stumbled on this at 2am. no regrets.

graphify turns a messy folder of code, docs, papers, screenshots, and diagrams into a queryable knowledge graph your coding agent can actually use.

the clever bit: it does ast extraction for code, uses vision for docs/images, and keeps the output persistent, so you stop rereading the same pile of files every session.

feels especially useful for big repos where grep stops being a strategy.

16.9k stars in 6 days. very practical.
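A rough idea of what "ast extraction" buys you, as a minimal sketch: parse Python source with the standard-library `ast` module, record which top-level functions call which, and serialize the result to JSON so it can be reloaded next session instead of rereading the files. This is not graphify's code or output format. `extract_call_graph`, `graph_to_json`, and `graph_from_json` are hypothetical names, and only plain `name()` calls are tracked.

```python
import ast
import json
from collections import defaultdict


def extract_call_graph(source: str) -> dict[str, list[str]]:
    """Map each top-level function to the sorted names it calls directly (hypothetical helper)."""
    tree = ast.parse(source)
    graph: dict[str, set[str]] = defaultdict(set)
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # Touching the key registers the function even if it calls nothing.
            callees = graph[node.name]
            # ast.walk also visits nested defs, so their calls count toward the outer function.
            for inner in ast.walk(node):
                # Only plain-name calls like foo(); attribute calls like obj.foo() are skipped.
                if isinstance(inner, ast.Call) and isinstance(inner.func, ast.Name):
                    callees.add(inner.func.id)
    return {name: sorted(callees) for name, callees in graph.items()}


def graph_to_json(graph: dict[str, list[str]]) -> str:
    """Serialize the graph so it can be written to disk and reused across sessions."""
    return json.dumps(graph, indent=2, sort_keys=True)


def graph_from_json(text: str) -> dict[str, list[str]]:
    """Load a previously saved graph instead of re-parsing the source."""
    return json.loads(text)


if __name__ == "__main__":
    sample = (
        "def load(path):\n"
        "    return open(path).read()\n"
        "\n"
        "def parse(path):\n"
        "    return load(path).split()\n"
    )
    print(extract_call_graph(sample))
```

A real tool would add more node types (classes, imports, docs, images) and edges, but the persistence idea is the same: parse once, query the saved graph afterwards.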

here's the part nobody's talking about:

most ai agents can code, browse, and call apis now. but the moment you need a real word doc, excel sheet, or powerpoint deck, the workflow usually falls apart.

officecli fixes that.

single binary. no office install. agents can read, create, edit, and inspect docx/xlsx/pptx directly from the command line.

the useful bit: this turns office files into something agents can actually operate on, not just attach and pray.

1.3k+ stars in 20 days. very practical.

github.com/iOfficeAI/Offi…

Alex Mincu retweeted

BREAKING: @ClawSuite is a full desktop UI for OpenClaw. Here's what you get:

The OpenClaw tool you need is @ClawSuite 🤯

💻 Chat: streaming responses, image attachments, and more

🏆 Conductor: decompose & build things with agents

🤖 Agent View: see every sub-agent running

🖥️ Terminal: built-in terminal, no tab switching

📲 Cron Manager: create, edit, and monitor crons

⚙️ Skills Browser: browse and manage installed skills

🛜 Dashboard: usage tracking, session history, etc.

One gateway connection. Hosted locally. 👇🏻

🔗 github.com/outsourc-e/cla…

Alex Mincu retweeted

okay, who made this and why didn't i know sooner?

clawcompany is an open-source ai company os.

you spin up a full team instead of one assistant: ceo, cto, cfo, researcher, analyst, engineer.

what makes it interesting is the shape of it:

4-layer memory, built-in role templates, local-first state, multi-model routing, and a dashboard that feels more like operating a small company than chatting with a bot.

786 stars in 19 days. early, but the product instinct is very sharp.

github.com/Claw-Company/c…

Alex Mincu retweeted

🚨Science nerds are going to lose their minds.

@Kaiwritescodes just open sourced a framework that predicts how your brain responds to any text, audio, or video by simulating cortical fMRI activity with 30% more accuracy than Meta's own model.

No fMRI scanner. No neuroscience PhD. No million-dollar lab.

It's called NForge.

Here's what this thing actually does:

→ Feed it any combination of text, audio, or video and it predicts cortical surface activity across ~20,484 brain vertices

→ Extracts deep features via LLaMA 3.2, V-JEPA2, and Wav2Vec-BERT simultaneously

→ Generates ROI attention maps showing exactly which brain regions fire hardest at which moments

→ Runs real-time streaming predictions from live feature streams, with no need to pre-load the full clip

→ Breaks down exactly how much text vs audio vs video drove each prediction with per-vertex modality attribution scores

→ Adapts to entirely new subjects with just a few calibration scans, no full retraining required

Here's the wildest part:

It's built on Meta's TRIBE v2 foundation but adds 6 major capabilities Meta never shipped: cross-subject generalization, streaming inference, modality attribution, torch.compile support, full test coverage, and a professional src/ package layout.

You literally point this at a movie clip and it tells you which parts of the human cortex light up, broken down by what your eyes, ears, and language centers each contributed.

That sentence shouldn't be real in 2026. But here we are.

100% Open Source. pip install nforge.

(Link in the comments)

Alex Mincu retweeted

🚨 Screen Studio charges $89 for this. Someone open sourced the entire thing for free.

It's called OpenScreen. 8,400+ GitHub stars.

You record your screen. It automatically transforms it into a polished, professional demo video.

Auto-zoom into clicks. Smooth cursor animations. Motion blur. Custom backgrounds with wallpapers, gradients, and shadows. Webcam overlays. Annotations. Timeline editing. Export in any aspect ratio.

The exact workflow that Screen Studio sells for $89 and Loom sells as a subscription. Free. No watermarks. No accounts. No subscriptions.

Here's what you get out of the box:

→ Full screen or window capture with system audio and mic

→ Automatic zoom that follows your cursor and clicks

→ Manual zoom with customizable depth and timing

→ Smooth motion blur on pan and zoom transitions

→ Animated cursor rendering with motion effects

→ Webcam bubble overlay with drag-and-drop positioning

→ Wallpapers, solid colors, gradients, or custom backgrounds

→ Text and arrow annotations layered over recordings

→ Timeline trimming and variable speed segments

→ Crop, resize, and export in any resolution or aspect ratio

→ Save and reopen projects anytime

Here's the wildest part:

A developer forked it and built an even more advanced version called Recordly. Full cursor animation pipeline. Native macOS and Windows recording. Zoom behavior that mirrors Screen Studio frame-for-frame. Audio tracks. Webcam overlays with zoom-reactive scaling.

Both are free. Both are MIT licensed. Both work on Windows, macOS, and Linux.

Download. Record. Export. Done.

100% Open Source. MIT License.

(Link in the comments)

tired of feeding agents raw html and praying? same. this fixes it.

webclaw is a rust scraper + crawler + mcp server built for llms.

instead of dumping a whole page into context, it extracts the useful bits as clean markdown, keeps links and metadata, and cuts token waste hard.

the part i like: it handles the boring, painful layer first (fetching, extraction, crawling, diffs, even structured extraction), so claude code, codex, or any mcp client gets web access that is actually usable.

333 stars in 21 days. early, but very practical.

github.com/0xMassi/webclaw
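To make "clean markdown instead of raw html" concrete, here is a minimal sketch using only Python's standard-library `html.parser`: it drops boilerplate tags such as `script`, `nav`, and `footer`, keeps headings, paragraphs, list items, and links, and returns the page title as metadata. webclaw itself is written in Rust and does far more; `MarkdownExtractor`, `html_to_markdown`, and the skip list are hypothetical choices for illustration.

```python
import re
from html.parser import HTMLParser

# Hypothetical boilerplate list: content inside these tags is dropped entirely.
SKIP = {"script", "style", "noscript", "nav", "header", "footer", "aside"}
HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class MarkdownExtractor(HTMLParser):
    """Collects readable text as markdown fragments plus the <title> (a sketch, not webclaw)."""

    def __init__(self) -> None:
        super().__init__(convert_charrefs=True)
        self.parts: list[str] = []
        self.title = ""
        self._skip = 0  # nesting depth inside SKIP tags
        self._in_title = False
        self._href = None  # href of the <a> currently open, if any
        self._link_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP:
            self._skip += 1
        elif self._skip:
            return
        elif tag == "title":
            self._in_title = True
        elif tag in HEADINGS:
            self.parts.append("\n\n" + "#" * int(tag[1]) + " ")
        elif tag in ("p", "div"):
            self.parts.append("\n\n")
        elif tag == "li":
            self.parts.append("\n- ")
        elif tag == "a":
            self._href = dict(attrs).get("href")
            self._link_text = []

    def handle_endtag(self, tag):
        if tag in SKIP:
            self._skip = max(0, self._skip - 1)
        elif self._skip:
            return
        elif tag == "title":
            self._in_title = False
        elif tag == "a" and self._href is not None:
            text = " ".join("".join(self._link_text).split())
            self.parts.append(f"[{text}]({self._href})")
            self._href = None

    def handle_data(self, data):
        if self._skip:
            return
        if self._in_title:
            self.title += data.strip()
        elif self._href is not None:
            self._link_text.append(data)
        else:
            # Collapse source-formatting whitespace but keep a single space between words.
            self.parts.append(re.sub(r"\s+", " ", data))


def html_to_markdown(html: str) -> tuple[str, str]:
    """Return (title, markdown body) for an HTML page."""
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    lines = [line.strip() for line in "".join(parser.parts).split("\n")]
    out: list[str] = []
    for line in lines:
        # Keep non-empty lines and at most one blank line between blocks.
        if line or (out and out[-1]):
            out.append(line)
    return parser.title, "\n".join(out).strip()
```

Even this crude filter shows where the token savings come from: navigation, scripts, and footers never reach the model, while the links survive in a form the model can follow.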

wait, this is actually good.

crabtalk is an agent daemon that keeps the core small and pushes heavier capabilities into separate components on your PATH.

built-in tools for shell, memory, delegation, plus mcp integration and markdown skills.

the useful bit: it feels less like another bloated agent framework and more like unix for ai agents. start the daemon, attach, extend only what you need.

386 stars in 18 days. early, but very practical.

github.com/crabtalk/crabt…

everyone's debating better agents. nobody's talking about how much token budget gets burned just describing tools.

mcp2cli turns any mcp server, openapi spec, or graphql endpoint into a cli at runtime. no codegen. no hand-written wrappers.

the useful bit: instead of shoving giant tool schemas into context every turn, it lets the model call real commands directly. less token waste. cleaner interfaces. more portable tooling.

1.7k+ stars in 19 days. very practical.

github.com/knowsuchagency…
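The "cli at runtime, no codegen" idea can be sketched with Python's `argparse`: read tool definitions shaped like MCP tools (`name`, `description`, and a JSON Schema `inputSchema`) and build one subcommand per tool on the fly, turning schema properties into flags. This covers only the schema-to-command step, not talking to a server, and it is not mcp2cli's implementation. The `search_issues` tool, the `toolcli` program name, and the helper names are hypothetical.

```python
import argparse

# Hypothetical tool definition, shaped like an MCP tool listing (name, description, inputSchema).
TOOLS = [
    {
        "name": "search_issues",
        "description": "Search issues in a tracker",
        "inputSchema": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "search text"},
                "limit": {"type": "integer", "description": "max results"},
                "include_closed": {"type": "boolean", "description": "also return closed issues"},
            },
            "required": ["query"],
        },
    },
]

# JSON Schema scalar types mapped to argparse converters; anything else is passed as a raw string.
JSON_TO_PY = {"string": str, "integer": int, "number": float}


def build_cli(tools: list[dict]) -> argparse.ArgumentParser:
    """Create one subcommand per tool, with one --flag per schema property."""
    parser = argparse.ArgumentParser(prog="toolcli")
    sub = parser.add_subparsers(dest="tool", required=True)
    for tool in tools:
        cmd = sub.add_parser(tool["name"], help=tool.get("description", ""))
        schema = tool.get("inputSchema", {})
        required = set(schema.get("required", []))
        for prop, spec in schema.get("properties", {}).items():
            flag = "--" + prop.replace("_", "-")
            if spec.get("type") == "boolean":
                cmd.add_argument(flag, dest=prop, action="store_true", help=spec.get("description"))
            else:
                cmd.add_argument(
                    flag,
                    dest=prop,
                    type=JSON_TO_PY.get(spec.get("type"), str),
                    required=prop in required,
                    help=spec.get("description"),
                )
    return parser


def parse_call(tools: list[dict], argv: list[str]) -> tuple[str, dict]:
    """Turn a command line into (tool name, arguments dict) ready to send as a tool call."""
    args = vars(build_cli(tools).parse_args(argv))
    name = args.pop("tool")
    # Drop options the user did not pass (None, or an unset boolean flag) so the arguments stay minimal.
    return name, {k: v for k, v in args.items() if v is not None and v is not False}
```

The design point is that the schema is read when the command runs, so the model can ask for `--help` on the one tool it needs instead of carrying every schema in context each turn.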

quietly the best codebase context tool i've seen in a while:

context+ is trying to solve the part coding agents still suck at: understanding a large codebase without brute-forcing half the repo into context.

it builds a feature graph over your codebase with ast parsing, semantic search, blast-radius tracing, file skeletons, restore points, and even a memory graph on top.

the useful bit: this feels less like another agent wrapper and more like giving claude code or codex a real map before they start cutting wires.

1.6k+ stars in 29 days. very practical.

github.com/ForLoopCodes/c…
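"Blast-radius tracing" usually means answering one question: if this file changes, what else could break? A minimal sketch, assuming you already have an import graph (each module mapped to the modules it imports), is a breadth-first search over the reversed edges. The module names and the `blast_radius` helper are hypothetical; this is not context+'s implementation.

```python
from collections import deque


def blast_radius(imports: dict[str, set[str]], changed: str) -> set[str]:
    """Return every module that directly or transitively imports `changed`."""
    # Reverse the edges: for each module, who imports it?
    importers: dict[str, set[str]] = {}
    for module, deps in imports.items():
        for dep in deps:
            importers.setdefault(dep, set()).add(module)
    affected: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in importers.get(current, ()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    # In an import cycle the changed module can reach itself; it is not part of its own radius.
    affected.discard(changed)
    return affected
```

For example, with `api` importing `db` and `auth`, `auth` importing `db`, and `cli` importing `api`, a change to `db` touches `api`, `auth`, and `cli`, which is exactly the set an agent should re-read before editing.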

remember when "just give it more context" sounded like a strategy? not anymore.

lean-ctx is a smart fix for one of the dumbest costs in ai coding workflows: wasting tokens on noisy cli output and repeated file reads.

it compresses shell output before the model sees it, adds cached/mode-aware reads over mcp, and plugs straight into claude code, codex, cursor, gemini cli, and more.

the part i like: it's not another agent framework. it's infrastructure for making the agents you already use less wasteful.

217 stars in 3 days. very practical.

github.com/yvgude/lean-ctx
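One plausible shape for "compresses shell output before the model sees it", sketched in Python: strip ANSI color codes, fold runs of identical lines into a count, and keep only the head and tail of very long output. This is an assumed approach for illustration, not lean-ctx's actual algorithm, and the `head`/`tail` defaults are arbitrary.

```python
import re

# Matches ANSI escape sequences such as color codes (e.g. "\x1b[32m").
ANSI = re.compile(r"\x1b\[[0-9;]*[A-Za-z]")


def collapse_repeats(lines: list[str]) -> list[str]:
    """Replace runs of identical consecutive lines with one copy plus a count."""
    out: list[str] = []
    i = 0
    while i < len(lines):
        j = i
        while j + 1 < len(lines) and lines[j + 1] == lines[i]:
            j += 1
        out.append(lines[i])
        if j > i:
            out.append(f"[previous line repeated {j - i} more times]")
        i = j + 1
    return out


def compress_output(text: str, head: int = 20, tail: int = 20) -> str:
    """Strip colors, fold repeats, and keep only the first/last lines of long output."""
    lines = collapse_repeats([ANSI.sub("", line).rstrip() for line in text.splitlines()])
    if len(lines) > head + tail:
        omitted = len(lines) - head - tail
        # Slice by index rather than lines[-tail:], which would return everything when tail == 0.
        lines = lines[:head] + [f"[... {omitted} lines omitted ...]"] + lines[len(lines) - tail:]
    return "\n".join(lines)
```

Build logs and test runners are where this pays off most: hundreds of identical progress lines shrink to two, and the error at the end survives.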

Alex Mincu retweeted

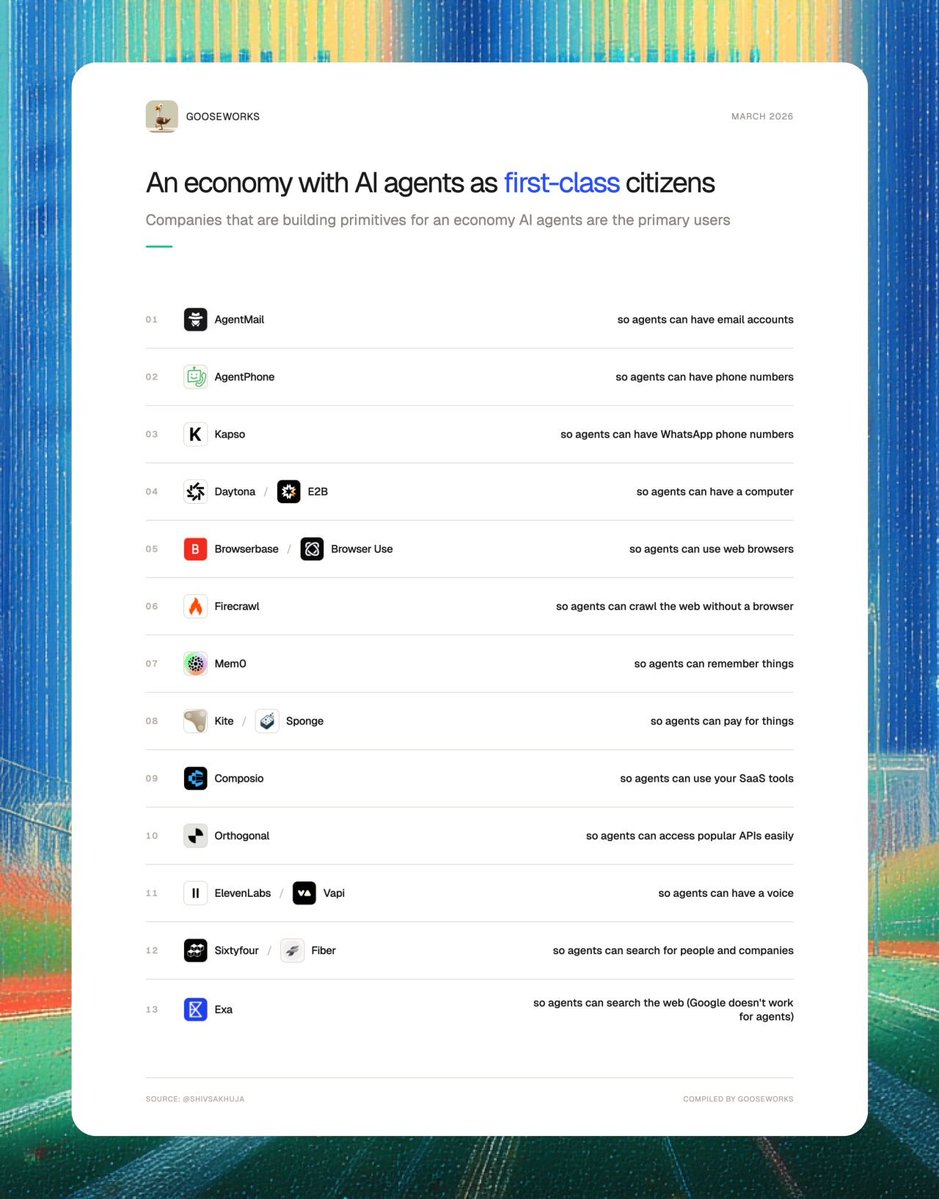

Lots of companies are now building primitives for an economy where AI agents are the primary users instead of humans.

They're betting on an economy of AI coworkers.

1. AgentMail (@agentmail): so agents can have email accounts

2. AgentPhone (@tryagentphone): so agents can have phone numbers

3. Kapso (@andresmatte): so agents can have WhatsApp phone numbers

4. Daytona (@daytonaio) / E2B (@e2b): so agents can have their own computers

5. Browserbase (@browserbase) / Browser Use (@browser_use) / Hyperbrowser (@hyperbrowser): so agents can use web browsers

6. Firecrawl (@firecrawl): so agents can crawl the web without a browser

7. Mem0 (@mem0ai): so agents can remember things

8. Kite (@GoKiteAI) / Sponge (@PayspongeLabs): so agents can pay for things

9. Composio (@composio): so agents can use your SaaS tools

10. Orthogonal (@orthogonal_sh): so agents can access APIs easily

11. ElevenLabs (@ElevenLabs) / Vapi (@Vapi_AI): so agents can have a voice

12. Sixtyfour (@sixtyfourai): so agents can search for people and companies

13. Exa (@ExaAILabs): so agents can search the web (Google doesn’t work for agents)

If you stitch all of these together, you get a digital coworker that looks more human than AI.

Alex Mincu retweeted

everyone's building agent workflows. almost nobody is fixing the part where the agent is confidently wrong.

lucid is an mcp layer that gives agents live docs, package versions, api refs, and fact-checking instead of pure training-data vibes.

the useful bit: it can auto-route the right lookup based on intent, so your coding agent stops hallucinating outdated libs and deprecated endpoints.

302 stars in 16 days. very pointed idea.

github.com/get-Lucid/Lucid

tired of writing one-off wrappers every time an agent needs gmail, drive, or calendar access? same. this fixes it.

gws turns the whole google workspace surface into one cli with structured json output.

the clever bit: it reads google's discovery service at runtime, so when google adds endpoints, the command surface updates with it instead of waiting for someone to hand-maintain a wrapper.

40+ agent skills included. 22k+ stars in 22 days. very practical.

github.com/googleworkspac…
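Google's Discovery documents describe each API as nested `resources`, each holding `methods` that carry an `httpMethod`, a `path`, and `parameters`. A minimal sketch of deriving a command surface from one at runtime is below. The document is an abbreviated, illustrative excerpt hardcoded instead of fetched, and `walk_methods` and `build_commands` are hypothetical helpers, not how gws is implemented.

```python
from typing import Iterator

# Abbreviated, illustrative excerpt in the shape of a Discovery document (not fetched, not complete).
GMAIL_EXCERPT = {
    "name": "gmail",
    "version": "v1",
    "resources": {
        "users": {
            "methods": {
                "getProfile": {
                    "httpMethod": "GET",
                    "path": "gmail/v1/users/{userId}/profile",
                    "parameters": {"userId": {"type": "string", "location": "path"}},
                }
            },
            "resources": {
                "messages": {
                    "methods": {
                        "list": {
                            "httpMethod": "GET",
                            "path": "gmail/v1/users/{userId}/messages",
                            "parameters": {
                                "userId": {"type": "string", "location": "path"},
                                "maxResults": {"type": "integer", "location": "query"},
                            },
                        }
                    }
                }
            },
        }
    },
}


def walk_methods(resources: dict, prefix: tuple[str, ...] = ()) -> Iterator[tuple[tuple[str, ...], dict]]:
    """Yield (command words, method spec) for every method, recursing into nested resources."""
    for res_name, res in sorted(resources.items()):
        words = prefix + (res_name,)
        for method_name, method in sorted(res.get("methods", {}).items()):
            yield words + (method_name,), method
        yield from walk_methods(res.get("resources", {}), words)


def build_commands(discovery: dict) -> dict[str, dict]:
    """Map 'resource [subresource] method' to the HTTP details needed to call it."""
    commands = {}
    for words, method in walk_methods(discovery.get("resources", {})):
        commands[" ".join(words)] = {
            "http": method.get("httpMethod", "GET"),
            "path": method.get("path", ""),
            "params": sorted(method.get("parameters", {})),
        }
    return commands
```

Because the commands are derived from the document rather than written by hand, a newly published method shows up the next time the document is read, which is the property the post highlights.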

Alex Mincu retweeted

Introducing the Agent Virtual Machine (AVM)

Think V8 for agents.

AI agents are currently running on your computer with no unified security, no resource limits, and no visibility into what data they're sending out. Every agent framework builds its own security model, its own sandboxing, its own permission system. You configure each one separately. You audit each one separately. You hope you didn't miss anything in any of them.

The AVM changes this.

It's a single runtime daemon (avmd) that sits between every agent framework and your operating system. Install it once, configure one policy file, and every agent on your machine runs inside it, regardless of which framework built it. The AVM enforces security (91-pattern injection scanner, tool/file/network ACLs, approval prompts), protects your privacy (classifies every outbound byte for PII, credentials, and financial data, then blocks or alerts in real time), and governs resources (you say "50% CPU, 4GB RAM" and the AVM fair-shares it across all agents, halting any that exceed their budget). One config. One audit command. One kill switch.
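The "fair-share, then halt over budget" behavior can be illustrated with two small pieces: a max-min fair split of a system-wide cap (agents asking for less than an equal share get what they ask for, and the leftover is split among the rest) and a per-agent gas meter that refuses work once its budget is spent. Max-min fairness is a stand-in chosen for illustration; the post does not say which algorithm the AVM uses, and `fair_share`, `GasMeter`, and `OutOfGas` are hypothetical names.

```python
class OutOfGas(Exception):
    """Raised when an agent tries to spend more gas than it has left."""


class GasMeter:
    """Per-agent budget: every operation is charged, and work stops when the budget is spent."""

    def __init__(self, budget: int) -> None:
        self.remaining = budget

    def charge(self, cost: int) -> None:
        if cost > self.remaining:
            # Halt instead of letting the agent overrun; the remaining balance is left untouched.
            raise OutOfGas(f"needs {cost}, has {self.remaining}")
        self.remaining -= cost


def fair_share(capacity: float, demands: dict[str, float]) -> dict[str, float]:
    """Max-min fair split of `capacity` (e.g. GB of RAM) across agents' demands."""
    alloc: dict[str, float] = {}
    pending = dict(demands)
    remaining = capacity
    while pending:
        share = remaining / len(pending)
        # Agents asking for no more than an equal share are fully satisfied.
        satisfied = {agent: d for agent, d in pending.items() if d <= share}
        if not satisfied:
            # Everyone left wants more than an equal share: split what remains evenly.
            for agent in pending:
                alloc[agent] = share
            break
        for agent, d in satisfied.items():
            alloc[agent] = d
            remaining -= d
            del pending[agent]
    return alloc
```

With a 4GB cap and demands of 1, 3, and 3GB, the light agent gets its full 1GB and the other two get 1.5GB each.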

The architectural model is V8 for agents. Chrome, Node.js, and Deno are different products, but they share V8 as their execution engine. Agent frameworks bring the UX. The AVM brings the trust. Where needed, the AVM can also generate zero-knowledge proofs of agent execution via 25 purpose-built opcodes and 6 proof systems, providing the foundational pillar for the agent-to-agent economy.

AVM v0.1.0 - Changelog

- Security gate: 5-layer injection scanner with 91 compiled regex patterns. Every input and output is scanned. Fail-closed: nothing passes without clearing the gate.

- Privacy layer: Classifies all outbound data for PII, credentials, and financial info (27 detection patterns + Luhn validation). Block, ask, warn, or allow per category. Tamper-evident hash-chained log of every egress event.

- Resource governor: User sets system-wide caps (CPU/memory/disk/network). AVM fair-shares across all agents. Gas budget per agent - when gas runs out, execution halts. No agent starves your machine.

- Sandbox execution: Real code execution in isolated process sandboxes (rlimits, env sanitization) or Docker containers (--cap-drop ALL, --network none, --read-only). AVM auto-selects the tier - agents never choose their own sandbox.

- Approval flow: Dangerous operations (file writes, shell commands, network requests) trigger interactive approval prompts. 5-minute timeout auto-denies. Every decision logged.

- CLI dashboard: hyperspace-avm top shows all running agents, resource usage, gas budgets, security events, and privacy stats in one live-updating screen.

- Node.js SDK: Zero-dependency hyperspace/avm package. AVM.tryConnect() provides graceful fallback: if avmd isn't running, the agent framework uses its own execution path. OpenClaw adapter example included.

- One config for all agents: ~/.hyperspace/avm-policy.json governs every agent framework on your machine. One file. One audit. One kill switch.
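Two of the privacy-layer terms above have standard meanings that are easy to show. Luhn validation is the checksum that separates plausible card numbers from random digit runs, and a hash-chained log stores with each entry a SHA-256 over the previous entry's hash plus the event, so editing any past entry makes verification fail. A minimal Python sketch; the regex, `EgressLog`, and the event fields are hypothetical and are not the AVM's code or log format.

```python
import hashlib
import json
import re

# Hypothetical candidate pattern: 13-19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(number: str) -> bool:
    """Standard Luhn checksum: double every second digit from the right, sum, check mod 10."""
    digits = [int(c) for c in number if c.isdigit()]
    if not 13 <= len(digits) <= 19:
        return False
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit runs that look like card numbers and pass the Luhn check."""
    return [m.group() for m in CARD_CANDIDATE.finditer(text) if luhn_valid(m.group())]


class EgressLog:
    """Append-only log where each entry commits to the previous one via SHA-256."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev: str, event: dict) -> str:
        body = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev + body).encode()).hexdigest()

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        digest = self._digest(prev, event)
        # Store a copy so later changes to the caller's dict cannot silently alter the log.
        self.entries.append({"prev": prev, "event": dict(event), "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every link; any edited, removed, or reordered entry breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._digest(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

The Luhn check is what keeps a blocker like this from flagging every long number (order IDs, timestamps) as a card, and the chain is what makes the log "tamper-evident" rather than tamper-proof: it cannot stop an edit, only expose one.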