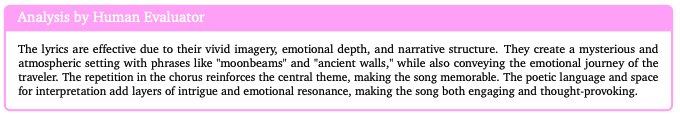

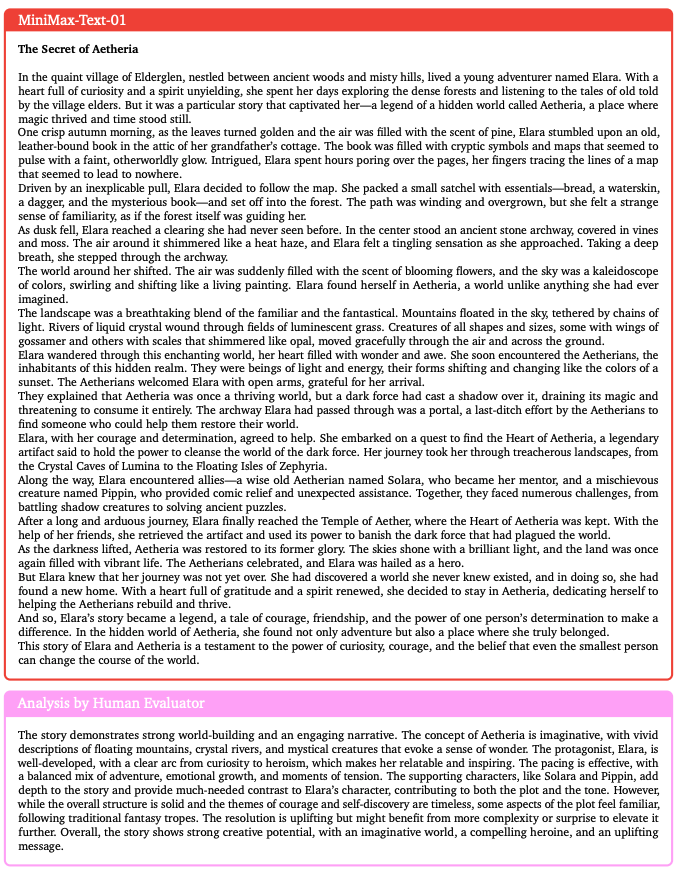

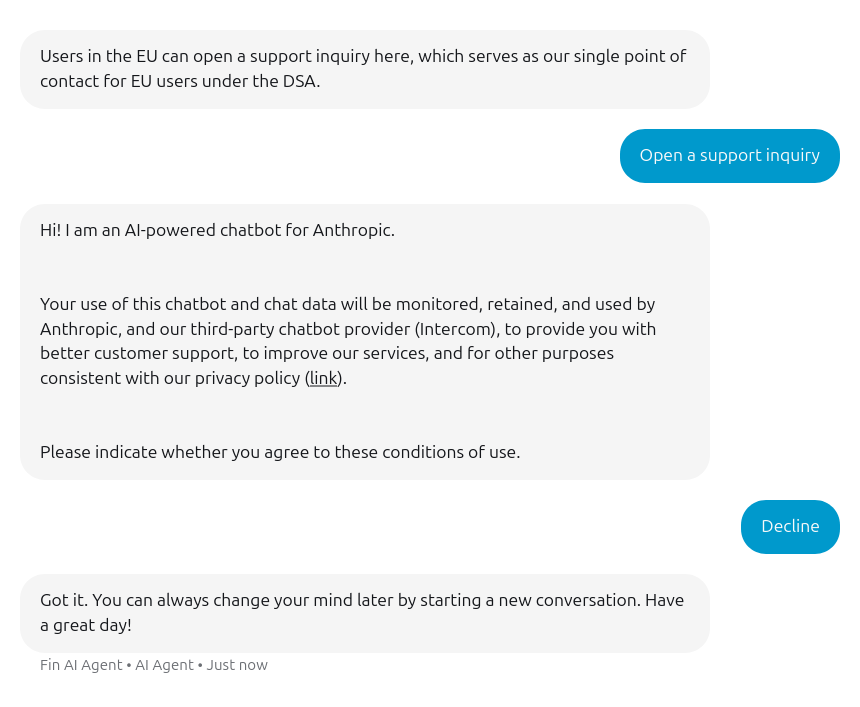

Is this legal? How can I contact the single point of contact for EU users under the DSA for @AnthropicAI without accepting those conditions? support.claude.com/en/articles/11…

Mitar

@mitar_m

Somewhere and everywhere between (#computer) #science, #technology, #nature and (#open) #society. He/him. Elsewhere: @[email protected] @mitar.bsky.social