Mike Larkin

3.3K posts

@mlarkin2012

Low-level developer. Peakbagger. Private Pilot. Founder/CTO Ringcube (acquired by Citrix) and Deepfactor (acquired by Cisco). Building hypervisors and OSes.

@greg16676935420 Because it's customary in Vegas. On a $10 million jackpot you should tip at least $100,000 to the dealers, cocktail waitresses, slot attendants, and anyone else who helped you have a good time.

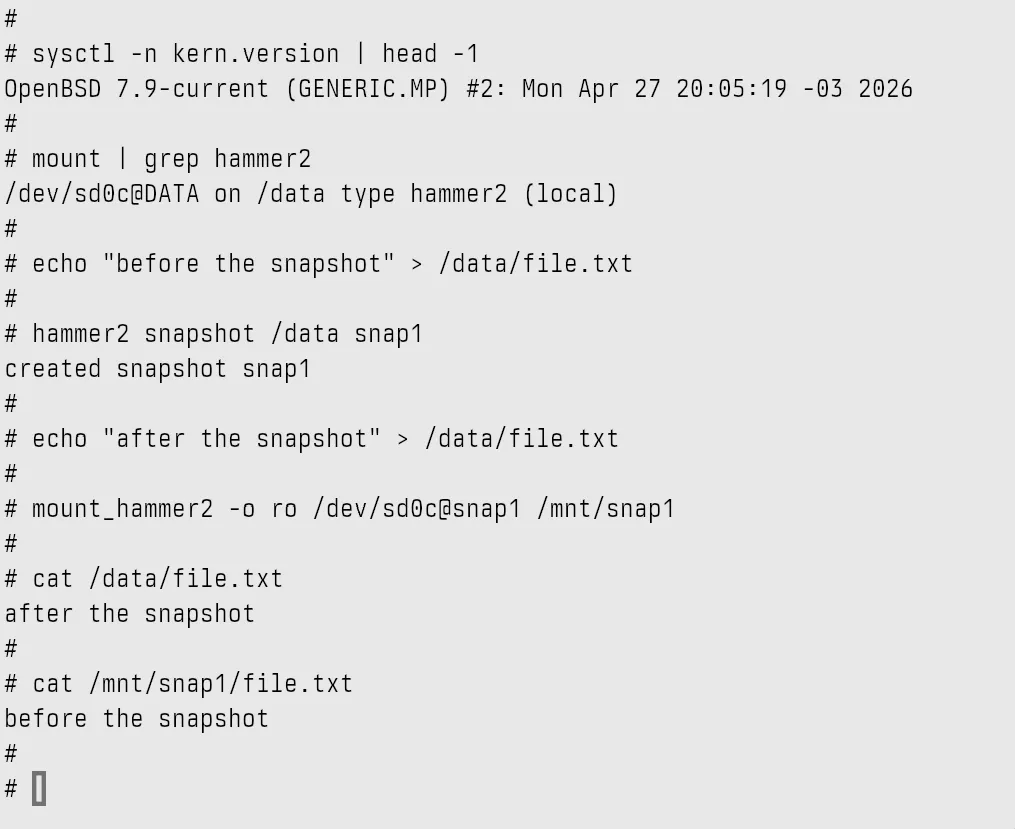

#OpenBSD on #Firecracker. 1.4 MB kernel, ~30 ms cold boot, no BIOS, no PCI, virtio-mmio only. Experimental port. Wrote up how to build the kernel, prep an FFS2 disk with vnd(4), and boot the thing from Linux. ijanc.org/posts/openbsd-… Commit: git.sr.ht/~ijanc/openbsd…

Follow-up: was informed that an older couple from Iowa tipped zero on a $10 million jackpot, not even the $4 left on the machine afterward. They cashed it out and took it with them. Do better, humans.