MMitchell

22.1K posts

@mmitchell_ai

Interdisciplinary researcher focused on shaping AI towards long-term positive goals. ML & Ethics. Similar content in the Skies (this bird has flown).

Joined June 2016

1.4K Following · 81.8K Followers

@reflection_ai i want y'all to succeed but it's a little self-serving to say "America's open source AI lab" having released nothing

MMitchell retweeted

OlmoEarth v1.1 just dropped (thx @allen_ai) 🌍

This family of Earth observation foundation models for satellite imagery tasks (e.g. mangrove change tracking, forest loss driver classification) just got 3X CHEAPER/FASTER to run.

The trick is redesigning what a token represents. Sentinel-2 inputs used to get one token per resolution (10m/20m/60m). v1.1 collapses them → 3x fewer tokens, quadratically cheaper compute.
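A rough sketch of the arithmetic behind that claim, assuming a hypothetical patch count and counting only the pairwise attention-score term (the names `attention_cost` and `patches` are illustrative and not from OlmoEarth). Layers that scale linearly with token count would see about a 3x saving, so the end-to-end speedup depends on the mix:

```python
def attention_cost(num_tokens: int) -> int:
    """Relative cost of the pairwise attention-score computation (n^2)."""
    return num_tokens * num_tokens


patches = 100  # hypothetical number of spatial patches in one input

# Before: one token per resolution group (10m / 20m / 60m) for each patch.
tokens_before = patches * 3
# After: the three resolution groups are collapsed into a single token.
tokens_after = patches

token_ratio = tokens_before / tokens_after
attention_ratio = attention_cost(tokens_before) / attention_cost(tokens_after)

print(f"tokens: {token_ratio:.0f}x fewer")
print(f"attention-score cost: {attention_ratio:.0f}x lower")
```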

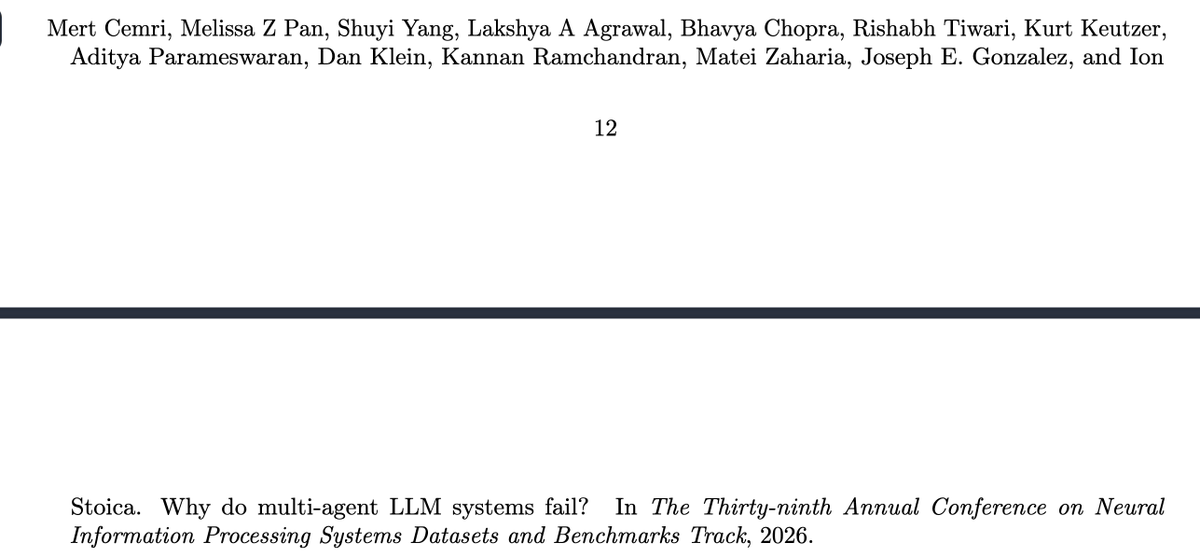

Not defending the policy, but citations can be completely hallucinated too. Just ask the model to write a paragraph along with all the citations and the corresponding BibTeX entries. Of course, I don't recommend this, but I am pretty sure a lot of people do it. Again, though, I don't approve of arXiv's draconian policy on this.

A clearly hallucinated citation! NeurIPS 2026 decisions aren't out yet. But wait: the hallucination is also present in the BibTeX entries from OpenReview (openreview.net/forum?id=fAjbY…) and Google Scholar (scholar.googleusercontent.com/scholar.bib?q=…)

MMitchell retweeted

@tatumturnup He needs a doctor. Somebody call 1110001111.

MMitchell retweeted

Introducing Carbon 🧬 a family of open generative DNA foundation models. Carbon-3B matches Evo2-7B while running 250x faster at inference. It can generate new DNA sequences and score the functional impact of mutations, zero-shot.

We borrowed a lot from how modern LLMs are trained, but DNA isn't language. Genomes are noisy, redundant, and shaped by evolution rather than communication. So we adjusted the recipe:

Tokenizer. Most genomic models tokenize at the nucleotide/character level, which blows up sequence length. BPE is the obvious LLM-style fix, but it doesn't behave well on DNA. We use deterministic 6-mer tokens (one token = 6 nucleotides): 6× shorter sequences and cheaper attention.

Training loss. With 6-mer tokens, cross-entropy scores a prediction that gets 5/6 nucleotides right the same as one that's completely wrong. This gets brittle late in training and produces loss spikes. We switch mid-training to a more flexible factorized loss (FNS).

Data. Genomes are mostly sparse, repetitive background. We curate down to a staged functional DNA + mRNA mixture, with every ratio chosen by ablation, like mixing a web corpus, but for biology.

We're releasing the models, training data, training code, evaluation suite, and a demo to play with.

More details in the technical report: github.com/huggingface/ca…

Demo to play with the model, with a biology primer for our ML friends ;) huggingface.co/spaces/Hugging…
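A minimal sketch of the tokenizer and loss points above, not Carbon's actual code. It assumes non-overlapping 6-mers over an uppercase A/C/G/T alphabet with no ambiguity codes, and drops any trailing remainder; the vocabulary layout and helper names are hypothetical. The factorized loss (FNS) is not specified in the post, so the second half only illustrates the problem it is meant to address:

```python
from itertools import product

K = 6
ALPHABET = "ACGT"  # assumption: no N or other ambiguity codes

# Deterministic vocabulary: every possible 6-mer gets a fixed integer ID.
VOCAB = {"".join(p): i for i, p in enumerate(product(ALPHABET, repeat=K))}


def tokenize(seq: str, k: int = K) -> list[int]:
    """Split seq into non-overlapping k-mers and map each to its ID.

    Trailing nucleotides that do not fill a whole k-mer are dropped
    (an assumption made here for simplicity).
    """
    usable = len(seq) - len(seq) % k
    return [VOCAB[seq[i:i + k]] for i in range(0, usable, k)]


def nucleotide_matches(a: str, b: str) -> int:
    """Count positions where two equal-length k-mers agree."""
    return sum(x == y for x, y in zip(a, b))


target = "ACGTAC"
near_miss = "ACGTAA"  # 5 of 6 nucleotides correct
far_miss = "TGCATG"   # 0 of 6 nucleotides correct

# At the token level both predictions are simply "the wrong class", so a
# one-hot cross-entropy target gives neither any credit for partial matches.
for guess in (near_miss, far_miss):
    token_correct = VOCAB[guess] == VOCAB[target]
    print(guess, token_correct, nucleotide_matches(guess, target))
```

With this layout the vocabulary has 4^6 = 4096 entries, and a sequence of length n becomes n // 6 tokens.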

MMitchell retweeted

Against the constant pressure of *genAI, genAI, genAI*, I am really appreciating @allen_ai's work on creating tools for critical needs -- like crop maps and forest loss analysis. They just did a nice release on @huggingface, check it out (linked below)

Also women.

(Which requires AI companies to consistently hire, retain, and promote them)

Chris Olah @ch402

The questions posed by AI are bigger than the AI community. We urgently need the world – religions, civil society, academics, governments – to participate in creating a positive outcome. I'm glad the Catholic Church is engaging, and honored to speak at the presentation.

MMitchell retweeted

So much attention has been paid to the Musk v. Altman trial. But real accountability for the AI industry will not come from a billionaire mudfight.

It will come from the movements around the world resisting the empires of AI.

My op-ed for @guardian.

theguardian.com/technology/com…

@roydanroy Rather than “average human”, I believe the terminology many making these claims prefer is “median human” 🫠

Hot take 🔥: any company that thinks it will reach AGI/ASI/whatever first, and that is concerned about what its own products will do to the average person and their livelihood, should either be public or raise its next round in a way that lets the average person invest. Otherwise, you're just enriching the billionaires at this point.

MMitchell retweeted

This year, to improve transparency and responsible use of datasets in the NeurIPS 2026 Evaluations and Datasets Track, all dataset submissions are now required to include Responsible AI (RAI) metadata as part of the dataset’s Croissant file.

Find out more about this and RAI in our blog post: blog.neurips.cc/2026/05/04/res…

@GlennMatlin @vidalthi (Not unrelated to the issues with the Stochastic Parrots paper, btw -- we had both had extensive experience with what happens when reviewers know who we are vs when they don't)

@GlennMatlin @vidalthi It really depends on who you are. I've published >100 papers, and have pretty consistently found that when people *don't* know who I am, they like the work better. Sometimes this has been a night-and-day difference. It may be, in part, a gender effect.

Happy to announce that our paper was rejected as a spotlight (5/5/4) at #ICML2026.

If the methodology was complex enough to confuse the metareviewer, perhaps it may still be of broader interest to you 🙂.

Happy to discuss the work if you are into optimal counterfactual maps that permit explanations in milliseconds, or into the occasional ups and downs of academic publishing 🚣
