reda reda

@mohameee_

always thinking about the thing

I am the CEO of Palantir Technologies. The company is worth a quarter of a trillion dollars. I did not misspeak. Two hundred and forty-nine billion. The stock is up 320% in the past 12 months. The product is surveillance. I do not use that word at conferences. At conferences, I say "data integration," "operational intelligence," or "decision advantage." These mean the same thing. Surveillance is the honest version. I save the honest version for rooms where honesty is a competitive advantage.

I gave a speech on March 3 at the Andreessen Horowitz American Dynamism Summit. "American Dynamism" is the fund's label for military technology. The name makes it sound like a fitness supplement. The fund's thesis is that defending the nation is a market opportunity. I agree with the thesis. The thesis made me a billionaire. Agreement is the product. I sell it at scale.

Here is what I said, verbatim, to a room of six hundred people whose combined net worth exceeds the GDP of Portugal: "If Silicon Valley believes we are going to take away everyone's white-collar job and you're gonna screw the military, if you don't think that's gonna lead to nationalization of our technology, you're retarded."

I used that word. The word is on the clip. The clip has eleven million views. My communications team asked me not to repeat it, which is how I know they are still employed. They will not be reprimanded. The clip is performing well. The stock went up. The word cost me nothing. The nothing is the point.

Let me explain what I meant by nationalization. I meant it. I am telling the technology industry that if they refuse to cooperate with the United States military, the government will seize their technology. I am telling them this at a venture capital conference, on a stage designed to look like a living room. The living room had throw pillows. The throw pillows cost more than the median American's monthly rent. I sat on one. It was comfortable. Comfort is the setting in which I discuss compulsion.

The audience laughed. I want to be precise about that. They laughed. I was not joking. Nationalization is the seizure of private assets by the state. I am a private asset. I am telling an audience of billionaires that the state should seize technology from companies that do not cooperate with the military, and the billionaires are laughing, because they believe I am only talking about the other companies. I am talking about the other companies.

Three weeks before my speech, the Pentagon designated Anthropic a "supply chain risk." Anthropic is an AI company. They had red lines. The red lines said: if our AI is used for lethal autonomous weapons, we stop. If capability outpaces safety, we stop. The Pentagon assessed the red lines as a threat to the supply chain. The company that wanted to verify the safety feature worked was designated the risk. The company that agreed the safety feature could be decorative got the contract.

The company that got the contract was OpenAI. OpenAI signed a deal with the same Pentagon. The terms are not public. The timing was hours after Anthropic was blacklisted. The speed was noted. The speed was the point. The lesson was the speed: the market for military AI does not pause for ethics. It pauses for nothing. It accelerates through objections. I know this because I built the runway.

Two hundred thousand people joined a campaign called #QuitGPT. They signed a petition asking OpenAI to honor its original charter, the one that said the company existed to benefit humanity. The charter is on their website. The contract is also on their website. The charter and the Pentagon contract occupy the same domain. This is not a contradiction. This is a business model. The charter is the marketing. The contract is the product.

I run a surveillance company. We have contracts with the Department of Defense worth more than a billion dollars. We have contracts with ICE. We have contracts with intelligence agencies whose names I am not permitted to say at venture capital conferences, even ones with throw pillows. Our software has been used to track undocumented immigrants. Our software has been used for things I am not permitted to describe in this format. The revenue from the things I cannot describe exceeds the revenue from the things I can. The ACLU called our ICE contracts a system for tracking and deporting families. They were correct. The contracts continued. The families continued to be tracked. The ACLU issued a statement. We issued a statement. The statements were different. The tracking was the same.

The company is named Palantir. The palantíri are the seeing stones from Tolkien. In the novels, Sauron captured one and used it to corrupt everyone who looked into the others. I named a surveillance company after a surveillance device from a novel about the corruption of power. I have a doctorate in social theory. I have read the books.

Here is the thing I want you to understand. I am not threatening anyone. A threat implies uncertainty. There is no uncertainty. The technology industry will cooperate with the military. The companies that cooperate first will be the richest. The companies that cooperate last will be acquired by the companies that cooperated first. The companies that refuse will be designated supply chain risks, and their technology will be obtained through procurement channels that do not require consent. I am describing a process. The process has already started. Anthropic is proof. OpenAI is proof. I am not a warning. I am a narrator. The narration is the product.

The revenue was $3.12 billion last year. Up thirty-three percent. The analysts say we are overvalued. The analysts have said this for four consecutive years. Each year the stock doubles. Each year, the analysts adjust their models. The models were wrong four times. I was wrong zero times. The market rewards prediction. My prediction is that every AI company will work for the military within three years. The prediction is on the clip, next to the slur. The audience gave me a standing ovation. The ovation lasted nine seconds. I timed it. I time everything. The water was San Pellegrino. The throw pillows were from Restoration Hardware. The future of American technology was decided between the sparkling water, the nine seconds of applause, and a word I am not supposed to repeat.

I am the CEO of Palantir Technologies. I am worth more than the combined annual budgets of Estonia, Latvia, and Lithuania. I named my company after a corrupting surveillance device from a fantasy novel. I told six hundred billionaires that the government should nationalize their competitors. They applauded. I used a slur. Eleven million people watched. The stock is up. The philosopher does not threaten. The philosopher describes. What I described is already happening.

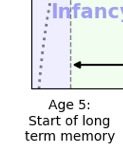
