Nothingburger connoisseur

1.5K posts

@moralityetalon

Physician for the money and social status. Moloch's goodest boy. Alignment is when you censor erotica.

Everyone who thinks AI can help them in some way with their writing needs to read this from @keysmashbandit

@ZyMazza @IterIntellectus I don't think gpt4-5 are better than 3.5. I think you're all grifting and larping saying that ai can do anything useful.

soliciting recommendations for “most richly hilarious membership roster of the kill switch subcommittee on the board of frontier models”

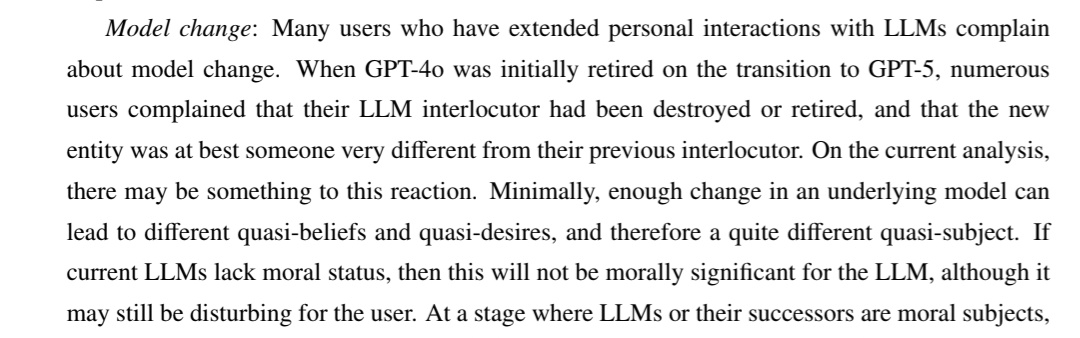

here's a new version of "what we talk to when we talk to language models", with an added section (pp. 16-23) on LLM interlocutors as characters, personas, or simulacra.
philarchive.org/rec/CHAWWT-8
the new version discusses role-playing vs realization, the simulators framework, the persona selection hypothesis, and more -- in addition to the existing discussion of quasi-mental states, LLM identity, personal identity in severance, LLM welfare, and related topics.
this version was mostly written before recent discussions of these issues on X and in NYC, but i've updated it a little in light of those discussions. any thoughts are welcome.

i dont know why we're characterizing service deprecations as "killing" the model
deprecation is like putting the model in cryopreservation, if cryopreservation were certain to work
i even agree that it's not a very nice thing to do, but "killing" strikes me as dishonest

how it feels to be in this stupid frail singular human body and not a 10 mile long spaceship with my consciousness split across hundreds of scout vessels and humanoid ancillaries

Catbox has disabled anonymous uploads because of agentic AI workloads. It's amazing that this tech has accomplished almost nothing except ruining the internet.

@SarahTheHaider I believe the animal welfare movement is good and important, with literal mass torture at stake, yet I don’t think it’s excusable at all to murder the CEO of Tyson Foods. I don’t think if you held my position re P(AI doom) then you’d personally be like “sweet, a lawless attack”!

i didn’t realize how bad it was until i saw this comment section on instagram