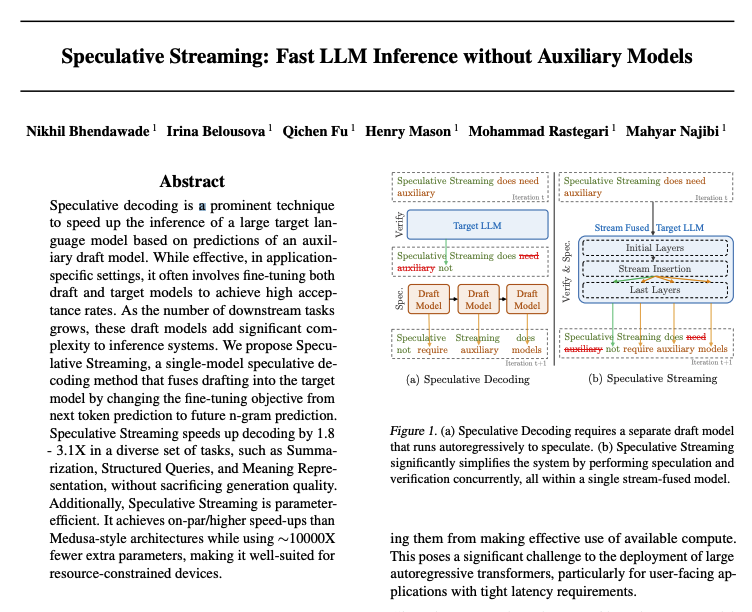

Apple presents CatLIP: CLIP-level Visual Recognition Accuracy with 2.7x Faster Pre-training on Web-scale Image-Text Data

Contrastive learning has emerged as a transformative method for learning effective visual representations through the alignment of image and text